In a significant advancement for AI alignment research, computer scientists have introduced Personalized Group Relative Policy Optimization (P-GRPO), a novel framework designed to help large language models adapt to diverse individual preferences rather than converging toward a single, homogenized objective. The research, published on arXiv on February 17, 2026, addresses a fundamental limitation in current alignment methodologies that has persisted despite the growing sophistication of AI systems.

The Problem with Current Alignment Approaches

Modern large language models like GPT-4, Claude, and Llama demonstrate remarkable general capabilities but often fail to align with the diverse preferences of individual users. This limitation stems from how these models are typically fine-tuned after initial training. The dominant approach, Reinforcement Learning from Human Feedback (RLHF), optimizes models against a single, aggregated reward signal derived from human preferences.

"Standard post-training methods, like RLHF, optimize for a single, global objective," the researchers note in their paper. This creates a fundamental tension: as models become more capable, they become less adaptable to individual differences in values, communication styles, and contextual needs.

Even more advanced approaches like Group Relative Policy Optimization (GRPO), while representing progress in on-policy reinforcement learning, inherit this limitation in personalized settings. GRPO's group-based normalization implicitly assumes that all training samples are exchangeable. That assumption conflates distinct user reward distributions, systematically biasing learning toward dominant preferences while suppressing minority signals.

How P-GRPO Works: Decoupling Advantage Estimation

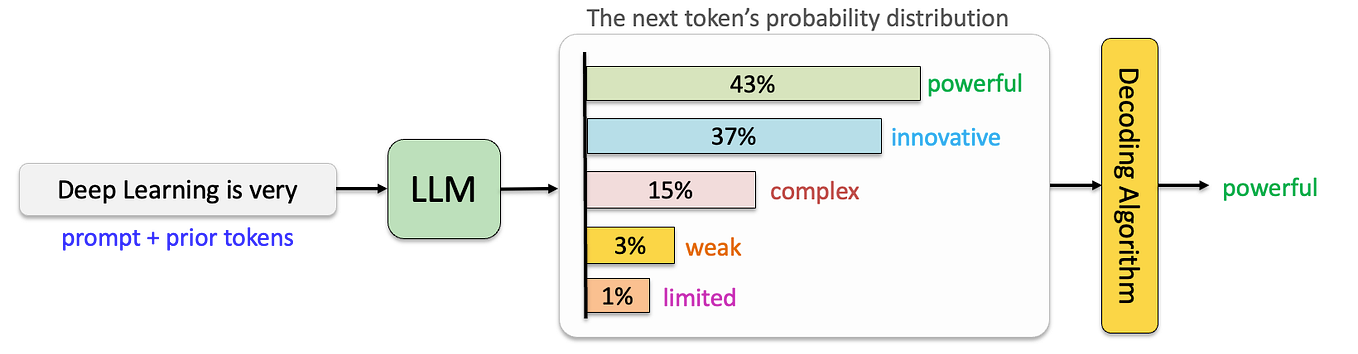

The core innovation of P-GRPO lies in its decoupling of advantage estimation from immediate batch statistics. In standard GRPO, advantages (which measure how much better a response scores than the average of its group) are normalized against the rewards of the other responses generated in the same batch. This approach works well when all users share similar preferences but breaks down when preferences are heterogeneous.
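To make the baseline concrete, here is a minimal sketch of group-relative advantage estimation as it is commonly described for GRPO: each reward is z-scored against the mean and standard deviation of its own sampled group. The function name, the `eps` term, and the reward values are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def grpo_advantages(rewards, eps=1e-8):
    """Z-score each reward against the group it was sampled with."""
    r = np.asarray(rewards, dtype=float)
    # eps (an assumption) guards against division by zero when all rewards match.
    return (r - r.mean()) / (r.std() + eps)

# Four responses sampled for one prompt, with made-up reward scores.
adv = grpo_advantages([1.0, 2.0, 3.0, 4.0])
```

Because the statistics come from whatever responses happen to share the group, a reward is judged only relative to its current neighbors, regardless of whose preferences produced the score.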

P-GRPO addresses this by normalizing advantages against preference-group-specific reward histories rather than the concurrent generation group. This preserves the contrastive signal necessary for learning distinct preferences while preventing the model from being biased toward whichever preference happens to be overrepresented in a particular training batch.

Imagine training an AI assistant that needs to adapt to both formal business users and casual creative writers. With standard methods, if most training samples come from business users, the model would optimize toward formal communication even when interacting with creative writers. P-GRPO maintains separate reward histories for each preference group, allowing the model to learn appropriate responses for both contexts without one dominating the other.
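The scenario above can be sketched in a few lines. The article does not give the paper's exact update rule, so this is only an illustration under stated assumptions: each preference group keeps a plain list of past rewards, advantages are z-scored against that list, and the current batch is used as a fallback until a group has at least two past rewards. The class `GroupRewardHistory`, the group labels, and all reward values are hypothetical.

```python
from collections import defaultdict
import numpy as np

class GroupRewardHistory:
    """Hypothetical helper: one reward history per preference group."""

    def __init__(self, eps=1e-8):
        self.history = defaultdict(list)
        self.eps = eps

    def advantages(self, group_id, rewards):
        r = np.asarray(rewards, dtype=float)
        past = self.history[group_id]
        # Assumption: fall back to the current batch until history exists.
        ref = np.asarray(past, dtype=float) if len(past) >= 2 else r
        adv = (r - ref.mean()) / (ref.std() + self.eps)
        # Record the new rewards only after they have been scored.
        self.history[group_id].extend(r.tolist())
        return adv

hist = GroupRewardHistory()
hist.advantages("formal", [0.8, 0.9, 1.0])  # warm-up rewards, formal users
hist.advantages("casual", [0.1, 0.2, 0.3])  # warm-up rewards, casual users

# A new response per group, each scored on its own group's reward scale.
a_formal = hist.advantages("formal", [0.9])
a_casual = hist.advantages("casual", [0.3])

# Contrast: pooling both rewards into one group, as in the GRPO-style baseline.
pooled = np.array([0.9, 0.3])
pooled_adv = (pooled - pooled.mean()) / (pooled.std() + 1e-8)
```

In this toy setup the casual response's reward of 0.3 is above its own group's historical average, so it receives a positive advantage, while pooled normalization would mark the same response as the worse of the two.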

Performance and Implications

The researchers evaluated P-GRPO across diverse tasks and found that it consistently achieves faster convergence and higher rewards than standard GRPO. More importantly, it demonstrates an enhanced ability to recover and align with heterogeneous preference signals that would otherwise be suppressed.

These findings have significant implications for the future of AI development:

- Personalized AI Assistants: P-GRPO could enable AI systems that genuinely adapt to individual users' communication styles, values, and needs rather than providing generic responses.

- Cultural and Contextual Adaptation: The framework provides a pathway for developing AI systems that respect cultural differences and contextual variations in what constitutes appropriate or helpful responses.

- Mitigating Majority Bias: By preserving minority preference signals, P-GRPO offers a technical approach to addressing the systematic bias toward dominant cultural perspectives in current AI systems.

- Specialized Applications: The method could facilitate development of AI systems for specialized domains (medical, legal, educational) that maintain appropriate domain-specific communication norms while avoiding overgeneralization.

Technical Implementation and Future Directions

The implementation of P-GRPO requires maintaining separate reward histories for identified preference groups, which adds storage and bookkeeping overhead during training. However, the researchers report that the benefits in alignment quality outweigh these costs, particularly for applications where personalization is valuable.
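One way to keep that overhead small, offered here as an assumption rather than something the article attributes to the paper, is to store a running mean and variance per group instead of every past reward. The sketch below uses Welford's online algorithm; the `RunningStats` class, group labels, and reward values are hypothetical.

```python
import math
from collections import defaultdict

class RunningStats:
    """Welford's online mean/variance: constant memory per preference group."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self):
        # Population standard deviation; 0.0 before any reward is seen.
        return math.sqrt(self.m2 / self.n) if self.n > 0 else 0.0

# One statistics object per (hypothetical) preference group.
stats = defaultdict(RunningStats)
for group, reward in [("formal", 0.8), ("formal", 1.0), ("casual", 0.2)]:
    stats[group].update(reward)
```

With this design each group costs three numbers regardless of how long training runs, at the price of weighting old and recent rewards equally.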

Future research directions might include:

- Dynamic preference identification: Automatically detecting and adapting to user preferences without explicit labeling

- Hierarchical preference modeling: Handling nested or overlapping preference structures

- Cross-preference generalization: Enabling models to transfer learning across related preference groups

- Privacy-preserving implementations: Developing approaches that respect user privacy while enabling personalization

The Broader Context of AI Alignment Research

This research emerges within a growing recognition that one-size-fits-all alignment is insufficient for increasingly capable AI systems. Recent arXiv publications have explored related challenges, including modeling evolving user interests in recommendation systems and understanding how evaluation sequences affect judgments. These efforts all point toward the need for more nuanced approaches to aligning AI with human values and preferences.

The development of P-GRPO represents a significant step toward AI systems that can respect and adapt to human diversity rather than imposing homogenized responses. As the researchers conclude, "Accounting for reward heterogeneity at the optimization level is essential for building models that faithfully align with diverse human preferences without sacrificing general capabilities."

Source: "Personalized Group Relative Policy Optimization for Heterogenous Preference Alignment" (arXiv:2603.10009v1, February 17, 2026)