A study published on arXiv offers a systematic analysis of when and how multimodal artificial intelligence actually improves clinical decision-making in healthcare. The research, titled "When Does Multimodal Learning Help in Healthcare? A Benchmark on EHR and Chest X-Ray Fusion," evaluates the fusion of Electronic Health Records (EHR) and chest X-rays (CXR) using standardized cohorts from the widely used MIMIC-IV and MIMIC-CXR datasets.

The Multimodal Promise and Reality Gap

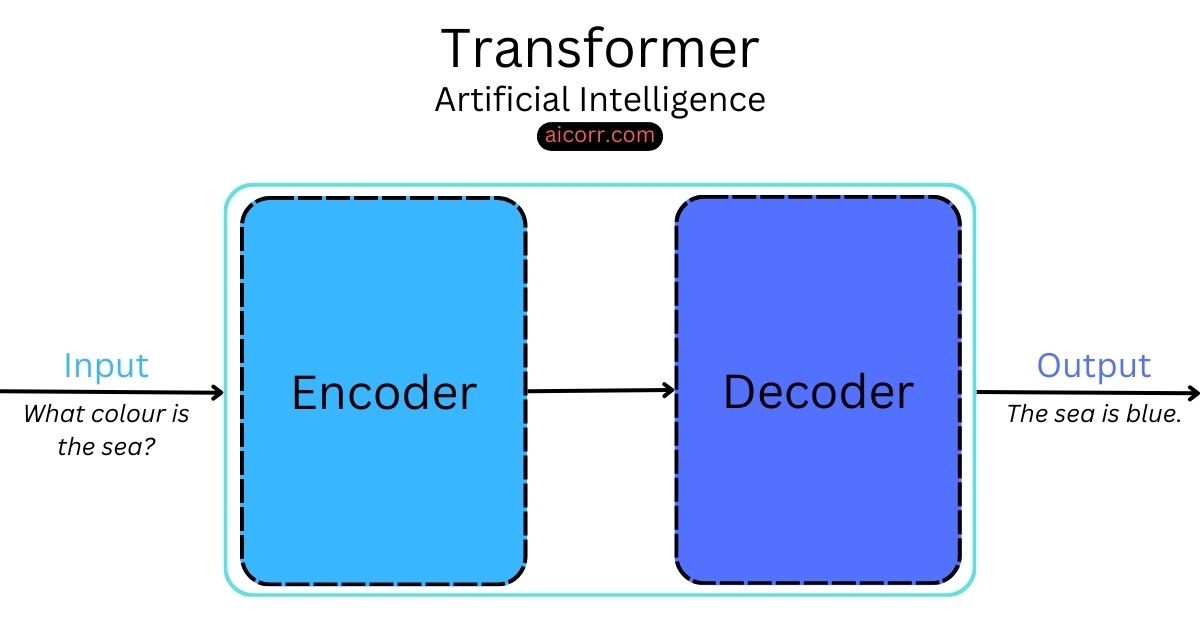

Multimodal AI, meaning systems that combine different types of data, has been heralded as the next frontier in medical artificial intelligence. The theoretical promise is compelling: by combining structured EHR data (patient history, lab results, medications) with imaging data like chest X-rays, AI systems should achieve superior diagnostic and predictive capabilities. However, as the researchers note, "it remains unclear when multimodal learning truly helps in practice, particularly under modality missingness and fairness constraints."

This study addresses four fundamental questions that have remained largely unanswered despite years of multimodal research: 1) When does multimodal fusion actually improve clinical prediction? 2) How do different fusion strategies compare? 3) How robust are existing methods to missing modalities? 4) Do multimodal models achieve algorithmic fairness?

Key Findings: Surprising Limitations Revealed

The benchmark reveals several critical insights that challenge conventional assumptions about multimodal AI in healthcare:

1. Conditional Benefits: Multimodal fusion improves performance primarily when all modalities are complete and available. The gains concentrate in diseases that require complementary information from both EHR and imaging data. For conditions where one modality alone provides sufficient information, adding further data types offers minimal improvement.

2. Architectural Limitations: While advanced cross-modal learning mechanisms can capture clinically meaningful dependencies beyond simple data concatenation, the study found that "the rich temporal structure of EHR introduces strong modality imbalance that architectural complexity alone cannot overcome." This means that simply designing more complex neural network architectures won't solve fundamental data imbalance issues.

3. The Missing Data Problem: Under realistic clinical scenarios where data is frequently incomplete, multimodal benefits "rapidly degrade unless models are explicitly designed to handle incomplete inputs." This finding has profound implications for real-world deployment, as missing data is the norm rather than the exception in clinical practice.

4. Fairness Concerns: Perhaps most surprisingly, the research demonstrates that "multimodal fusion does not inherently improve fairness." Subgroup disparities mainly arise from unequal sensitivity across demographic groups, suggesting that simply adding more data types doesn't automatically address algorithmic bias concerns.
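
To make the fairness finding concrete, the sketch below computes sensitivity (the true-positive rate) separately for each demographic group and reports the gap between the best and worst group. It is a minimal illustration only, not the paper's or CareBench's evaluation code: the function names `sensitivity_by_group` and `sensitivity_gap` are hypothetical, and the labels and group assignments are made-up toy data.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """Return {group: true-positive rate} for binary labels and predictions."""
    true_pos = defaultdict(int)  # correctly flagged positives per group
    actual_pos = defaultdict(int)  # all positives per group
    for label, pred, group in zip(y_true, y_pred, groups):
        if label == 1:
            actual_pos[group] += 1
            if pred == 1:
                true_pos[group] += 1
    # Groups without any positive case are skipped (sensitivity is undefined).
    return {g: true_pos[g] / actual_pos[g] for g in actual_pos}


def sensitivity_gap(rates):
    """Largest difference in sensitivity between any two groups."""
    return max(rates.values()) - min(rates.values())


# Toy data for illustration only (hypothetical, not taken from the study).
y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0, 1, 1]
groups = ["A"] * 5 + ["B"] * 5

rates = sensitivity_by_group(y_true, y_pred, groups)
gap = sensitivity_gap(rates)
print(rates, gap)
```

A model can have similar overall accuracy across groups and still show a large gap here, which is why per-group sensitivity is worth reporting alongside aggregate metrics.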

Methodology and Benchmarking Framework

The researchers conducted their analysis using carefully constructed standardized cohorts to ensure fair comparisons across different fusion strategies. They evaluated various approaches including early fusion (concatenating features), late fusion (combining predictions), and cross-modal attention mechanisms that allow different data types to influence each other's processing.
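
The difference between the first two strategies can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the benchmark's implementation: the linear scorers stand in for trained encoders, all weights are placeholders, and the names `early_fusion` and `late_fusion` are hypothetical. Cross-modal attention is omitted because it needs a trained network to be meaningful.

```python
import math


def sigmoid(z):
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def linear_score(features, weights, bias=0.0):
    """Weighted sum of features; a stand-in for a trained model."""
    return sum(f * w for f, w in zip(features, weights)) + bias


def early_fusion(ehr_feats, cxr_feats, weights, bias=0.0):
    """Concatenate both feature vectors, then apply one joint model."""
    joint = list(ehr_feats) + list(cxr_feats)
    return sigmoid(linear_score(joint, weights, bias))


def late_fusion(ehr_feats, cxr_feats, ehr_weights, cxr_weights, alpha=0.5):
    """Score each modality with its own model, then mix the probabilities."""
    p_ehr = sigmoid(linear_score(ehr_feats, ehr_weights))
    p_cxr = sigmoid(linear_score(cxr_feats, cxr_weights))
    return alpha * p_ehr + (1.0 - alpha) * p_cxr


# Placeholder inputs: two EHR features and one image-derived feature.
p_early = early_fusion([0.2, -0.1], [0.7], weights=[1.0, 1.0, 1.0])
p_late = late_fusion([0.2, -0.1], [0.7], ehr_weights=[1.0, 1.0], cxr_weights=[1.0])
print(p_early, p_late)
```

Early fusion lets one model see interactions between modalities but requires every input to be present; late fusion keeps the modality models independent, which makes it easier to drop one when its data is missing.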

To support reproducible research and future development, the team has released CareBench, an open-source benchmarking toolkit available at https://github.com/jakeykj/CareBench. This flexible framework enables plug-and-play integration of new models and datasets, addressing a critical need in the field for standardized evaluation protocols.

Clinical Implications and Future Directions

The findings provide actionable guidance for both researchers and healthcare organizations implementing AI systems:

Disease-Specific Implementation: Healthcare systems should prioritize multimodal AI for conditions where complementary information from different data types is genuinely needed, rather than applying it universally.

Robustness Requirements: Clinical AI systems must be explicitly designed to handle missing data from the outset, as this dramatically affects real-world performance.

Fairness by Design: The study underscores that fairness must be intentionally engineered into multimodal systems, not assumed as an automatic benefit of data fusion.

Resource Allocation: The research suggests that for some applications, improving single-modality models might provide better return on investment than pursuing complex multimodal architectures.
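
As one illustration of the robustness requirement, a fusion step can be written so that it combines only the modalities actually present for a patient. The sketch below is a deliberately simple assumption (averaging whichever per-modality probabilities exist), not a method evaluated in the paper; the function name `fuse_available` and the example probabilities are hypothetical.

```python
def fuse_available(probabilities):
    """Average per-modality probabilities, skipping missing ones (None)."""
    present = [p for p in probabilities.values() if p is not None]
    if not present:
        raise ValueError("at least one modality must be available")
    return sum(present) / len(present)


# Both modalities available: the prediction is the mean of the two.
both = fuse_available({"ehr": 0.75, "cxr": 0.25})

# Chest X-ray missing: fall back to the EHR-only prediction.
ehr_only = fuse_available({"ehr": 0.75, "cxr": None})

print(both, ehr_only)
```

The point of the design is that a missing X-ray changes which inputs are averaged rather than feeding a placeholder image into a model that was never trained to expect one.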

The Path Toward Clinically Deployable Systems

This benchmark represents a significant step toward developing "clinically deployable multimodal systems that are both effective and reliable," as the authors state. By moving beyond theoretical advantages to practical, evidence-based guidelines, the healthcare AI community can focus resources on approaches that genuinely improve patient care.

The work also highlights the importance of systematic benchmarking in AI research—particularly in healthcare where real-world consequences are significant. As AI systems move from research environments to clinical settings, understanding their limitations under realistic conditions becomes increasingly critical.

Source: arXiv:2602.23614v1, "When Does Multimodal Learning Help in Healthcare? A Benchmark on EHR and Chest X-Ray Fusion" (Submitted February 27, 2026)