In a groundbreaking paper published on arXiv, researchers are challenging one of the fundamental assumptions of modern AI: that natural language is the only path to intelligence. The study, "Training Language Models via Neural Cellular Automata," proposes a radical alternative—using synthetic, non-linguistic data generated by neural cellular automata (NCA) to pre-train large language models (LLMs) before they ever see human language.

The Problem with Natural Language Pre-training

Current LLMs like GPT-4 and Claude are trained on massive amounts of text data scraped from the internet. This approach has proven remarkably successful but comes with significant limitations. As the researchers note, high-quality text is finite, contains human biases, and entangles knowledge with reasoning in ways that make it difficult to separate fundamental capabilities from surface-level patterns.

"This raises a fundamental question: is natural language the only path to intelligence?" the authors ask in their abstract. Their work suggests the answer might be "no."

Neural Cellular Automata as Data Generators

Neural cellular automata are cellular automata whose local update rule is a small neural network. Every cell in a grid updates its state from the states of its neighbors, and repeating this step produces complex emergent patterns over time. The researchers report that NCA-generated data exhibits rich spatiotemporal structure and statistical properties surprisingly similar to those of natural language. It is also fully controllable and cheap to generate at scale.
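
The following is a minimal sketch of how an NCA could produce token sequences. It makes several assumptions that do not come from the paper:

- a small 8x8 grid that wraps at the edges and has 4 cell states;
- a randomly initialized two-layer network as the shared local rule;
- a naive row-by-row flattening of each frame into tokens.

The paper's grid sizes, rule architecture, and tokenization may differ.

```python
import math
import random

# Hypothetical settings chosen for illustration, not taken from the paper.
GRID = 8     # the grid is GRID x GRID and wraps around at the edges (a torus)
STATES = 4   # each cell holds one of STATES discrete values
HIDDEN = 16  # hidden units in the shared local update network


def random_rule(seed):
    """Sample random weights for a two-layer net: 3x3 neighborhood -> next-state scores."""
    rng = random.Random(seed)
    n_in = 9 * STATES  # one-hot encoding of the 9 neighborhood cells
    w1 = [[rng.gauss(0.0, 1.0) for _ in range(n_in)] for _ in range(HIDDEN)]
    w2 = [[rng.gauss(0.0, 1.0) for _ in range(HIDDEN)] for _ in range(STATES)]
    return w1, w2


def step(grid, rule):
    """Update every cell at once with the same local rule (a synchronous CA step)."""
    w1, w2 = rule
    new = [[0] * GRID for _ in range(GRID)]
    for r in range(GRID):
        for c in range(GRID):
            x = [0.0] * (9 * STATES)
            k = 0
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    s = grid[(r + dr) % GRID][(c + dc) % GRID]
                    x[k * STATES + s] = 1.0
                    k += 1
            h = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in w1]
            scores = [sum(w * hv for w, hv in zip(row, h)) for row in w2]
            # Deterministic: pick the highest-scoring next state.
            new[r][c] = max(range(STATES), key=scores.__getitem__)
    return new


def nca_tokens(seed, steps):
    """Roll out one random NCA and flatten each frame, row by row, into tokens."""
    rule = random_rule(seed)
    rng = random.Random(seed + 1)
    grid = [[rng.randrange(STATES) for _ in range(GRID)] for _ in range(GRID)]
    tokens = []
    for _ in range(steps):
        tokens.extend(cell for row in grid for cell in row)
        grid = step(grid, rule)
    return tokens
```

Calling `nca_tokens(seed=0, steps=10)` returns 640 tokens (10 frames of 64 cells) from a 4-symbol vocabulary. Each seed samples a different rule. The rule stays fixed within a rollout but is never shown to the model, so predicting upcoming tokens means inferring the rule from context. That is one plausible intuition for why such data might be useful pre-pre-training material.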

The key innovation is what the researchers call "pre-pre-training"—training LLMs first on synthetic NCA data, then on natural language. This two-stage approach allows models to develop fundamental capabilities before being exposed to the complexities and biases of human language.
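
Schematically, pre-pre-training is a data curriculum. The same model parameters are updated throughout, and only the data source changes. The sketch below expresses that ordering as a Python generator over pre-tokenized batches. Several details are illustrative assumptions rather than the paper's method:

- the function name;
- the batch representation;
- the rule of stopping stage 1 once the synthetic token budget is reached.

It also deliberately leaves out whether optimizer state or embeddings are reset between stages.

```python
from typing import Iterable, Iterator, List, Tuple


def pre_pre_training_stream(
    nca_batches: Iterable[List[int]],
    text_batches: Iterable[List[int]],
    nca_token_budget: int,
) -> Iterator[Tuple[str, List[int]]]:
    """Yield ("nca", batch) until the synthetic budget is reached, then ("text", batch).

    The stage-1 total can overshoot the budget by at most one batch.
    """
    seen = 0
    # Stage 1: pre-pre-training on synthetic NCA tokens.
    for batch in nca_batches:
        if seen >= nca_token_budget:
            break
        seen += len(batch)
        yield "nca", batch
    # Stage 2: ordinary pre-training on natural-language tokens.
    for batch in text_batches:
        yield "text", batch
```

In the setting described here, `nca_token_budget` would be 164_000_000. A training loop would then call its own (hypothetical) `train_step(model, batch)` on every batch the generator yields.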

Remarkable Results with Minimal Data

The findings are striking. Pre-pre-training on only 164 million NCA tokens improved downstream language modeling performance by up to 6% and accelerated convergence by up to 1.6×. NCA tokens represent states of the automaton's grid rather than words, so this count is not comparable to a word count. Even more surprisingly, this modest amount of synthetic data outperformed pre-pre-training on 1.6 billion tokens of natural language from Common Crawl. That baseline had roughly ten times as much data and was also given more compute.
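
The ratios behind these numbers can be checked directly. The first expression compares the two pre-pre-training budgets. The second assumes that a 1.6× convergence speedup means reaching the same loss in 1/1.6 of the training steps. That reading is an interpretation, not the paper's stated definition.

```latex
\frac{1.6 \times 10^{9}\ \text{tokens}}{1.64 \times 10^{8}\ \text{tokens}} \approx 9.8,
\qquad
\frac{1}{1.6} = 0.625
```

So "ten times more data" rounds up a ratio of about 9.8. Under that reading, a 1.6× speedup corresponds to roughly 37.5% fewer training steps.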

These gains weren't limited to language modeling. The improvements also transferred to reasoning benchmarks, including GSM8K (grade-school math word problems), HumanEval (code generation), and BigBench-Lite (a compact subset of the BIG-bench reasoning suite).

Understanding What Transfers

The researchers analyzed why this approach works. Attention layers are the mechanism that lets transformers focus on the relevant parts of their input. The researchers found that these layers are the components that transfer best from the synthetic domain to natural language.
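
One way to probe which components transfer is a selective-transfer ablation:

1. Carry over only a subset of the pre-pre-trained weights into a freshly initialized model.
2. Train each variant on natural language and compare the results.

The sketch below does this for attention layers. Plain dictionaries stand in for a framework's state dict, and the `.attn.` parameter naming is a hypothetical convention. This is not necessarily the exact ablation the authors ran. In PyTorch, the same filter could be applied to the dictionaries from `model.state_dict()` before calling `load_state_dict`.

```python
from typing import Dict, List


def transfer_attention_only(
    pretrained: Dict[str, List[float]],
    fresh: Dict[str, List[float]],
) -> Dict[str, List[float]]:
    """Copy attention parameters from a pre-pre-trained checkpoint; keep the rest fresh.

    Assumes (hypothetically) that attention parameter names contain ".attn.".
    """
    merged = {}
    for name, value in fresh.items():
        if ".attn." in name and name in pretrained:
            merged[name] = list(pretrained[name])  # transferred from NCA pre-pre-training
        else:
            merged[name] = list(value)  # MLPs, embeddings, norms: left at fresh init
    return merged
```

Comparing this variant with one that transfers everything, and with one that transfers only the MLP layers, isolates how much of the benefit each component carries.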

Interestingly, different domains benefit from different NCA complexities. Code generation tasks performed better with simpler NCA dynamics, while mathematics and web text favored more complex ones. This suggests that synthetic data generation can be tuned systematically to target specific downstream applications.
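
The article does not say how NCA complexity was measured or controlled. One common, cheap proxy for the complexity of a symbol stream is how well it compresses. The sketch below assumes that proxy (the zlib compression ratio) and keeps only candidate rollouts that fall inside a target band. The band values and candidate names are hypothetical. Following the results above, one would try a lower band for a code-oriented mix and a higher one for math and web text.

```python
import random
import zlib
from typing import Dict, List, Sequence, Tuple


def compression_ratio(tokens: Sequence[int]) -> float:
    """Compressed size divided by raw size; lower means more regular, i.e. simpler, data.

    Assumes every token fits in one byte (0-255), as with a small NCA state vocabulary.
    """
    raw = bytes(tokens)
    return len(zlib.compress(raw, 9)) / len(raw)


def select_by_complexity(
    candidates: Dict[str, List[int]], band: Tuple[float, float]
) -> List[str]:
    """Return the names of candidate rollouts whose compression ratio lies inside the band."""
    low, high = band
    return [name for name, toks in candidates.items() if low <= compression_ratio(toks) <= high]


if __name__ == "__main__":
    rng = random.Random(0)
    candidates = {
        "periodic": [0, 1, 2, 3] * 256,  # highly regular stand-in for a simple rule
        "noisy": [rng.randrange(4) for _ in range(1024)],  # stand-in for a chaotic rule
    }
    for name, toks in candidates.items():
        print(name, round(compression_ratio(toks), 3))
    # Hypothetical bands: a low one for a code-oriented mix, a higher one for math/web.
    print(select_by_complexity(candidates, (0.0, 0.1)))
    print(select_by_complexity(candidates, (0.2, 1.0)))
```

Compression is only a rough stand-in for "complexity". It cannot distinguish structured-but-rich dynamics from noise, so in practice it would likely be combined with other measures.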

Broader Implications for AI Development

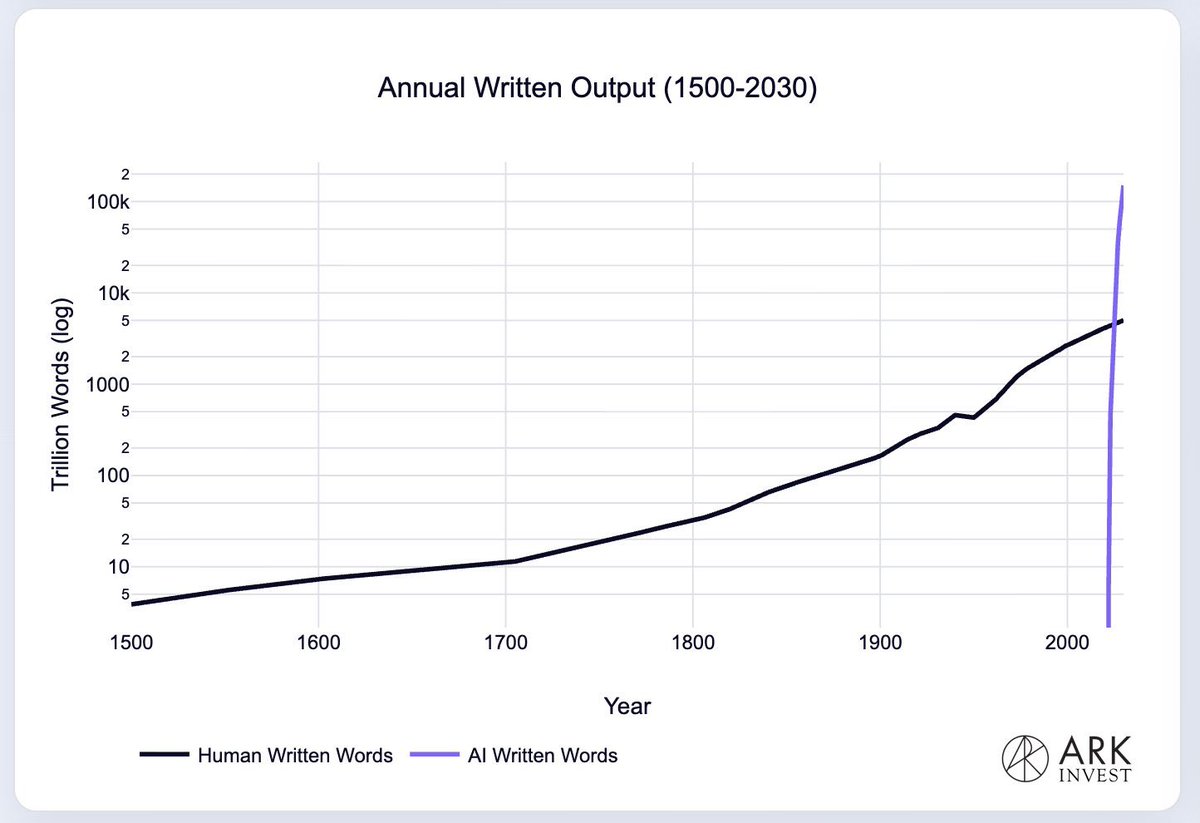

This research arrives at a critical moment for AI development. Just days before this paper's publication, a separate analysis argued that compute scarcity is making AI increasingly expensive. According to that analysis, this scarcity is forcing prioritization of high-value tasks over widespread automation. The NCA approach could help. If its gains hold at scale, it would make training more efficient in both data and computation.

The work also addresses growing concerns about data quality and availability. As high-quality natural language data becomes increasingly scarce and expensive, synthetic alternatives could democratize access to advanced AI training.

A New Paradigm for AI Training

While still early-stage research, this approach opens several exciting possibilities:

Bias Reduction: By separating fundamental reasoning capabilities from human language patterns, we might create AI systems less prone to inheriting human biases.

Specialized Training: Different NCA configurations could be optimized for different domains, such as medical reasoning, scientific discovery, or creative tasks.

Resource Efficiency: Beating a 1.6B-token natural-language pre-pre-training baseline with only 164M NCA tokens suggests potential cost savings in training future models.

Novel Capabilities: Synthetic data might help develop reasoning patterns not commonly found in natural language.

The researchers conclude that their work "opens a path toward more efficient models with fully synthetic pre-training." While natural language will likely remain important for fine-tuning and alignment, this research suggests we might be able to build the foundations of intelligence using entirely different materials.

Source: "Training Language Models via Neural Cellular Automata" published on arXiv, March 9, 2026