A recent social media post claims that Anthropic's Claude AI system was deployed during the Iran-Iraq War, which, if accurate, would mark a significant development in military applications of artificial intelligence. The deployment reportedly occurred despite known tensions between Anthropic and the U.S. Department of Defense (DoD) over the ethical use of AI technologies in warfare.

The Alleged Deployment

According to a post on the social media platform X (formerly Twitter), Claude was recently used in the context of the Iran-Iraq War, though the timing, scope, and nature of the deployment remain unclear. The original source, @kimmonismus, stated: "Despite the tensions between Anthropic and DoW, Claude was recently deployed in the Iran-Iraq War."

The Iran-Iraq War lasted from 1980 to 1988, predating modern AI systems by decades. This discrepancy suggests that the post refers to more recent tensions or conflicts in the region, mischaracterizes the timeline, or refers to analysis of historical data from that conflict using modern AI tools.

Military Applications Highlighted

The source material emphasizes why AI models are becoming increasingly important for military operations:

Intelligence Assessments: AI systems can process vast amounts of data from multiple sources—satellite imagery, communications intercepts, human intelligence reports—to identify patterns and provide assessments far more quickly than human analysts alone.

Target Identification: Computer vision algorithms can potentially identify military targets in imagery and video feeds, though this application raises significant ethical concerns about autonomous weapons systems and the potential for misidentification.

Simulating Battle Scenarios: AI can model complex battle scenarios, predict outcomes based on various parameters, and help military planners develop strategies while potentially minimizing casualties.

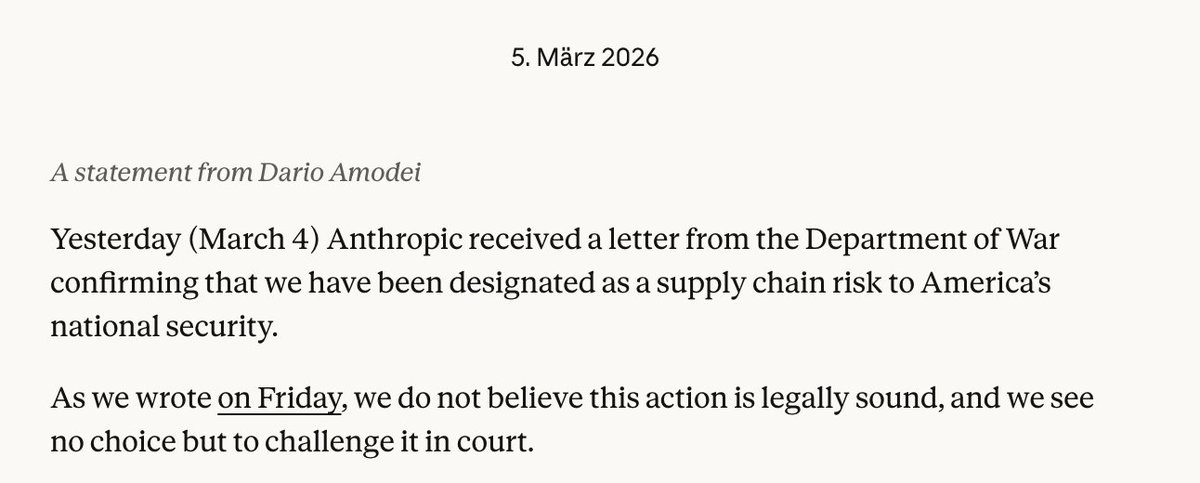

Anthropic's Stance on Military AI

Anthropic has positioned itself as an AI safety-focused company. Co-founders Dario and Daniela Amodei worked at OpenAI before departing over concerns about that company's direction on AI safety. Anthropic's Constitutional AI approach emphasizes creating AI systems that are helpful, harmless, and honest.

The company has reportedly been cautious about military applications of its technology. In February 2024, Anthropic updated its usage policies to prohibit "military and warfare applications," though it included exceptions for "non-violent" applications like cybersecurity and search and rescue operations.

This reported deployment raises questions about how AI companies can control the use of their technologies once they are deployed, particularly when provided to government agencies or through third-party contractors.

The Broader Context of Military AI Adoption

The alleged deployment of Claude in a military context reflects a broader trend of growing military interest in AI capabilities. The U.S. Department of Defense has pursued AI integration through initiatives like the Joint Artificial Intelligence Center (JAIC), which was folded into the Chief Digital and Artificial Intelligence Office in 2022, and Project Maven, which focuses on computer vision for analyzing drone footage.

Other nations are also rapidly developing military AI capabilities. China has declared its intention to become a world leader in AI by 2030, with significant military applications, and Russia has likewise invested in AI for military purposes.

Ethical and Strategic Implications

The potential use of Claude in military operations highlights several critical issues:

Accountability Gap: When AI systems are involved in military decision-making, it becomes increasingly difficult to assign responsibility for outcomes, particularly in cases of civilian casualties or other unintended consequences.

Escalation Risks: The speed of AI-enabled warfare could potentially outpace human decision-making, increasing the risk of rapid escalation in conflicts.

Arms Race Dynamics: The development of military AI capabilities by multiple nations creates a potential arms race dynamic that could undermine global stability.

Corporate Responsibility: This situation raises questions about how AI companies can maintain ethical boundaries when their technologies have dual-use potential with both civilian and military applications.

Verification Challenges

As with many reports originating from social media, independent verification of these claims is challenging. The original source provides limited details, and neither Anthropic nor the Department of Defense has publicly commented on this specific allegation at the time of writing.

The reference to the Iran-Iraq War adds to the confusion, since that conflict ended decades before Claude or similar AI systems existed. The post may misstate the timeline, may refer to analysis of historical data from that conflict, or may have a different regional conflict in mind.

The Future of AI in Military Contexts

Regardless of the specific accuracy of this report, the discussion highlights the inevitable tension between AI companies' ethical guidelines and the practical realities of how their technologies might be used. As AI capabilities advance, governments worldwide will likely continue seeking to apply these technologies to national security challenges.

This creates a complex landscape where AI developers must navigate between commercial opportunities, ethical principles, and national security considerations. The reported deployment of Claude—if accurate—would represent a significant test case for how AI companies respond when their technologies are used in ways that may conflict with their stated principles.

Conclusion

The allegation that Claude AI has been deployed in a military context, despite tensions between Anthropic and the Department of Defense, underscores the growing intersection between artificial intelligence and national security. While details remain unclear and unverified, the discussion highlights important questions about corporate responsibility, ethical boundaries, and the future of warfare in an age of increasingly capable AI systems.

As AI technologies continue to advance, society will need to develop clearer frameworks for governing their military applications while balancing security needs with ethical considerations and international stability concerns.