Anthropic's Claude Code v2.1.139 introduces /goal, a command for autonomous development loops. A separate evaluator model checks the completion condition after each turn, freeing developers from per-step prompting.

Key facts

- Requires Claude Code v2.1.139 or later

- Uses a separate small fast model as evaluator

- One goal active per session

- Goal clears automatically when condition is met

- Run /goal with no argument to check status

Claude Code v2.1.139, released May 14, 2026, adds the /goal command, which lets developers set a completion condition and leave the agent to work autonomously until it is met [According to New in Claude Code]. After each turn, a small fast model evaluates whether the condition holds; if it does not, Claude starts another turn instead of returning control to the user.
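The turn-then-evaluate cycle described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Claude Code's implementation: `GoalSession`, `run_goal`, `worker_turn`, and `evaluator` are hypothetical names, and the `max_turns` cap is added here only so the sketch always terminates (the source does not mention one).

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class GoalSession:
    """Hypothetical stand-in for a session with one active goal."""
    condition: str
    transcript: List[str] = field(default_factory=list)
    turns: int = 0


def run_goal(
    session: GoalSession,
    worker_turn: Callable[[GoalSession], str],
    evaluator: Callable[[str, List[str]], bool],
    max_turns: int = 20,
) -> bool:
    """Run worker turns until the evaluator says the condition holds.

    Returns True if the condition was met, False if max_turns ran out.
    max_turns is an assumption of this sketch, not a documented setting.
    """
    while session.turns < max_turns:
        # The worker does one turn and surfaces its output in the conversation.
        session.transcript.append(worker_turn(session))
        session.turns += 1
        # A separate evaluator judges the condition from the transcript alone.
        if evaluator(session.condition, session.transcript):
            return True
        # Condition not met: loop again instead of handing control back.
    return False


if __name__ == "__main__":
    # Toy stand-ins: the "worker" fixes one of three failing call sites per turn.
    def fake_worker(s: GoalSession) -> str:
        remaining = 3 - (s.turns + 1)
        return f"migrated one call site; {remaining} failing"

    def fake_evaluator(condition: str, transcript: List[str]) -> bool:
        return transcript[-1].endswith("; 0 failing")

    session = GoalSession(condition="all call sites compile")
    met = run_goal(session, fake_worker, fake_evaluator)
    print(met, session.turns)
```

The point of the shape is that `evaluator` is passed in separately from `worker_turn`: the component doing the work never gets to decide on its own that the work is finished.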

The command targets substantial work with verifiable end states: migrating a module to a new API until all call sites compile, implementing a design doc until acceptance criteria hold, or splitting a large file until each module is under a size budget. A single goal can be active per session; running /goal with a new argument replaces the current one.

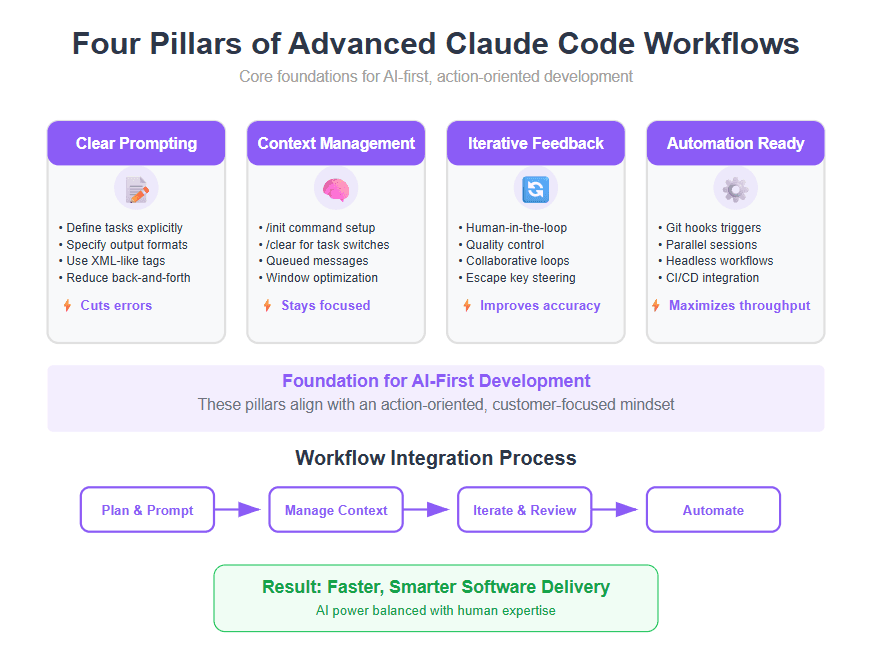

How it differs from existing workflows

Claude Code already offered /loop, Stop hooks, and auto mode. /goal is a session-scoped shortcut that requires only a typed condition, whereas a Stop hook lives in settings files and applies across sessions. Auto mode approves tool calls within a single turn but doesn't start a new one. /goal adds a separate evaluator that checks after every turn, so completion is decided by a fresh model rather than the one doing the work. Auto mode and /goal are complementary: auto mode removes per-tool prompts, and /goal removes per-turn prompts.

The evaluator doesn't run commands or read files independently — it judges the condition against what Claude has surfaced in conversation. An active goal shows a /goal active indicator with elapsed time. Running /goal with no argument displays turns and tokens spent so far. Run /goal clear to stop early.
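The session-scoped rules described in this article (one active goal, a new argument replaces it, no argument reports status, clear stops early, and the goal clears itself once met) amount to a small state machine. The sketch below models only that behavior. `Goal`, `Session`, `handle`, and `condition_met` are hypothetical names chosen for illustration, and nothing here reflects how Claude Code parses commands or formats its status output.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Goal:
    condition: str
    turns: int = 0    # turns spent so far
    tokens: int = 0   # tokens spent so far


class Session:
    """Toy model of the /goal rules above (hypothetical, not Claude Code's code)."""

    def __init__(self) -> None:
        self.goal: Optional[Goal] = None  # at most one active goal per session

    def handle(self, command: str) -> Optional[Goal]:
        """Apply a '/goal ...' command and return the goal active afterwards."""
        name, _, arg = command.strip().partition(" ")
        if name != "/goal":
            raise ValueError(f"not a /goal command: {command!r}")
        arg = arg.strip()
        if arg == "":
            return self.goal       # no argument: report status, change nothing
        if arg == "clear":
            self.goal = None       # stop early
        else:
            self.goal = Goal(arg)  # a new argument replaces any current goal
        return self.goal

    def condition_met(self) -> None:
        """The goal clears automatically once the evaluator reports success."""
        self.goal = None
```

Keeping the goal as a single optional field, rather than a list, makes the "one goal per session" rule impossible to violate in this model.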

Unique take: the evaluator separation matters more than autonomy

The structural innovation isn't that Claude Code can loop; /loop already did that. It's that /goal decouples the worker model from the evaluator model. This keeps the agent from declaring the task complete prematurely, a known failure mode in agentic systems. The approach mirrors Anthropic's broader agent architecture, where Claude Agent uses separate models for planning and execution [As previously reported in Anthropic Research Cuts Agent Misalignment With 7 System Prompt Lessons].

What to watch

Watch for community benchmarks comparing /goal completion rates vs. /loop and Cursor's agent loops on SWE-Bench or similar coding benchmarks. Also track whether Anthropic adds evaluator model selection or custom evaluator prompts in subsequent releases.