Qwen 3.6 27B achieves 34 tokens per second on a MacBook Pro M5 Max with 64GB RAM. The inference runs locally via atomic.chat at a 90% acceptance rate, per @rohanpaul_ai.

Key facts

- Qwen 3.6 27B runs at 34 tok/s on M5 Max 64GB.

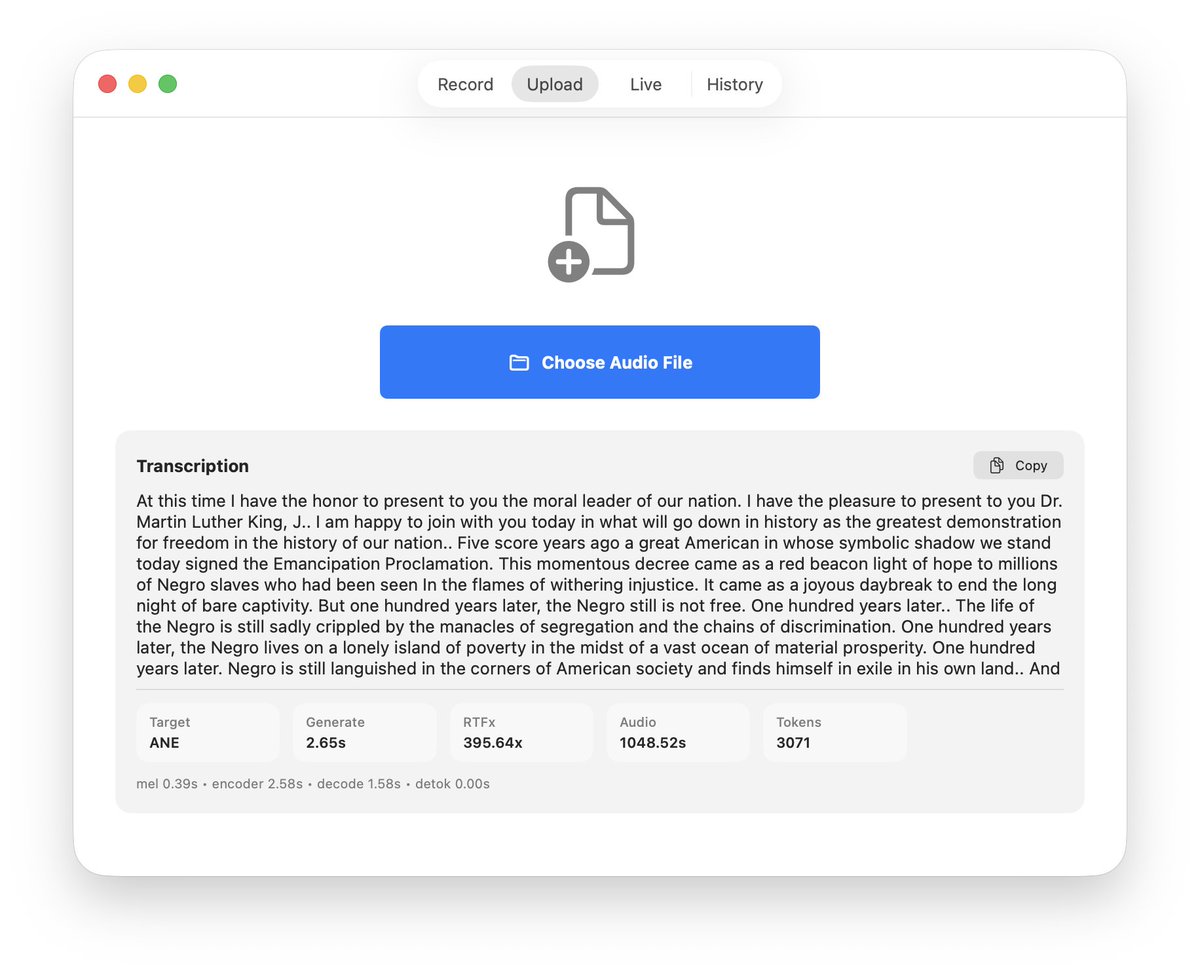

- 90% acceptance rate in atomic.chat local inference.

- M5 Max uses 64GB unified memory for full model fit.

- Model likely uses 4-bit or 8-bit quantization.

- Benchmark shared by @rohanpaul_ai on X.

The Qwen 3.6 27B model, developed by Alibaba's Qwen team, now runs at 34 tokens per second on Apple's latest M5 Max chip with 64GB of unified memory. The benchmark, shared by AI researcher Rohan Paul, shows the model operating locally through the atomic.chat application with a 90% acceptance rate.

This figure matters because it shows that a 27-billion-parameter model can run at interactive speeds on consumer laptop hardware. The M5 Max's 64GB of unified memory lets the entire model sit in RAM, avoiding the latency penalty of swapping to slower storage. The 90% acceptance rate points to speculative decoding, in which a small draft model proposes tokens and the 27B target model verifies them. A high rate means most drafted tokens are kept, which suggests the pipeline is well-tuned; a low rate would throttle effective throughput.
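To make the throughput argument concrete, here is a minimal sketch of the usual expected-tokens estimate for speculative decoding. It assumes the 90% figure is a per-token acceptance probability that is independent across positions, and it uses a hypothetical draft length of 4. The post states neither, so treat the numbers as illustrative only.

```python
def expected_tokens_per_pass(acceptance_rate: float, draft_len: int) -> float:
    """Expected tokens emitted per target-model verification pass.

    Assumption: each drafted token is accepted independently with
    probability `acceptance_rate`. Every pass yields at least one token,
    because the target model supplies a token where the draft is rejected.
    """
    if not 0.0 <= acceptance_rate <= 1.0:
        raise ValueError("acceptance_rate must be between 0 and 1")
    if draft_len < 0:
        raise ValueError("draft_len must be non-negative")
    if acceptance_rate == 1.0:
        # Every drafted token is kept, plus one bonus token from the target.
        return float(draft_len + 1)
    # Geometric series: 1 + a + a^2 + ... + a^draft_len
    return (1.0 - acceptance_rate ** (draft_len + 1)) / (1.0 - acceptance_rate)


# draft_len=4 is a hypothetical setting, not a value from the post.
for rate in (0.5, 0.7, 0.9):
    tokens = expected_tokens_per_pass(rate, draft_len=4)
    print(f"acceptance={rate:.0%}: {tokens:.2f} tokens per pass")
```

Under these assumptions a 90% rate yields roughly 4.1 tokens per verification pass, against roughly 1.9 at 50%, which is why a low acceptance rate would cut effective throughput so sharply.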

Why this matters beyond the tweet

Apple's M-series silicon is closing the gap with dedicated AI accelerators from NVIDIA and AMD. NVIDIA's RTX 4090 can push 40+ tok/s on smaller models, while the M5 Max reportedly reaches 34 tok/s on a 27B model using unified memory and its on-chip GPU, which is the hardware that frameworks such as MLX and llama.cpp target on Apple silicon. The two figures are not a like-for-like comparison, but they suggest local AI inference is viable for developers and researchers who need privacy or offline capability, without requiring a $3,000+ GPU workstation.

Technical considerations

The post does not say whether the run uses quantization, but 34 tok/s at a 27B parameter count implies aggressive compression, likely 4-bit or 8-bit quantization via MLX or a similar framework. The atomic.chat app, which presumably wraps llama.cpp or a similar inference engine, handles model loading and token generation. The 90% acceptance rate is notable for a 27B model, as acceptance rates for larger targets often drop below 80% when the draft and target distributions diverge.
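The quantization inference can be checked with back-of-envelope arithmetic. The sketch below counts weight bytes only, assuming exactly 27e9 parameters and ignoring the KV cache, activations, and per-block quantization scales. The function names are illustrative and not taken from any framework.

```python
PARAMS = 27e9          # parameter count implied by the model name (assumed exact)
REPORTED_TOK_S = 34.0  # throughput reported in the post


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def implied_read_gb_s(params: float, bits_per_weight: float, tok_s: float) -> float:
    """Weight traffic per second if each token needed one full pass over the weights."""
    return weight_gb(params, bits_per_weight) * tok_s


for bits in (4, 8, 16):
    size = weight_gb(PARAMS, bits)
    traffic = implied_read_gb_s(PARAMS, bits, REPORTED_TOK_S)
    print(f"{bits:>2}-bit: {size:5.1f} GB weights, {traffic:6.0f} GB/s implied weight reads")
```

The weights come to about 13.5 GB at 4-bit, 27 GB at 8-bit, and 54 GB at 16-bit. All three fit in 64GB on paper, though 16-bit leaves little headroom for the KV cache and the operating system. Without speculative decoding, 34 tok/s would require about 459 GB/s of weight reads at 4-bit and 918 GB/s at 8-bit. Speculative decoding lowers the real figure because one pass over the weights can emit several tokens.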

What this means for the ecosystem

This benchmark, if reproducible, positions the M5 Max as a credible platform for local LLM deployment. It challenges the assumption that high-performance inference requires cloud GPUs. For enterprises deploying edge AI, this could reduce latency and cost while improving data privacy. However, a single data point from a social media post needs independent verification, whether from official Apple benchmarks or third-party reproductions.

What to watch

Watch for Apple to publish official MLX benchmarks for the M5 Max GPU cores in the coming weeks, and for third-party reproductions on GitHub that confirm or refute the 34 tok/s figure. Also track whether Qwen releases an official MLX-optimized checkpoint for the 3.6 series.
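Anyone attempting a reproduction needs a consistent way to compute tokens per second. The helper below is one hedged sketch: `measure_tok_per_s` is a hypothetical function, not part of atomic.chat, MLX, or llama.cpp, and it assumes the runtime exposes generated tokens as an iterable stream. It excludes the wait for the first token, so prompt processing does not dilute the decode rate.

```python
import time
from typing import Callable, Iterable


def measure_tok_per_s(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Decode throughput: tokens after the first, divided by time since the first."""
    first_at = None
    last_at = None
    count = 0
    for _ in stream:
        now = clock()  # timestamp taken as each token arrives
        if first_at is None:
            first_at = now
        last_at = now
        count += 1
    if count < 2:
        raise ValueError("need at least two tokens to measure a rate")
    elapsed = last_at - first_at
    if elapsed <= 0:
        raise ValueError("clock did not advance between tokens")
    return (count - 1) / elapsed


# Demo with a fake token stream and a fake clock, so the result is deterministic.
fake_times = iter([0.0, 0.5, 1.0, 1.5])
rate = measure_tok_per_s(["a", "b", "c", "d"], clock=lambda: next(fake_times))
print(f"{rate:.1f} tok/s")
```

In a real run the stream would come from the inference runtime and the default `time.perf_counter` clock would be used. Reporting the quantization level, context length, and whether speculative decoding was enabled alongside the number makes results comparable.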