Key Takeaways

- Anthropic investigated and published a post-mortem on reported quality issues with Claude Code, its AI coding assistant.

- The post outlines the team's findings and the fixes taken or planned.

What Happened

Anthropic has published a post-mortem analyzing recent reports of quality issues with Claude Code, its AI-powered coding assistant. The announcement, made on X (formerly Twitter) by a company representative, confirms that the team had been investigating user complaints and has now released a detailed analysis of its findings.

Context

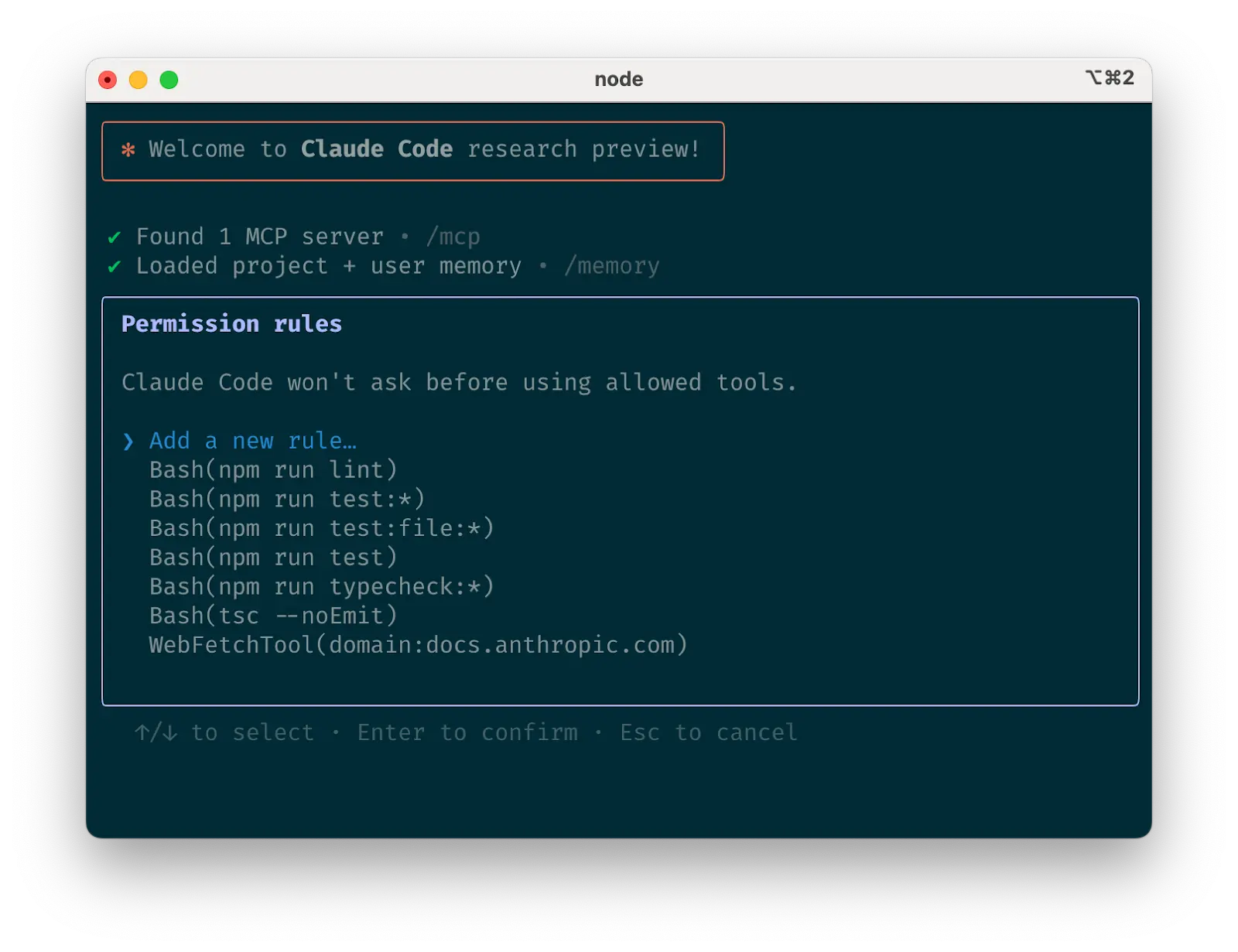

Claude Code, launched as Anthropic's competitor to GitHub Copilot and other AI coding assistants, has been under scrutiny from developers who reported inconsistent code generation quality. Users on platforms like Hacker News and Reddit had noted instances where Claude Code produced suboptimal or incorrect code suggestions, particularly in complex debugging scenarios.

Key Details from the Post-Mortem

The post-mortem reportedly covers:

- Root cause analysis of the quality issues

- Specific scenarios where Claude Code underperformed

- Steps taken or planned to address the problems

- Recommendations for users to mitigate issues

While the tweet does not detail the full contents of the post-mortem, acknowledging the investigation and publishing the findings signal Anthropic's commitment to transparency and improvement.

What This Means in Practice

For developers using Claude Code, the post-mortem should offer concrete guidance on known limitations and workarounds. For the broader AI coding tools market, it underscores the ongoing challenge of maintaining consistent quality in generative coding assistants, where edge cases can significantly erode developer trust.

gentic.news Analysis

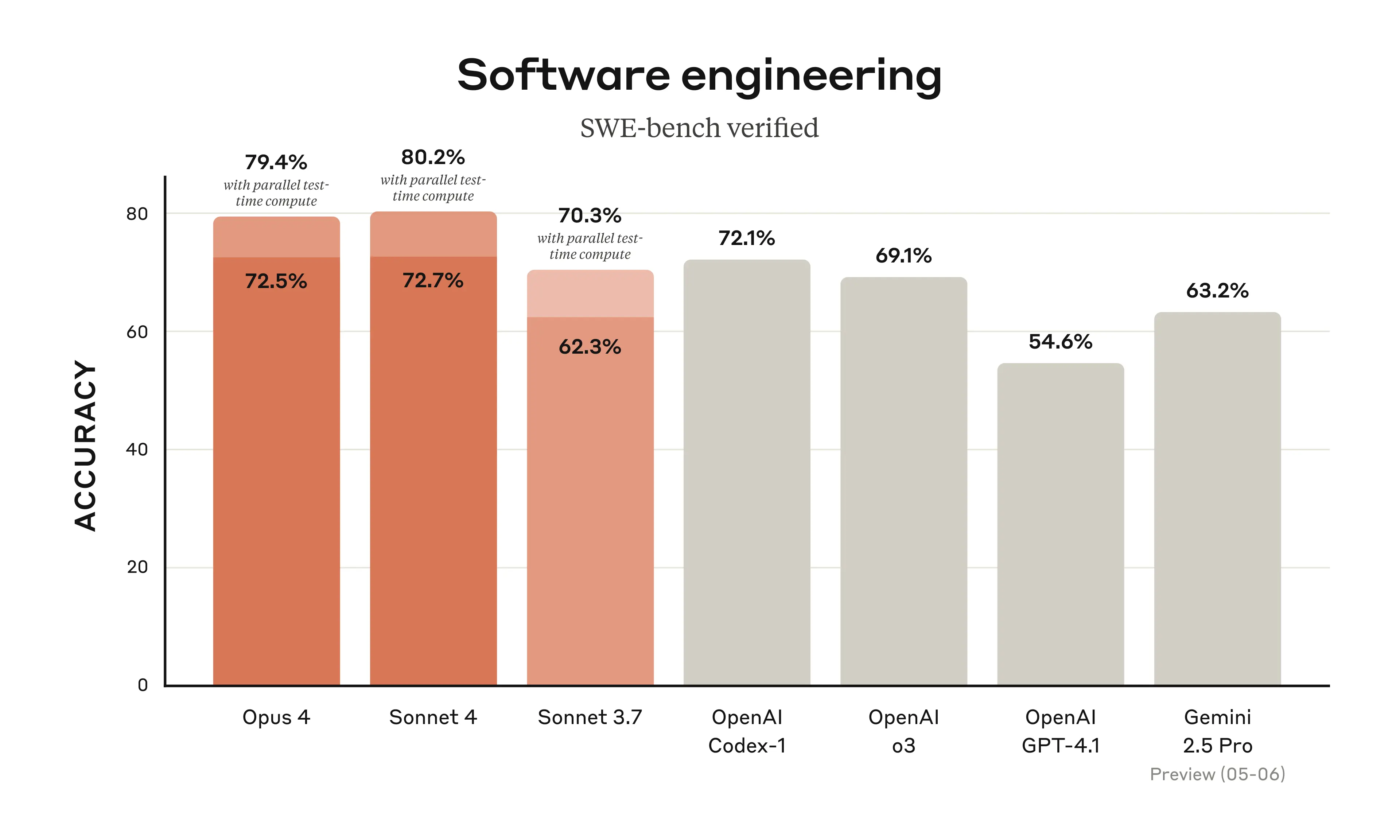

Anthropic's decision to publish a public post-mortem on Claude Code quality issues is noteworthy for several reasons. First, it reflects a shift toward greater transparency in AI product development—a trend we've observed across the industry as companies face increasing scrutiny over model reliability. Second, it acknowledges that even frontier models like Claude can have significant quality gaps in specialized domains like code generation.

The timing is interesting, as the AI coding assistant market has become increasingly competitive. Claude Code launched to strong reviews but has faced sustained pressure from established players like GitHub Copilot and newer entrants like Cursor. This post-mortem could be seen as Anthropic's attempt to maintain developer trust by being upfront about limitations, rather than letting user complaints fester unanswered.

For AI practitioners, this reinforces a key lesson: no coding assistant is infallible. The most successful teams will likely be those that combine AI tooling with robust human review processes, especially for complex or critical code paths.

Frequently Asked Questions

What exactly is Claude Code?

Claude Code is Anthropic's AI-powered coding assistant, designed to help developers write, debug, and review code using natural language prompts. It's built on Anthropic's Claude model family.

What quality issues were reported with Claude Code?

Users reported instances where Claude Code generated incorrect, inefficient, or insecure code suggestions, particularly in complex debugging scenarios or when handling edge cases in large codebases.

How is Anthropic addressing these issues?

According to the post-mortem, Anthropic has conducted a root cause analysis and is implementing fixes. The company has also published guidance for users on how to work around known limitations.

Should I stop using Claude Code because of this?

Not necessarily. The post-mortem provides transparency about limitations, but Claude Code remains a capable tool for many coding tasks. As with any AI coding assistant, best practices include reviewing generated code carefully and using the tool for tasks within its demonstrated capabilities.