What It Does

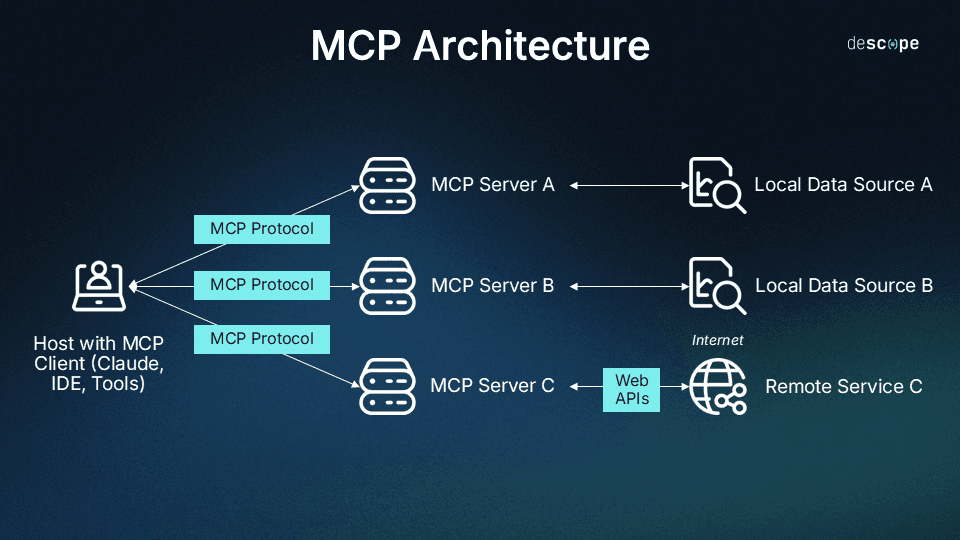

Cloudflare has launched an open-source Model Context Protocol (MCP) server called "Code Mode" that optimizes how code is sent to AI agents like Claude. The server works by preprocessing your code before it reaches the AI, automatically removing comments, whitespace, and other non-essential elements that consume tokens but don't affect functionality.

This goes beyond simple minification: the preprocessing is meant to keep only the parts of your code that matter for AI analysis. When you're working with Claude Code on large codebases, this can mean the difference between hitting context limits and having smooth, uninterrupted sessions.
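The server's internals aren't described here, but a rough idea of what comment and whitespace stripping involves can be sketched in Python. `strip_python_source` below is a hypothetical helper, not part of Code Mode, and it only handles Python source. It uses the standard-library `tokenize` module so that a `#` inside a string literal is not mistaken for a comment, and it leaves multi-line strings such as docstrings untouched.

```python
import io
import tokenize


def strip_python_source(source: str) -> str:
    """Remove comments, blank lines, and trailing spaces from Python source.

    Hypothetical helper for illustration only. Docstrings are kept.
    Limitation: on Python 3.12+, f-strings are tokenized as separate
    pieces, so multi-line f-strings are not protected below.
    """
    tokens = list(tokenize.generate_tokens(io.StringIO(source).readline))

    # Lines from the start of a multi-line string up to (not including) its
    # closing line are copied verbatim, so blank lines and trailing spaces
    # that belong to the string's value survive.
    verbatim_rows = set()
    for tok in tokens:
        if tok.type == tokenize.STRING and tok.end[0] > tok.start[0]:
            verbatim_rows.update(range(tok.start[0], tok.end[0]))

    # Drop COMMENT tokens; untokenize() pads the gap with spaces and keeps
    # one output line per line of the original source.
    code = tokenize.untokenize(t for t in tokens if t.type != tokenize.COMMENT)

    kept = []
    for row, line in enumerate(code.split("\n"), start=1):
        if row in verbatim_rows:
            kept.append(line)
        elif line.strip():
            kept.append(line.rstrip())
    return "\n".join(kept) + "\n"


sample = '''def add(a, b):
    # Add two numbers.
    return a + b  # simple sum


print(add(2, 3))  # prints 5
'''
stripped = strip_python_source(sample)
print(stripped)
print(f"{len(sample)} -> {len(stripped)} characters")
```

Working from tokens rather than regular expressions is the design choice that matters here: a naive "delete everything after `#`" rule would corrupt string literals such as URLs with fragments.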

Setup

Installation is straightforward via npm:

```bash
npm install -g @cloudflare/code-mode-mcp-server
```

Then configure it in your Claude Desktop settings (claude_desktop_config.json):

```json
{
  "mcpServers": {
    "code-mode": {
      "command": "npx",
      "args": ["@cloudflare/code-mode-mcp-server"],
      "env": {
        "CODEPILOT_MAX_TOKENS": "8000",
        "CODEPILOT_STRIP_COMMENTS": "true"
      }
    }
  }
}
```
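If you already have other MCP servers configured, pasting the snippet above by hand can overwrite them. The following Python sketch merges the `code-mode` entry into an existing config instead. `with_code_mode` and `update_config_file` are hypothetical helpers, not part of the package, and you pass in the config file's path yourself since its location depends on your operating system.

```python
import copy
import json
from pathlib import Path

# Entry copied from the configuration shown above.
CODE_MODE_ENTRY = {
    "command": "npx",
    "args": ["@cloudflare/code-mode-mcp-server"],
    "env": {
        "CODEPILOT_MAX_TOKENS": "8000",
        "CODEPILOT_STRIP_COMMENTS": "true",
    },
}


def with_code_mode(config: dict) -> dict:
    """Return a copy of `config` with the "code-mode" server added.

    Other top-level settings and other MCP servers are left untouched.
    """
    updated = copy.deepcopy(config)
    servers = updated.setdefault("mcpServers", {})
    servers["code-mode"] = copy.deepcopy(CODE_MODE_ENTRY)
    return updated


def update_config_file(path: Path) -> None:
    """Read, update, and rewrite a claude_desktop_config.json file."""
    config = json.loads(path.read_text()) if path.exists() else {}
    path.write_text(json.dumps(with_code_mode(config), indent=2) + "\n")
```

Keeping the merge as a pure function on a dict makes it easy to check before anything is written to disk.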

The server exposes tools that Claude Code can call automatically when processing code files. You don't need to change your workflow: install it and let it work in the background.
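Under the hood, an MCP client such as Claude Code talks to a server like this one over JSON-RPC 2.0, and over the stdio transport each message is a single line of JSON. The Python sketch below builds the two requests involved in using a tool: `tools/list` to discover what the server offers and `tools/call` to invoke one. The tool name `strip_code` and its `path` argument are hypothetical placeholders, since the server's actual tool names aren't given here, and a real session also starts with an `initialize` handshake that is omitted.

```python
import json
from itertools import count
from typing import Optional

# Each request needs an id so the client can match it to the server's reply.
_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request as one line of JSON.

    json.dumps without indent never emits raw newlines (newlines inside
    strings are escaped), which suits a newline-delimited transport.
    """
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# 1. Ask the server which tools it offers.
list_request = jsonrpc_request("tools/list")

# 2. Invoke one of them. "strip_code" and "path" are hypothetical names.
call_request = jsonrpc_request(
    "tools/call",
    {"name": "strip_code", "arguments": {"path": "src/app.py"}},
)

print(list_request)
print(call_request)
```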

When To Use It

This MCP server shines in three specific scenarios:

Large Code Reviews: When asking Claude to analyze a pull request with 20+ files, Code Mode can reduce token usage by 40-70% by stripping out developer comments and formatting.

Legacy Code Analysis: Older codebases often have extensive documentation blocks and commented-out code. Code Mode cleans this up before sending to Claude, letting you focus on actual logic.

Cost-Sensitive Development: If you're monitoring your Claude API usage, the savings add up quickly. Fewer tokens per request means more iterations for the same budget.
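The 40-70% range translates into budget terms with simple arithmetic: if a fraction r of the tokens is removed, each request costs (1 − r) of what it did, so a fixed budget covers 1/(1 − r) times as many requests. That works out to about 1.7× at 40% and about 3.3× at 70%. A small Python sketch, using a hypothetical 50,000-token review as input:

```python
def tokens_after(tokens: int, reduction: float) -> int:
    """Tokens left after removing the given fraction (0.4 means 40%)."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return round(tokens * (1.0 - reduction))


def budget_multiplier(reduction: float) -> float:
    """How many times more requests fit in the same token budget."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return 1.0 / (1.0 - reduction)


# Hypothetical review of 50,000 tokens, at both ends of the quoted range.
review_tokens = 50_000
for reduction in (0.40, 0.70):
    print(
        f"{reduction:.0%} reduction: {tokens_after(review_tokens, reduction):,} tokens, "
        f"{budget_multiplier(reduction):.2f}x the iterations per budget"
    )
```

The same multiplier applies to a fixed context window: if files shrink by 40-70%, roughly 1.7 to 3.3 times as much code fits in a single request.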

Try it with this prompt in Claude Code:

```text
Review this entire directory structure and suggest architectural improvements.

[Attach your project]
```

Notice how Claude can now process more files in a single context window, thanks to the optimized code transmission.

gentic.news Analysis

This follows Cloudflare's strategic push into the AI infrastructure space, building on their previous launch of Workers AI in late 2023. The company has been steadily expanding its developer tooling ecosystem, positioning itself as more than just a CDN provider.

This aligns with the broader trend we've covered in "MCP Servers Every Developer Should Install": specialized tools that optimize specific aspects of the AI development workflow. Cloudflare's entry into the MCP ecosystem is significant because it brings production-scale infrastructure thinking to token optimization, a problem that affects every Claude Code user working with real-world codebases.

The timing is particularly interesting given Anthropic's recent context window expansions. While Claude can now handle more tokens, efficient token usage remains critical for cost control and latency. Cloudflare's approach of preprocessing data before it reaches the AI plays to its core competency in edge computing.

For Claude Code users, this represents a practical solution to a daily pain point: paying for tokens that don't contribute to the actual analysis. As more companies enter the MCP ecosystem with specialized servers, we're seeing the emergence of a true toolchain for AI-assisted development.