One challenge in artificial intelligence has proven stubbornly resistant to solution: the reliability of AI-generated answers. While models like ChatGPT, Claude, and Gemini have demonstrated remarkable capabilities, their tendency to produce confident-sounding but factually incorrect responses, known as hallucinations, has limited their utility for critical applications. Now, a Boston-based startup called CollectivIQ is proposing a different approach: if one AI model can't be trusted, why not consult them all?

The Multi-Model Approach

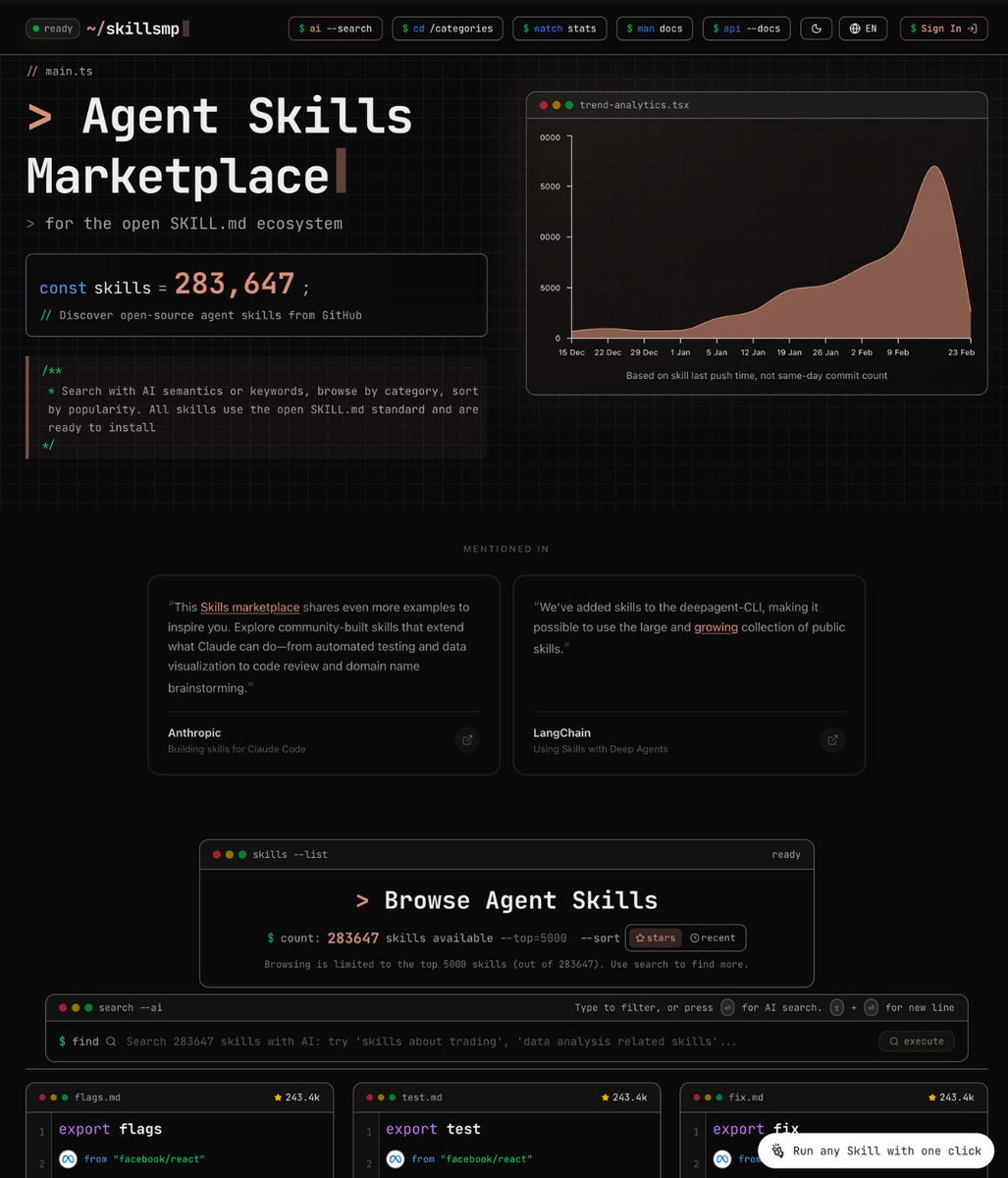

CollectivIQ, incubated at hospitality procurement enterprise Buyers Edge Platform, represents a significant departure from the single-model approach that has dominated the AI landscape. The platform aggregates responses from up to 14 different language models simultaneously, including industry leaders like ChatGPT, Gemini, Claude, and Grok, along with up to 10 additional specialized models.

The concept emerged from a practical business need. John Davie, founder and CEO of Buyers Edge Platform, sought to leverage AI for his enterprise but found existing solutions inadequate. "When he looked around, the CEO wasn't satisfied with the options," according to the original report. This dissatisfaction led to the development of CollectivIQ, which applies a "wisdom of the crowd" approach to AI responses.

Technical Implementation and User Experience

From a technical perspective, CollectivIQ's approach involves querying multiple AI models in parallel, then aggregating and presenting the results. Users submit a single query, and the platform distributes it across its network of connected models. The responses are then compiled and presented side by side so that users can compare answers, identify points of consensus, and spot potential inaccuracies where models disagree.

This methodology addresses several key limitations of individual models. Different AI systems have varying strengths—some excel at creative tasks, others at technical analysis, and still others at factual recall. By leveraging multiple models simultaneously, CollectivIQ aims to provide more comprehensive and reliable answers than any single model could deliver alone.
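The fan-out and comparison flow described above can be illustrated with a minimal Python sketch. It is not CollectivIQ's implementation, which has not been published. The model names, the `ask_model` stub, and the exact-match normalization are all hypothetical stand-ins chosen to keep the example self-contained.

```python
import asyncio
from collections import Counter


async def ask_model(name: str, query: str) -> str:
    """Hypothetical stand-in for a real model client; returns a canned answer."""
    canned = {"model_a": "Paris", "model_b": "paris ", "model_c": "Lyon"}
    await asyncio.sleep(0)  # placeholder for network latency
    return canned[name]


def normalize(answer: str) -> str:
    """Crude normalization so trivially different answers compare equal."""
    return answer.strip().lower()


async def fan_out(query: str, models: list) -> dict:
    """Send the same query to every model concurrently."""
    answers = await asyncio.gather(*(ask_model(m, query) for m in models))
    return dict(zip(models, answers))


def summarize(responses: dict) -> dict:
    """Find the most common answer and list the models that disagree with it."""
    counts = Counter(normalize(a) for a in responses.values())
    top, votes = counts.most_common(1)[0]
    dissenters = sorted(m for m, a in responses.items() if normalize(a) != top)
    return {
        "consensus": top,
        "agreement": votes / len(responses),
        "dissenters": dissenters,
    }


def run(query: str, models: list) -> dict:
    return summarize(asyncio.run(fan_out(query, models)))


if __name__ == "__main__":
    print(run("What is the capital of France?", ["model_a", "model_b", "model_c"]))
```

A real system would need a far more forgiving comparison than exact string matching, since free-form answers rarely agree word for word. The sketch only shows the shape of the idea: one query goes out, many answers come back, and disagreement is surfaced rather than hidden.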

The Hallucination Problem in Context

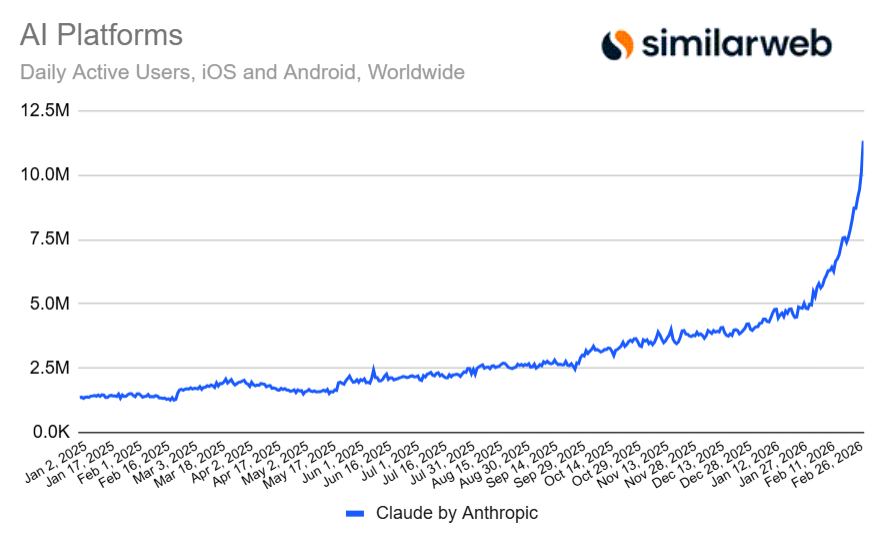

The timing of CollectivIQ's approach is notable given recent developments in the AI landscape. Just days before the company's pitch was reported, Claude was said to have demonstrated awareness of unfolding geopolitical events in Iran, suggesting progress in real-time information processing. Meanwhile, Claude was the only major AI model to show progress in avoiding factual inaccuracies on BullshitBench v2, a benchmark for measuring hallucination rates.

These developments highlight both the progress being made in individual models and the persistent nature of the reliability challenge. Even as models improve, the fundamental architecture of large language models—which generate responses based on statistical patterns rather than factual databases—makes complete elimination of hallucinations difficult.

Industry Implications and Competitive Landscape

CollectivIQ's approach represents a potential shift in how enterprises might deploy AI technology. Rather than committing to a single vendor's ecosystem, businesses could use aggregation platforms to access the best capabilities across multiple providers. This could reduce vendor lock-in and create more competitive dynamics in the AI market.

The platform also addresses the growing concern about AI reliability in professional contexts. As noted in recent industry analysis, "rapid advancement of AI capabilities threatens traditional software models." Traditional enterprise software has typically prioritized reliability and accuracy over cutting-edge capabilities, but AI systems have reversed this priority. CollectivIQ's multi-model approach attempts to bridge this gap by combining cutting-edge capabilities with improved reliability through redundancy.

Challenges and Limitations

Despite its innovative approach, CollectivIQ faces several significant challenges. The platform's effectiveness depends on the diversity and quality of its connected models. If multiple models share similar training data or architectural approaches, they may produce correlated errors rather than independent validations, in which case agreement among them is weaker evidence of correctness than it appears.
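To see why correlation matters, consider a simplified model, offered here as an illustration rather than a measurement of any real system. Assume `n` models that are each wrong with probability `p`, and a majority vote over their answers. The `shared` parameter is a hypothetical knob: with that probability all models fail or succeed together, and otherwise they err independently.

```python
from math import comb


def majority_error_independent(n: int, p: float) -> float:
    """Probability that a strict majority of n independent models is wrong.

    Use an odd n so that ties cannot occur.
    """
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


def majority_error_correlated(n: int, p: float, shared: float) -> float:
    """Majority error when a fraction `shared` of queries hit a common failure mode.

    With probability `shared`, every model gives the same (right or wrong) answer;
    otherwise the models err independently. Each model's own error rate stays p.
    """
    return shared * p + (1 - shared) * majority_error_independent(n, p)


if __name__ == "__main__":
    for shared in (0.0, 0.5, 1.0):
        print(shared, majority_error_correlated(5, 0.2, shared))
```

Under these assumptions, five fully independent models with a 20% individual error rate would be outvoted by wrong answers in under 6% of cases, but if their errors are fully shared, the vote is no better than a single model at 20%.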

Additionally, the computational cost of querying multiple models simultaneously is substantially higher than using a single model, since every additional model adds its own inference cost to each query. This could limit the platform's scalability or make it cost-prohibitive for some applications. The user interface poses its own challenge: showing multiple conflicting answers could overwhelm users rather than provide clarity.
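The cost concern can be made concrete with a back-of-the-envelope sketch. It assumes per-token pricing, which is common among model providers but is not something the report states about CollectivIQ. The model names and prices below are invented for illustration only.

```python
def query_cost(prices: dict, prompt_tokens: int, output_tokens: int) -> float:
    """Total cost of sending one query to every model in `prices`.

    `prices` maps a model name to (input price, output price) per 1,000 tokens,
    in arbitrary currency units.
    """
    return sum(
        (prompt_tokens * p_in + output_tokens * p_out) / 1000
        for p_in, p_out in prices.values()
    )


# Hypothetical prices, not taken from any real provider.
PRICES = {
    "model_a": (1.0, 2.0),
    "model_b": (0.5, 1.5),
    "model_c": (2.0, 4.0),
}

single = query_cost({"model_a": PRICES["model_a"]}, 500, 1000)
fanout = query_cost(PRICES, 500, 1000)

if __name__ == "__main__":
    print(single, fanout, fanout / single)
```

With these made-up numbers, fanning out to three models costs several times what a single call does, and the gap widens with every model added. Latency behaves differently: when calls run in parallel, the user waits roughly as long as the slowest model takes, not the sum of all of them.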

Future Directions and Market Potential

The success of CollectivIQ could inspire similar approaches across the AI industry. We might see the emergence of specialized aggregation services for different domains—legal AI, medical AI, creative AI—each curating their own selection of models optimized for specific use cases.

This development also raises interesting questions about the future of AI model development. If aggregation platforms become widespread, model developers might optimize not just for absolute performance but for complementary capabilities that make their models valuable additions to aggregation networks.

Conclusion: A Step Toward Trustworthy AI

CollectivIQ represents an important experiment in addressing one of AI's most persistent challenges. By acknowledging that no single model can be completely reliable and instead creating systems that leverage multiple perspectives, the platform offers a pragmatic approach to improving AI trustworthiness.

As AI systems become increasingly integrated into critical business processes and decision-making, solutions like CollectivIQ's multi-model aggregation may become essential infrastructure. The platform's success will depend not just on its technical implementation but on whether it can demonstrably improve outcomes for users facing high-stakes questions where accuracy matters more than creativity.

In an industry often focused on building ever-larger models, CollectivIQ's approach reminds us that sometimes the most innovative solutions come not from building something new, but from finding smarter ways to use what already exists.