Key Takeaways

- Researchers propose that LLM-based assistants are reconfiguring how user representations are produced and exposed, requiring a shift toward inspectable, portable, and revisable user models across services.

- They identify five research fronts for the future of recommender systems.

What Happened

A new paper published on arXiv (2604.20065) argues that the rise of LLM-based assistants is fundamentally reconfiguring the personalization stack. The authors — from the computer science and information retrieval community — contend that the key issue is not simply that LLMs can improve recommendation quality, but that they change where and how user representations are produced, exposed, and acted upon.

The Core Argument: From Hidden Profiles to Governable Personalization

Traditionally, personalization has relied on platform-specific user models — hidden profiles built by Amazon, Netflix, or Spotify from your behavior within their walled gardens. These models are optimized for prediction but remain largely inaccessible to the people they describe. You can't see what Spotify thinks you like, let alone correct it or take that profile to another service.

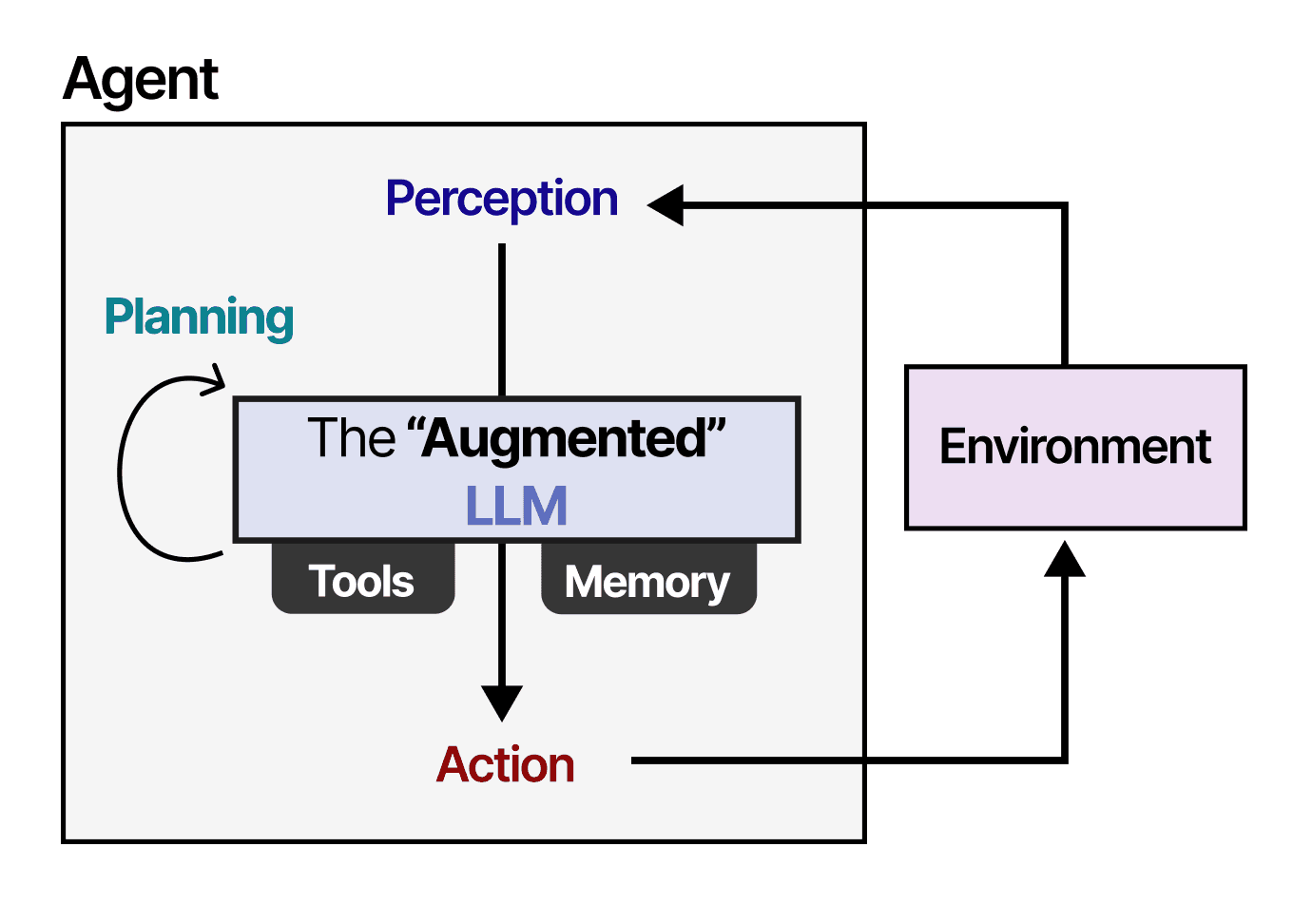

The paper's central claim is that LLM-based assistants — think ChatGPT, Claude, or custom shopping agents — are breaking this model. As these agents increasingly mediate search, shopping, travel, and content access, user representation is no longer confined to isolated platforms. The agent might have its own memory of your preferences, built from conversations and interactions across multiple services.

This creates both an opportunity and a challenge. The authors propose a shift toward "governable personalization" — systems where user representations become:

- Inspectable: Users can see what the system thinks about them

- Revisable: Users can correct or update their profiles

- Portable: User models can move between services

- Consequential: Users understand how their representations affect what they see
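The four properties can be made concrete with a small data structure. The sketch below is our illustration, not the paper's design: the `Preference` and `GovernableUserModel` names, their fields, and the JSON export format are hypothetical assumptions. Each method maps to one property: `inspect` (inspectable), `revise` (revisable), `export`/`from_export` (portable), and `explain` (consequential).

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class Preference:
    """One belief the system holds about the user (hypothetical schema)."""
    key: str                 # attribute name, e.g. "color"
    value: str               # believed preference, e.g. "navy"
    source: str              # provenance, e.g. "purchase_history" or "user"
    user_edited: bool = False


@dataclass
class GovernableUserModel:
    user_id: str
    preferences: dict[str, Preference] = field(default_factory=dict)

    def inspect(self) -> list[dict]:
        """Inspectable: expose every stored belief, including where it came from."""
        return [asdict(p) for p in self.preferences.values()]

    def revise(self, key: str, value: str | None) -> None:
        """Revisable: the user overwrites a belief, or deletes it by passing None."""
        if value is None:
            self.preferences.pop(key, None)
        else:
            self.preferences[key] = Preference(key, value, source="user", user_edited=True)

    def export(self) -> str:
        """Portable: serialize to plain JSON that another service could import."""
        return json.dumps({"user_id": self.user_id, "preferences": self.inspect()})

    @classmethod
    def from_export(cls, payload: str) -> GovernableUserModel:
        """Rebuild a model from the JSON produced by export()."""
        data = json.loads(payload)
        model = cls(data["user_id"])
        for entry in data["preferences"]:
            pref = Preference(**entry)
            model.preferences[pref.key] = pref
        return model

    def explain(self, item: dict[str, str]) -> list[str]:
        """Consequential: list which stored beliefs match a recommended item."""
        return [
            f"{key}={pref.value} (from {pref.source})"
            for key, pref in self.preferences.items()
            if item.get(key) == pref.value
        ]


if __name__ == "__main__":
    model = GovernableUserModel("customer-123")
    model.preferences["color"] = Preference("color", "navy", source="purchase_history")
    model.revise("material", "cashmere")  # the user states a preference explicitly
    print(model.explain({"color": "navy", "material": "cashmere"}))
    # ['color=navy (from purchase_history)', 'material=cashmere (from user)']
    restored = GovernableUserModel.from_export(model.export())
    print(restored.inspect() == model.inspect())  # True
```

Tagging each belief with its `source` is one simple way to serve inspection and accountability at once; a real system would still need the privacy safeguards the paper lists as an open problem.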

Five Research Fronts for Recommender Systems

The paper identifies five interconnected research challenges:

Transparent yet privacy-preserving user modeling: How do you show users their profiles without exposing sensitive data or enabling surveillance?

Intent translation and alignment: How do LLM agents accurately interpret user intent across different contexts and translate that into effective recommendations?

Cross-domain representation and memory design: How do you build user models that work across shopping, travel, content, and other domains without losing context or creating contradictions?

Trustworthy commercialization in assistant-mediated environments: How do you monetize recommendations through agents without eroding trust or creating perverse incentives?

Operational mechanisms for ownership, access, and accountability: Who owns the user model? How is access controlled? What happens when the model is wrong?

Technical Context

This paper arrives amid growing interest in agentic systems. The POTEMKIN framework, which we covered on April 22, exposed critical trust gaps in agentic AI tools, and the GraphRAG-IRL paper (also April 22) proposed a hybrid framework for more robust personalized recommendations. This new paper takes a step back, asking not "how do we make better recommendations?" but "who controls the user model that powers them?"

The paper also builds on recent arXiv research diagnosing failure modes in LLM-based rerankers for cold-start recommendation (April 21) and analyzing exploration saturation in recommender systems (also April 21). The authors position their five research fronts not as isolated technical challenges but as interconnected design problems created by the emergence of LLM agents as intermediaries.

Retail & Luxury Implications

For luxury and retail, this paper touches on several directly relevant pain points:

The walled garden problem: Luxury brands have long struggled with platform dependency; your customer profile on Farfetch doesn't help you personalize on your own direct-to-consumer (DTC) site. Governable personalization could enable portable user models that follow customers across brand sites, marketplaces, and physical stores.

The concierge agent scenario: Imagine a customer asks an LLM agent to "find me a navy blue cashmere sweater under €2,000." The agent might know from memory that the customer prefers Loro Piana, wears a size 48, and bought a similar item last season. That's powerful, but who owns that memory: the brand, the platform, or the customer?

Transparency as luxury: High-net-worth customers value control and discretion. A system that lets them inspect, correct, and govern their preference model could be a differentiator — especially in markets where customers are wary of opaque data collection.

Cross-brand personalization: A customer might want their preferences from Brunello Cucinelli to inform recommendations at Zegna, but not at fast-fashion competitors. Governable personalization could enable this selectively.
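Selective portability amounts to a customer-controlled sharing policy. The sketch below assumes a simple default-deny model in which the customer grants specific preference keys from one brand to another; the `ConsentPolicy` class, the brand identifiers, and the profile fields are hypothetical, and the paper does not prescribe this mechanism.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ConsentPolicy:
    """Customer-controlled rules for moving preferences between brands (hypothetical)."""
    # (source brand, destination brand) -> preference keys the customer allows to flow
    grants: dict[tuple[str, str], set[str]] = field(default_factory=dict)

    def allow(self, source: str, destination: str, keys: set[str]) -> None:
        """Grant the destination access to the listed keys from the source."""
        self.grants.setdefault((source, destination), set()).update(keys)

    def revoke(self, source: str, destination: str) -> None:
        """Withdraw every grant for this source/destination pair."""
        self.grants.pop((source, destination), None)

    def share(self, source: str, destination: str, profile: dict[str, str]) -> dict[str, str]:
        """Return only the granted keys; with no grant, nothing is shared (default deny)."""
        allowed = self.grants.get((source, destination), set())
        return {k: v for k, v in profile.items() if k in allowed}


if __name__ == "__main__":
    profile = {"size": "48", "palette": "earth tones", "budget_eur": "2000"}
    policy = ConsentPolicy()
    policy.allow("brunello_cucinelli", "zegna", {"size", "palette"})
    print(policy.share("brunello_cucinelli", "zegna", profile))
    # {'size': '48', 'palette': 'earth tones'}
    print(policy.share("brunello_cucinelli", "fast_fashion_brand", profile))
    # {}
```

Keying grants on the (source, destination) pair makes sharing directional: allowing Brunello Cucinelli data to inform Zegna does not let Zegna data flow back unless the customer grants that separately.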

Business Impact

The paper is conceptual rather than empirical: it doesn't report experimental results or deployment case studies. Still, the implications for retail are potentially significant:

Reduced platform dependency: If user models become portable, brands may rely less on Amazon/Google/Facebook for personalization and more on direct customer relationships mediated by LLM agents.

New trust economics: Brands that offer transparent, governable personalization may earn greater customer trust, and possibly the pricing power that comes with it, compared to brands that rely on hidden profiling.

Implementation complexity: The paper's five research fronts are genuinely hard problems. Privacy-preserving transparency, cross-domain memory, and trustworthy commercialization remain unsolved. Our own estimate, not the paper's, is that production-ready solutions are likely 3-5 years away.

Implementation Approach

For retail AI teams, the paper's framing suggests several practical starting points:

Audit your user models: Can your customers see what you know about them? Can they correct it? If not, you're vulnerable to the shift the paper describes.

Experiment with portable profiles: Test whether customers want to bring their preferences from one brand to another. Even simple surveys can reveal demand.

Invest in intent translation: The paper highlights that LLM agents need to translate vague user intent into precise recommendations. This is directly applicable to conversational commerce interfaces.

Monitor the agent ecosystem: As LLM agents (like those from Anthropic or OpenAI) become intermediaries, retail brands need to understand how these agents will access and use their product catalogs and customer data.
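Intent translation can be illustrated with the concierge example from earlier in this article. The sketch assumes an LLM has already turned the request "find me a navy blue cashmere sweater under €2,000" into structured fields; that extraction step is not shown. `Constraint`, `build_query`, and the field names are hypothetical. The design choice it illustrates: explicit request fields always override remembered preferences, memory only fills gaps, and every constraint records its origin so the customer can see, and challenge, what the agent inferred.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Constraint:
    name: str      # catalog attribute, e.g. "color"
    value: object  # required value or upper bound
    origin: str    # "request" or "memory:<owner>", kept for auditability


def build_query(request: dict[str, object], memory: dict[str, object],
                memory_owner: str) -> list[Constraint]:
    """Merge explicit request fields with remembered preferences.

    Request fields always win; memory fills only the fields the request left open.
    """
    constraints = [Constraint(k, v, "request") for k, v in request.items()]
    for key, value in memory.items():
        if key not in request:
            constraints.append(Constraint(key, value, f"memory:{memory_owner}"))
    return constraints


if __name__ == "__main__":
    # Assumed output of an LLM parsing step for the concierge request.
    request = {"category": "sweater", "color": "navy",
               "material": "cashmere", "max_price_eur": 2000}
    # Assumed agent memory; the remembered color conflicts with the request.
    memory = {"brand": "Loro Piana", "size": "48", "color": "black"}
    for c in build_query(request, memory, memory_owner="assistant"):
        print(f"{c.name}={c.value!r} [{c.origin}]")
```

The `origin` tag is also where the ownership question surfaces in practice: whoever controls `memory_owner` controls which remembered preferences silently shape the query.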

Governance & Risk Assessment

- Privacy: Transparent user modeling could expose sensitive preference data. The paper acknowledges this tension but doesn't solve it.

- Bias: Portable user models could perpetuate or amplify biases across services. A biased model built on one platform could propagate into recommendations on every service that imports it.

- Maturity: This is research, not production. The paper is a call to action, not a blueprint. Retail leaders should monitor progress but not bet the roadmap on it yet.

gentic.news Analysis


The paper's emphasis on "governable personalization" aligns with broader trends toward AI alignment and user agency — topics we've covered extensively (11 prior articles on AI alignment). The authors explicitly connect their work to the AI alignment literature, arguing that recommender systems should be aligned not just with platform goals but with user intentions.

Notably, the paper doesn't mention specific retail or luxury applications; the retail framing here is our extrapolation, not the authors'. But the cross-domain representation challenge they identify is directly relevant to luxury conglomerates like LVMH or Kering, which manage multiple brands with overlapping customer bases. A customer who shops at Dior and Louis Vuitton might want their preferences to flow between the two, but not to Sephora. That's exactly the kind of selective portability the paper envisions.

The paper also connects to our recent coverage of LLM limitations for scientific discovery (April 21), which examined a Columbia professor's argument that LLMs are fundamentally interpolation-based machines. This paper implicitly addresses that critique by arguing that LLMs' value in personalization lies not in generating new knowledge but in reconfiguring who controls existing knowledge about users.

For retail AI leaders, the key takeaway is strategic: the personalization stack is likely to be disrupted, and the brands best positioned may be those that give users genuine control over their own preference models. The technology isn't ready today, but the direction is clear.