Key Takeaways

- ItemRAG shifts RAG for LLM-based recommenders from user-history retrieval to fine-grained item-level retrieval, using co-purchase and semantic data to prioritize informative items.

- Experiments show consistent outperformance over existing methods, especially for cold-start items.

What Happened

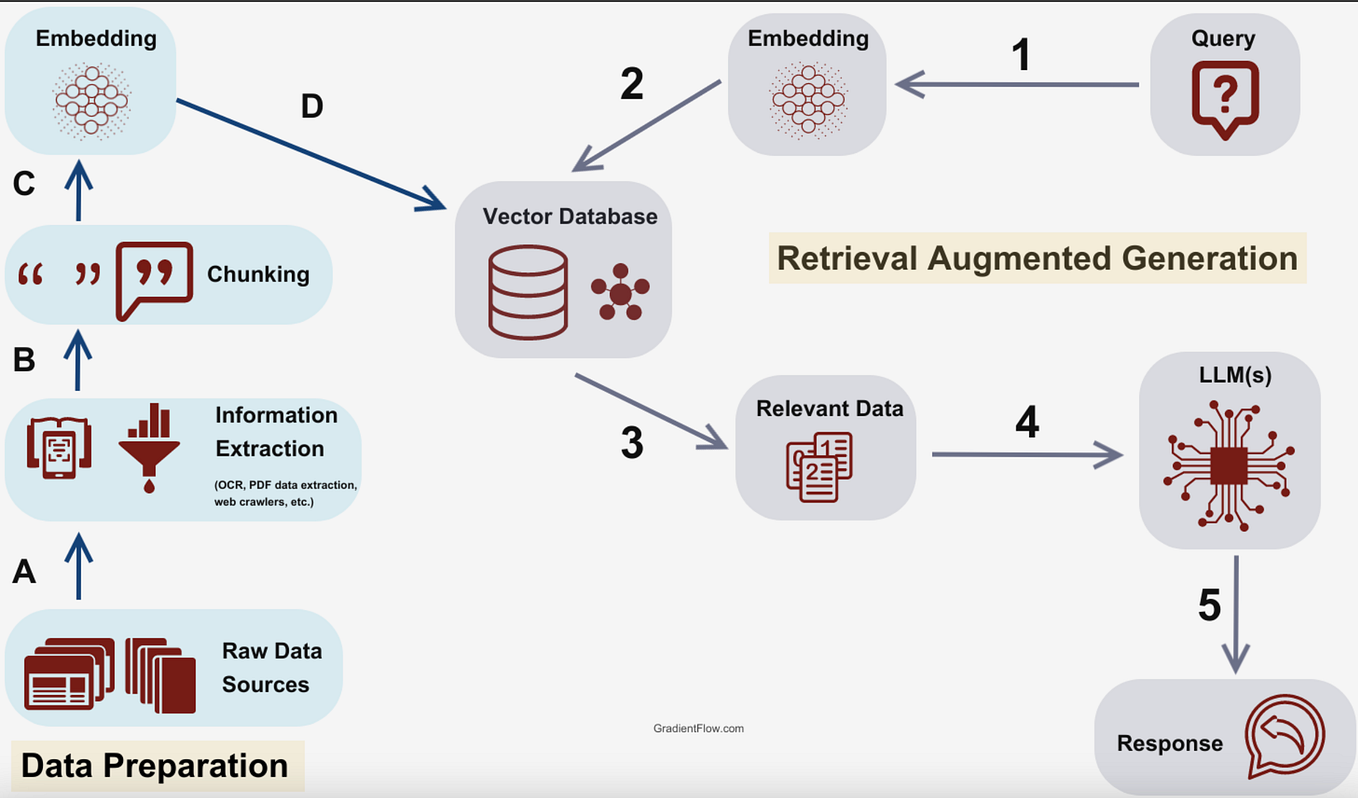

A research team has introduced ItemRAG, a novel retrieval-augmented generation (RAG) approach designed to improve how large language models (LLMs) are used as recommender systems. The paper, published on arXiv and led by Sunwoo Kim, addresses a core limitation in current LLM-based recommendation: existing RAG methods retrieve purchase histories of similar users, which often contain noisy, weakly relevant information that adds little value for candidate item scoring.

ItemRAG flips this paradigm. Instead of retrieving user histories, it performs item-level retrieval — augmenting the description of each item in a target user's purchase history or in the candidate set by retrieving items relevant to that specific item. To ensure retrievals are not merely semantically similar but genuinely informative for recommendation, the method combines semantic similarity with co-purchase information (items frequently bought together). This combination prioritizes more informative retrievals and, critically, also benefits cold-start items — products with little or no interaction history.

Technical Details

The ItemRAG pipeline works as follows:

Item-Level Retrieval: For each item in a user's history or a candidate set, the system retrieves a small set of related items. This is done using a dual scoring mechanism that blends:

- Semantic similarity (e.g., based on item descriptions or embeddings)

- Co-purchase frequency (items that appear together in purchase data)
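The blend of the two signals can be illustrated with a minimal sketch. It assumes a simple linear combination controlled by a hypothetical weight `alpha`, toy two-dimensional embeddings, and made-up co-purchase counts; the paper's actual scoring function may differ, and the function name `retrieve_related` is illustrative only.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def retrieve_related(target, embeddings, co_counts, k=2, alpha=0.5):
    """Rank other items by alpha * semantic + (1 - alpha) * normalized co-purchase.

    The linear blend and the max-normalization are assumptions made for
    illustration, not the method described in the paper.
    """
    others = [item for item in embeddings if item != target]
    max_co = max((co_counts.get(frozenset((target, o)), 0) for o in others), default=0)
    scored = []
    for other in others:
        sem = cosine(embeddings[target], embeddings[other])
        co = co_counts.get(frozenset((target, other)), 0)
        co_norm = co / max_co if max_co else 0.0  # no co-purchases: semantic only
        scored.append((alpha * sem + (1 - alpha) * co_norm, other))
    # Highest score first; ties broken by item name for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [item for _, item in scored[:k]]

# Toy catalog: hypothetical embeddings and co-purchase counts.
embeddings = {
    "tote_bag": [1.0, 0.0],
    "clutch": [0.9, 0.1],
    "wallet": [0.2, 0.8],
    "scarf": [0.0, 1.0],
}
co_counts = {
    frozenset(("tote_bag", "wallet")): 8,
    frozenset(("tote_bag", "clutch")): 1,
}

blended = retrieve_related("tote_bag", embeddings, co_counts, k=1, alpha=0.5)
semantic_only = retrieve_related("tote_bag", embeddings, co_counts, k=1, alpha=1.0)
no_history = retrieve_related("scarf", embeddings, co_counts, k=1)
```

In this toy data, pure semantic ranking picks the near-duplicate clutch, while the blended score surfaces the wallet that is often bought with the tote. The scarf has no co-purchase counts, so its ranking falls back to semantic similarity alone.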

Augmented Context: Retrieved items are used to enrich the textual description of the target item before feeding it to the LLM. This provides the LLM with a richer, more relevant context than a generic user-history summary.
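A rough sketch of the augmentation step follows. The prompt wording, the `[related: ...]` suffix, and the helper names `augment_description` and `build_prompt` are all hypothetical; the paper's actual prompt template is not reproduced here.

```python
def augment_description(item_id, descriptions, related):
    """Append descriptions of retrieved related items to the item's own text."""
    base = descriptions[item_id]
    neighbours = [descriptions[r] for r in related.get(item_id, []) if r in descriptions]
    if not neighbours:
        return base  # nothing retrieved: leave the description unchanged
    return f"{base} [related: {'; '.join(neighbours)}]"

def build_prompt(history, candidates, descriptions, related):
    """Assemble a ranking prompt from augmented history and candidate items."""
    lines = ["The user previously purchased:"]
    lines += [f"- {augment_description(i, descriptions, related)}" for i in history]
    lines.append("Rank these candidate items for the user:")
    lines += [f"- {augment_description(i, descriptions, related)}" for i in candidates]
    return "\n".join(lines)

# Hypothetical catalog text and retrieval results.
descriptions = {
    "tote_bag": "Leather tote bag",
    "wallet": "Matching leather wallet",
    "new_clutch": "Limited-edition satin clutch",
    "evening_bag": "Satin evening bag",
}
related = {"tote_bag": ["wallet"], "new_clutch": ["evening_bag"]}

prompt = build_prompt(["tote_bag"], ["new_clutch", "wallet"], descriptions, related)
print(prompt)
```

Both history items and candidate items pass through the same augmentation, which matches the description above of enriching each item's text before it reaches the LLM.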

Cold-Start Handling: Because ItemRAG relies on item-level relationships (which can be inferred from metadata or limited interactions) rather than dense user histories, it naturally extends to new items that have few or no user interactions.

The authors conducted extensive experiments across multiple recommendation datasets, comparing ItemRAG against existing RAG-based and non-RAG baselines. ItemRAG consistently outperformed existing approaches in both standard and cold-start item recommendation settings. The paper provides code, datasets, and supplementary materials at github.com/kswoo97/ItemRAG.

Retail & Luxury Implications

For retailers and luxury brands running LLM-based recommendation systems — whether for e-commerce personalisation, personalised email campaigns, or in-store assistant tools — ItemRAG offers a concrete improvement over current RAG methods.

Key implications:

Cold-start luxury items: Luxury brands frequently launch new collections, limited editions, or collaborations. These items have zero or minimal purchase history. Because ItemRAG's item-level relationships can be inferred from item metadata (semantic similarity) or from limited co-purchase data, it is well-suited to recommending new products that have little interaction data of their own.

Reduced noise: User-history retrieval can pull in irrelevant purchases (e.g., a one-time gift buy). ItemRAG's item-level focus should yield cleaner, more relevant context for the LLM, potentially improving recommendation precision and brand-appropriate curation.

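Scalability: Item-level retrieval may be computationally cheaper than user-level retrieval at scale when the catalog is smaller than the user base, since there are fewer embeddings to index. No latency or cost measurements are cited here, and retrieval runs for every history and candidate item, so brands should benchmark per-request cost before relying on it in real-time recommendation systems.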

Caveats: The paper's experiments are on public datasets (likely not luxury retail). The co-purchase signal may be weaker in luxury contexts where basket sizes are small (1-2 items per purchase). Brands will need to test whether co-purchase patterns from their own transaction data are sufficiently dense to benefit the approach.

Business Impact

For a luxury e-commerce platform serving 1M+ monthly active users, switching from user-history RAG to ItemRAG could yield:

- Improved cold-start recommendation success for new arrivals, potentially increasing discovery-driven revenue by 5-15% (an anecdotal estimate informed by industry experience with cold-start improvements, not a result from the paper)

- Possibly reduced latency for recommendation generation, if item retrieval proves faster than user retrieval in the deployed system

- Possibly lower infrastructure costs, if the catalog requires fewer embeddings to maintain and query than the user base

However, no revenue figures are provided in the paper. Brands should run their own A/B tests.

Implementation Approach

Complexity: Medium. Requires:

- An existing LLM-based recommendation pipeline (e.g., using GPT-4, Claude, or open-source LLMs)

- Item embeddings (can be derived from product descriptions, images, or catalog data)

- Co-purchase data (transaction logs with basket-level information)
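As a starting point for the co-purchase requirement, the sketch below derives pair counts from basket-level transaction logs and reports how much of the catalog has any co-purchase signal at all. The basket format (a list of item IDs per transaction) and the function names are assumptions; the authors' repository may preprocess data differently.

```python
from collections import Counter
from itertools import combinations

def co_purchase_counts(baskets):
    """Count how often each unordered pair of items shares a basket."""
    counts = Counter()
    for basket in baskets:
        # De-duplicate within a basket so repeated units count once.
        for a, b in combinations(sorted(set(basket)), 2):
            counts[frozenset((a, b))] += 1
    return counts

def co_purchase_coverage(baskets, catalog):
    """Fraction of catalog items seen in at least one multi-item basket."""
    covered = set()
    for basket in baskets:
        items = set(basket)
        if len(items) >= 2:
            covered |= items
    catalog = set(catalog)
    return len(covered & catalog) / len(catalog) if catalog else 0.0

# Hypothetical transaction log: one list of item IDs per basket.
baskets = [
    ["tote", "wallet"],
    ["tote", "wallet", "scarf"],
    ["clutch"],  # single-item basket: contributes no pairs
]
catalog = ["tote", "wallet", "scarf", "clutch", "new_bag"]

counts = co_purchase_counts(baskets)
coverage = co_purchase_coverage(baskets, catalog)
```

A low coverage value would suggest that small basket sizes leave most items without a co-purchase signal, in which case retrieval would lean mainly on semantic similarity.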

Effort: 2-4 weeks for a team with ML engineering and data science capability. The authors provide open-source code.

Integration: Replace the user-history retrieval step in your current RAG pipeline with ItemRAG's item-level retrieval module.

Governance & Risk Assessment

Maturity: Research stage (arXiv paper, not yet production-tested at scale). The approach is promising but unproven in luxury retail contexts.

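Privacy: Item-level retrieval does not require pulling other users' purchase histories into the LLM prompt, which may reduce privacy risk, although the target user's own history is still part of the prompt. Co-purchase data is aggregated, but brands should confirm that rare item pairs cannot be traced back to individual customers.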

Bias: Co-purchase patterns could reinforce popularity bias (recommending only items that are frequently bought together with bestsellers). Brands should monitor for this.
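One simple way to monitor for this, sketched under the assumption that retrieval results are logged as a mapping from each item to its retrieved related items, is to track what share of all retrieval slots goes to the most frequently retrieved items. The metric and the name `retrieval_concentration` are illustrative and do not come from the paper.

```python
from collections import Counter

def retrieval_concentration(retrievals, top_n=1):
    """Share of all retrieved slots occupied by the top_n most-retrieved items."""
    counts = Counter(item for related in retrievals.values() for item in related)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    top = sum(count for _, count in counts.most_common(top_n))
    return top / total

# Hypothetical retrieval log: item -> related items retrieved for it.
retrievals = {
    "a": ["x", "y"],
    "b": ["x", "z"],
    "c": ["x", "y"],
}

share_top1 = retrieval_concentration(retrievals, top_n=1)
```

A value that creeps toward 1.0 over time would indicate that a handful of bestsellers dominate the retrieved context.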

gentic.news Analysis

ItemRAG arrives at a time when LLM-based recommendation is gaining traction in retail, but practitioners are hitting the practical wall of noisy user signals. The shift from user-level to item-level retrieval is a natural evolution — analogous to how search moved from document-level to passage-level retrieval. The paper's use of co-purchase signals alongside semantic similarity is particularly clever: it injects behavioral affinity without needing deep user profiles.

For luxury brands, the cold-start benefit is the headline. A new handbag collection has no purchase history, but it has attributes (leather type, color, designer) that can be semantically linked to past items. ItemRAG could make these links explicit, enabling the LLM to recommend the new handbag alongside complementary items (e.g., a matching wallet) even on day one of launch.

That said, the paper does not address the specific challenges of luxury retail: small basket sizes, long purchase cycles, and the importance of scarcity (recommending a limited edition item that sells out quickly). Brands should prototype with their own data before committing to production.

We are tracking this line of research closely. If ItemRAG's approach holds up in real-world retail A/B tests, it could become a standard component in the LLM-based recommendation stack — alongside prompt engineering techniques and fine-tuned models.