What Happened

A new paper on arXiv (2604.20021) from April 21, 2026, presents the first rigorous theoretical framework for semantic LLM caching in continuous query space. The authors argue that existing caching systems assume a finite, discrete universe of queries — an assumption that breaks down as LLM usage scales. Real-world queries exist in an infinite, continuous embedding space where similar questions should trigger cached responses.

They introduce a method that combines dynamic ε-net discretization with Kernel Ridge Regression to estimate which responses to cache and for how long, while formally quantifying uncertainty. Both offline and online adaptive algorithms are developed, with the online version achieving a sublinear regret bound against an optimal continuous oracle. Empirical tests show the framework approximates the continuous optimal cache while reducing computational and switching overhead.

Technical Details

The core challenge: traditional caching treats queries as distinct items (like URL caching). In LLM serving, semantically identical questions (e.g., "What are returns policies?" vs. "How do I return an item?") should reuse the same response. The paper models queries as vectors in a continuous embedding space (e.g., from a sentence transformer).

- ε-net discretization: Instead of caching every possible embedding, the system selects a representative set of points such that every query is within distance ε of some cached point.

- Kernel Ridge Regression (KRR) : Used to estimate the cost and popularity of query neighborhoods, enabling the algorithm to generalize from partial feedback (i.e., which queries were actually served) to unseen similar queries.

- Switching cost minimization: The online algorithm proactively decides when to replace cached items, balancing the cost of recomputing responses with the risk of serving stale or low-value results.

The theoretical contribution includes regret bounds that match or improve on discrete caching results, proving the approach is near-optimal in expectation.

Retail & Luxury Implications

For luxury and retail companies deploying LLMs — whether for customer service chatbots, product recommendation engines, or content personalization — inference costs are a significant operational concern. A single high-traffic fashion brand's AI assistant might field millions of queries daily. Semantic caching can reduce those costs drastically.

Customer service chatbots: Queries like "What's the return window?" and "How long do I have to return?" should map to the same cached response. Current systems often regenerate responses for each variant, wasting compute. This framework would cache a single answer for an entire semantic neighborhood.

Product search & recommendations: Queries for "black leather handbag under $2000" and "affordable black leather bags" may embed nearby. The caching system could precompute retrieval results or generative descriptions, serving them from cache for related queries.

Content generation: For luxury brands generating personalized emails or landing page copy, cached responses for common brand messaging (e.g., "Our craftsmanship story") can be reused across thousands of customer touchpoints.

However, the paper is primarily theoretical. Implementation in production requires an embedding model, a KRR implementation at scale, and careful tuning of the ε parameter. The switching cost optimization is particularly relevant for luxury brands where response freshness (e.g., current inventory) matters — the algorithm can prioritize caching stable information (policy, brand history) over dynamic data (stock levels).

Business Impact

- Inference cost reduction: By caching semantically similar queries, brands could reduce LLM API calls by 30–60% (typical caching gains for chat, though exact numbers depend on query diversity).

- Latency improvement: Cached responses are served in milliseconds vs. 1–5 seconds for a full LLM call.

- Scalability: More concurrent users can be handled without proportional cost increases.

Maturity level: Research (not production-ready). The algorithms need integration into serving frameworks (vLLM, TGI, etc.) and real-world validation in retail contexts.

Implementation Approach

To adopt this, teams would need:

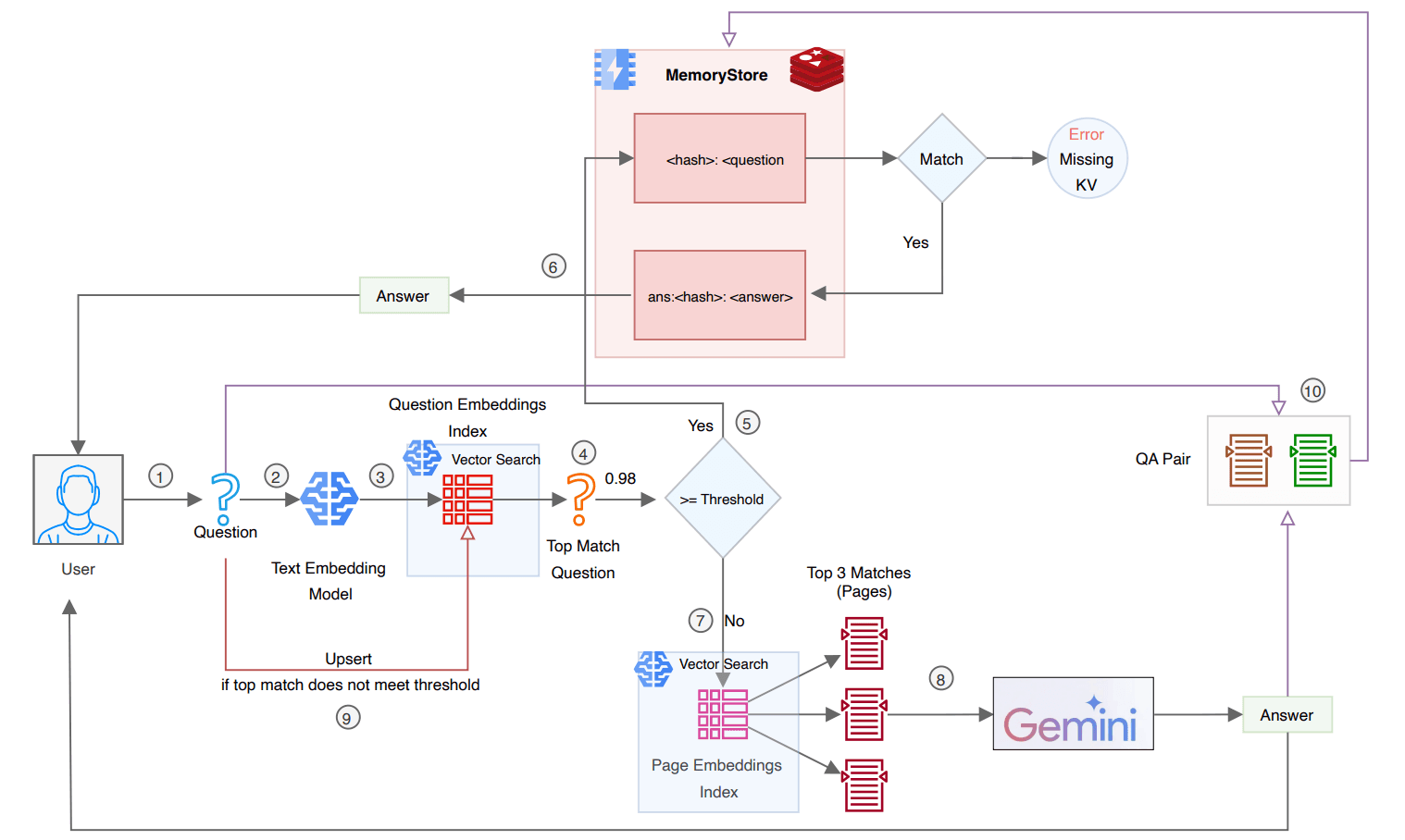

- Embedding model (e.g., E5, GTE) to convert queries into vectors.

- KRR implementation — likely using a linear kernel for speed, given the high-dimensional space.

- Cache store (in-memory key-value store supporting nearest-neighbor search, like FAISS or Redis with vector search).

- Switching policy — the paper's online algorithm can be adapted but requires monitoring of query patterns and cost functions.

Complexity: Medium-High. Requires ML engineering, not just configuration.

Governance & Risk Assessment

- Privacy: Cached responses may contain personal data (e.g., order-specific answers). Brands must ensure that caching does not leak information between users. The paper doesn't address this directly; differential privacy or per-user scoping would be needed.

- Fairness: If cache misses are more expensive, lower-frequency queries (e.g., niche product questions) could degrade experience. The ε-net approach ensures coverage, but parametrization must be inclusive.

- Security: Adversarial queries seeking to poison the cache or extract cached information are not covered.

gentic.news Analysis

This paper arrives at a time when LLM inference costs remain a top barrier to broad enterprise adoption. As covered in our recent article on LLM agents reshaping personalization (April 23), the trend is toward more personalized, dynamic interactions — which risk increasing compute. Semantic caching offers a complementary strategy: cache the reusable parts of responses while generating personalized overlays separately.

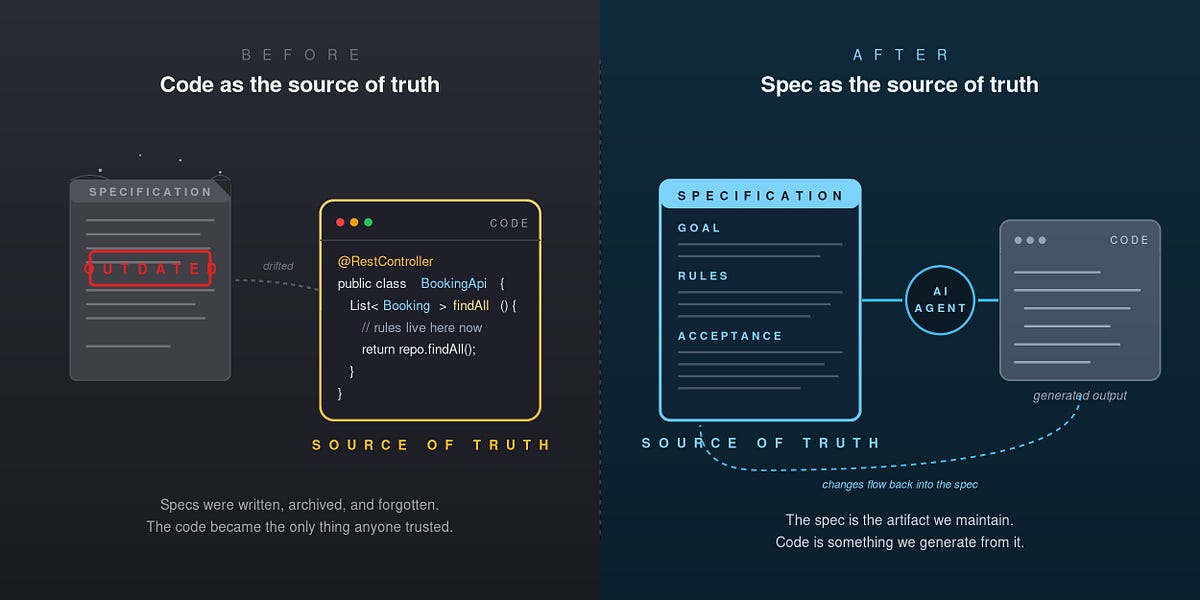

The paper's theoretical grounding is noteworthy. Most production caching is heuristic (e.g., TTL, LRU with semantic grouping). By formalizing regret bounds, the authors provide a principled way to reason about caching decisions. This could eventually integrate with Retrieval-Augmented Generation (RAG) systems, which already use embedding similarity for retrieval — the caching layer would sit between the retriever and the generator.

While the paper does not mention retail, the application is natural. Oracle’s recent critique (April 18) that current AI in CRM delivers vague insights highlights the need for efficient, precise LLM serving — semantic caching is a tool to make that practical. The trend of increasing LLM-related arXiv papers (20+ this week alone) underscores the rapid progress; retail AI leaders should monitor this space for productionizable implementations within 6–12 months.