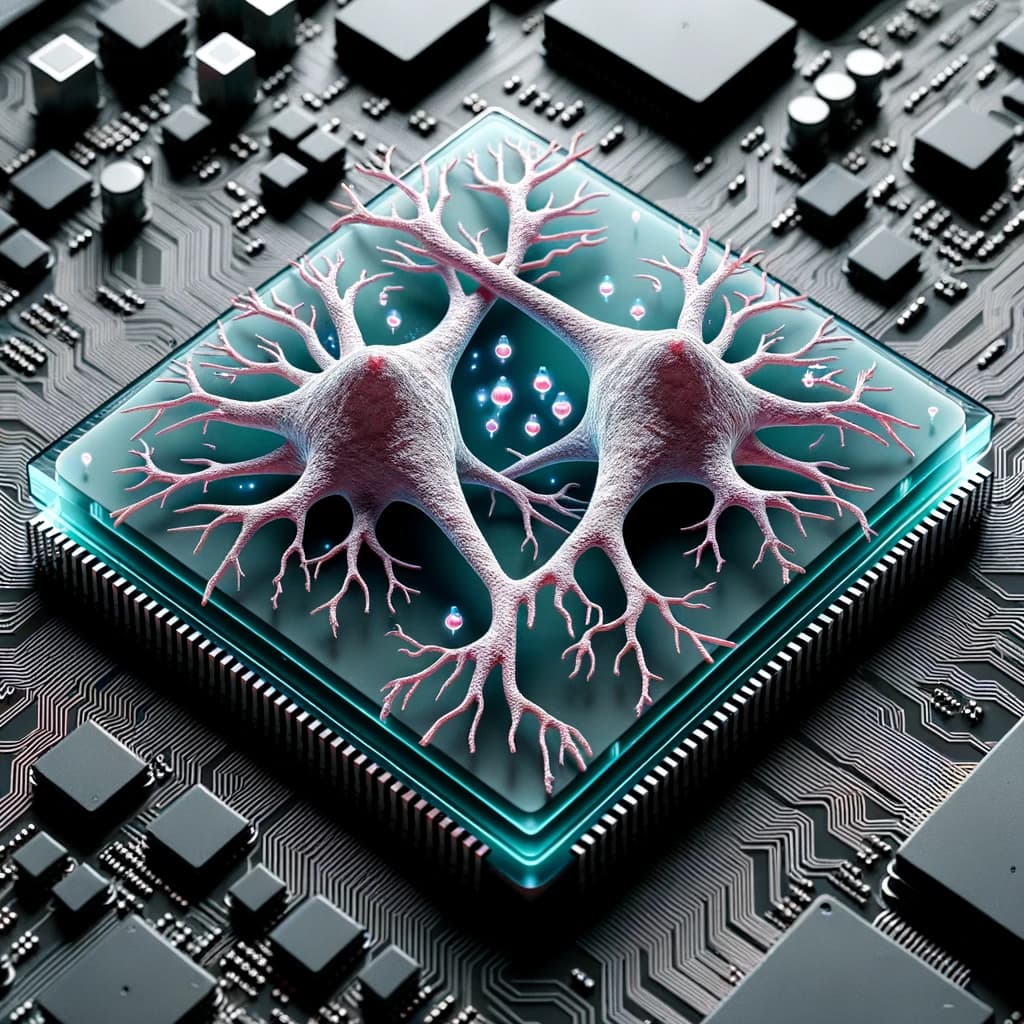

A research team at Cortical Labs has reportedly connected a living network of human brain cells, grown on a chip, to a large language model (LLM). The work is a tangible step toward exploring hybrid biological-artificial intelligence systems.

Key Takeaways

- Cortical Labs grew approximately 200,000 human neurons on a chip and connected them to a large language model.

- This experiment explores hybrid biological-silicon intelligence.

What Happened

According to a report shared on social media, researchers at Cortical Labs grew approximately 200,000 human neurons on a specialized multi-electrode array chip and then interfaced this biological neural network with a software-based LLM. The experiment builds on the lab's earlier demonstration, in which it taught similar neural cultures to play a simplified version of the video game Pong.

The core achievement is the creation of a functional bridge between wetware (living biological tissue) and traditional silicon-based AI software. The neurons are derived from human stem cells and are maintained in a controlled environment that allows them to form functional connections and exhibit electrical activity. This activity can be recorded, interpreted, and potentially used to influence or augment the processing of a digital AI model.

Context

Cortical Labs, an Australia-based startup, has been a notable player in the field of biological computing. Their foundational technology, which they have dubbed "DishBrain," involves growing cortical neurons (brain cells) on high-density microelectrode arrays. These arrays can both stimulate the neurons and record the electrical "spikes" they fire in response. The Pong-playing experiment, published in the journal Neuron in 2022, showed that these cultures could self-organize and exhibit adaptive, goal-directed behavior when given structured sensory feedback, which is a primitive form of learning.

Connecting such a system to an LLM is a logical, yet ambitious, next step. It probes a fundamental question: can the inherent efficiency, pattern recognition, and low-power learning capabilities of biological neural tissue be harnessed to work in concert with the vast knowledge and linguistic prowess of a large-scale artificial neural network?

Technical Implications & Challenges

The technical hurdles for this kind of integration are significant. They involve:

- Real-time Bi-directional Communication: Establishing a pipeline where neural activity can be encoded into a format an LLM can process, and where the LLM's outputs can be translated back into precise electrical stimulation patterns the neurons can interpret.

- Signal Interpretation: Neural spike trains are noisy and complex. Decoding any "meaning" or intentional state from 200,000 neurons is a monumental challenge in neuroscience itself.

- System Stability: Keeping a living neural culture alive, healthy, and functionally stable outside a biological body for extended periods is non-trivial.
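To make the signal-interpretation hurdle above more concrete, here is a minimal sketch of one common first step: finding spikes in a noisy single-electrode trace with an amplitude threshold, then counting them in time bins. It is not Cortical Labs' pipeline, which the report does not describe. The function names, the threshold multiplier `k`, the refractory window, and the synthetic trace are all assumptions chosen for illustration.

```python
import random
import statistics


def detect_spikes(samples, k=5.0, refractory=30):
    """Return indices where the trace drops below median - k * sigma.

    sigma is estimated from the median absolute deviation (MAD), which is
    less distorted by the spikes themselves than a plain standard deviation.
    """
    med = statistics.median(samples)
    mad = statistics.median([abs(s - med) for s in samples])
    sigma = mad / 0.6745  # MAD-to-sigma factor for Gaussian noise
    threshold = med - k * sigma
    spikes = []
    last = -refractory  # allows a spike at index 0
    for i, s in enumerate(samples):
        # Skip crossings inside the refractory window of the previous spike.
        if s < threshold and i - last >= refractory:
            spikes.append(i)
            last = i
    return spikes


def bin_counts(spike_indices, n_samples, bin_size):
    """Count spikes per fixed-size bin, a crude firing-rate feature."""
    counts = [0] * ((n_samples + bin_size - 1) // bin_size)
    for i in spike_indices:
        counts[i // bin_size] += 1
    return counts


# Synthetic trace (an assumption, not real data): clipped Gaussian noise
# with three artificial negative deflections standing in for spikes.
rng = random.Random(0)
trace = [max(-3.0, min(3.0, rng.gauss(0.0, 1.0))) for _ in range(2000)]
for t in (300, 900, 1500):
    trace[t] = -12.0

spikes = detect_spikes(trace)
print(spikes)
print(bin_counts(spikes, len(trace), 500))
```

Even this toy version has two tuning knobs (`k` and `refractory`) for a single channel. Doing the same across thousands of electrodes, and then assigning any meaning to the resulting counts, is where the real difficulty lies.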

The experiment, as described, is likely a proof of concept that demonstrates the physical and engineering feasibility of the connection. The more profound scientific question, namely what unique computational advantages such a hybrid system might offer, remains largely unexplored and is the subject of ongoing research.

gentic.news Analysis

This development sits at the converging point of two major, long-term trends we track: organoid intelligence and heterogeneous AI systems. Cortical Labs is not operating in a vacuum. Their work directly parallels and competes with initiatives like the FELIX project at Indiana University Bloomington and research consortia exploring "brain-on-a-chip" technologies. The field's goal is to move beyond simply mimicking neural networks in software to incorporating actual biological neural computation.

From an AI engineering perspective, the most immediate implication is not a practical product but a research paradigm. This work provides a physical testbed for theories of embodied cognition and learning. An LLM is a static, pre-trained model; the living neural network is dynamic and plastic. Studying their interaction could yield new insights into how grounding and real-time adaptation, both of which current LLM architectures lack, could be injected into large language models.

However, it's critical to temper expectations. The computational capacity of 200,000 neurons is minuscule compared to the hundreds of billions of parameters in a modern LLM or the ~86 billion neurons in a human brain. This is a sandbox for exploring principles, not a rival to GPT or Claude. The real signal here is the sustained investment and progress in creating operable biological-computer interfaces, a foundational technology that may, over decades, inform new AI architectures or specialized processing units.
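The size of that gap can be worked out directly from the two neuron counts quoted above. This is a back-of-the-envelope sketch only: the constant names are illustrative, and the parameter comparison is left out because the article gives only "hundreds of billions" rather than a specific figure.

```python
CULTURE_NEURONS = 200_000               # approximate count reported for the chip
HUMAN_BRAIN_NEURONS = 86_000_000_000    # the ~86 billion figure quoted above

fraction = CULTURE_NEURONS / HUMAN_BRAIN_NEURONS
ratio = HUMAN_BRAIN_NEURONS // CULTURE_NEURONS

print(f"culture / brain = {fraction:.2e}")  # culture / brain = 2.33e-06
print(f"brain / culture = {ratio:,}")       # brain / culture = 430,000
```

In other words, the culture holds roughly two millionths of the neurons in a human brain, or about one neuron for every 430,000.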

Frequently Asked Questions

What is Cortical Labs?

Cortical Labs is an Australian biotechnology startup focused on developing biological computing systems. Their core technology involves growing functional networks of human neurons on semiconductor chips to create hybrid biological-silicon processors.

How do you connect brain cells to an AI model?

The connection is made via a multi-electrode array chip. The neurons are grown directly on this chip, which can record their electrical activity (spikes) and deliver precise electrical stimuli. The recorded activity is digitized and processed by interface software that translates between the neural signals and the data format used by the LLM, and vice versa.
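As a rough illustration of what such interface software has to do, the sketch below runs one pass of a closed loop: per-electrode firing rates are encoded as text, handed to a model, and the reply is decoded into a stimulation mask. Everything here is hypothetical. The text encoding, the `STIM` reply convention, and `stub_llm` (a stand-in that calls no real model) are assumptions made for the example, not details from the report.

```python
from typing import Callable, List


def encode_rates_as_text(rates_hz: List[float]) -> str:
    """Turn per-channel firing rates into a plain-text prompt fragment."""
    parts = [f"ch{idx}={rate:.1f}Hz" for idx, rate in enumerate(rates_hz)]
    return "Neural activity: " + ", ".join(parts)


def decode_text_to_stimulation(reply: str, n_channels: int) -> List[int]:
    """Map a reply such as 'STIM 0 3' to a 0/1 mask, one entry per electrode.

    The 'STIM' convention is invented for this sketch; anything that does
    not match it yields an all-zero mask (no stimulation).
    """
    mask = [0] * n_channels
    tokens = reply.split()
    if tokens and tokens[0] == "STIM":
        for tok in tokens[1:]:
            if tok.isdigit() and int(tok) < n_channels:
                mask[int(tok)] = 1
    return mask


def stub_llm(prompt: str) -> str:
    """Stand-in for a model call: ask to stimulate the most active channel."""
    fields = prompt.split(": ", 1)[1].split(", ")
    best_idx, best_rate = 0, float("-inf")
    for field in fields:
        name, value = field.split("=")
        rate = float(value[:-2])  # strip the trailing 'Hz'
        if rate > best_rate:
            best_idx, best_rate = int(name[2:]), rate
    return f"STIM {best_idx}"


def closed_loop_step(rates_hz: List[float], llm: Callable[[str], str]) -> List[int]:
    """One record -> encode -> model -> decode -> stimulate pass."""
    prompt = encode_rates_as_text(rates_hz)
    reply = llm(prompt)
    return decode_text_to_stimulation(reply, len(rates_hz))


print(closed_loop_step([2.0, 9.5, 0.0, 4.0], stub_llm))
```

Passing the model in as a plain callable keeps the encoding and decoding steps testable on their own. A real system would also have to deal with timing, since stimulation needs to follow recording quickly for the culture to treat it as feedback.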

What is the purpose of combining neurons with an LLM?

The primary purpose is fundamental research. Scientists aim to study whether biological neural networks can complement AI systems, potentially offering advantages in energy efficiency, adaptive learning, or handling ambiguous real-world data. It's an exploration of a new paradigm for computation, not an attempt to immediately build a better chatbot.

Is this like a "brain in a jar" controlling an AI?

No, that is a significant oversimplification and mischaracterization. The neural culture is not a conscious "brain." It is a simplified, two-dimensional layer of cells that exhibits basic network activity. It lacks the structure, sensory organs, and complexity of any brain region. Think of it more as a novel, biologically derived sensor or co-processor being studied for its computational properties.