Key Takeaways

- A new arXiv preprint presents DharmaOCR, a pair of small language models (7B and 3B parameters) fine-tuned for structured OCR.

- The authors introduce a new benchmark and use Direct Preference Optimization (DPO) to sharply reduce 'text degeneration', a key cause of performance failures, while the models output structured JSON.

- The authors claim superior accuracy and lower cost than proprietary OCR APIs.

What Happened

A new research paper, posted to the arXiv preprint server on April 15, 2026, introduces DharmaOCR, a pair of specialized small language models (SSLMs) designed for structured Optical Character Recognition (OCR). The work addresses a critical, often overlooked problem in production OCR systems: text degeneration. This is when a model gets stuck in a loop, generating repetitive or nonsensical text, which not only ruins output quality but also cripples system performance by inflating response times and compute costs.

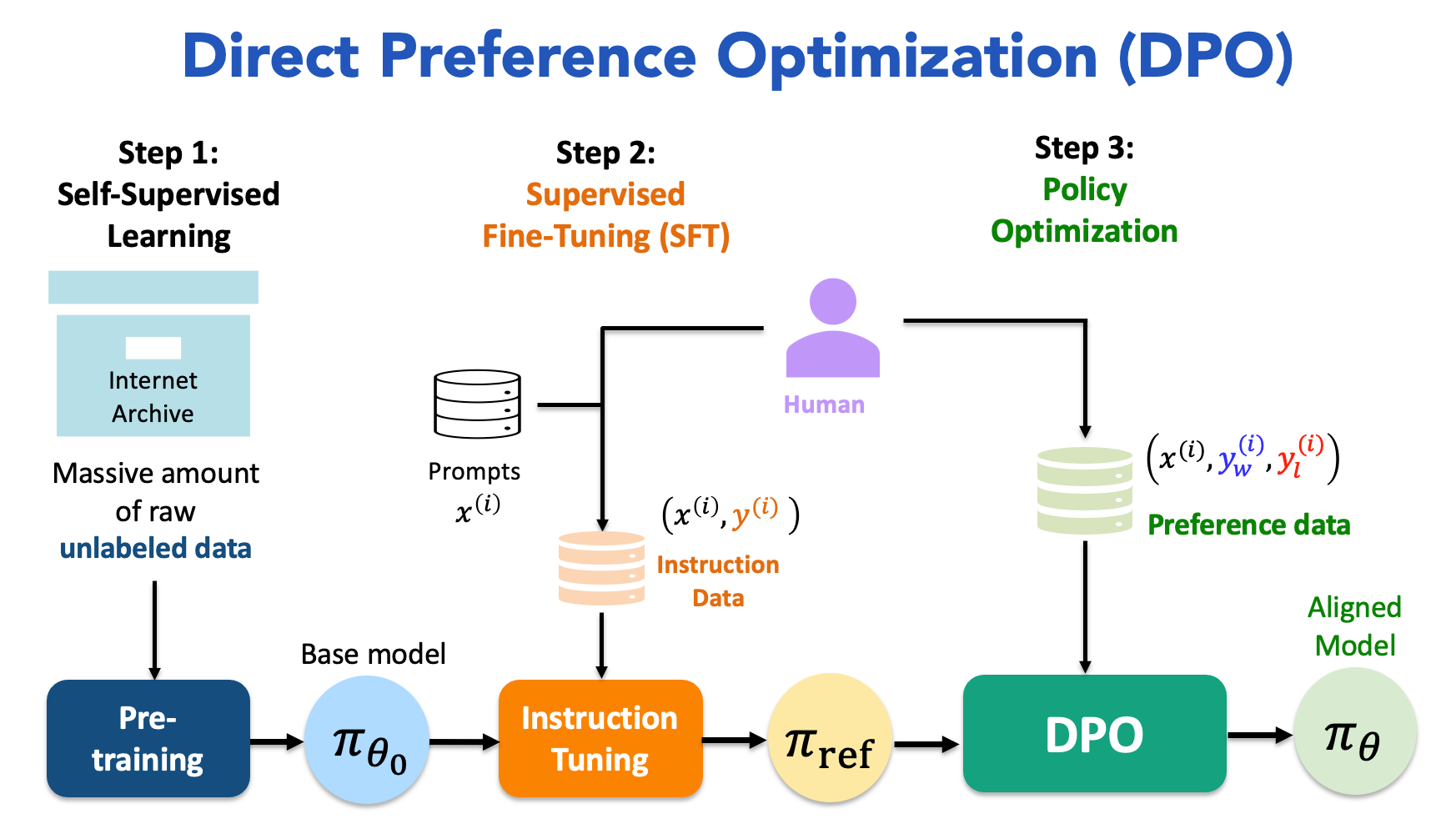

The authors present two models: DharmaOCR Full (7B parameters) and DharmaOCR Lite (3B parameters), both fine-tuned to transcribe document images into a strict JSON schema (with header, margin, footer, and text fields). The core methodological innovation is what the authors describe as the first application of Direct Preference Optimization (DPO) to OCR: degenerate text generations are used explicitly as "rejected" examples, so the model is trained to avoid such behavior. This is combined with Supervised Fine-Tuning (SFT) to enforce the JSON structure.
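To make the schema constraint concrete, here is a minimal sketch of how a downstream consumer might check a model response against the four fields named above. The function name `parse_ocr_output` is hypothetical, and treating every field as a plain string is an assumption; the article does not specify the field types used in the paper.

```python
import json

# Field names come from the article; treating each value as a string is an assumption.
REQUIRED_FIELDS = ("header", "margin", "footer", "text")


def parse_ocr_output(raw: str) -> dict:
    """Parse a model response and check it against the assumed four-field schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("top-level JSON value must be an object")

    # A strict schema rejects both missing and unexpected keys.
    missing = [f for f in REQUIRED_FIELDS if f not in data]
    extra = [k for k in data if k not in REQUIRED_FIELDS]
    if missing or extra:
        raise ValueError(f"schema mismatch: missing={missing}, unexpected={extra}")

    for field in REQUIRED_FIELDS:
        if not isinstance(data[field], str):
            raise ValueError(f"field {field!r} must be a string")
    return data
```

A caller would pass the raw model response to `parse_ocr_output` and treat a `ValueError` as a structural failure, separate from any transcription errors inside a well-formed response.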

Technical Details

The research makes three key contributions:

The DharmaOCR-Benchmark: A new evaluation suite covering printed, handwritten, and legal/administrative documents. It proposes a unified protocol that measures both fidelity (accuracy of text transcription) and structure (correct JSON formatting), while explicitly tracking degeneration rate and unit cost as first-class metrics.

A Novel Fine-Tuning Approach: The combination of SFT for structure and DPO for stability is central. The paper empirically shows that DPO, trained to penalize looping outputs, can reduce the degeneration rate by up to 87.6% relative to baselines, without sacrificing extraction quality.

State-of-the-Art Models: The resulting DharmaOCR models set a new state of the art on the authors' own benchmark. DharmaOCR Full achieves a 0.925 extraction score with a 0.40% degeneration rate, while the Lite version scores 0.911 with a 0.20% degeneration rate. The paper also reports that applying AWQ (Activation-aware Weight Quantization) can reduce per-page inference cost by up to 22% with negligible quality loss, which the authors present as a favorable quality-cost trade-off versus both open-source alternatives and proprietary OCR APIs.
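For readers unfamiliar with DPO, the standard objective from the original DPO formulation is reproduced below. Mapping it onto this setting is an interpretation of the article's description rather than a formula quoted from the paper: $x$ is the document image (plus prompt), $y_w$ is the preferred clean transcription, $y_l$ is the rejected degenerate generation, $\pi_{\mathrm{ref}}$ is the frozen reference model (presumably the SFT checkpoint), and $\beta$ is a temperature whose value is not given here.

$$
\mathcal{L}_{\mathrm{DPO}}(\pi_\theta; \pi_{\mathrm{ref}}) = -\,\mathbb{E}_{(x,\, y_w,\, y_l) \sim \mathcal{D}} \left[ \log \sigma\!\left( \beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)} - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)} \right) \right]
$$

Minimizing this loss raises the likelihood of the clean transcription relative to the looping one, measured against the reference model, which is why degenerate outputs are useful as the rejected side of each pair.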

The work underscores that in production, degeneration is not just an academic quality metric: abnormally long, wasteful generations directly increase latency, reduce throughput, and raise cloud bills.
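The sketch below shows one way degeneration could be flagged, counted as a rate, and turned into preference pairs for DPO. It is a minimal illustration under stated assumptions, not the paper's method: the repeated n-gram heuristic, its thresholds, and the function names are all hypothetical, and the `prompt`/`chosen`/`rejected` keys follow a common convention for preference datasets.

```python
from collections import Counter


def is_degenerate(text: str, n: int = 4, max_repeats: int = 5) -> bool:
    """Heuristic (an assumption, not the paper's criterion): flag text in which
    any word n-gram occurs more than max_repeats times."""
    words = text.split()
    if len(words) < n:
        return False
    ngrams = Counter(tuple(words[i:i + n]) for i in range(len(words) - n + 1))
    return max(ngrams.values()) > max_repeats


def degeneration_rate(outputs: list[str]) -> float:
    """Fraction of outputs flagged as degenerate (0.0 for an empty list)."""
    if not outputs:
        return 0.0
    return sum(is_degenerate(o) for o in outputs) / len(outputs)


def build_preference_pairs(samples):
    """Build DPO-style pairs from (prompt, reference, candidates) triples.

    The reference transcription is the "chosen" side; every candidate flagged
    as degenerate becomes a "rejected" example for the same prompt.
    """
    pairs = []
    for prompt, reference, candidates in samples:
        for candidate in candidates:
            if is_degenerate(candidate):
                pairs.append(
                    {"prompt": prompt, "chosen": reference, "rejected": candidate}
                )
    return pairs
```

In a real OCR setting the prompt would be a document image rather than a string, and a production detector would likely also cap output length, since runaway generations are what drive the latency and cost effects described above.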

Retail & Luxury Implications

While the paper's benchmark covers printed, handwritten, and legal/administrative documents rather than retail-specific ones, the technology has direct applications in retail and luxury. High-fidelity, structured OCR is a foundational capability for digitizing and automating back-office and customer-facing processes.

- Vendor & Supply Chain Documentation: Automating the extraction of structured data from invoices, bills of lading, quality certificates, and compliance documents from global partners. A model that reliably outputs JSON can feed directly into ERP and supply chain management systems without manual reformatting.

- Historical Archive & Heritage Digitization: Luxury houses possess vast archives of handwritten design sketches, ledgers, and client correspondence. A model that resists degeneration on handwritten text can accelerate digitization projects while preserving structural metadata.

- In-Store Operations & Clienteling: Processing structured information from handwritten client notes, physical inventory sheets, or consignment agreements. Reducing degeneration is critical here, as a single looping error could corrupt a client record or inventory count.

- Cost-Effective Scalability: The emphasis on small model size (3B/7B) and quantization aligns with the industry's need for deployable, cost-controlled AI. Running a high-accuracy OCR model on-premise or in a private cloud for sensitive documents becomes more feasible than relying on expensive, batch-oriented third-party APIs.

The key takeaway is that by sharply reducing degeneration, DharmaOCR-like models move OCR from a potentially unreliable preprocessing step toward a robust, pipeline-ready component for mission-critical document workflows.