What Happened

A new preprint, "Ensembles at Any Cost? Accuracy-Energy Trade-offs in Recommender Systems," published on arXiv, delivers a sobering empirical analysis of a common industry practice. The research systematically measures the trade-off between the marginal accuracy gains from ensemble methods and their substantial energy and environmental costs in recommender systems.

The authors conducted 93 controlled experiments across two distinct recommendation pipelines: explicit rating prediction (evaluated with RMSE, using the Surprise library) and implicit feedback ranking (evaluated with NDCG@10, using LensKit). They tested four ensemble strategies (Simple Averaging, Weighted Averaging, Stacking/Rank Fusion, and Top Performers) against optimized single-model baselines such as SVD++. The evaluation spanned four datasets of varying scale: MovieLens 100K, MovieLens 1M, ModCloth, and Anime, ranging from 100,000 to 7.8 million user-item interactions.
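To make three of the four strategies concrete, here is a minimal sketch for the rating-prediction case. It is not the preprint's code: the inverse-RMSE weighting, the choice of `k`, and all toy numbers are assumptions made for illustration, and Stacking/Rank Fusion is left out because it needs a trained meta-model or a ranking pipeline.

```python
import numpy as np

def simple_average(preds):
    """Unweighted mean over all base models (rows = models, columns = test items)."""
    return np.mean(preds, axis=0)

def weighted_average(preds, val_rmse):
    """Mean weighted by inverse validation RMSE (assumed scheme: lower error, higher weight)."""
    weights = 1.0 / np.asarray(val_rmse, dtype=float)
    weights /= weights.sum()
    return np.average(preds, axis=0, weights=weights)

def top_performers(preds, val_rmse, k=2):
    """Average only the k base models with the lowest validation RMSE."""
    best = np.argsort(val_rmse)[:k]
    return np.mean(np.asarray(preds)[best], axis=0)

def rmse(y_true, y_pred):
    """Root mean squared error between true and predicted ratings."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))

# Toy data: three hypothetical base models predicting four test ratings.
y_true = np.array([4.0, 3.0, 5.0, 2.0])
preds = np.array([
    [3.8, 3.2, 4.6, 2.3],
    [4.3, 2.7, 4.9, 1.8],
    [3.0, 3.9, 4.0, 3.0],
])
val_rmse = [0.30, 0.25, 0.90]  # hypothetical validation errors

for name, combined in [
    ("simple", simple_average(preds)),
    ("weighted", weighted_average(preds, val_rmse)),
    ("top-2", top_performers(preds, val_rmse, k=2)),
]:
    print(f"{name:9s} RMSE = {rmse(y_true, combined):.3f}")
```

Note that every strategy here still requires predictions from each participating base model, which is where the extra energy goes.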

Crucially, the study measured whole-system energy consumption in watt-hours using a smart plug (EMERS system) and converted this to CO2 equivalents, providing a tangible environmental metric often absent from pure performance benchmarks.
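The measurement chain described here can be sketched in a few lines. This is an illustration under stated assumptions, not the authors' tooling: it assumes the plug reports power in watts at a fixed sampling interval, and the grid carbon intensity below is a placeholder, since the factor used in the preprint is not given in this summary.

```python
def energy_wh(power_samples_w, interval_s):
    """Approximate energy in watt-hours from equally spaced power readings.

    Each reading is treated as constant over its interval (rectangle rule).
    """
    return sum(power_samples_w) * interval_s / 3600.0

def wh_to_mg_co2e(energy_in_wh, grid_intensity_g_per_kwh):
    """Convert watt-hours to milligrams of CO2 equivalent.

    g/kWh is numerically the same as mg/Wh, so one multiplication suffices.
    """
    return energy_in_wh * grid_intensity_g_per_kwh

# Hypothetical values for illustration only.
ASSUMED_INTENSITY_G_PER_KWH = 250.0   # placeholder grid carbon intensity
samples = [60.0, 62.0, 61.0, 59.0]    # watts, one reading per second

run_wh = energy_wh(samples, interval_s=1.0)
run_mg = wh_to_mg_co2e(run_wh, ASSUMED_INTENSITY_G_PER_KWH)
print(f"{run_wh:.4f} Wh, {run_mg:.2f} mg CO2e")
```

Because the plug sees the whole machine, readings taken this way include idle draw and everything else running on the system, not only the recommender.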

Technical Details & Findings

The results present a clear, and at times extreme, efficiency problem:

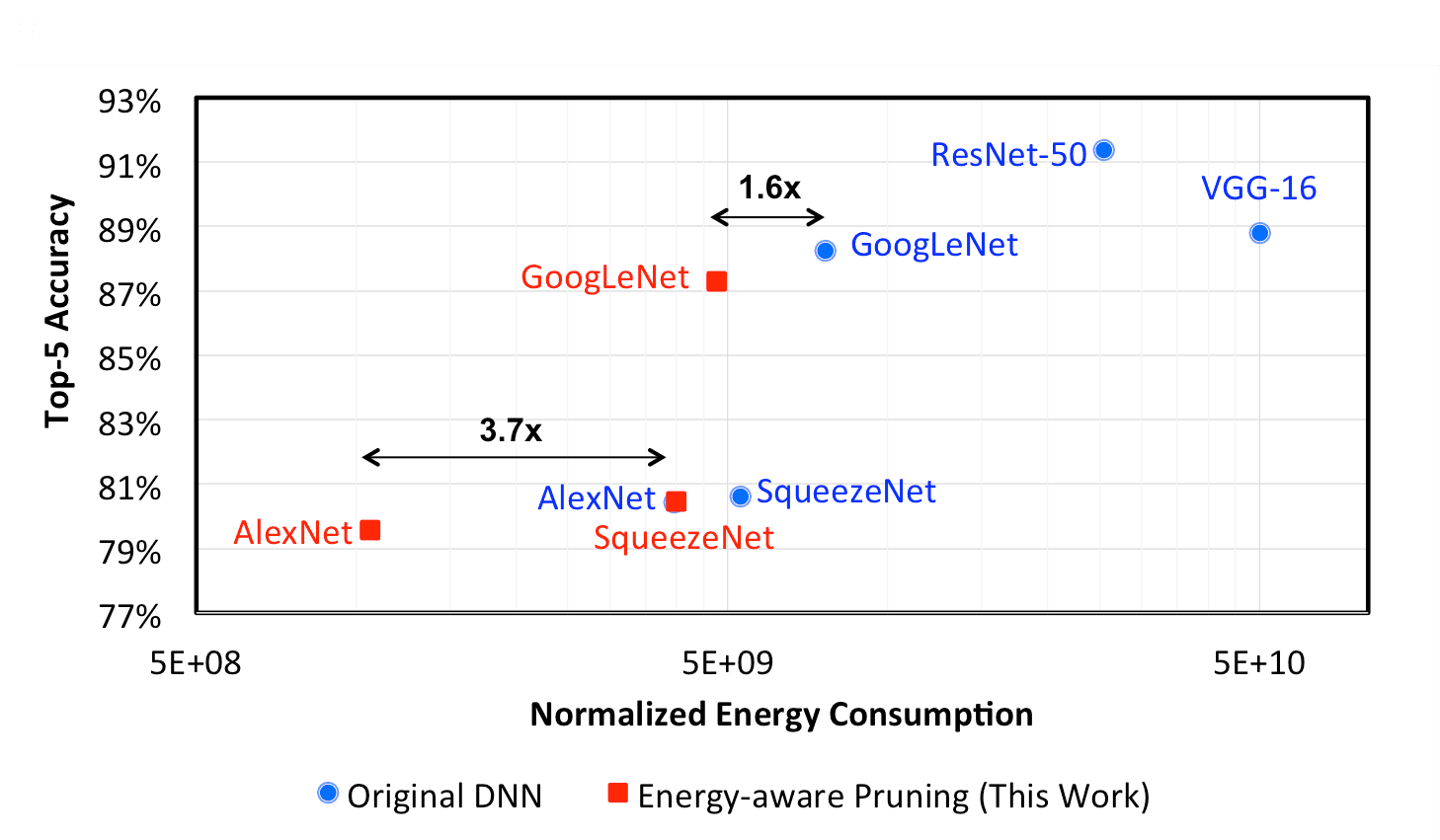

- Accuracy Gains Are Marginal: Across all experiments, ensembles improved accuracy by only 0.3% to 5.7%. On the MovieLens 1M dataset, a Top Performers ensemble improved RMSE by just 0.96% over a strong SVD++ baseline.

- Energy Costs Are Enormous: These minor accuracy gains came at an energy overhead ranging from 19% to a staggering 2,549%. The Anime dataset produced one of the most extreme cases: a Surprise Top Performers ensemble improved RMSE by 1.2% but consumed 2,005% more energy (0.21 Wh vs. 0.01 Wh, as rounded).

- Environmental Impact Quantified: That same Anime experiment increased CO2 emissions from 2.6 mg to 53.8 mg per recommendation task—a more than 20-fold increase for a 1.2% accuracy bump.

- Scalability Issues: On the Anime dataset, LensKit-based ensembles failed entirely due to memory limits, highlighting that computational resource constraints can make some ensemble approaches infeasible at scale.

- Selective Ensembles Are Key: The paper concludes that selective ensemble strategies (like choosing only top-performing models) are more energy-efficient than exhaustive approaches like simple averaging, but they still incur significant overhead.
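The Anime figures quoted above can be checked with simple arithmetic. The sketch below uses only the rounded values reported in this article; the function name is illustrative.

```python
def relative_change_pct(baseline, value):
    """Percentage change of value relative to baseline."""
    return (value - baseline) / baseline * 100.0

# Rounded figures for the Anime / Surprise Top Performers experiment.
baseline_wh, ensemble_wh = 0.01, 0.21
baseline_mg, ensemble_mg = 2.6, 53.8
rmse_gain_pct = 1.2

energy_overhead_pct = relative_change_pct(baseline_wh, ensemble_wh)
co2_fold = ensemble_mg / baseline_mg
overhead_per_accuracy_point = energy_overhead_pct / rmse_gain_pct

print(f"energy overhead: {energy_overhead_pct:.0f}%")
print(f"CO2 increase:    {co2_fold:.1f}x")
print(f"extra energy per 1% RMSE gain: {overhead_per_accuracy_point:.0f}%")
```

The rounded watt-hour values give an overhead of about 2,000%, slightly below the reported 2,005%, presumably because the reported percentage was computed from unrounded measurements. The CO2 ratio comes out at roughly 20.7, consistent with the "more than 20-fold" claim.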

The core takeaway is that the default industry pursuit of ensemble methods for incremental accuracy gains must be rigorously weighed against their operational and environmental costs.

Retail & Luxury Implications

For retail and luxury companies, where recommender systems are critical engines for personalized discovery, cross-selling, and customer retention, this research forces a strategic reckoning. Chasing "state-of-the-art" accuracy on a leaderboard can lose sight of what it takes to deploy sustainable, cost-effective systems at scale.

Sustainability & ESG Reporting: Luxury groups like LVMH, Kering, and Richemont have public-facing commitments to reduce environmental impact. Deploying energy-inefficient AI models works against these goals. The study's measurement approach, metered energy converted to CO2 equivalents, offers a way to quantify the carbon footprint of personalization features, turning an abstract concern into a measurable KPI alongside click-through rate (CTR) and conversion.

Total Cost of Ownership (TCO) for AI: The cloud compute costs for serving ensembles, which require multiple model inferences per user request, can be prohibitive. An energy overhead on the order of 2,000%, as seen in the study's Anime experiment, would likely be mirrored by a large increase in infrastructure cost, although the study measured energy rather than cloud spend. For global e-commerce platforms serving millions of daily users, the difference between a single model and an ensemble could plausibly mean millions of dollars annually in additional compute spend for a fractional conversion lift.

Practical Deployment Strategy: The research suggests a more nuanced approach:

- A/B Test the Business Impact: Before deploying any ensemble, rigorously A/B test whether the tiny accuracy gain (e.g., 0.96% better RMSE) translates to a statistically significant improvement in business metrics like add-to-cart rate, average order value, or return customer rate. A gain this small in an offline metric may not show up in online behavior at all.

- Implement Cost-Aware Optimization: Move beyond optimizing solely for accuracy. Adopt multi-objective optimization that includes inference latency, compute cost, and energy consumption as explicit constraints during model development and selection.

- Favor Efficient Architectures: Invest in making a single, well-tuned model (e.g., a large matrix factorization or deep learning model) as robust as possible, rather than maintaining a pipeline of multiple models. Explore knowledge distillation techniques to compress an ensemble's knowledge into a single, efficient model.
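As a rough illustration of cost-aware model selection, the sketch below treats accuracy and energy as two objectives and shows two simple selection rules: keeping only non-dominated candidates, and picking the most accurate candidate under an energy budget. The class, function names, and all numbers are hypothetical and are not results from the preprint.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    name: str
    rmse: float        # lower is better
    energy_wh: float   # lower is better

def pareto_front(candidates):
    """Keep candidates that no other candidate matches or beats on both objectives."""
    front = []
    for c in candidates:
        dominated = any(
            o.rmse <= c.rmse and o.energy_wh <= c.energy_wh
            and (o.rmse < c.rmse or o.energy_wh < c.energy_wh)
            for o in candidates
        )
        if not dominated:
            front.append(c)
    return front

def best_within_budget(candidates, max_energy_wh):
    """Most accurate candidate whose energy use fits the budget, or None."""
    feasible = [c for c in candidates if c.energy_wh <= max_energy_wh]
    return min(feasible, key=lambda c: c.rmse) if feasible else None

# Hypothetical candidates for illustration only.
candidates = [
    Candidate("single_model", rmse=0.870, energy_wh=0.010),
    Candidate("top_performers", rmse=0.860, energy_wh=0.210),
    Candidate("simple_average", rmse=0.865, energy_wh=0.400),
]

print([c.name for c in pareto_front(candidates)])
print(best_within_budget(candidates, max_energy_wh=0.05))
```

In practice the same pattern extends to inference latency and compute cost by adding fields to `Candidate` and conditions to the dominance check.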

Hybrid & Context-Aware Systems: Consider tiered or context-aware systems. Use a lightweight, efficient model for all user interactions, and trigger a more complex (and costly) ensemble model only for high-value scenarios, such as a VIP customer's session or when the confidence of the primary model is low.
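A tiered setup of this kind might look like the following sketch. The model functions, the VIP check, and the confidence threshold are all stand-ins invented for illustration; a real system would plug in its own models and a calibrated confidence score.

```python
def tiered_recommend(user_id, light_model, heavy_model, is_high_value, min_confidence=0.6):
    """Route a request to the light model unless the session is high value
    or the light model's confidence falls below the threshold."""
    if is_high_value(user_id):
        return heavy_model(user_id), "heavy"
    items, confidence = light_model(user_id)
    if confidence < min_confidence:
        return heavy_model(user_id), "heavy"
    return items, "light"

# Stand-in components (hypothetical, for illustration only).
def light_model(user_id):
    confidence = 0.9 if user_id % 2 == 0 else 0.4
    return ["item_a", "item_b"], confidence

def heavy_model(user_id):
    return ["item_c", "item_a"]

def is_high_value(user_id):
    return user_id == 8  # hypothetical VIP flag

for uid in (2, 3, 8):
    items, tier = tiered_recommend(uid, light_model, heavy_model, is_high_value)
    print(uid, tier, items)
```

Logging which tier served each request makes it possible to track what share of traffic pays the ensemble's energy cost.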

The study makes a strong case that the era of blindly stacking models for leaderboard points should end. For retail AI leaders, the new imperative is to build recommendation systems that are not only accurate but also efficient, scalable, and aligned with broader corporate sustainability objectives.