A new preprint, "Benchmark Shadows: Data Alignment, Parameter Footprints, and Generalization in Large Language Models," provides a controlled, empirical dissection of a growing industry concern: the disconnect between soaring benchmark scores and underwhelming real-world performance. The research, posted to arXiv on April 1, 2026, isolates data distribution as the primary culprit, demonstrating that models trained on benchmark-aligned data develop fundamentally different—and inferior—internal structures compared to those trained on more diverse, coverage-expanding data.

The findings challenge the core incentive structure of modern LLM development, where leaderboard position often dictates commercial and research priorities. The paper introduces novel parameter-space diagnostics that can detect these "benchmark shadows"—the spectral and rank signatures of overtrained, narrow models—offering a potential tool for more honest model evaluation.

Key Takeaways

- A controlled study finds that data distribution, not just volume, dictates LLM capability.

- Benchmark-aligned training inflates scores but creates narrow, brittle models, while coverage-expanding data leads to more distributed parameter adaptation and better generalization.

What the Researchers Built: A Controlled Data Experiment

The core of the study is a series of controlled interventions. Instead of comparing different models or training runs with countless variables, the researchers held the model architecture, training compute, and total data volume constant. They then manipulated only the distribution of the training data.

They created two primary data regimes:

- Benchmark-Aligned (BA) Regime: Training data is heavily weighted or curated to resemble the style, format, and content of popular evaluation benchmarks (e.g., MMLU, HellaSwag, GSM8K).

- Coverage-Expanding (CE) Regime: Training data is designed to maximize topic and stylistic diversity, even if it superficially differs from benchmark tasks.

By fixing all other variables, the study cleanly attributes any differences in model behavior and internal structure to the data distribution alone.
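The design can be pictured as two sampling mixtures drawn under the same example budget. The sketch below is only an illustration of that idea: the source names, mixture weights, budget, and the `sample_sources` helper are all hypothetical, since the article does not give the paper's actual data sources or proportions.

```python
import random
from collections import Counter

# Hypothetical source names and weights (assumptions for illustration only).
BA_MIX = {"benchmark_style_qa": 0.70, "web_text": 0.20, "code": 0.05, "dialogue": 0.05}
CE_MIX = {"benchmark_style_qa": 0.10, "web_text": 0.30, "code": 0.30, "dialogue": 0.30}


def sample_sources(mix, n_examples, seed=0):
    """Draw n_examples source labels according to the mixture weights."""
    rng = random.Random(seed)
    names = list(mix)
    weights = [mix[name] for name in names]
    return Counter(rng.choices(names, weights=weights, k=n_examples))


# The budget is identical for both regimes: only the distribution changes.
BUDGET = 10_000

ba_counts = sample_sources(BA_MIX, BUDGET)
ce_counts = sample_sources(CE_MIX, BUDGET)

print("BA regime:", dict(ba_counts))
print("CE regime:", dict(ce_counts))
```

Both regimes see the same number of examples, so any downstream difference can be traced to the mixture rather than to data volume.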

Key Results: The Generalization Gap

The results reveal a stark trade-off, quantified through both performance metrics and novel structural analyses.

As expected, BA-trained models excelled on the benchmarks they were aligned with. However, their performance collapsed on novel, out-of-distribution tasks designed to test reasoning, composition, and factual recall in unfamiliar formats. CE-trained models showed more robust, generalized capability, maintaining strong performance across both benchmark and novel evaluations.

The critical insight is that benchmark performance alone is a misleading indicator of true capability. A model can achieve a state-of-the-art score by becoming a narrow expert on the benchmark's "shadow," rather than developing broadly useful representations.
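One simple way to make this trade-off concrete is to report the difference between average benchmark accuracy and average out-of-distribution accuracy. The snippet below is a minimal sketch of that bookkeeping. The `generalization_gap` function and the task names are hypothetical, and the scores are made-up placeholders, not results from the paper.

```python
def generalization_gap(benchmark_scores, ood_scores):
    """Mean benchmark accuracy minus mean out-of-distribution accuracy."""
    bench = sum(benchmark_scores.values()) / len(benchmark_scores)
    ood = sum(ood_scores.values()) / len(ood_scores)
    return bench - ood


# Placeholder numbers for illustration only (NOT taken from the paper).
ba_gap = generalization_gap(
    {"bench_a": 0.90, "bench_b": 0.80},
    {"novel_a": 0.40, "novel_b": 0.50},
)
ce_gap = generalization_gap(
    {"bench_a": 0.80, "bench_b": 0.70},
    {"novel_a": 0.70, "novel_b": 0.60},
)

print(f"BA gap: {ba_gap:.2f}, CE gap: {ce_gap:.2f}")
```

A large positive gap flags a model whose benchmark score overstates its broader capability, which is the pattern the article attributes to the BA regime.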

How It Works: Spectral Signatures in Parameter Space

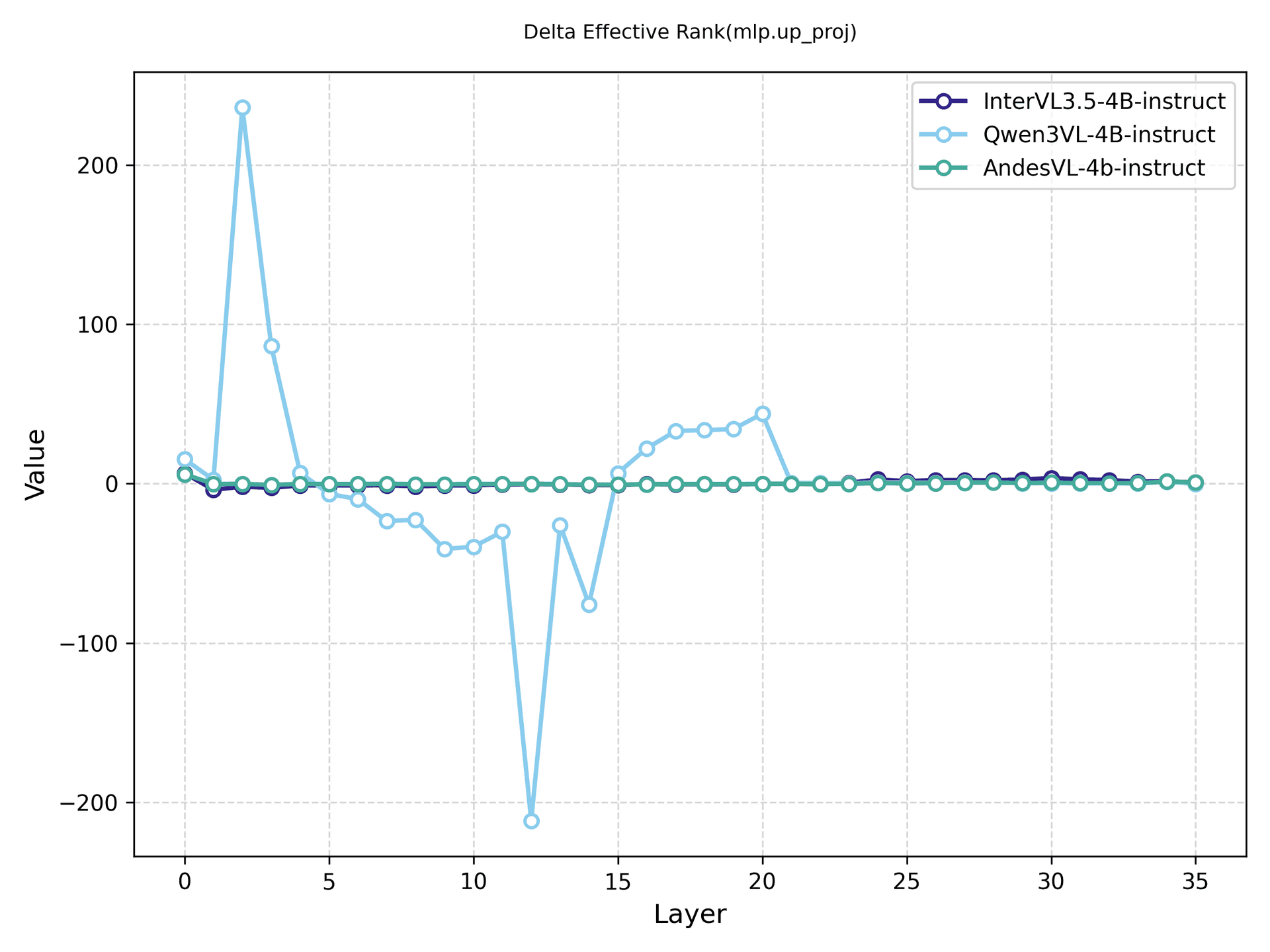

The paper's technical contribution is a method to diagnose this problem without needing a battery of new benchmarks. The researchers analyzed the models' parameter matrices (e.g., within attention and feed-forward layers) using spectral (singular-value) and rank analysis.

- BA Models exhibited parameter matrices with a few dominant, large-magnitude singular values. This indicates a concentrated adaptation with low effective rank, where a small number of directions in parameter space become hyper-specialized for the benchmark tasks. The model is effectively "memorizing a shortcut."

- CE Models showed parameter matrices with a flatter, more distributed spectrum of singular values. This higher-effective-rank structure suggests broader, more balanced learning across the network, correlating with the ability to recombine knowledge flexibly for novel tasks.

These "parameter footprints" are distinct structural signatures of the training regime. The study confirmed these patterns hold across diverse open-source model families and extended the finding to multimodal models (vision-language), suggesting the phenomenon is fundamental to large-scale pretraining.
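As a rough illustration of what such a diagnostic could look like, the NumPy sketch below summarizes a matrix's singular-value spectrum with two numbers: the share of spectral energy held by the top few singular values, and an entropy-based effective rank. The function name `spectral_footprint`, the `top_k` parameter, the choice of metrics, and the synthetic matrices are all assumptions made for illustration. The article does not specify the paper's exact metrics, or whether they are computed on raw weights or on weight updates.

```python
import numpy as np


def spectral_footprint(weight, top_k=5):
    """Summarize how concentrated a matrix's singular-value spectrum is."""
    # Singular values only, returned in descending order.
    s = np.linalg.svd(weight, compute_uv=False)
    energy = s**2 / np.sum(s**2)
    # Entropy-based effective rank: exp of the Shannon entropy of the
    # normalized singular values (an assumed metric, not the paper's).
    p = s / np.sum(s)
    p = p[p > 0]
    effective_rank = float(np.exp(-np.sum(p * np.log(p))))
    return {
        "top_k_energy": float(np.sum(energy[:top_k])),
        "effective_rank": effective_rank,
    }


# Synthetic stand-ins for real weight matrices (hypothetical data).
rng = np.random.default_rng(0)
d = 64
u, _ = np.linalg.qr(rng.standard_normal((d, d)))
v, _ = np.linalg.qr(rng.standard_normal((d, d)))

# "Concentrated": three large singular values, the rest tiny.
concentrated_spectrum = np.concatenate([np.full(3, 10.0), np.full(d - 3, 0.1)])
concentrated = u @ np.diag(concentrated_spectrum) @ v.T

# "Distributed": a completely flat spectrum.
distributed = u @ np.diag(np.ones(d)) @ v.T

ba_like = spectral_footprint(concentrated)
ce_like = spectral_footprint(distributed)
print("concentrated:", ba_like)
print("distributed: ", ce_like)
```

On these toy matrices the concentrated spectrum yields a high top-k energy share and a small effective rank, while the flat spectrum yields the opposite. In practice one would run a summary like this per layer and compare distributions across checkpoints rather than rely on a single matrix.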

A revealing case study on "prompt repetition"—a common data artifact—showed that not all data quirks induce this regime shift. Simple repetition led to overfitting but did not produce the same concentrated spectral signature as deliberate benchmark alignment, indicating that content and task distribution, not just artifacts, drive the effect.

Why It Matters: A Crisis of Evaluation

This research provides a formal, mechanistic explanation for the anecdotal experiences of many practitioners: a model that aces the benchmarks can feel dumber in production. It validates concerns about benchmark overfitting and data contamination, moving them from speculation to measurable phenomena.

For companies building and evaluating LLMs, the implications are direct:

- Leaderboard chasing is actively harmful if it incentivizes curating training data to match benchmark distributions.

- Model evaluation must expand beyond static benchmarks to include dynamic, out-of-distribution, and real-world task suites.

- The proposed spectral diagnostics could become a standard part of model auditing, providing a "readout" of how narrowly a model was trained.

The study arrives amid a week of intense activity on arXiv, which drew 16 mentions in our coverage, highlighting the repository's role as the central nervous system for disseminating critical AI research. It also intersects with a major trend in our reporting: the evolution of Retrieval-Augmented Generation (RAG), which appeared in 8 articles this week. The research helps explain why RAG remains valuable: if base models are prone to becoming narrow benchmark experts, external knowledge retrieval helps ground them in broader, real-world contexts.

gentic.news Analysis

This paper formalizes a suspicion that has been circulating at the engineering level for over a year. It connects directly to the MIT & Anthropic benchmark released on April 4, 2026, which revealed systematic limitations in AI coding assistants. That work showed models failing on practical coding tasks despite high benchmark scores; "Benchmark Shadows" provides the underlying why: their training data was likely aligned to coding benchmarks (like HumanEval) rather than covering the messy diversity of real software development.

The findings also critically inform the ongoing debate about the "RAG era," referenced in our April 3 coverage where Ethan Mollick discussed its potential decline as the dominant agent paradigm. If base models are inherently limited by benchmark-optimized training, then RAG or similar knowledge-augmentation techniques are not just a nice-to-have—they are a mandatory corrective. This research suggests the path forward isn't abandoning RAG, but building it with the understanding that the LLM it queries is likely a narrow expert that must be carefully guided.

For practitioners, the immediate takeaway is to be deeply skeptical of benchmark claims. When evaluating a model, ask for its performance on your data and tasks, not just MMLU. The spectral analysis techniques proposed, if adopted by the community, could become a powerful tool for due diligence, much like loss curves or attention maps are today.

Frequently Asked Questions

What is a "benchmark shadow" in LLMs?

A "benchmark shadow" refers to the phenomenon where a large language model achieves high scores on standard evaluations by essentially learning the specific format, style, and content distribution of those benchmarks, rather than developing general reasoning capabilities. The model performs well in the narrow "shadow" of the benchmark but fails to generalize to real-world, out-of-distribution tasks.

How can you tell if an LLM is overtrained on benchmark data?

The research proposes analyzing the model's internal parameter matrices using spectral (singular-value) and rank analysis. Models overtrained on benchmark-aligned data show parameter matrices with a few dominant, large singular values: a concentrated structure with low effective rank. In contrast, models trained on diverse data show a flatter, more distributed spectrum of singular values, indicating broader learning.

Does this mean benchmarks like MMLU or GSM8K are useless?

Not useless, but insufficient. Benchmarks provide a standardized, scalable way to track progress and compare models. However, this study indicates they cannot be the sole measure of capability. A comprehensive evaluation should also include performance on novel, out-of-distribution tasks and potentially the structural diagnostics described in the paper to guard against overfitting.

What should companies do to train more generalizable LLMs?

The primary recommendation is to prioritize data diversity and coverage over benchmark alignment. Training datasets should be designed to expose the model to the widest possible range of topics, writing styles, reasoning formats, and factual domains, even if that data doesn't directly resemble common benchmark questions. Avoiding the curation of data purely to boost specific benchmark scores is critical.