What Happened

A new research paper, "Learning Evolving Preferences: A Federated Continual Framework for User-Centric Recommendation," was posted on arXiv. The work introduces a novel AI framework named FCUCR (Federated Continual User-Centric Recommendation). Its primary goal is to solve two persistent problems in modern recommendation systems:

- Temporal Forgetting: Standard models, when updated with new user data, tend to "forget" a user's long-term historical preferences, over-indexing on recent behavior.

- Weakened Collaboration in Federated Settings: Federated Learning (FL) is a privacy-preserving technique where model training occurs on users' devices, and only model updates are shared. However, this decentralization can isolate users, preventing the system from leveraging patterns from similar users to improve personalization—a key strength of centralized systems.

FCUCR is designed to enable long-term, adaptive personalization while strictly adhering to privacy constraints by keeping raw user data on-device.

Technical Details

The FCUCR framework combines concepts from Federated Learning (FL) and Continual Learning (CL) to create a robust, privacy-first recommendation system.

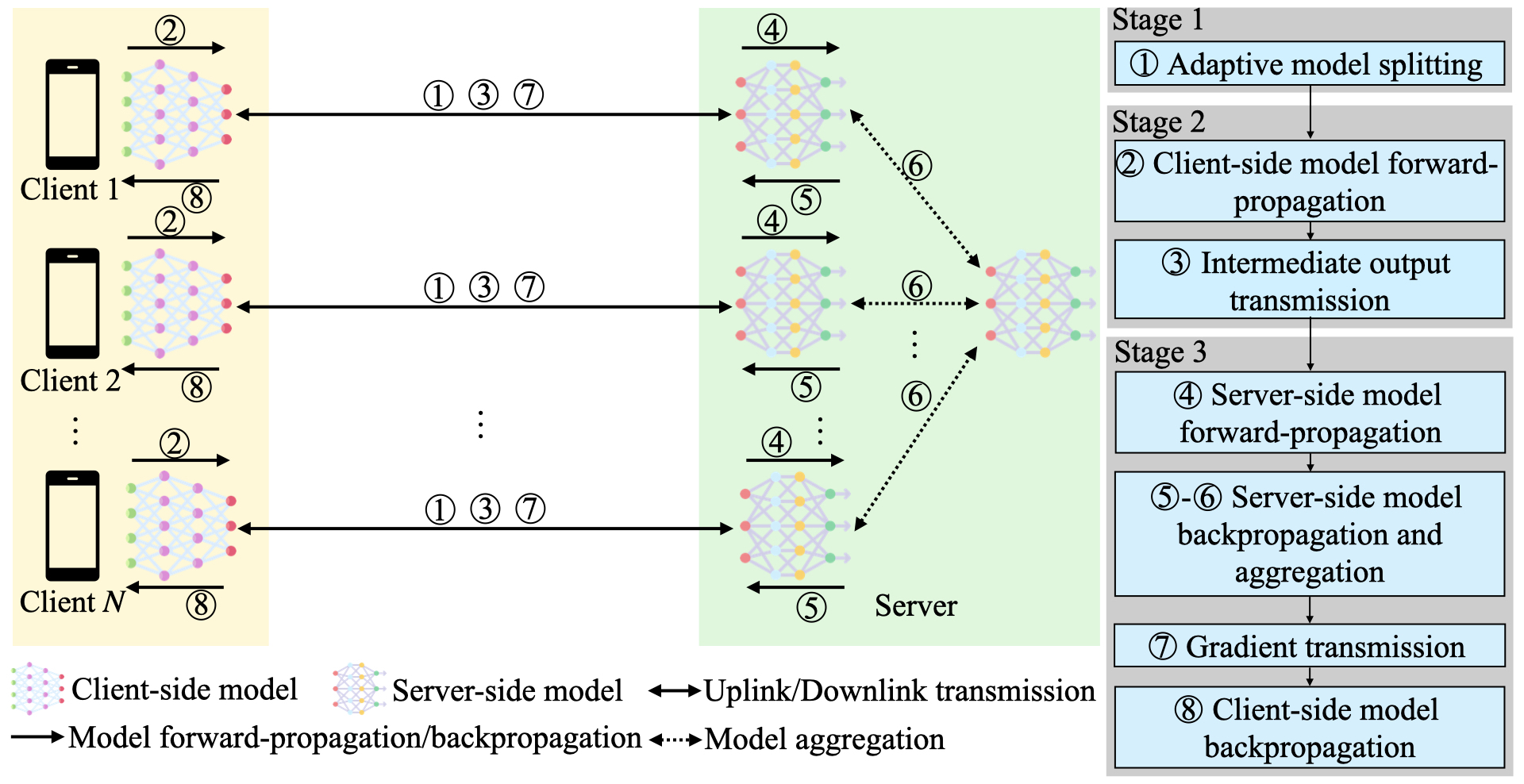

Federated Continual Learning Base: The system operates in a standard FL setup. A global recommendation model is distributed to client devices (e.g., user phones or apps). Each client trains the model locally on its own private interaction data (clicks, purchases, dwell time). Only the updated model parameters, not the raw data, are sent back to a central server for aggregation into an improved global model. This cycle repeats continually over time.
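The aggregation step in this cycle can be illustrated with a small sketch. The article does not say which aggregation rule FCUCR uses, so the code below assumes a FedAvg-style weighted average, where each client's parameters count in proportion to its number of local interactions. The names `federated_average`, `client_weights`, and `client_sizes` are hypothetical and chosen only for this example.

```python
from typing import Dict, List

# A model is represented here as a mapping from parameter name to a flat list of floats.
Weights = Dict[str, List[float]]


def federated_average(client_weights: List[Weights], client_sizes: List[int]) -> Weights:
    """Average client parameters, weighting each client by its local example count.

    Assumption: FedAvg-style aggregation. The source only says updates are
    "aggregated" on the server and does not name the rule.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one size per client and at least one client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total number of local examples must be positive")

    averaged: Weights = {}
    for name, reference in client_weights[0].items():
        averaged[name] = [
            sum(w[name][i] * n for w, n in zip(client_weights, client_sizes)) / total
            for i in range(len(reference))
        ]
    return averaged


# Two clients report parameters; the second saw three times as much data.
clients = [{"item_embedding": [1.0, 2.0]}, {"item_embedding": [3.0, 4.0]}]
global_model = federated_average(clients, client_sizes=[1, 3])
```

Only the parameter lists cross the network in this sketch; the interaction logs that produced them stay on each client.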

Time-Aware Self-Distillation (TASD): This is the core innovation to combat "temporal forgetting." During local training on a client device, the framework applies knowledge distillation to the model itself. It treats the model's previous version (which encapsulates the user's long-term preferences) as a "teacher" and the current model being updated as a "student." The system adds a training objective that encourages the new model to retain the predictive knowledge of the old one, implicitly preserving historical preference patterns while learning from new interactions. This happens entirely on the user's device.
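A minimal sketch of such a teacher-student objective follows. It shows generic self-distillation only: a standard cross-entropy term on the new interaction plus a KL-divergence term that pulls the student's item scores toward the teacher's. How FCUCR makes this "time-aware" is not described in the article, so no time weighting is modeled here, and `alpha`, `temperature`, and all function names are assumptions made for illustration.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over item scores."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(student_logits: List[float], target_index: int) -> float:
    """Loss on the newly observed interaction (the item the user actually chose)."""
    return -math.log(softmax(student_logits)[target_index])


def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q); q is clamped to avoid division by zero."""
    return sum(pi * math.log(pi / max(qi, 1e-12)) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(
    student_logits: List[float],
    teacher_logits: List[float],
    target_index: int,
    alpha: float = 0.5,        # hypothetical weight on the retention term
    temperature: float = 2.0,  # hypothetical softening temperature
) -> float:
    """New-data loss plus a penalty for drifting away from the previous model."""
    task = cross_entropy(student_logits, target_index)
    teacher_probs = softmax(teacher_logits, temperature)
    student_probs = softmax(student_logits, temperature)
    return task + alpha * kl_divergence(teacher_probs, student_probs)


# The previous model favoured item 0; the user just interacted with item 2.
old_scores = [2.0, 0.5, 0.1]
new_scores = [0.3, 0.4, 2.5]
loss = distillation_loss(new_scores, old_scores, target_index=2)
```

The retention term is zero when the student reproduces the teacher's scores exactly and grows as the updated model drifts from the earlier one, which is the trade-off between new and historical preferences that the paragraph above describes.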

Inter-User Prototype Transfer Mechanism: This addresses the collaboration problem in FL. The server analyzes the aggregated model updates to identify clusters of users with similar behavioral prototypes (e.g., "luxury handbag enthusiasts," "sustainable apparel shoppers"). It then carefully transfers abstracted, anonymized "prototype" knowledge—not individual data—back to relevant users. This enriches each user's local model with broader, collaborative insights without compromising individual privacy or decision logic.
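The idea of grouping users around shared prototypes and feeding that knowledge back can be sketched as follows. The article does not specify how FCUCR forms clusters or what a prototype contains, so this example assumes each user is summarized by a small vector, uses nearest-prototype assignment by cosine similarity as a stand-in for the clustering step, and blends the prototype into the local vector with a hypothetical `weight`. All names here are illustrative.

```python
import math
from typing import Dict, List

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def assign_clusters(user_vectors: Dict[str, Vector], prototypes: List[Vector]) -> Dict[str, int]:
    """Map each user to the index of the most similar prototype."""
    return {
        user: max(range(len(prototypes)), key=lambda k: cosine(vec, prototypes[k]))
        for user, vec in user_vectors.items()
    }


def update_prototypes(
    user_vectors: Dict[str, Vector], assignment: Dict[str, int], prototypes: List[Vector]
) -> List[Vector]:
    """Recompute each prototype as the mean of its members; keep empty ones unchanged."""
    updated = []
    for k, old in enumerate(prototypes):
        members = [user_vectors[u] for u, c in assignment.items() if c == k]
        if not members:
            updated.append(list(old))
            continue
        updated.append([sum(m[i] for m in members) / len(members) for i in range(len(old))])
    return updated


def blend(local: Vector, prototype: Vector, weight: float = 0.2) -> Vector:
    """Mix a cluster prototype into a user's local representation."""
    return [(1 - weight) * l + weight * p for l, p in zip(local, prototype)]


users = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0]}
seeds = [[1.0, 0.0], [0.0, 1.0]]
assignment = assign_clusters(users, seeds)
prototypes = update_prototypes(users, assignment, seeds)
enriched_a = blend(users["a"], prototypes[assignment["a"]])
```

Each user receives only an averaged prototype for its cluster, never another user's vector, which mirrors the "abstracted, anonymized" transfer described above.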

The paper reports "extensive experiments on four public benchmarks" in which FCUCR achieves higher recommendation accuracy than existing federated and continual learning baselines. The authors also emphasize the framework's strong compatibility and practical applicability.

Retail & Luxury Implications

The FCUCR research directly targets the operational and strategic challenges faced by luxury and retail brands in the age of privacy-first, hyper-personalized commerce.

1. The Privacy-Personalization Paradox: High-net-worth clients demand exquisite personalization but are increasingly sensitive about data privacy. Centralized data collection for modeling is becoming legally risky (GDPR, CCPA) and brand-damaging. FCUCR's federated approach offers a technically sound path forward. A brand's app could learn a client's evolving taste, from a newfound interest in vintage watches to a shift towards ready-to-wear, without ever exporting the client's detailed interaction history from the device.

2. Modeling Evolving Taste & Loyalty: Luxury relationships are long-term. A client's journey might span decades, moving from entry-point accessories to haute couture. Traditional models that "forget" a client's foundational preferences (e.g., their enduring love for a specific artisan or color palette) in favor of recent clicks create a brittle, ahistorical understanding. FCUCR's time-aware self-distillation is explicitly designed to maintain that long-term preference memory, allowing the brand's AI to recognize the continuity in a client's evolving style.

3. Collaborative Curation Without Data Pooling: In a centralized world, discovering that clients who buy Brand A's perfume also respond well to Brand B's scarves is straightforward when both brands sit under the same conglomerate. Federated learning typically breaks this cross-brand insight. The inter-user prototype transfer mechanism is a clever workaround. It would allow LVMH, for example, to identify a cluster of "avant-garde minimalist" clients across its portfolio of brands (Celine, Loewe, etc.) so that each brand's app can better serve those clients with relevant products, all while keeping each brand's and each user's data separate.

Potential Use Case Scenario: A luxury fashion house deploys FCUCR within its mobile app. A long-time client, Anna, begins exploring sustainable materials in her recent browsing. The local model on her phone learns this new interest. Through TASD, it retains knowledge of her classic preference for tailored silhouettes. Simultaneously, the server identifies Anna's updated prototype as part of a "sustainable-luxury" cluster and transfers relevant knowledge, perhaps helping her local model better recommend a new line of eco-conscious cashmere. Anna receives highly personalized, relevant recommendations that reflect her full history and emerging values, and the brand never possesses a centralized log of her specific browsing sessions.