The Innovation — What the Source Reports

A new research paper, "Multi-Agent Reinforcement Learning for Dynamic Pricing: Balancing Profitability, Stability and Fairness," presents a systematic empirical evaluation of multi-agent reinforcement learning methods for optimizing prices in competitive retail markets. The core challenge is that dynamic pricing must adapt not only to fluctuating consumer demand but also to the strategic price changes of competitors, which makes it a classic multi-agent problem.

The researchers constructed a simulated marketplace environment using real-world retail data to serve as a testing ground. They benchmarked two state-of-the-art Multi-Agent Reinforcement Learning (MARL) algorithms against a common baseline:

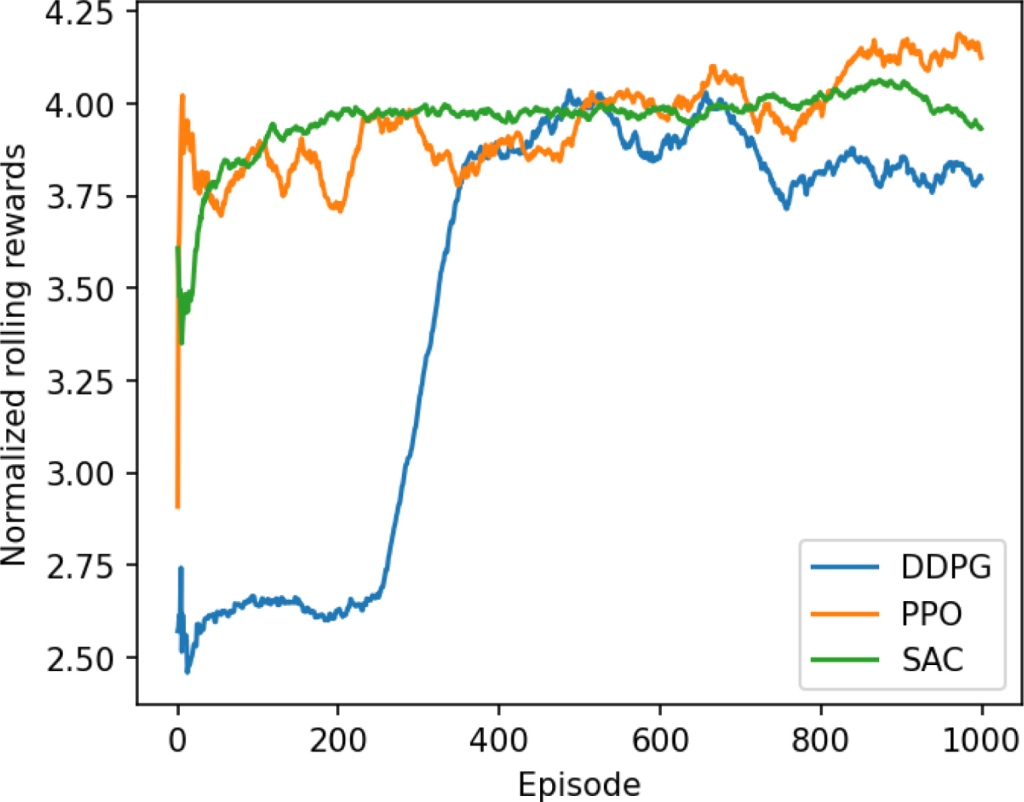

- MAPPO (Multi-Agent Proximal Policy Optimization): A centralized-training-with-decentralized-execution method known for its stability.

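- MADDPG (Multi-Agent Deep Deterministic Policy Gradient): Another popular MARL algorithm, in which each agent's critic is trained centrally with access to all agents' observations and actions, while each actor acts on its own local observation.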

- Baseline: Independent DDPG (IDDPG): A common approach where each agent learns independently, ignoring the interactive nature of the environment.
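The practical difference between the three methods is largely what each critic or value function is allowed to see during training. The snippet below is a minimal sketch of those inputs only, not the paper's implementation; the dimensions and function names are hypothetical, and the MAPPO value input is simplified to a concatenation of all observations.

```python
import numpy as np

def independent_critic_input(obs, actions, i):
    """IDDPG-style: agent i's critic sees only its own observation and action."""
    return np.concatenate([obs[i], actions[i]])

def maddpg_critic_input(obs, actions):
    """MADDPG-style: a centralized critic sees every agent's observation and action."""
    return np.concatenate([*obs, *actions])

def mappo_value_input(obs):
    """MAPPO-style: a centralized value function sees a joint view (here, all observations)."""
    return np.concatenate(obs)

# Hypothetical sizes: 3 sellers, 4 observation features each, 1 action (the price).
n_agents, obs_dim, act_dim = 3, 4, 1
rng = np.random.default_rng(0)
obs = [rng.normal(size=obs_dim) for _ in range(n_agents)]
actions = [rng.uniform(size=act_dim) for _ in range(n_agents)]

print(independent_critic_input(obs, actions, 0).shape)  # (5,)
print(maddpg_critic_input(obs, actions).shape)          # (15,)
print(mappo_value_input(obs).shape)                     # (12,)
```

In all three cases the policy that sets prices at execution time would still act on local information; only the training signal differs.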

The evaluation went beyond simple profit maximization to assess four critical dimensions:

- Profit Performance: Total reward achieved.

- Stability: Variance in performance across different random seeds (crucial for reproducibility).

- Fairness: The distribution of profits among competing agents in the simulation.

- Training Efficiency: How quickly the algorithms learn.
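The four dimensions above can be computed from per-seed results along the following lines. This is a sketch under stated assumptions: the summary does not say which fairness or efficiency measure the paper uses, so Jain's fairness index and "episodes to reach a return threshold" are stand-ins, and the function name `evaluate` is hypothetical.

```python
import numpy as np

def evaluate(returns, learning_curve, threshold):
    """
    returns: shape (n_seeds, n_agents), non-negative per-agent profit in each seed
             (at least two seeds are needed for the sample standard deviation).
    learning_curve: shape (n_episodes,), total return per training episode.
    threshold: return level that counts as "learned" (an assumption).
    """
    returns = np.asarray(returns, dtype=float)
    total_per_seed = returns.sum(axis=1)

    profit = total_per_seed.mean()          # profit performance
    stability = total_per_seed.std(ddof=1)  # spread across random seeds

    # Jain's index (assumed metric): 1.0 means equal profits, 1/n means one agent takes all.
    mean_agent = returns.mean(axis=0)
    fairness = mean_agent.sum() ** 2 / (mean_agent.size * (mean_agent ** 2).sum())

    # First episode (1-based) whose return reaches the threshold, or None if never reached.
    hits = np.nonzero(np.asarray(learning_curve) >= threshold)[0]
    episodes_to_threshold = int(hits[0]) + 1 if hits.size else None

    return {
        "profit": profit,
        "stability_std": stability,
        "fairness_jain": fairness,
        "episodes_to_threshold": episodes_to_threshold,
    }

report = evaluate(
    returns=[[10.0, 9.0, 11.0], [12.0, 8.0, 10.0], [11.0, 10.0, 9.0]],
    learning_curve=[5.0, 12.0, 21.0, 29.0, 30.0],
    threshold=28.0,
)
print(report)
```

A lower `stability_std` corresponds to the reproducibility the study attributes to MAPPO, and a `fairness_jain` closer to 1.0 corresponds to a more even profit split.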

The results show a clear trade-off between profit and fairness:

- MAPPO consistently achieved the highest average returns with the lowest variance, making it the most stable and reproducible choice for maximizing profit in a competitive setting.

- MADDPG achieved slightly lower overall profit than MAPPO but resulted in the fairest profit distribution among the simulated agents.

- Both MARL methods (MAPPO and MADDPG) demonstrated superior scalability and stability compared to the Independent DDPG (IDDPG) baseline.

The paper concludes that MARL, and particularly MAPPO, provides a robust and scalable framework for dynamic price optimization under competition, effectively balancing multiple business objectives.

Why This Matters for Retail & Luxury

For luxury and premium retail, pricing is not merely a function of cost-plus-margin; it is a core component of brand equity, perceived value, and competitive positioning. The findings of this research have direct implications for several critical scenarios:

- E-commerce & Marketplace Competition: On platforms like Farfetch, Net-a-Porter, or brand-owned sites, brands and retailers are in constant, real-time competition. An AI agent using MAPPO could optimize a brand's pricing against a shifting landscape of competitors' promotions and new arrivals, maximizing revenue without engaging in a race-to-the-bottom that erodes brand value.

- Seasonal Collections & Markdown Optimization: For end-of-season sales or flash sales, the algorithm must react to both depleted inventory and competitors' discounting strategies. A stable algorithm like MAPPO could help maximize sell-through while protecting margin, better than rules-based or single-agent systems.

- Geographic Price Harmonization: A global brand managing prices across different regional markets (e.g., Europe vs. Asia) can be modeled as a multi-agent system. The "fairness" metric explored with MADDPG is intriguing here—it could be reconceptualized to ensure pricing strategies do not create unsustainable arbitrage opportunities or severe regional disparities that damage global brand perception.

- Portfolio Pricing for Conglomerates: Groups like LVMH or Kering, which manage portfolios of competing and non-competing brands, could use a multi-agent framework to optimize pricing across their house of brands, balancing overall group profitability with the individual strategic goals of each brand.

Business Impact

The potential impact is significant but must be quantified cautiously, as the study is a simulation. The paper demonstrates that MARL approaches can outperform standard independent learning methods. In a real-world deployment, this could translate to:

- Revenue Uplift: A more adaptive, competition-aware pricing agent could capture marginal gains in conversion and average order value, directly impacting top-line revenue. Industry case studies from early adopters of single-agent RL pricing often cite low-single-digit percentage uplifts.

- Margin Protection: By avoiding myopic, reactive price wars and finding more stable equilibria (as shown by MAPPO's low variance), brands can better protect profitability during competitive periods.

- Strategic Agility: Shortening the pricing recalibration cycle from weeks to near-real-time adaptation.

However, the paper does not provide specific percentage lifts over existing commercial systems. The business case would hinge on the scale of sales volume; the marginal gain per transaction, applied across millions of transactions, drives ROI.
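The volume argument can be made concrete with a back-of-the-envelope calculation. Every number below is hypothetical and chosen only for illustration; none comes from the paper, and the sketch assumes the uplift applies uniformly to revenue at an unchanged margin rate.

```python
def incremental_margin(transactions, avg_order_value, revenue_uplift, margin_rate):
    """Extra annual gross margin from a relative revenue uplift (all inputs hypothetical)."""
    incremental_revenue = transactions * avg_order_value * revenue_uplift
    return incremental_revenue * margin_rate

def payback_ratio(extra_margin, annual_system_cost):
    """How many times the extra margin covers the yearly cost of running the system."""
    return extra_margin / annual_system_cost

# Hypothetical retailer: 2M orders a year, 400 average order value,
# a 1% revenue uplift, and a 60% gross margin.
extra = incremental_margin(
    transactions=2_000_000,
    avg_order_value=400.0,
    revenue_uplift=0.01,
    margin_rate=0.6,
)
print(round(extra))                               # 4800000
print(round(payback_ratio(extra, 1_500_000), 1))  # 3.2
```

The same 1% uplift on a tenth of the volume would yield a tenth of the margin, which is why the case is strongest for high-volume sellers.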

Implementation Approach

Deploying this research from simulation to production is a non-trivial engineering challenge. Here is a realistic pathway:

Environment Construction (The Hardest Part): The paper's key enabler is its "simulated marketplace environment derived from real-world retail data." Replicating this requires:

- High-Fidelity Demand Modeling: Building a model that predicts sales volume based on your price, competitor prices, time, seasonality, inventory, and marketing spend.

- Competitor Price Intelligence: A reliable, real-time feed of competitors' pricing for relevant SKUs.

- Simulation Engine: A digital twin of the market where AI agents can train safely without affecting live operations.
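To give a sense of what such a simulation engine involves, here is a deliberately small toy market. It is a sketch under strong assumptions, not the paper's environment: demand follows a multinomial-logit model, all sellers share one cost and one quality level, and the class name and parameters are hypothetical. The drivers listed above (time, seasonality, inventory, marketing spend) are omitted.

```python
import numpy as np

class ToyPricingMarket:
    """Toy multi-seller market with multinomial-logit demand (illustrative only)."""

    def __init__(self, n_sellers=3, unit_cost=50.0, market_size=1000,
                 quality=5.0, price_sensitivity=0.05, seed=0):
        self.n = n_sellers
        self.cost = unit_cost
        self.market_size = market_size
        self.quality = quality
        self.beta = price_sensitivity
        self.rng = np.random.default_rng(seed)
        self.last_prices = np.full(n_sellers, 2.0 * unit_cost)

    def expected_shares(self, prices):
        """Purchase probability per seller; the remainder is the no-purchase option."""
        utility = self.quality - self.beta * np.asarray(prices, dtype=float)
        expu = np.exp(utility)
        return expu / (1.0 + expu.sum())

    def observe(self):
        # Each seller observes the prices posted in the previous period.
        return self.last_prices.copy()

    def step(self, prices):
        prices = np.asarray(prices, dtype=float)
        shares = self.expected_shares(prices)
        # Draw customer choices over the sellers plus the no-purchase option.
        probs = np.append(shares, 1.0 - shares.sum())
        sales = self.rng.multinomial(self.market_size, probs)[: self.n]
        profits = (prices - self.cost) * sales
        self.last_prices = prices
        return self.observe(), profits

env = ToyPricingMarket()
obs, profits = env.step([100.0, 90.0, 110.0])
print(obs, profits)
```

Each seller's profit depends on every seller's price, which is exactly the interaction an independent learner ignores. A production-grade environment would replace the logit demand with a model fitted to historical sales and competitor price data.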

Algorithm Selection & Training:

- Based on the paper, MAPPO is the recommended starting point for profit and stability.

- Training can require substantial computational resources (often GPU clusters) and may take days or weeks to converge, depending on environment complexity.

- The "fairness" objective of MADDPG would need to be carefully defined in a business context—is it fairness among competitors (less relevant) or fairness across customer segments or regions (more relevant)?

Production Integration & Safety:

- The trained policy must be integrated into the pricing engine, likely as a recommendation system for human overseers initially.

- Absolute guardrails are mandatory: hard-coded minimum and maximum price bounds to protect brand value, and rules to prevent absurd or collusive-looking pricing.

- An A/B testing framework is needed to measure impact against the incumbent pricing system.
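The hard price bounds described above can sit as a thin layer between the learned policy and the pricing engine. The following is a minimal sketch; the function name, the per-update step limit, and the example prices are assumptions, and rules for detecting collusive-looking patterns are not covered.

```python
def apply_guardrails(proposed, current, floor, ceiling, max_step_pct=0.05):
    """Clamp a policy's proposed price to hard bounds and a per-update step limit."""
    if floor > ceiling:
        raise ValueError("floor must not exceed ceiling")
    # Limit how far a single update may move the price (assumed 5% by default).
    max_step = abs(current) * max_step_pct
    stepped = min(max(proposed, current - max_step), current + max_step)
    # Hard brand-protection bounds always win over the policy's suggestion.
    return min(max(stepped, floor), ceiling)

# The policy suggests a deep cut; the guardrail allows only a 5% move.
print(apply_guardrails(proposed=700.0, current=1000.0, floor=900.0, ceiling=1200.0))   # 950.0
# The policy suggests a price above the ceiling; the ceiling applies.
print(apply_guardrails(proposed=1300.0, current=1180.0, floor=900.0, ceiling=1200.0))  # 1200.0
```

Keeping this logic outside the model means the bounds hold regardless of what the policy has learned, and any clamped recommendation can be logged for human review.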

Governance & Risk Assessment

Adopting autonomous multi-agent systems for pricing introduces several risks that must be governed:

- Collusion Risk: A primary concern in MARL is the emergence of tacit collusion, where agents learn to keep prices artificially high without explicit communication. The paper's "fairness" dimension measures how profits are distributed among agents, which is not a test for collusion, so its results should not be read as evidence that this risk is absent. Real-world deployment requires rigorous monitoring and legal review to ensure compliance with antitrust regulations.

- Brand Value Erosion: Luxury pricing is intimately tied to perception. An AI optimizing for short-term revenue might suggest discounts that damage long-term brand equity. The reward function must incorporate brand health metrics, not just immediate profit.

- Systemic Instability: Poorly designed multi-agent systems can lead to chaotic, oscillating prices. The paper's evaluation of "stability" is a direct response to this. MAPPO's low variance is a promising signal, but continuous monitoring in production is essential.

- Explainability & Control: RL models are often black boxes. Teams must develop post-hoc explanation tools (e.g., highlighting which competitor price change triggered an adjustment) to maintain operational control and trust.

- Maturity Level: This is cutting-edge academic research, not off-the-shelf software. The timeline from this paper to robust, regulated, production-ready systems in luxury retail is likely 2-5 years, starting with controlled experiments in non-core product categories.
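For the systemic-instability risk listed above, continuous monitoring can start with something as simple as tracking how often a SKU's price reverses direction. The sketch below is one possible heuristic, not a method from the paper; the function name is hypothetical, and the threshold at which a score should trigger an alert would have to be calibrated per category.

```python
import numpy as np

def oscillation_score(prices):
    """Fraction of consecutive price moves that reverse direction (0 = none, 1 = every move)."""
    diffs = np.diff(np.asarray(prices, dtype=float))
    moves = np.sign(diffs[diffs != 0])  # ignore periods with no price change
    if moves.size < 2:
        return 0.0
    reversals = np.sum(moves[1:] != moves[:-1])
    return float(reversals) / (moves.size - 1)

print(oscillation_score([100, 101, 102, 103]))       # 0.0
print(oscillation_score([100, 105, 100, 105, 100]))  # 1.0
```

A steadily trending price scores 0.0, while a price that flips on every update scores 1.0; sustained high scores would be a signal to pause automated updates and investigate.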