What Happened

A new research paper, "Learning-guided Prioritized Planning for Lifelong Multi-Agent Path Finding in Warehouse Automation," was posted to arXiv on March 25, 2026. The work addresses a core operational challenge in automated warehouses: efficiently coordinating the paths of dozens or hundreds of robots (agents) as they continuously retrieve and deliver items without collisions. This problem is known as lifelong Multi-Agent Path Finding (lifelong MAPF).

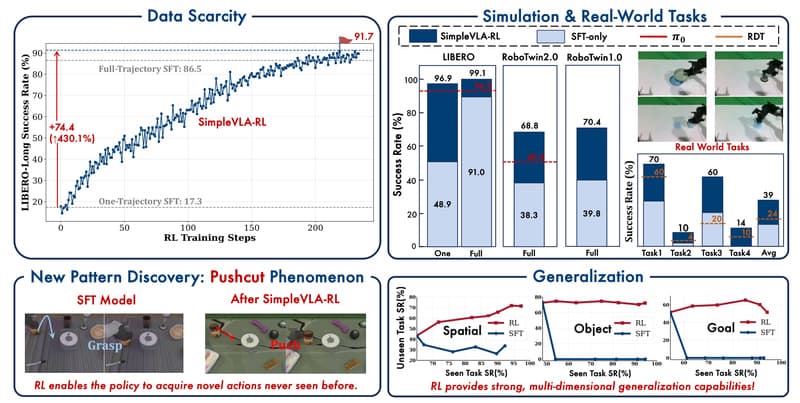

The authors note that while classical search-based solvers are reliable, they often require costly, situation-specific adaptations to handle the long-term, dynamic nature of lifelong operations. Pure machine learning approaches have been explored but haven't conclusively outperformed these classical methods. To bridge this gap, the team introduces Reinforcement Learning (RL) guided Rolling Horizon Prioritized Planning (RL-RH-PP), presented as the first framework to integrate RL directly with search-based planning for this specific problem.

Technical Details

The innovation in RL-RH-PP lies in its hybrid architecture. It uses classical Prioritized Planning (PP) as a backbone. In PP, agents are assigned a static priority order; a planner then finds paths for each agent sequentially, treating higher-priority agents as moving obstacles for lower-priority ones. This is simple and flexible, but a poor priority order can produce long detours or leave a lower-priority agent with no feasible path at all.
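The sequential scheme described above can be sketched as follows. This is a minimal illustration, not the paper's planner: it assumes a 4-connected grid, unit-time moves, a space-time breadth-first search in place of a tuned single-agent solver, and agents that wait at their goal once they arrive. The names `plan_single_agent`, `prioritized_planning`, and `max_t` are hypothetical.

```python
from collections import deque

MOVES = [(0, 0), (1, 0), (-1, 0), (0, 1), (0, -1)]  # wait + 4-connected moves


def plan_single_agent(grid, start, goal, reserved_cells, reserved_edges, max_t):
    """Space-time BFS that avoids reservations left by higher-priority agents."""
    rows, cols = len(grid), len(grid[0])
    queue = deque([(start, 0)])
    parent = {(start, 0): None}
    while queue:
        cell, t = queue.popleft()
        # Accept the goal only if the agent can stay there until max_t.
        if cell == goal and all((goal, k) not in reserved_cells
                                for k in range(t, max_t + 1)):
            path, node = [], (cell, t)
            while node is not None:
                path.append(node[0])
                node = parent[node]
            return path[::-1]
        if t == max_t:
            continue
        for dr, dc in MOVES:
            nxt = (cell[0] + dr, cell[1] + dc)
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols):
                continue
            if grid[nxt[0]][nxt[1]] == 1:            # static obstacle
                continue
            if (nxt, t + 1) in reserved_cells:       # vertex conflict
                continue
            if (nxt, cell, t) in reserved_edges:     # swap (edge) conflict
                continue
            if (nxt, t + 1) not in parent:
                parent[(nxt, t + 1)] = (cell, t)
                queue.append((nxt, t + 1))
    return None


def prioritized_planning(grid, starts, goals, order, max_t=20):
    """Plan agents one by one in `order`; earlier agents act as moving obstacles."""
    reserved_cells, reserved_edges = set(), set()
    paths = {}
    for agent in order:
        path = plan_single_agent(grid, starts[agent], goals[agent],
                                 reserved_cells, reserved_edges, max_t)
        if path is None:
            return None  # this priority order leaves the agent with no path
        path += [path[-1]] * (max_t + 1 - len(path))  # wait at the goal
        for t, cell in enumerate(path):
            reserved_cells.add((cell, t))
            if t > 0:
                reserved_edges.add((path[t - 1], cell, t - 1))
        paths[agent] = path
    return paths


# Two agents that must trade places on an open 3x3 grid (0 = free cell).
grid = [[0] * 3 for _ in range(3)]
starts = {0: (0, 0), 1: (0, 2)}
goals = {0: (0, 2), 1: (0, 0)}
paths = prioritized_planning(grid, starts, goals, order=[0, 1])
```

On the open grid, agent 0 takes the direct route and agent 1 detours around it. On a one-cell-wide corridor the same swap has no solution under any order, so the function returns `None`. More generally, feasibility and path quality in PP depend on the order chosen.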

RL-RH-PP replaces the static heuristic with a dynamic, learned priority assignment policy. The key step is to model dynamic priority assignment as a Partially Observable Markov Decision Process (POMDP), which captures the sequential decision-making of lifelong planning. The complex spatio-temporal interactions between agents (essentially, predicting and managing congestion) are delegated to a reinforcement learning agent.
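For readers who want the formulation in symbols, a generic POMDP reading might look like the following. The notation and the reward choice are assumptions for illustration; this summary does not give the paper's exact state, observation, or reward definitions.

$$
\mathcal{M} = (\mathcal{S}, \mathcal{A}, \mathcal{O}, T, Z, R, \gamma), \qquad
\mathcal{A} = \{\text{permutations of the } n \text{ agents}\}
$$

$$
\pi^{\star} = \arg\max_{\pi} \; \mathbb{E}_{\pi}\!\left[\sum_{k=0}^{\infty} \gamma^{k} R(s_k, a_k)\right],
\qquad o_k \sim Z(\cdot \mid s_k), \quad a_k \sim \pi(\cdot \mid o_k)
$$

Here $s_k$ is the full warehouse state at the $k$-th replanning step, $o_k$ is the part of it the policy observes, and $a_k$ is the priority order handed to the PP planner. $T$ is the transition induced by planning and executing one window. $R$ could be, for example, the number of deliveries completed in that window (an assumed reward, chosen here because throughput is the reported metric).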

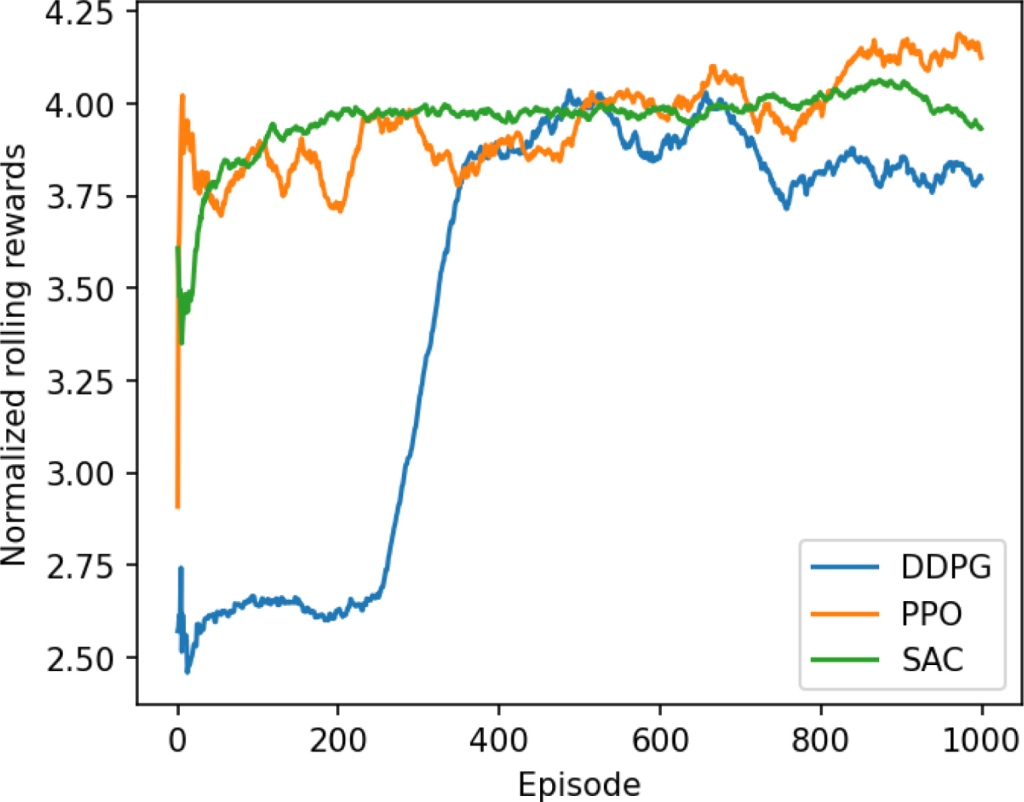

Technically, an attention-based neural network autoregressively decodes a priority order for the agents on the fly within a rolling horizon. The system does not plan all paths for all time: it looks ahead a set number of steps, assigns priorities for that window, executes, and then replans. The underlying PP planner stays efficient because it only solves a series of single-agent pathfinding problems.
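The two ideas in this paragraph, decoding an order one agent at a time and replanning over a rolling window, can be sketched in a few lines. This is a toy under stated assumptions, not the paper's network: hand-written embedding vectors stand in for a trained attention model, decoding is greedy, and `decode_priority_order`, `rolling_horizon`, and their parameters are hypothetical names.

```python
import math


def decode_priority_order(embeddings, query):
    """Greedily decode a priority order, one agent per step (toy stand-in)."""
    remaining = list(range(len(embeddings)))
    context = list(query)
    order = []
    while remaining:
        scale = math.sqrt(len(context))
        # Scaled dot-product score between the context and each undecoded agent.
        scores = [sum(c * e for c, e in zip(context, embeddings[i])) / scale
                  for i in remaining]
        # Softmax over undecoded agents only; decoded agents are masked out.
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        probs = [w / total for w in weights]
        # Greedy choice here; a learned policy might sample instead.
        chosen = remaining[probs.index(max(probs))]
        order.append(chosen)
        remaining.remove(chosen)
        # Condition the next step on the agent just chosen.
        context = [(c + e) / 2 for c, e in zip(context, embeddings[chosen])]
    return order


def rolling_horizon(state, total_steps, execute_steps, choose_order, plan, execute):
    """Repeat: choose priorities, plan a window, execute part of it, replan."""
    t = 0
    while t < total_steps:
        order = choose_order(state)        # e.g. a call to decode_priority_order
        paths = plan(state, order)         # e.g. prioritized planning over the window
        n = min(execute_steps, total_steps - t)
        state = execute(state, paths, n)   # advance the world by n timesteps
        t += n
    return state


# Toy usage with hand-written 2-D embeddings (assumed, not learned).
embeddings = [[0.2, 0.0], [1.0, 0.0], [0.0, 1.0]]
order = decode_priority_order(embeddings, query=[1.0, 0.0])
print(order)
```

The `plan` callback is where the look-ahead window lives: it plans paths for a fixed number of future steps, of which only the first `execute_steps` are carried out before the order is decoded again from the updated state.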

In realistic warehouse simulations, RL-RH-PP achieved the highest total throughput (successful deliveries per timestep) among the methods compared. The framework also generalized effectively across different agent densities, planning horizon lengths, and warehouse layouts. Interpretive analysis revealed the learned policy's logic: it proactively prioritizes agents already in congested areas and strategically redirects other agents away from developing congestion, thereby smoothing overall traffic flow.

Retail & Luxury Implications

While the paper is framed for general warehouse automation, the implications for retail and luxury supply chains are direct and significant. The backend logistics powering e-commerce fulfillment, store replenishment, and even intra-facility movement in large distribution centers rely on increasingly automated systems. For luxury groups managing complex inventories of high-value items across global hubs, efficiency and reliability are paramount.

- Fulfillment Center Optimization: This research directly targets the kind of dense, dynamic robot fleets used in modern fulfillment centers. Higher throughput means faster order processing, which is critical for meeting next-day or same-day delivery promises in premium retail.

- Flexibility in Operations: The ability to generalize across different layouts and agent densities suggests a system that could be deployed or adapted without extensive re-engineering for each new facility or seasonal scaling of robot fleets.

- Beyond Basic Warehousing: The core problem—coordinating many moving agents in a shared space—extends to other retail-adjacent environments. This could include optimizing the movement of automated guided vehicles (AGVs) in manufacturing plants for apparel or accessories, or even modeling the flow of goods and equipment in highly automated port operations that handle imported luxury goods.

The approach represents a pragmatic shift: instead of replacing proven search algorithms, it uses machine learning to optimize the high-level coordination strategy fed into them. This "learning-guided" paradigm reduces risk compared to a full end-to-end learned controller, because collision avoidance stays with the classical planner: any set of paths it returns is collision-free by construction.

Implementation & Governance Considerations

For a technical leader evaluating this, the path from arXiv paper to production is non-trivial. The system requires:

- Integration with Existing Systems: The RL component needs to interface with the warehouse management system (WMS) and the real-time positioning/control system for the physical robots.

- Significant Training & Simulation Infrastructure: Developing and training the priority-assignment policy requires a high-fidelity simulation of the specific warehouse environment and robot dynamics. This demands substantial computational resources and MLOps expertise.

- Safety-Critical Validation: Any learned policy controlling physical robots must undergo rigorous testing and validation in controlled environments before full deployment. The "rolling horizon" approach and the reliance on a classical planner for final path calculation provide important safety guardrails.

- Ongoing Monitoring & Adaptation: The model may need periodic retraining or fine-tuning if the warehouse layout, product flow, or robot fleet characteristics change significantly.

The maturity level is late-stage research / early prototyping. The convincing simulation results are a strong proof-of-concept, but real-world deployment would involve overcoming sensor noise, communication delays, mechanical failures, and the sheer scale of a 24/7 operation.