In the rapidly evolving field of computer vision, a fundamental limitation has persisted: most video classification systems are essentially sophisticated pattern matchers. They excel at recognizing what they have seen before but struggle with novel variations within familiar categories, such as a person performing an unusual dance, an animal exhibiting rare behavior, or a vehicle moving in unexpected ways. This "open-instance" challenge limits how well such systems work in the real world, where diversity is the norm rather than the exception.

Published on arXiv on March 11, 2026, the paper "From Imitation to Intuition: Intrinsic Reasoning for Open-Instance Video Classification" introduces DeepIntuit, a framework that treats video classification as a reasoning task. It departs from conventional approaches by moving beyond feature imitation toward what the authors term "intrinsic reasoning": teaching the model to reason about what it sees rather than only matching it against stored patterns.

The Open-Instance Problem: Why Current Video AI Falls Short

Traditional video classification models operate as what the researchers call "effective imitators." Trained on carefully curated datasets with relatively homogeneous examples, these systems learn to associate specific visual patterns with labels. They perform impressively on benchmark tests but encounter serious limitations when deployed in real-world scenarios where intra-class variations can be vast and unpredictable.

Consider classifying "dancing" videos. A conventional model trained on ballet, hip-hop, and salsa might fail completely when presented with a traditional cultural dance from a region not represented in its training data, even though a human would immediately recognize it as dancing. This distribution mismatch between training data and real-world application has been a persistent bottleneck in computer vision.

Vision-language models (VLMs) offer a promising alternative thanks to their stronger generalization, but, as the paper notes, they "have not fully leveraged their reasoning capabilities (intuition) for such tasks." VLMs can describe what they see, yet turning that descriptive ability into reliable classification has remained difficult.

The DeepIntuit Framework: A Three-Stage Approach to Intuitive Reasoning

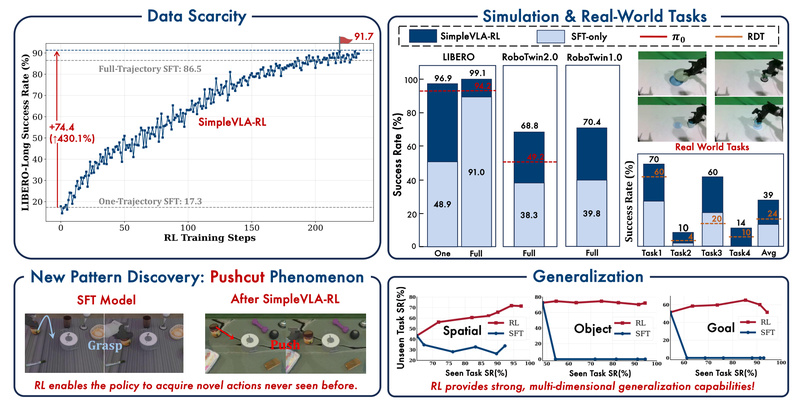

DeepIntuit addresses these limitations with a three-stage pipeline designed to cultivate reasoning rather than pattern matching:

1. Cold-Start Supervised Alignment

The process begins by initializing the system's reasoning capability through supervised learning. This stage establishes basic connections between visual inputs and linguistic reasoning, creating a foundation for more sophisticated processing.
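The cold-start objective is not specified here. A common choice for supervised alignment of a language model is next-token cross-entropy on the target text, with prompt and video tokens masked out so that only the reasoning and label are learned. The sketch below assumes that setup. Each cold-start example is assumed to pair a video with a reference reasoning trace that ends in the class label, and `masked_nll` is a hypothetical helper, not code from the paper.

```python
import math
from typing import Sequence


def masked_nll(token_logprobs: Sequence[float], loss_mask: Sequence[int]) -> float:
    """Mean negative log-likelihood over the tokens selected by loss_mask.

    Assumed setup (hypothetical): token_logprobs[i] is the model's log-probability
    of the i-th reference token; loss_mask[i] is 1 for target tokens (reasoning
    trace + label) and 0 for prompt/video tokens that should not be trained on.
    """
    if len(token_logprobs) != len(loss_mask):
        raise ValueError("token_logprobs and loss_mask must have the same length")
    selected = [-lp for lp, m in zip(token_logprobs, loss_mask) if m]
    if not selected:
        raise ValueError("loss_mask selects no tokens")
    return sum(selected) / len(selected)


# Hypothetical example: 2 prompt tokens followed by 3 target tokens.
logprobs = [math.log(0.9), math.log(0.5), math.log(0.5), math.log(0.25), math.log(1.0)]
mask = [0, 0, 1, 1, 1]
print(round(masked_nll(logprobs, mask), 4))  # prints 0.6931
```

Masking the prompt means the loss rewards only the reasoning and label the model is supposed to produce, not its ability to reproduce the input.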

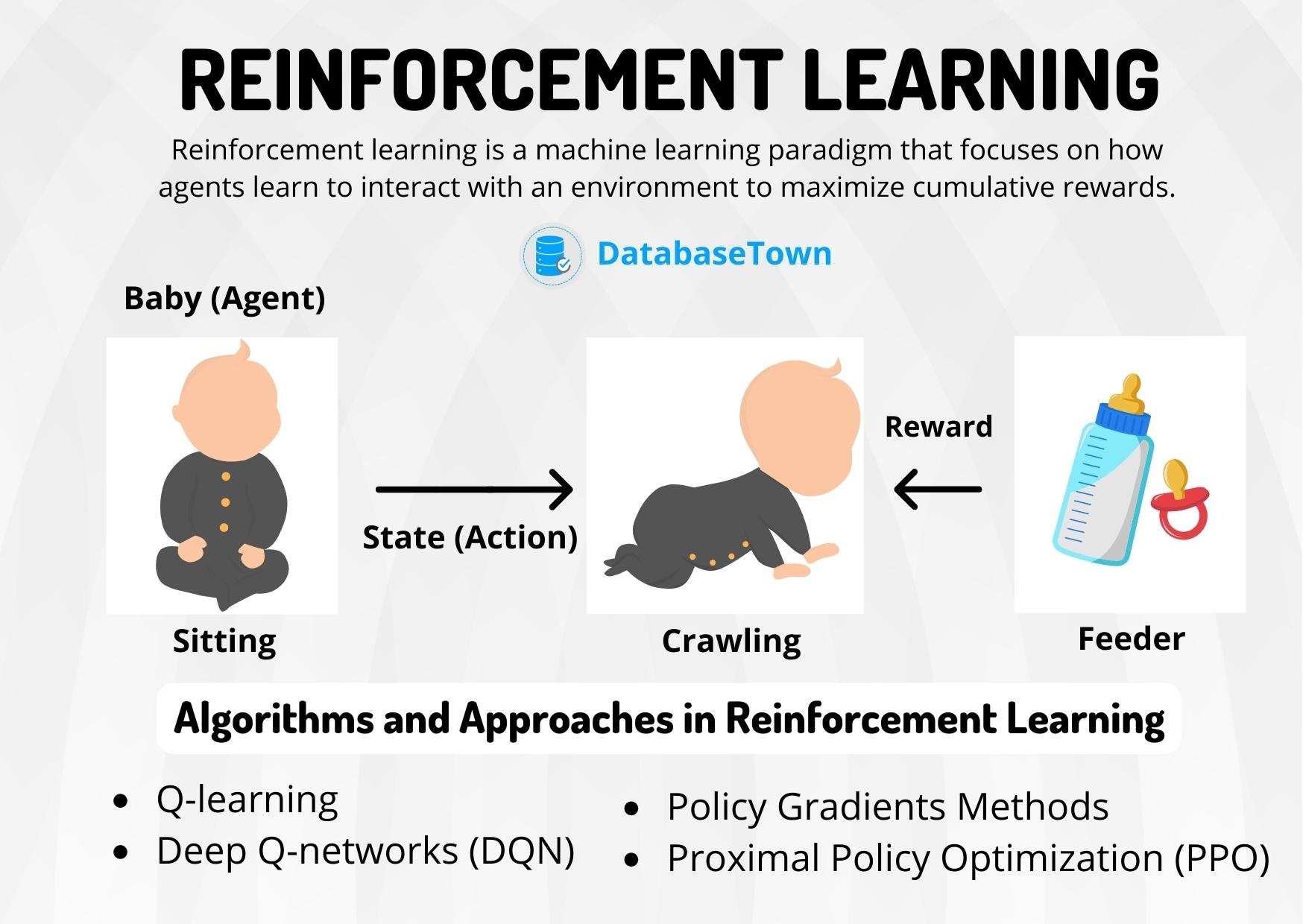

2. Group Relative Policy Optimization (GRPO)

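Next comes reinforcement learning. DeepIntuit refines the coherence of the model's reasoning with GRPO, a method introduced in the DeepSeekMath work on mathematical reasoning in language models. PPO-style approaches train a separate value network to judge each output. GRPO instead samples a group of responses for the same input, scores each one with a reward, and evaluates every response relative to the rest of its group. Responses that score above the group average are reinforced, and those below it are discouraged. In DeepIntuit, this group comparison is used to improve the logical consistency of the model's reasoning about what it is viewing.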
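The group-relative scoring behind GRPO can be sketched in a few lines. This is a minimal illustration of the general technique, not DeepIntuit's training code. The reward function `correctness_reward` is a hypothetical stand-in, because the paper's actual reward is not described here. The KL penalty toward a reference model that GRPO also uses is omitted for brevity.

```python
import statistics
from typing import List, Sequence


def correctness_reward(predicted_label: str, true_label: str) -> float:
    """Hypothetical reward: 1.0 if a sampled response ends in the correct label, else 0.0."""
    return 1.0 if predicted_label.strip().lower() == true_label.strip().lower() else 0.0


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Score each response relative to the other responses sampled for the same input.

    Advantage = (reward - group mean) / (group std + eps). No value network is needed
    because the group itself serves as the baseline. (Population std is used here;
    implementations differ on this detail.)
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def clipped_surrogate(ratio: float, advantage: float, clip_eps: float = 0.2) -> float:
    """PPO-style clipped objective for one response (to be maximized).

    ratio is pi_new(response) / pi_old(response). Clipping keeps a single update
    from moving the policy too far from the one that generated the samples.
    """
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    return min(ratio * advantage, clipped_ratio * advantage)


# Hypothetical group of four sampled answers for a video whose true label is "dancing".
sampled_labels = ["dancing", "exercising", "dancing", "dancing"]
rewards = [correctness_reward(p, "dancing") for p in sampled_labels]
advantages = group_relative_advantages(rewards)
print(rewards)                            # prints [1.0, 0.0, 1.0, 1.0]
print([round(a, 3) for a in advantages])  # prints [0.577, -1.732, 0.577, 0.577]
```

The single wrong answer receives a strongly negative advantage and the correct ones a modest positive advantage. When every sample in a group earns the same reward, all advantages are zero and that group contributes no learning signal.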

3. Intuitive Calibration

The final stage translates reasoning into accurate classification. A classifier is trained on the "intrinsic reasoning traces" generated by the refined VLM. Because the classifier learns from the same kind of traces the VLM produces at inference time, the authors state that this design ensures stable knowledge transfer without distribution mismatch. In effect, the system classifies based on its reasoning about the content rather than on surface-level features.
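One plausible reading of the calibration stage is a lightweight classification head trained on fixed representations of the reasoning traces. The sketch below rests on several assumptions. Each trace is represented by a feature vector, for example pooled hidden states from the refined VLM, which is assumed to stay frozen. The head is plain softmax regression trained with SGD. `TraceClassifier` and the toy data are hypothetical, and the paper's actual classifier may differ.

```python
import math
import random
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class TraceClassifier:
    """Hypothetical softmax-regression head over fixed-length trace features.

    Each input vector stands in for a representation of one reasoning trace
    (for example, pooled hidden states from a frozen, refined VLM).
    """

    def __init__(self, dim: int, num_classes: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.w = [[rng.uniform(-0.01, 0.01) for _ in range(dim)] for _ in range(num_classes)]
        self.b = [0.0] * num_classes

    def logits(self, x: Sequence[float]) -> List[float]:
        return [sum(wi * xi for wi, xi in zip(row, x)) + bk for row, bk in zip(self.w, self.b)]

    def predict(self, x: Sequence[float]) -> int:
        scores = self.logits(x)
        return max(range(len(scores)), key=scores.__getitem__)

    def fit(self, xs: Sequence[Sequence[float]], ys: Sequence[int],
            lr: float = 0.5, epochs: int = 200) -> None:
        """Plain SGD on the cross-entropy loss; only the head's weights are updated."""
        for _ in range(epochs):
            for x, y in zip(xs, ys):
                probs = softmax(self.logits(x))
                for k in range(len(self.w)):
                    # d(cross-entropy)/d(logit_k) = p_k - 1[k == y]
                    g = probs[k] - (1.0 if k == y else 0.0)
                    self.w[k] = [wi - lr * g * xi for wi, xi in zip(self.w[k], x)]
                    self.b[k] -= lr * g


# Toy 2-D "trace features": class 0 = "dancing", class 1 = "exercising" (made up).
train_x = [[1.0, 0.1], [0.9, 0.0], [0.1, 1.0], [0.0, 0.9]]
train_y = [0, 0, 1, 1]
clf = TraceClassifier(dim=2, num_classes=2)
clf.fit(train_x, train_y)
print(clf.predict([0.95, 0.05]), clf.predict([0.05, 0.95]))  # prints 0 1
```

Keeping the trace generator fixed and training only a small head is one way to make the classifier see the same input distribution at training and inference time.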

Technical Innovation: Beyond Traditional Computer Vision

What makes DeepIntuit particularly noteworthy is its departure from standard computer vision architectures. Rather than treating video classification as a pure visual pattern recognition task, the framework approaches it as a reasoning problem. The system generates textual reasoning about what it observes in videos, then uses that reasoning to make classification decisions.
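The reason-then-classify flow described above can be sketched as a two-step inference wrapper. `ReasoningModel`, `ReasonThenClassify`, `StubVLM`, and `keyword_classifier` are all hypothetical illustration names. In DeepIntuit the trace would come from the refined VLM and the label from the calibrated classifier, neither of which is reproduced here.

```python
from dataclasses import dataclass
from typing import Callable, Protocol, Tuple


class ReasoningModel(Protocol):
    """Anything that turns a video into a textual reasoning trace (hypothetical interface)."""

    def reason(self, video_path: str) -> str: ...


@dataclass
class ReasonThenClassify:
    """Two-step inference: generate a reasoning trace, then classify from that trace."""

    model: ReasoningModel
    classify: Callable[[str], str]

    def __call__(self, video_path: str) -> Tuple[str, str]:
        trace = self.model.reason(video_path)
        label = self.classify(trace)
        # Return the trace alongside the label so the decision can be inspected.
        return trace, label


class StubVLM:
    """Stand-in for a real VLM; returns a canned trace that mentions the input path."""

    def reason(self, video_path: str) -> str:
        return f"In {video_path}, several people move rhythmically in time with music."


def keyword_classifier(trace: str) -> str:
    """Toy classifier for illustration only; a trained head would replace this."""
    return "dancing" if "music" in trace.lower() else "other"


pipeline = ReasonThenClassify(model=StubVLM(), classify=keyword_classifier)
trace, label = pipeline("clip_001.mp4")
print(label)  # prints dancing
print(trace)
```

Returning the trace together with the label is what makes the decision auditable: a reviewer can read why a clip was labeled the way it was.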

This approach aligns with broader trends in AI toward more interpretable systems. The "reasoning traces" mentioned in the paper can offer a window into how the AI reaches its conclusions, a significant advantage over black-box models that offer classifications without explanation.

The paper appeared a day after other notable arXiv publications, including work on verifiable reasoning for recommendation systems and on image-based shape retrieval (both posted March 10). This cluster of publications suggests a growing research focus on making AI systems more transparent and reasoning-based across multiple domains.

Implications and Applications

The potential applications of DeepIntuit-style systems are extensive:

Content Moderation: Platforms could more accurately identify nuanced forms of problematic content that don't match exact training examples but share underlying concerning characteristics.

Medical Imaging: Systems could recognize rare disease presentations by reasoning about physiological principles rather than requiring examples of every possible manifestation.

Autonomous Systems: Vehicles and robots could better interpret unusual situations by reasoning about physics, intent, and context rather than relying solely on pattern matching.

Creative Industries: More sophisticated content categorization and recommendation systems could understand the emotional or thematic essence of videos beyond surface characteristics.

Challenges and Future Directions

While promising, the DeepIntuit approach raises important questions. The computational requirements for generating and processing reasoning traces may be substantial compared to conventional classification systems. Additionally, the quality of reasoning will depend heavily on the underlying VLM's capabilities and training.

The researchers acknowledge that their work represents an initial step toward more intuitive video understanding systems. Future developments might include more efficient reasoning mechanisms, integration with other sensory modalities, and applications beyond classification to prediction and generation tasks.

As AI systems increasingly move into real-world applications where they encounter novel situations daily, approaches like DeepIntuit that emphasize reasoning over rote memorization may become essential. The framework represents not just an incremental improvement in video classification accuracy but a conceptual shift in how we design AI to understand the visual world.

The project is publicly available at https://bwgzk-keke.github.io/DeepIntuit/, inviting further research and development in this promising direction toward more intuitive, reasoning-based artificial intelligence.