A new framework called Adaptive Split Federated Learning (ASFL) aims to cut the computational burden that federated learning places on client devices while keeping raw data local. Published on arXiv on February 19, 2026, the research addresses a persistent challenge in distributed AI: how to train sophisticated models on resource-constrained devices without compromising performance or privacy.

The Federated Learning Bottleneck

Federated learning has emerged as a crucial privacy-preserving approach to AI training, allowing multiple clients (such as smartphones, IoT devices, or edge servers) to collaboratively train a shared model without exchanging raw data. Instead, only model updates are transmitted to a central server for aggregation. While this approach protects user privacy, it comes with significant computational costs.
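The aggregation step described above can be sketched in a few lines. This is a minimal illustration of weighted federated averaging in the style of FedAvg, not code from the ASFL paper; the function name `federated_average`, the flat parameter vectors, and the example numbers are all assumptions made for the sketch.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """Average client parameter vectors, weighted by local dataset size."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    averaged = [0.0] * dim
    for weights, size in zip(client_weights, client_sizes):
        if len(weights) != dim:
            raise ValueError("all clients must share one model shape")
        for i, w in enumerate(weights):
            # Each client contributes in proportion to its share of the data.
            averaged[i] += w * size / total
    return averaged


# Three hypothetical clients, each holding a two-parameter model.
# Only these parameter vectors reach the server, never the raw data.
global_model = federated_average(
    client_weights=[[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]],
    client_sizes=[10, 10, 20],
)
print(global_model)
```

The third client holds half of all samples, so its parameters carry half of the weight in the aggregated model.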

Traditional federated learning requires each client device to train the entire model locally, which can be prohibitively expensive for devices with limited processing power, memory, or battery life. This limitation has constrained the types of models that can be deployed in federated settings and has slowed adoption in real-world applications where devices operate under strict energy and latency constraints.

How ASFL Works: Adaptive Model Splitting

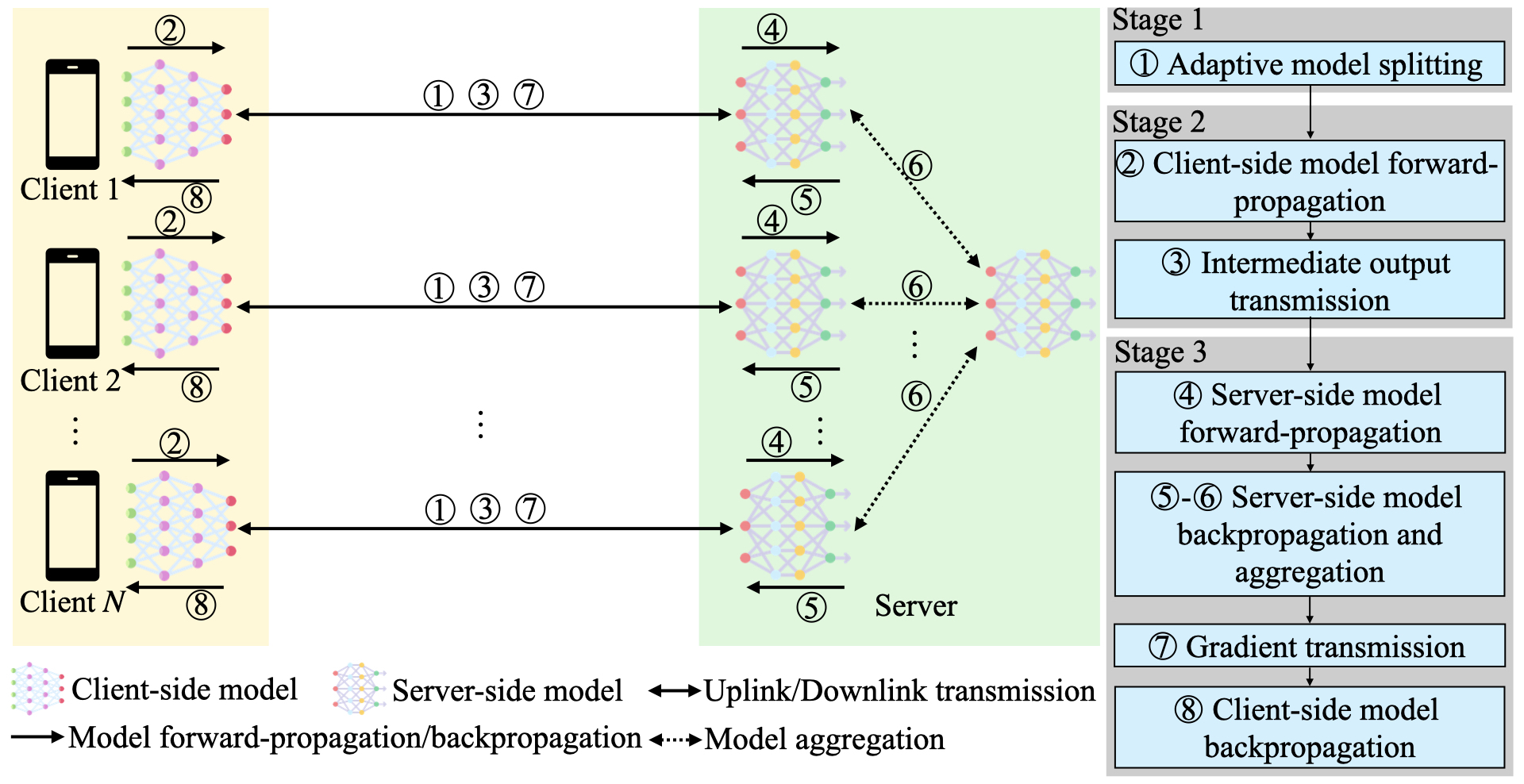

The ASFL framework combines adaptive model splitting with resource allocation. Instead of requiring each client to train the complete model, ASFL dynamically partitions the model between client devices and a central server based on current conditions.

"ASFL exploits the computation resources of the central server to train part of the model and enables adaptive model splitting as well as resource allocation during training," the researchers explain in their paper. In split learning, the client typically trains only the layers before the split point and the server trains the rest, so much of the computation can be offloaded to the server.

The system continuously monitors network conditions, device capabilities, and model requirements to determine the split point for each training round. This adaptive approach aims to use resources efficiently while still keeping raw training data on the client, as in conventional federated learning.
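To make the idea of a condition-dependent split point concrete, here is a minimal sketch that picks the cut layer minimizing an estimated per-round delay. The cost model is an assumption for illustration only (per-layer FLOP counts, activation sizes, a symmetric link that carries activations up and gradients back); `LayerProfile`, `round_delay`, and `best_cut` are hypothetical names, and the paper's actual decision rule is not reproduced here.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class LayerProfile:
    flops: float            # training work for this layer, in FLOPs (hypothetical)
    activation_bits: float  # size of this layer's output, in bits (hypothetical)


def round_delay(layers: List[LayerProfile], cut: int, client_flops: float,
                server_flops: float, link_bps: float) -> float:
    """Estimated delay when layers[:cut] run on the client and layers[cut:] on the server."""
    client_time = sum(layer.flops for layer in layers[:cut]) / client_flops
    server_time = sum(layer.flops for layer in layers[cut:]) / server_flops
    # Assumption: activations go up and same-sized gradients come back.
    transfer_time = 2 * layers[cut - 1].activation_bits / link_bps
    return client_time + transfer_time + server_time


def best_cut(layers: List[LayerProfile], client_flops: float,
             server_flops: float, link_bps: float) -> Tuple[int, float]:
    """Try every cut that leaves at least one layer on each side."""
    if len(layers) < 2:
        raise ValueError("need at least two layers to split a model")
    candidates = range(1, len(layers))
    return min(
        ((cut, round_delay(layers, cut, client_flops, server_flops, link_bps))
         for cut in candidates),
        key=lambda pair: pair[1],
    )


# A made-up four-layer model whose activations shrink with depth.
model = [
    LayerProfile(2e9, 8e6),
    LayerProfile(4e9, 2e6),
    LayerProfile(6e9, 1e6),
    LayerProfile(1e9, 1e4),
]

fast_cut, fast_delay = best_cut(model, client_flops=1e9, server_flops=1e11, link_bps=1e8)
slow_cut, slow_delay = best_cut(model, client_flops=1e9, server_flops=1e11, link_bps=1e6)
print(fast_cut, slow_cut)
```

With these made-up numbers, a fast link favors cutting after the first layer, while a slow link pushes the cut one layer deeper, where the activations to transmit are smaller. Because the cut index is always at least 1, the first layer stays on the client and raw inputs are never transmitted.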

Technical Innovations and Optimization

The research team faced significant technical challenges in developing ASFL. They needed to theoretically analyze the convergence rate of their split learning approach and formulate a joint optimization problem that balanced learning performance with resource constraints.
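A problem of this kind can be written schematically as follows. The notation is illustrative and is not taken from the paper: $s_t$ stands for the split point in round $t$, $\mathbf{r}_t$ for the resource allocation, $\Phi$ for a convergence bound to be minimized, $D_t$ and $E_t$ for per-round delay and energy, $\bar{D}$ and $\bar{E}$ for long-run average budgets, $L$ for the number of layers, and $\mathcal{R}_t$ for the set of feasible allocations.

```latex
\begin{aligned}
\min_{\{s_t,\,\mathbf{r}_t\}_{t=1}^{T}} \quad
  & \Phi\big(s_1,\dots,s_T\big) \\
\text{s.t.} \quad
  & \frac{1}{T}\sum_{t=1}^{T} D_t(s_t,\mathbf{r}_t) \le \bar{D}, \\
  & \frac{1}{T}\sum_{t=1}^{T} E_t(s_t,\mathbf{r}_t) \le \bar{E}, \\
  & s_t \in \{1,\dots,L-1\}, \quad \mathbf{r}_t \in \mathcal{R}_t .
\end{aligned}
```

Two features make a formulation of this shape hard: the budgets constrain averages over all $T$ rounds rather than each round separately, and the discrete choice $s_t$ and the continuous choice $\mathbf{r}_t$ both enter $D_t$ and $E_t$.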

"To optimize the learning performance (i.e., convergence rate) and efficiency (i.e., delay and energy consumption) of ASFL, we theoretically analyze the convergence rate and formulate a joint learning performance and resource allocation optimization problem," the paper states.

The optimization problem is challenging because the delay and energy consumption constraints are long-term, and because model splitting decisions are tightly coupled with resource allocation. To solve it, the researchers developed an Online Optimization Enhanced Block Coordinate Descent (OOE-BCD) algorithm that solves the problem iteratively.
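The sketch below shows plain block coordinate descent on a toy version of the coupled problem; it is not the paper's OOE-BCD algorithm and it omits the online, long-term part entirely. The assumptions are loud ones: the objective is the sum of client delays only (no energy, no convergence term, no server compute), all clients share one hypothetical layer profile, and uplink bandwidth is divided by shares that sum to one. One block picks each client's cut with the shares fixed; the other block resets the shares with the cuts fixed.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical per-layer profile: (compute cycles, output size in bits).
Layer = Tuple[float, float]


def client_delay(layers: Sequence[Layer], cut: int, cpu_hz: float,
                 share: float, total_bps: float) -> float:
    """Local compute for layers[:cut] plus upload of the cut-layer output."""
    compute = sum(cycles for cycles, _ in layers[:cut]) / cpu_hz
    upload = layers[cut - 1][1] / (share * total_bps)
    return compute + upload


def update_cuts(layers, cpus, shares, total_bps) -> List[int]:
    """Block 1: with bandwidth shares fixed, each client picks its best cut."""
    return [
        min(range(1, len(layers)),
            key=lambda c: client_delay(layers, c, cpu, share, total_bps))
        for cpu, share in zip(cpus, shares)
    ]


def update_shares(layers, cuts) -> List[float]:
    """Block 2: with cuts fixed, minimize sum(bits_k / share_k) subject to
    sum(share_k) = 1. The Lagrangian solution is share_k ~ sqrt(bits_k)."""
    roots = [math.sqrt(layers[c - 1][1]) for c in cuts]
    total = sum(roots)
    return [r / total for r in roots]


def total_delay(layers, cuts, cpus, shares, total_bps) -> float:
    return sum(client_delay(layers, c, cpu, s, total_bps)
               for c, cpu, s in zip(cuts, cpus, shares))


def block_coordinate_descent(layers, cpus, total_bps, max_iters=20):
    n = len(cpus)
    shares = [1.0 / n] * n  # start from an equal split of the bandwidth
    cuts = update_cuts(layers, cpus, shares, total_bps)
    history = [total_delay(layers, cuts, cpus, shares, total_bps)]
    for _ in range(max_iters):
        shares = update_shares(layers, cuts)
        new_cuts = update_cuts(layers, cpus, shares, total_bps)
        history.append(total_delay(layers, new_cuts, cpus, shares, total_bps))
        if new_cuts == cuts:  # neither block can improve further
            break
        cuts = new_cuts
    return cuts, shares, history


layers = [(2e9, 8e6), (4e9, 2e6), (6e9, 1e6), (1e9, 1e4)]
cuts, shares, history = block_coordinate_descent(
    layers, cpus=[1e9, 4e9], total_bps=1e6
)
print(cuts, [round(s, 3) for s in shares], [round(h, 2) for h in history])
```

Each block update is an exact minimization over its own variables, so the recorded objective never increases from one iteration to the next. As with block coordinate descent in general, the point it stops at is a fixed point of the two updates, which need not be a global optimum.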

Performance Results: Dramatic Efficiency Gains

Experimental results show substantial improvements over existing approaches. Compared with five baseline federated learning schemes, ASFL not only converged faster but also reduced total delay by up to 75% and energy consumption by up to 80%. In the best reported cases, that corresponds to roughly one quarter of the baseline delay and one fifth of the baseline energy.

These efficiency gains could enable previously impractical applications of federated learning, including:

- Real-time AI on mobile devices with strict battery constraints

- Large-scale IoT networks with thousands of resource-limited sensors

- Healthcare applications where both privacy and responsiveness are critical

- Autonomous systems requiring continuous learning with minimal energy overhead

Implications for Edge AI and Privacy-Preserving Systems

The development of ASFL represents a significant advancement in making privacy-preserving AI more practical and scalable. By dramatically reducing the computational burden on client devices, the framework opens new possibilities for deploying sophisticated AI models in distributed environments.

This research aligns with growing concerns about both data privacy and environmental sustainability in AI development. The energy savings alone could have substantial environmental impact as federated learning systems scale to billions of devices worldwide.

Future Directions and Applications

While the current research focuses on wireless networks, the principles underlying ASFL could be adapted to various distributed computing environments. Future work might explore:

- Integration with heterogeneous device networks mixing high- and low-capability devices

- Application to specific domains like healthcare, finance, or autonomous vehicles

- Extension to more complex model architectures including large language models

- Real-world deployment challenges and security considerations

The ASFL framework demonstrates that through clever algorithmic design and optimization, we can overcome some of the most significant barriers to widespread adoption of privacy-preserving AI systems.

Source: arXiv:2603.04437v1, "ASFL: An Adaptive Model Splitting and Resource Allocation Framework for Split Federated Learning" (February 19, 2026)