What Happened

On May 23, 2024, Google published a research blog post detailing its "TurboQuant" AI technology. Within hours, memory semiconductor stocks sold off. According to market reports, shares of major memory manufacturers fell by roughly 2% to 4%:

- Micron Technology (MU): Down approximately 4%

- Samsung Electronics: Down approximately 3%

- SK Hynix: Down approximately 2%

The sell-off appears directly linked to investor concerns that TurboQuant's AI-driven efficiency improvements could reduce future demand for memory hardware in AI infrastructure.

Context: What is TurboQuant?

While the specific technical details of TurboQuant haven't been fully disclosed in the initial reports, the research appears to focus on AI-driven quantization techniques that optimize neural network inference. Quantization lowers the numerical precision used to store and compute a model's weights and activations (e.g., from 32-bit floating point to 8-bit integers), which can substantially decrease:

- Memory bandwidth requirements

- Memory capacity needs

- Power consumption

- Computational overhead

For large language models and other AI systems, memory bandwidth and capacity are often the primary bottlenecks. More efficient quantization directly translates to less hardware demand for the same computational output.
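To make the idea concrete, here is a minimal sketch of one common scheme, symmetric per-tensor 8-bit quantization. It is not TurboQuant's method, whose details are not given in the reports. The function names and the sample weights are hypothetical and chosen only for illustration.

```python
def quantize_int8(values):
    """Map floats to integer codes in [-127, 127] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, for illustration only.
weights = [0.42, -1.27, 0.05, 0.90, -0.33]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

fp32_bytes = len(weights) * 4  # 32-bit floats take 4 bytes each
int8_bytes = len(weights) * 1  # 8-bit codes take 1 byte each (plus one shared scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes, fp32_bytes, int8_bytes, max_error)
```

Storage drops by about a factor of four, and each restored value differs from the original by at most half of one quantization step (`scale / 2`). Production schemes typically add per-channel or per-group scales and calibration to keep accuracy loss small.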

Market Reaction Analysis

The immediate market reaction reveals how sensitive semiconductor investors have become to AI efficiency breakthroughs. The memory sector has experienced unprecedented demand growth during the AI boom, with:

- High-bandwidth memory (HBM) becoming critical for AI accelerators

- DRAM prices rising significantly throughout 2023-2024

- Memory manufacturers investing billions in capacity expansion

Google's research suggests that future AI systems may achieve similar performance with less memory hardware, potentially flattening the demand curve that has driven recent memory stock valuations.

The Broader Trend: AI Efficiency vs. Hardware Demand

This episode highlights a fundamental tension in the AI hardware ecosystem: Every breakthrough in AI efficiency potentially reduces the need for more hardware. While chip designers like NVIDIA benefit from both performance improvements and hardware sales, memory manufacturers face a more direct trade-off.

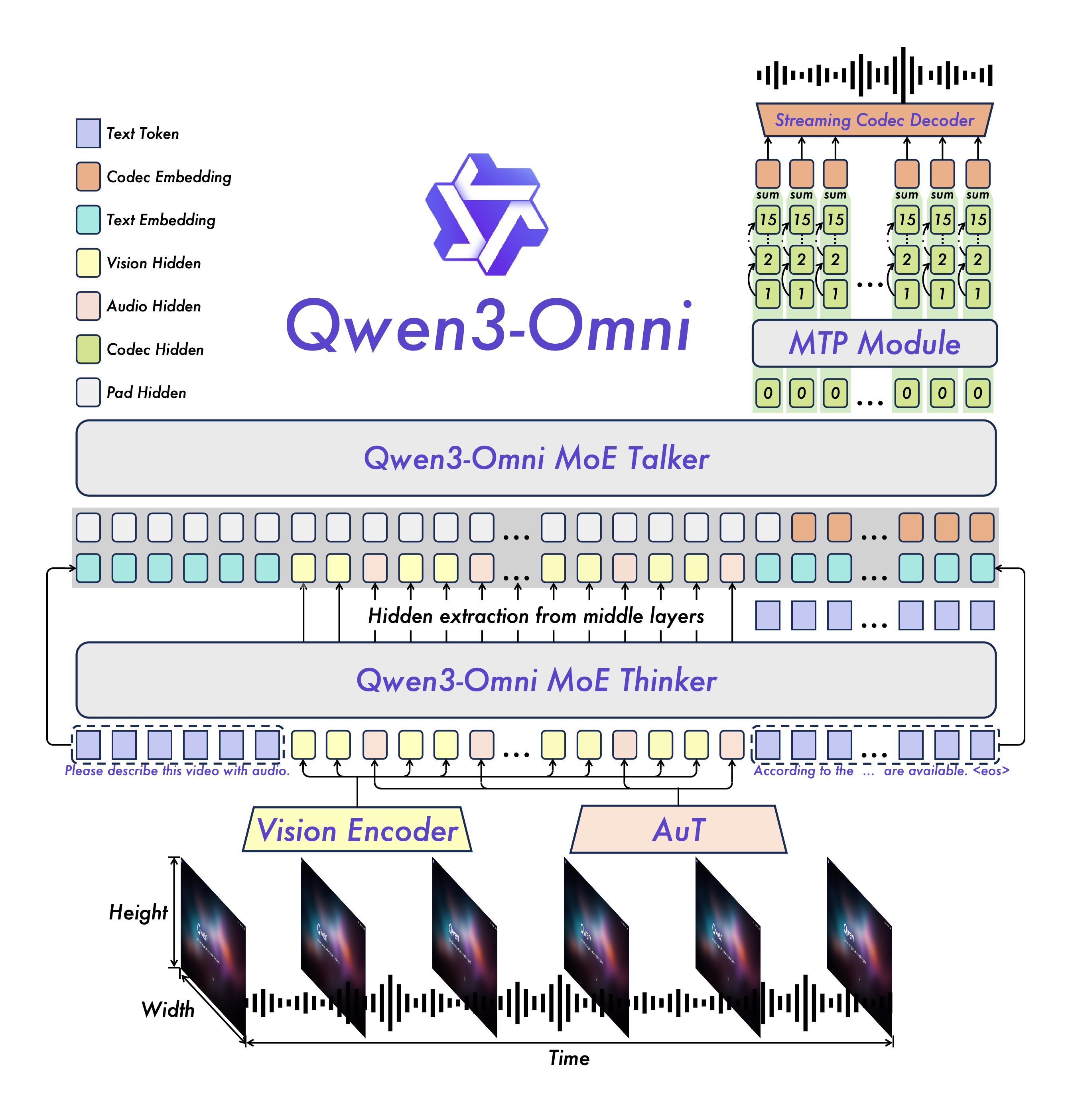

Recent months have seen multiple efficiency-focused developments:

- OpenAI's o1 model family emphasizing reasoning efficiency

- Various 1-bit and 2-bit quantization research papers

- Mixture-of-experts architectures that activate only portions of models

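Each of these targets the memory or compute cost of AI inference and training, though not always in the same way: quantization shrinks the weights that must be stored, while mixture-of-experts mainly reduces how many weights are read per token.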
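For mixture-of-experts models, it helps to separate the weights that must be stored from the weights actually read for each token. The sketch below assumes simple top-k routing, where each token is sent to `top_k` of `num_experts` equally sized experts. The function name and all figures are hypothetical.

```python
def moe_expert_params(num_experts, params_per_expert, top_k):
    """Return (stored, active) expert parameter counts for one token."""
    stored = num_experts * params_per_expert  # every expert stays in memory
    active = top_k * params_per_expert        # only the routed experts are read
    return stored, active


# Hypothetical configuration: 8 experts of 1B parameters, 2 used per token.
stored, active = moe_expert_params(
    num_experts=8, params_per_expert=1_000_000_000, top_k=2
)
active_fraction = active / stored

print(stored, active, active_fraction)
```

Under these assumptions only a quarter of the expert weights are touched per token, which eases compute and memory bandwidth, but all eight experts still occupy memory capacity.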

gentic.news Analysis

This market reaction to a single research blog post demonstrates how tightly coupled AI research and semiconductor markets have become. The immediate 2-4% drop in memory stocks, representing billions in market capitalization, was driven by investor interpretation of technical research, not by financial results or guidance changes.

This follows a pattern we've observed throughout 2024: AI efficiency gains are becoming a bearish signal for hardware suppliers. In March 2024, we covered how Groq's LPU architecture challenged traditional GPU assumptions, and in April, we analyzed how OpenAI's efficiency improvements were changing cloud provider economics. Each time, the market has been quicker to connect technical advances to their financial implications.

The TurboQuant reaction is particularly notable because it involves Google—a company that both develops AI technology and purchases enormous amounts of AI hardware. When Google announces efficiency improvements, investors reasonably assume the company will purchase less hardware from suppliers. This creates a direct feedback loop where Google's R&D success could negatively impact its own suppliers' financial performance.

Looking at the broader landscape, this event reinforces the importance of differentiation in the memory sector. Companies like Micron, Samsung, and SK Hynix that can develop specialized AI memory (like HBM3E and beyond) may be somewhat insulated from generic efficiency gains, as their products enable the performance that makes advanced AI possible. However, if quantization techniques improve enough, even specialized memory demand could plateau.

Frequently Asked Questions

What exactly is TurboQuant?

TurboQuant appears to be Google's latest research into advanced quantization techniques for AI models. While full technical details aren't available from the initial reports, quantization typically involves reducing the numerical precision of model weights and activations (e.g., from 16-bit to 8-bit or 4-bit) to decrease memory requirements and increase inference speed while maintaining accuracy.
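As a rough illustration of what those bit widths mean for memory, the sketch below estimates weight storage for a hypothetical 7-billion-parameter model. It counts weights only and ignores activations, the KV cache, and per-group scale metadata. The function name and model size are assumptions for the example.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical 7B-parameter model.
num_params = 7_000_000_000
footprint = {bits: weight_memory_gb(num_params, bits) for bits in (16, 8, 4)}

for bits, gigabytes in footprint.items():
    print(f"{bits}-bit weights: {gigabytes} GB")
```

Under these assumptions the weights need about 14 GB at 16-bit, 7 GB at 8-bit, and 3.5 GB at 4-bit.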

Why would AI efficiency hurt memory stock prices?

AI systems, particularly large language models, require enormous amounts of high-speed memory. The current AI boom has driven unprecedented demand for DRAM and high-bandwidth memory (HBM). If AI models become more efficient and require less memory to run, future demand growth for memory chips could slow, potentially reducing revenue projections for memory manufacturers.

Is this a long-term threat to memory companies?

The threat is real but nuanced. While efficiency gains may reduce the memory needed per AI model, the total number of AI models and applications continues to grow rapidly. Additionally, memory manufacturers are developing specialized products (like HBM4) that enable next-generation AI capabilities. The most likely scenario is that efficiency gains moderate but don't reverse the growth trajectory of AI memory demand.
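A back-of-the-envelope way to see this is to treat total memory demand as memory per deployment multiplied by the number of deployments. The sketch below uses purely hypothetical factors, not forecasts, and the function name is an assumption for the example.

```python
def relative_memory_demand(per_model_memory_factor, deployment_factor):
    """Total demand relative to today, given two multiplicative changes."""
    return per_model_memory_factor * deployment_factor


# Hypothetical scenario: memory per model halves while deployments triple.
demand = relative_memory_demand(per_model_memory_factor=0.5, deployment_factor=3.0)

# Deployment growth needed just to offset a halving of per-model memory.
break_even_growth = 1 / 0.5

print(demand, break_even_growth)
```

In this scenario total demand still rises to 1.5 times its current level. Demand would shrink only if deployments grew by less than the break-even factor of 2.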

How reliable are market reactions to single research papers?

Market reactions to technical research are often exaggerated in the short term but can indicate legitimate long-term trends. The 2-4% drop reflects immediate investor concern, but memory stocks have shown resilience throughout the AI boom. The more significant signal is the pattern of efficiency research consistently emerging from major AI labs, suggesting this is a sustained focus rather than a one-off development.