Google has released details on TurboQuant, a new family of "theoretically grounded" quantization algorithms designed to tackle one of the most significant and growing costs in running large language models (LLMs) with long contexts: the Key-Value (KV) cache.

The KV cache is a memory structure that stores intermediate representations (keys and values) for every token in a sequence. As a conversation or document grows longer, this cache expands linearly, consuming massive amounts of high-bandwidth memory (HBM). For long-context inference, the cost of moving this data, rather than raw compute, often becomes the primary bottleneck.
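To make the linear growth concrete, here is a back-of-the-envelope sketch of KV cache size. The model shape (32 layers, 8 KV heads, head dimension 128) is a hypothetical example chosen for round numbers, not a figure from Google's write-up.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bits_per_value=16):
    """Bytes needed to hold keys and values for every token in every layer."""
    # Factor 2: each token stores one key vector and one value vector.
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    total_bits = values_per_token * seq_len * batch_size * bits_per_value
    return total_bits // 8

# Hypothetical model shape, chosen only for illustration.
shape = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (8_192, 131_072):
    gib = kv_cache_bytes(seq_len=seq_len, **shape) / 2**30
    print(f"{seq_len:>7} tokens -> {gib:.1f} GiB at FP16")
```

Under these assumptions the cache costs 128 KiB per token, so sixteen times more context means sixteen times more memory for a single sequence.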

Standard quantization methods reduce the number of bits used to store each number but often introduce hidden overhead from metadata and bookkeeping data, diminishing real-world memory savings. TurboQuant directly attacks this overhead with a two-stage compressor that preserves the useful geometric structure of the vectors.
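The metadata overhead can be illustrated with simple arithmetic. The sketch below assumes a generic block-wise scheme in which every block of values stores a 16-bit scale and a 16-bit zero point; the block sizes and metadata widths are illustrative assumptions, not a description of any specific library or of TurboQuant.

```python
def effective_bits_per_value(payload_bits, block_size,
                             scale_bits=16, zero_point_bits=16):
    """Average stored bits per value when each block carries its own constants."""
    metadata_bits = scale_bits + zero_point_bits
    return payload_bits + metadata_bits / block_size

def compression_vs_fp16(bits_per_value):
    """Compression ratio relative to 16-bit storage."""
    return 16 / bits_per_value

for block_size in (128, 32, 16):
    bits = effective_bits_per_value(payload_bits=4, block_size=block_size)
    ratio = compression_vs_fp16(bits)
    print(f"block={block_size:>3}: {bits:.2f} bits/value, {ratio:.2f}x vs FP16")
```

With these assumed numbers, a nominal 4-bit format with blocks of 32 actually stores 5 bits per value, so the advertised 4x saving shrinks to 3.2x.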

What Google Built: A Two-Stage Compressor

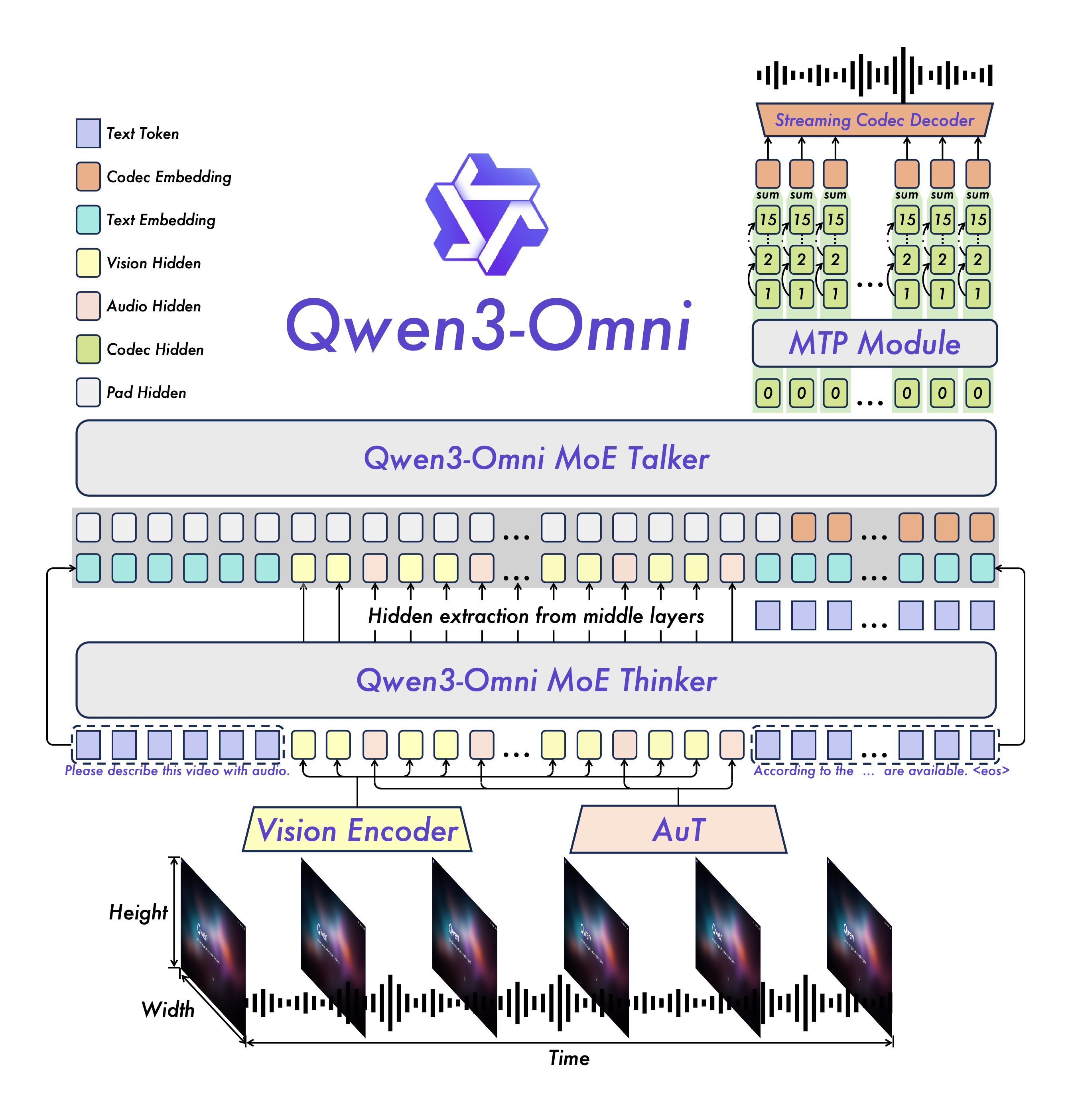

TurboQuant's innovation lies in its decomposition of the compression task:

PolarQuant: This first stage handles the bulk of the signal. It applies a random rotation to the input vector, then rewrites pairs of coordinates into a length and an angle (polar coordinates). This transformation makes the data more amenable to tight packing without needing to store extra per-block constants, which are a common source of hidden overhead in other methods.

Quantized Johnson-Lindenstrauss (QJL): This second stage acts as a high-precision correction mechanism. After PolarQuant captures the main shape of the vector, a small residual error remains. QJL projects this residual with a random Johnson-Lindenstrauss transform and stores only the sign of each projected value, one bit apiece. This allows the final attention scores to remain accurate, preventing the drift that plagues aggressive quantization.
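The sign-only idea can be sketched in a few lines of plain Python. This is a minimal illustration of a 1-bit Johnson-Lindenstrauss sketch under stated assumptions, not Google's implementation: the projection is a dense Gaussian matrix, the query stays unquantized, the residual's norm is stored as one extra scalar, and the number of sign bits is deliberately oversized so the estimate visibly converges. All function names are hypothetical.

```python
import math
import random

def gaussian_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sign_sketch(residual, projection):
    """Keep one bit per projected coordinate: the sign of <row, residual>."""
    return [1 if dot(row, residual) >= 0 else -1 for row in projection]

def estimate_inner_product(query, signs, residual_norm, projection):
    """Estimate <query, residual> from the sign bits and the residual's norm."""
    m = len(projection)
    total = sum(s * dot(row, query) for s, row in zip(signs, projection))
    # For Gaussian rows, E[sign(<s, r>) * <s, q>] = sqrt(2/pi) * <r, q> / ||r||,
    # so rescaling by sqrt(pi/2) * ||r|| makes the average unbiased.
    return math.sqrt(math.pi / 2) * residual_norm * total / m

rng = random.Random(0)
dim, num_bits = 16, 4000  # oversized sketch, for illustration only
residual = [rng.gauss(0.0, 0.1) for _ in range(dim)]
query = [rng.gauss(0.0, 1.0) for _ in range(dim)]
projection = gaussian_matrix(num_bits, dim, rng)

signs = sign_sketch(residual, projection)
residual_norm = math.sqrt(dot(residual, residual))
exact = dot(query, residual)
approx = estimate_inner_product(query, signs, residual_norm, projection)
print(f"exact={exact:.4f} approx={approx:.4f}")
```

The point of the sketch is that the attention score only needs an inner product with the query, and that inner product can be estimated without bias from sign bits alone.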

A simple analogy: PolarQuant stores the broad strokes of a painting, while QJL adds the fine, detail-correcting brushstrokes almost for free.

Key Technical Results

According to Google's testing, TurboQuant delivers substantial improvements in the three critical axes for inference: memory, accuracy, and speed.

| Metric | Result | Notes |
| --- | --- | --- |
| KV Cache Memory Footprint | Reduced by ≥6x | Compared to standard 16-bit (FP16) storage. |
| Storage Precision | 3-bit with no accuracy loss | Maintains accuracy on long-context benchmarks at 3 bits. |
| Attention Scoring Speed | Up to 8x faster | Achieved with 4-bit quantization on NVIDIA H100 GPUs. |

The ability to reach 3-bit storage without degrading accuracy on long-context tasks is a notable result, as most production quantization techniques struggle below 4 bits without significant fine-tuning or accuracy trade-offs.

How It Works: Post-Training and Geometry-Aware

A major practical advantage of TurboQuant is that it is a post-training quantization (PTQ) method. It does not require retraining or fine-tuning the base LLM. This means it can be applied as a lightweight layer underneath an existing, deployed model, dramatically improving its efficiency without forcing a costly retraining cycle or altering its weights.

The algorithm's effectiveness stems from its geometry-aware approach. By using random rotations and polar coordinate transformations, it structures the data in a way that minimizes the informational cost of compression. The two-stage process explicitly separates the high-energy signal (compressed with high fidelity via PolarQuant) from the low-energy residual (captured cheaply via 1-bit QJL), which is a more principled approach than applying uniform quantization across the entire vector.
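A toy version of the rotate-then-polar step can be sketched as follows. It is a simplified illustration, not the published algorithm: it uses a single level of coordinate pairing, keeps each radius at full precision, and quantizes only the angle on a uniform grid. The helper names and the 3-bit angle budget are assumptions made for this example.

```python
import math
import random

def random_rotation(dim, rng):
    """Random orthogonal matrix (rotation, possibly with a reflection)
    built by Gram-Schmidt on Gaussian rows."""
    basis = []
    while len(basis) < dim:
        row = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for b in basis:
            proj = sum(x * y for x, y in zip(row, b))
            row = [x - proj * y for x, y in zip(row, b)]
        norm = math.sqrt(sum(x * x for x in row))
        if norm > 1e-9:
            basis.append([x / norm for x in row])
    return basis

def apply(matrix, vec):
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]

def to_polar_pairs(vec):
    """Rewrite consecutive coordinate pairs (x, y) as (radius, angle)."""
    return [(math.hypot(x, y), math.atan2(y, x))
            for x, y in zip(vec[0::2], vec[1::2])]

def quantize_angle(angle, bits):
    """Snap an angle in [-pi, pi] to the centre of one of 2**bits equal bins."""
    levels = 2 ** bits
    step = 2 * math.pi / levels
    index = min(int((angle + math.pi) / step), levels - 1)
    return -math.pi + (index + 0.5) * step

def from_polar_pairs(pairs):
    out = []
    for radius, angle in pairs:
        out.extend((radius * math.cos(angle), radius * math.sin(angle)))
    return out

rng = random.Random(0)
dim, angle_bits = 8, 3
rotation = random_rotation(dim, rng)
vector = [rng.gauss(0.0, 1.0) for _ in range(dim)]

rotated = apply(rotation, vector)
pairs = [(r, quantize_angle(a, angle_bits)) for r, a in to_polar_pairs(rotated)]
# Undo the rotation with the transpose, since the matrix is orthogonal.
transpose = [list(col) for col in zip(*rotation)]
approx = apply(transpose, from_polar_pairs(pairs))

error = math.sqrt(sum((a - b) ** 2 for a, b in zip(approx, vector)))
print(f"reconstruction error: {error:.4f}")
```

Because the angle always lives in a fixed, known range, its quantization grid needs no per-block scale or zero point, which is the property the article credits for avoiding metadata overhead.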

Why It Matters: Attacking the Real Bottleneck

For AI engineers deploying LLMs, the primary constraint on context length is increasingly memory bandwidth, not FLOPs. TurboQuant's significance isn't just a higher compression ratio on paper; it's that the compression directly targets the hidden metadata overhead that erodes real-world gains. This helps explain the unusually strong speed improvements: by drastically reducing the amount of data that must be fetched from memory during the attention operation, it relieves the actual bottleneck.
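A rough way to see why fewer bytes translates into speed is to compute how long it takes just to stream the cache from memory once, which each decode step must do. The cache size and the 3 TB/s bandwidth figure below are illustrative assumptions, and the estimate ignores compute, dequantization cost, and any metadata.

```python
def kv_read_time_ms(kv_bytes_fp16, bits_per_value, bandwidth_bytes_per_s):
    """Lower bound on the time to stream the whole KV cache once from memory."""
    stored_bytes = kv_bytes_fp16 * bits_per_value / 16
    return 1000 * stored_bytes / bandwidth_bytes_per_s

KV_BYTES_FP16 = 16 * 2**30  # hypothetical 16 GiB cache at FP16
BANDWIDTH = 3.0e12          # assumed ~3 TB/s of HBM bandwidth, illustrative only

for bits in (16, 4, 3):
    ms = kv_read_time_ms(KV_BYTES_FP16, bits, BANDWIDTH)
    print(f"{bits:>2}-bit: {ms:.2f} ms per decode step (memory traffic only)")
```

In this simplified model the time scales directly with stored bits per value, so any metadata that inflates the effective bit width shows up as lost speed as well as lost memory.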

This development is particularly critical for the growing use cases of long-context LLMs, such as document analysis, long-form conversation, codebase manipulation, and agentic workflows, where KV cache memory can easily balloon to dozens of gigabytes.

gentic.news Analysis

Google's release of TurboQuant is a direct and powerful move in the intensifying inference optimization race. It follows a pattern of recent activity from Google DeepMind and Google Research focused on making Transformer-based models radically more efficient, a necessity for both cost-effective scaling and enabling new capabilities like million-token contexts. This work dovetails with other recent advances we've covered, such as Gemma 2, which itself incorporated sophisticated weight quantization techniques, and the push towards Mixture-of-Experts (MoE) architectures like Gemini 1.5 that aim to reduce active parameter count.

TurboQuant's post-training nature makes it an immediate threat to, and differentiator from, many third-party quantization startups and tooling built around formats and methods such as GGUF, AWQ, and GPTQ, which often require calibration or can introduce accuracy instability. By publishing a theoretically robust method, Google is setting a new benchmark for what's expected in production-ready compression. It also strategically undermines a key advantage of competitors who rely on custom, efficient inference runtimes: if the compression is applied at the algorithm level, the benefits propagate across hardware and software stacks.

Looking at the competitive landscape, this pressures other cloud providers (AWS with Titan, Microsoft Azure with Phi and MaaS) and closed-model companies (Anthropic, OpenAI) to demonstrate similar or better memory efficiency gains. For the open-source community, TurboQuant provides a new, high-performance baseline to integrate into popular frameworks like llama.cpp and vLLM. The real test will be independent benchmarking on a wider variety of models and hardware, but the published results suggest a meaningful step forward in tackling the KV cache problem.

Frequently Asked Questions

What is the KV cache and why is it a problem?

The Key-Value (KV) cache is a memory structure used during LLM inference to store the "keys" and "values" computed for every previous token in a sequence. This avoids recomputing them for each new token, speeding up generation. However, for long conversations or documents, this cache grows linearly with the number of tokens, consuming massive amounts of GPU memory and becoming the primary bottleneck for throughput and latency, as moving this data is slow.

How is TurboQuant different from other quantization methods like GPTQ or AWQ?

Most quantization methods focus primarily on compressing the model's weights. TurboQuant is specifically designed for the KV cache, a different and dynamic data structure. Furthermore, it uses a novel two-stage, geometry-aware approach (PolarQuant + QJL) that explicitly minimizes the hidden metadata overhead common in other methods, leading to larger real-world memory savings and speedups. It also requires no retraining, unlike some fine-tuning-based quantization techniques.

Can I use TurboQuant with any LLM?

In principle, yes. As a post-training quantization (PTQ) method, TurboQuant is designed to be applied to the KV cache of existing models without modifying their weights. However, its integration requires implementation into the model's inference engine (like vLLM or TensorRT-LLM). Widespread adoption will depend on Google open-sourcing the code and the community porting it to popular frameworks.

Does TurboQuant also quantize the model weights, or just the KV cache?

Based on the available information, TurboQuant is described specifically as a method for compressing the KV cache. It is not a weight quantization technique. The goal is to make long-context inference efficient by solving the memory bottleneck during generation, not to reduce the model's storage footprint on disk.