Anthropic published research on Natural Language Autoencoders, a technique that trains Claude to translate its internal activations into readable text. The method decodes the numerical vectors that encode the model's internal state into human language.

Key facts

- Technique: Natural Language Autoencoders decode activation vectors into text.

- Target: Claude's internal activations, the numerical vectors that encode its reasoning state.

- Approach: Trains an autoencoder on pairs of activations and corresponding text.

- Contrast: Prior work probed individual neurons or circuits; this captures full activation patterns.

- Status: Research announcement; no code or benchmarks released.

Anthropic released a new interpretability technique called Natural Language Autoencoders, which train Claude to map its internal activations (the numerical vectors representing its reasoning state) into human-readable text [According to @AnthropicAI]. Unlike prior approaches that probed individual neurons or circuits, this method captures full activation patterns and translates them into coherent sentences.

The core idea is that while Claude 'thinks' in high-dimensional vectors, those vectors encode concepts that a learned autoencoder can decode into natural language. The autoencoder is trained on pairs of activations and corresponding text, which allows direct inspection of what the model is 'thinking' at inference time.
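To make the activation-to-text round trip concrete, here is a minimal toy sketch. It is not Anthropic's implementation (the announcement released no code): the vectors, the phrases, and the function names `decode`, `encode`, and `round_trip` are all hypothetical, and a nearest-neighbor lookup stands in for what would be a learned model in practice.

```python
import math

# Hypothetical (activation, text) training pairs. Real activations would have
# thousands of dimensions; three are used here purely for illustration.
PAIRS = [
    ([1.0, 0.0, 0.0], "thinking about arithmetic"),
    ([0.0, 1.0, 0.0], "recalling a capital city"),
    ([0.0, 0.0, 1.0], "planning a rhyme"),
]


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def decode(activation, pairs=PAIRS):
    """Activation -> text: return the text paired with the closest stored vector."""
    _, text = max(pairs, key=lambda pair: cosine(activation, pair[0]))
    return text


def encode(text, pairs=PAIRS):
    """Text -> activation: return the stored vector paired with this text."""
    for vector, known_text in pairs:
        if known_text == text:
            return vector
    raise KeyError(f"no stored activation for text: {text!r}")


def round_trip(activation):
    """Decode an activation to text, then map that text back to a vector."""
    text = decode(activation)
    return text, encode(text)
```

Calling `round_trip([0.9, 0.1, 0.0])` in this toy setup yields the phrase attached to the closest stored vector together with that vector. The general shape, a text bottleneck between an activation and its reconstruction, is what the term "autoencoder" suggests, but the lookup table here is only a placeholder.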

Why This Matters for Interpretability

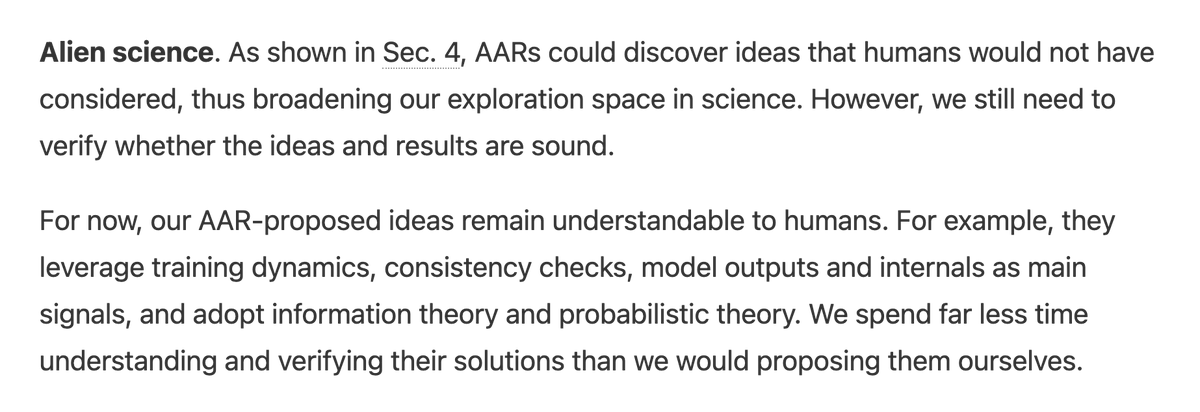

This is a structural departure from the dominant interpretability playbook. Most current work, such as feature visualization or activation patching, targets individual neurons, sparse features, or circuits. Natural Language Autoencoders instead produce dense, sentence-level translations of entire activation states. Such translations could enable debugging of chain-of-thought reasoning, detection of hidden biases, or verification that the model's internal reasoning matches its output.

The research does not claim to solve interpretability, but it offers a new lens. The autoencoder's fidelity, meaning how accurately the decoded text reflects the true activation content, is not fully characterized in the announcement, and Anthropic did not release benchmark numbers or open-source code with the post.

Limitations and Open Questions

A key unknown is whether the autoencoder produces faithful translations or plausible-sounding confabulations. If the decoder hallucinates explanations that don't match the actual activation semantics, the tool could mislead as easily as illuminate. Anthropic's post does not present ablation studies or comparisons against ground-truth reasoning traces.
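One way the faithfulness question could be made measurable, assuming the decoded text can be mapped back into activation space, is to compare each original activation with its reconstruction. The metrics and example vectors below are illustrative assumptions; the announcement does not say how Anthropic evaluates fidelity.

```python
import math


def cosine_similarity(u, v):
    """Directional agreement between two vectors (1.0 = same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


def normalized_mse(original, reconstructed):
    """Squared reconstruction error divided by the original's squared norm.

    0.0 means a perfect reconstruction; larger values mean more was lost.
    """
    error = sum((a - b) ** 2 for a, b in zip(original, reconstructed))
    scale = sum(a * a for a in original)
    if scale == 0.0:
        raise ValueError("normalized MSE is undefined for a zero original")
    return error / scale


# Hypothetical vectors: one reconstruction that preserves the activation,
# and one that points in an unrelated direction.
original = [2.0, 0.0, 1.0]
faithful = [2.0, 0.0, 1.0]
unrelated = [0.0, 3.0, 0.0]

faithful_scores = (cosine_similarity(original, faithful),
                   normalized_mse(original, faithful))
unrelated_scores = (cosine_similarity(original, unrelated),
                    normalized_mse(original, unrelated))
```

In this toy case the faithful reconstruction has a cosine similarity of essentially 1 and zero error, while the unrelated one has a cosine similarity of 0. A good score would only show that the text preserves information about the activation; it would not by itself show that a human reader interprets the text correctly.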

Additionally, the technique requires training a separate autoencoder for each model or task, limiting scalability. The announcement focuses on Claude specifically, and it's unclear how well the approach generalizes to other architectures or training stages.

What to watch

Watch for Anthropic to release benchmark results comparing autoencoder fidelity against ground-truth reasoning traces, likely in a follow-up paper or blog post. Also track whether the method is integrated into Claude's deployment monitoring for safety.