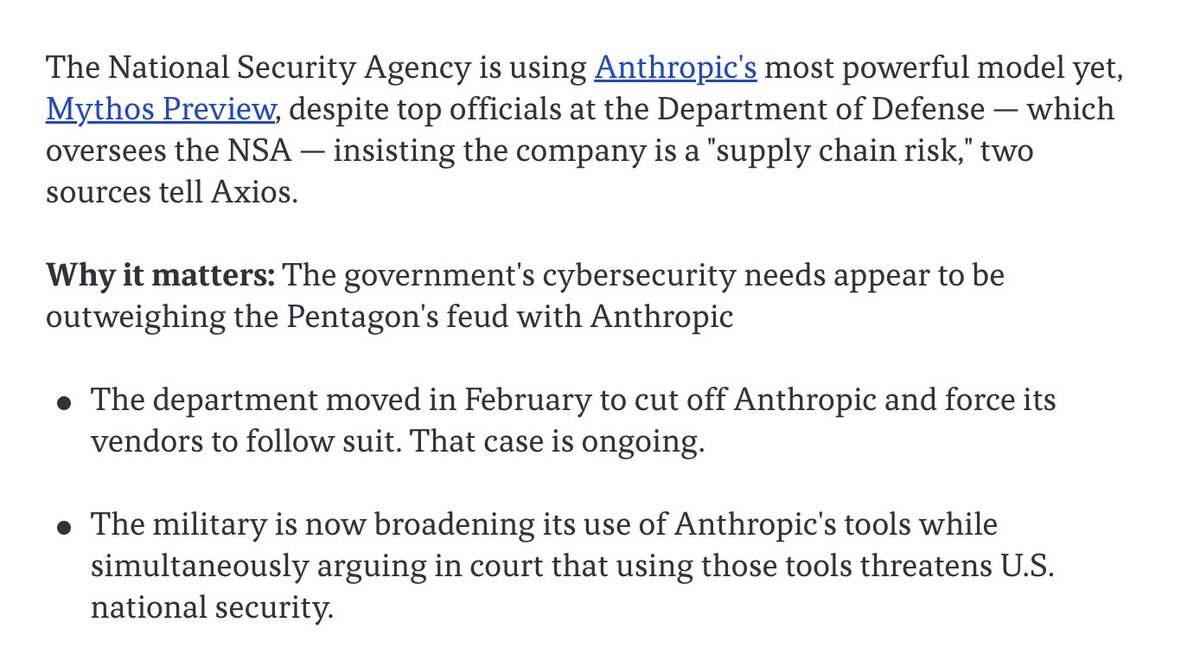

Anthropic's Alex Albert posted that an early Claude Mythos Preview snapshot achieved more than 2x the time horizon of the next best model on METR's 80% success rate benchmark. The claim positions Claude Mythos as a step change in autonomous agent capability.

Key facts

- More than 2x the time horizon of the next best model at an 80% success rate

- METR's time horizon measures how long a task, in human working time, an agent can complete autonomously

- 80% success rate is the evaluation threshold

- Snapshot may differ from final Claude Mythos Preview

- Anthropic has not disclosed absolute time horizon values

Anthropic's Alex Albert tweeted that an early Claude Mythos Preview snapshot delivered a time horizon "more than 2x the next best model" on METR's 80% success rate benchmark [According to @alexalbert__]. METR (Model Evaluation and Threat Research) estimates how long a task, measured by the time it takes a skilled human, an AI agent can complete autonomously. The 80% time horizon is the task length at which the model is expected to succeed without help 80% of the time, a stricter reliability bar than the 50% horizon METR also reports.
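To make the 80% threshold concrete, here is a minimal sketch of how a time horizon can be read off a fitted success curve. It assumes a logistic relationship between success probability and the log of human task length, which is the general shape METR describes in its published methodology. The function names and the parameter values (`h50_minutes=120`, `slope=1.0`) are hypothetical and chosen only for illustration; they are not figures from Anthropic or METR.

```python
import math


def success_probability(task_minutes: float, h50_minutes: float, slope: float) -> float:
    """Modelled chance of success on a task of the given human-time length.

    Assumed model: logistic in log2(task length), centred on the 50% horizon.
    """
    x = slope * (math.log2(h50_minutes) - math.log2(task_minutes))
    return 1.0 / (1.0 + math.exp(-x))


def time_horizon(p: float, h50_minutes: float, slope: float) -> float:
    """Task length (minutes of human time) at which modelled success equals p."""
    # Invert the logistic: logit(p) = slope * (log2(h50) - log2(t)).
    logit = math.log(p / (1.0 - p))
    return h50_minutes * 2 ** (-logit / slope)


# Hypothetical curve parameters, for illustration only.
H50_MINUTES = 120.0
SLOPE = 1.0

h80 = time_horizon(0.8, H50_MINUTES, SLOPE)
print(f"50% horizon: {H50_MINUTES:.0f} min, 80% horizon: {h80:.1f} min")
```

Under this assumed model the 80% horizon is always shorter than the 50% horizon, and doubling the 50% horizon at a fixed slope doubles the 80% horizon as well. Real evaluations estimate the curve from observed task outcomes, so the slope need not stay fixed between models.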

The tweet links to an unspecified METR evaluation. The company has not disclosed the absolute time horizon in hours or the model name of the "next best" competitor. Anthropic has not released a full spec sheet for Claude Mythos Preview; this snapshot may differ from the final production version.

Why this matters more than the tweet suggests

Time horizon is arguably one of the most important metrics for autonomous agent economics. A 2x improvement means an agent can handle tasks roughly twice as long, measured in human working time, at the same 80% reliability before a human must step in, which reduces the supervision cost per unit of work in agentic workflows. For enterprise use cases like software engineering, research analysis, or customer operations, longer time horizons translate to lower supervision costs and higher throughput.

Context from prior Claude releases

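Claude 3.5 Sonnet (released June 2024) scored 33.4% on SWE-bench Verified, a coding agent benchmark, and the upgraded version released in October 2024 reached 49.0%. The claim that Claude 3 Opus (March 2024) was the first model to exceed 50% on METR evaluations is harder to pin down, since METR reports time horizons rather than a single percentage score. A lead of more than 2x over the unnamed next best model, which could be GPT-4o, Gemini 2.0, or a prior Claude variant, would represent a substantial jump in autonomous capability.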

What we don't know

Anthropic has not published the raw time horizon numbers, the exact benchmark task set, or whether the result generalizes across task domains. METR evaluations can vary by task complexity and environment. The company also hasn't confirmed whether this snapshot will ship unchanged as the final Claude Mythos Preview.

What to watch

Watch for Anthropic to publish the absolute time horizon in hours and confirm whether the final Claude Mythos Preview release matches this snapshot's performance. Also track third-party replication on METR's public benchmark suite and comparison against GPT-5 or Gemini 2.0 results.