A new evaluation of OpenAI's GPT-5 family reports substantial advances in clinical reasoning while highlighting persistent limitations in specialized medical domains. The study, published on arXiv as "Evaluating GPT-5 as a Multimodal Clinical Reasoner: A Landscape Commentary," assesses how this foundation model performs across a range of medical tasks that require integrated reasoning.

The Clinical Reasoning Challenge

Clinical medicine is one of the most demanding environments for artificial intelligence systems. Unlike narrow AI applications, clinical diagnosis requires synthesizing ambiguous patient narratives, laboratory data, and multimodal imaging, a cognitive process that involves weighing uncertain information against objective findings. As AI shifts from task-specific systems toward general-purpose foundation models, researchers are asking whether these models can support this kind of integrated reasoning.

"The transition from task-specific artificial intelligence toward general-purpose foundation models raises fundamental questions about their capacity to support the integrated reasoning required in clinical medicine," the authors note in their abstract. This landscape commentary addresses those questions through a controlled, cross-sectional evaluation.

Methodology and Scope

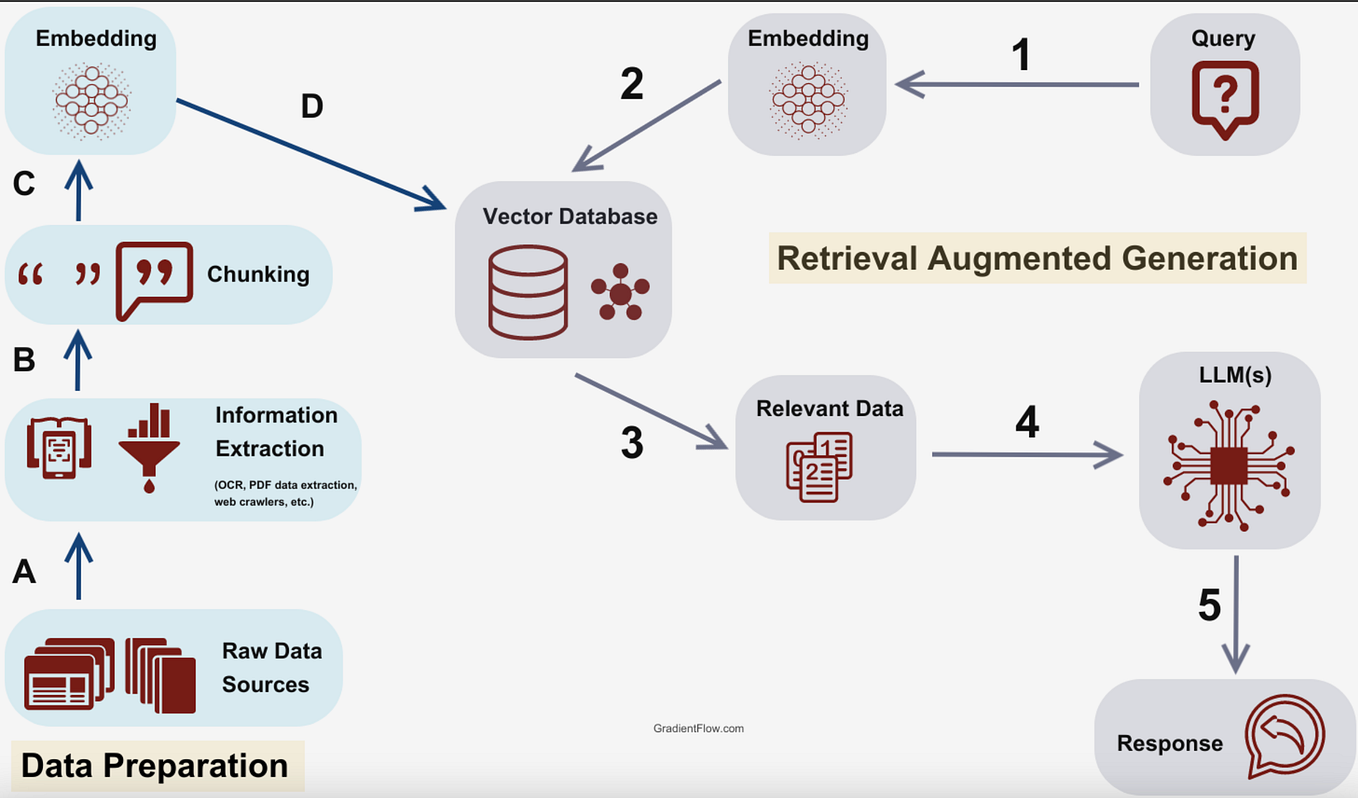

The research team evaluated three variants of the GPT-5 family (GPT-5, GPT-5 Mini, and GPT-5 Nano) against their predecessor, GPT-4o, across a diverse range of clinically grounded tasks. Their methodology applied a standardized zero-shot chain-of-thought protocol across three primary domains:

- Medical education examinations: testing knowledge recall and application

- Text-based reasoning benchmarks: assessing clinical reasoning from narrative descriptions

- Visual question-answering: evaluating multimodal synthesis in neuroradiology, digital pathology, and mammography

This comprehensive approach allowed researchers to assess both the model's knowledge base and its ability to integrate different types of clinical information.
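To make the protocol concrete, here is a minimal Python sketch of how a zero-shot chain-of-thought prompt might be assembled and scored for one multiple-choice item. It is an illustration under stated assumptions, not the study's harness: the instruction wording, the `ClinicalItem` structure, and the `build_zero_shot_cot_prompt` and `extract_answer` helpers are all hypothetical. "Zero-shot" here means the prompt contains no worked examples; the chain-of-thought part is simply an instruction to reason before answering.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class ClinicalItem:
    """A hypothetical multiple-choice item; image_paths stays empty for text-only benchmarks."""
    question: str
    options: dict[str, str]
    image_paths: list[str] = field(default_factory=list)  # attaching images is API-specific and not shown

# Hypothetical instruction text; the study's exact prompt wording is not reproduced here.
COT_INSTRUCTION = (
    "Think through the case step by step, then give the single best answer "
    "on the last line in the form 'Answer: <letter>'."
)

def build_zero_shot_cot_prompt(item: ClinicalItem) -> str:
    """Zero-shot: the prompt holds only the item and the reasoning instruction, no worked examples."""
    option_lines = "\n".join(f"{key}. {text}" for key, text in sorted(item.options.items()))
    return f"{item.question}\n\n{option_lines}\n\n{COT_INSTRUCTION}"

def extract_answer(response: str) -> Optional[str]:
    """Return the option letter from the last 'Answer:' line, or None if there is none."""
    for line in reversed(response.strip().splitlines()):
        if line.strip().lower().startswith("answer:"):
            letter = line.split(":", 1)[1].strip()[:1].upper()
            return letter or None
    return None
```

A fixed answer format keeps scoring mechanical, so the same parser can be applied unchanged to every model being compared.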

Performance Breakthroughs in Textual Reasoning

GPT-5 showed substantial gains in expert-level textual reasoning, with absolute improvements exceeding 25 percentage points on the MedXpertQA benchmark compared with previous models. This marks a large step forward in the model's ability to process and reason about medical text.

On multimodal tasks, GPT-5 achieved state-of-the-art or competitive performance on most visual question-answering benchmarks and outperformed GPT-4o by margins of 10-40% on mammography tasks requiring fine-grained lesion characterization.

In the authors' words, "when tasked with multimodal synthesis, GPT-5 effectively leveraged this enhanced reasoning capacity to ground uncertain clinical narratives in concrete imaging evidence."
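The headline numbers in this section are expressed differently: the MedXpertQA gain is given in percentage points, while the mammography margins are given as "10-40%", and this summary does not say whether those are absolute or relative differences. The distinction matters, as the short Python sketch below shows; the helper names and the accuracy values in the example are hypothetical and not taken from the study.

```python
def absolute_gain_pp(new_acc: float, old_acc: float) -> float:
    """Absolute improvement in percentage points (accuracies as fractions in [0, 1])."""
    return (new_acc - old_acc) * 100

def relative_gain_pct(new_acc: float, old_acc: float) -> float:
    """Relative improvement as a percentage of the baseline accuracy."""
    if old_acc <= 0:
        raise ValueError("baseline accuracy must be positive")
    return (new_acc - old_acc) / old_acc * 100

# Hypothetical accuracies, not figures from the study.
baseline, improved = 0.40, 0.50
print(f"{absolute_gain_pp(improved, baseline):.1f} percentage points")  # prints "10.0 percentage points"
print(f"{relative_gain_pct(improved, baseline):.1f}% relative")  # prints "25.0% relative"
```

For a baseline of 40% rising to 50%, the absolute gain is 10 percentage points but the relative gain is 25%, so the same result can look quite different depending on which convention a report uses.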

Persistent Limitations in Specialized Domains

Despite these advances, the study reveals clear limitations in highly specialized medical imaging tasks. GPT-5's performance in neuroradiology remained moderate, at only 44% macro-average accuracy. In mammography, GPT-5 improved on previous models, but its 52-64% accuracy still lagged well behind domain-specific systems, which exceed 80% accuracy.
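A macro average gives every group the same weight, however many questions it contains, so a model cannot score well simply by doing well on the largest category. The summary does not specify what the 44% neuroradiology figure averages over (for example, diagnostic classes or sub-tasks), so in this minimal Python sketch the grouping is a parameter; the function name and the toy data are hypothetical.

```python
from collections import defaultdict

def macro_average_accuracy(labels: list[str], predictions: list[str], groups: list[str]) -> float:
    """Unweighted mean of per-group accuracies.

    Each group (e.g. a diagnostic class or a sub-task) counts equally,
    regardless of how many items it contains.
    """
    if not (len(labels) == len(predictions) == len(groups)) or not labels:
        raise ValueError("inputs must be non-empty and of equal length")
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for y, y_hat, g in zip(labels, predictions, groups):
        total[g] += 1
        correct[g] += int(y == y_hat)
    # Accuracy within each group, then a plain mean across groups.
    per_group = [correct[g] / total[g] for g in total]
    return sum(per_group) / len(per_group)
```

With eight items in group A (all answered correctly) and two in group B (all answered wrongly), plain accuracy is 80% but the macro average is 50%, because the small group weighs as much as the large one.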

These findings highlight a crucial distinction between general reasoning capabilities and specialized perceptual tasks. While GPT-5 shows promise in integrating different types of clinical information, it cannot yet match the performance of purpose-built systems in areas requiring fine-grained image analysis.

Implications for Clinical AI Integration

The research suggests that GPT-5 represents a meaningful advance toward integrated multimodal clinical reasoning, mirroring the clinician's cognitive process of weighing uncertain information against objective findings. However, the authors caution that "generalist models are not yet substitutes for purpose-built systems in highly specialized, perception-critical tasks."

This has important implications for how AI might be integrated into clinical workflows. Generalist models like GPT-5 could serve as preliminary screening tools or as assistants for integrating patient information, while specialized systems handle detailed image analysis and final diagnostic recommendations.

The Future of Clinical AI

The study points toward a hybrid future for clinical AI, where generalist foundation models work alongside specialized systems. This approach could leverage the strengths of each type of system while mitigating their weaknesses. Generalist models could handle the integrative reasoning that connects different data sources, while specialized systems provide the perceptual precision needed for specific diagnostic tasks.
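The division of labour described above can be sketched as a simple router in Python. This is purely illustrative: the study does not propose an implementation, and `Case`, `Finding`, `hybrid_assess`, and the specialist and generalist callables are hypothetical placeholders for real models. One design choice is worth noting: when no purpose-built model exists for an imaging modality, the router flags the case instead of relying on the generalist's own image reading, in line with the study's finding that generalist perception lags in these tasks.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Case:
    narrative: str
    image_modality: Optional[str] = None  # e.g. "mammography"; None for text-only cases

@dataclass
class Finding:
    source: str
    summary: str

# Hypothetical stand-ins: a specialist turns an image-bearing case into a finding,
# and the generalist combines the narrative with any findings into an assessment.
Specialist = Callable[[Case], Finding]
Generalist = Callable[[str, list[Finding]], str]

def hybrid_assess(case: Case, specialists: dict[str, Specialist], generalist: Generalist) -> str:
    findings: list[Finding] = []
    if case.image_modality is not None:
        specialist = specialists.get(case.image_modality)
        if specialist is None:
            # No purpose-built model for this modality: flag the gap rather than
            # letting the generalist interpret the image on its own.
            findings.append(Finding("router", f"no specialist for {case.image_modality}; needs human review"))
        else:
            findings.append(specialist(case))
    # The generalist does the integrative step: narrative plus perceptual findings.
    return generalist(case.narrative, findings)
```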

As foundation models continue to evolve, their ability to support clinical reasoning will likely improve. However, this research suggests that even advanced models may continue to struggle in highly specialized domains, where task-specific training data and architectural choices give dedicated systems an advantage.

Research Context and Significance

Published on arXiv on March 5, 2026, this study represents an important contribution to understanding how general-purpose AI models perform in complex, real-world domains like medicine. While arXiv papers are not peer-reviewed, they provide valuable early insights into emerging technologies and their capabilities.

The findings are particularly significant given the growing interest in applying foundation models to healthcare. As healthcare systems worldwide face increasing pressure from staffing shortages and growing patient loads, AI assistance could potentially help alleviate some of these challenges—but only if the technology is appropriately matched to clinical needs and limitations.

Conclusion

GPT-5 represents a substantial step forward in clinical reasoning capabilities, particularly in integrating different types of medical information and performing text-based analysis. However, its limitations in specialized imaging tasks suggest that the future of clinical AI will likely involve hybrid systems that combine generalist reasoning with specialized perception.

As the authors conclude, while GPT-5 shows meaningful progress toward integrated multimodal clinical reasoning, purpose-built systems remain essential for highly specialized diagnostic tasks. This balanced assessment provides valuable guidance for healthcare organizations considering AI integration and for researchers working on the next generation of clinical AI systems.