What Happened

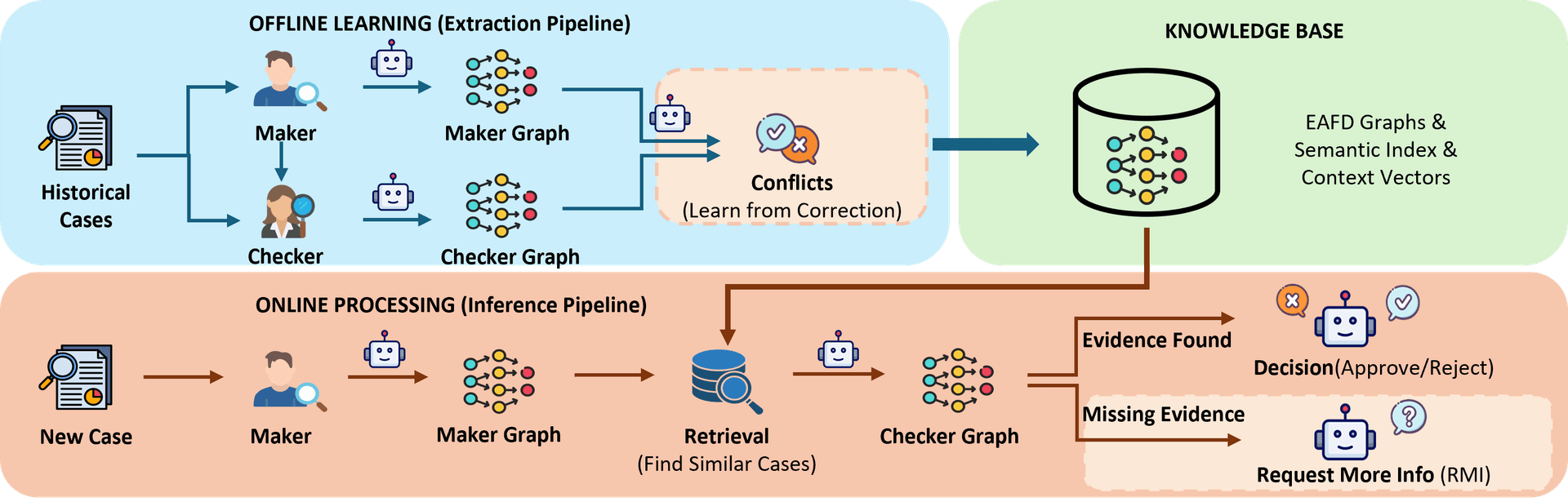

A research paper published on arXiv introduces a framework for automating and improving complex, hierarchical decision-making, evaluated on e-commerce seller appeal adjudication. The core problem it addresses is the information asymmetry inherent in two-tier review systems, where a second reviewer (the "Checker") often overturns the decision of a first reviewer (the "Maker") based on verification actions the Maker did not perform or evidence that was not initially available.

Standard Large Language Models (LLMs) struggle in this environment. When trained on historical correction data, they tend to hallucinate reasons for decisions because they cannot distinguish between conclusions drawn from available evidence and those requiring additional, unperformed verification steps. The paper's solution is to explicitly model the actions required for verification, grounding the AI's reasoning in a structured, operational process rather than unconstrained text generation.

Technical Details: The EAFD Schema & Graph Framework

The central innovation is the Evidence-Action-Factor-Decision (EAFD) schema, a minimal representation that structures adjudication reasoning into four node types:

- Evidence: The raw data or information presented in a case.

- Action: The specific verification step (e.g., "check seller's ID against government database," "review last 10 transaction logs") needed to validate evidence.

- Factor: The interpretable conclusion drawn after an action is performed (e.g., "ID is valid," "logs show suspicious pattern").

- Decision: The final adjudication outcome (e.g., Approve or Deny).
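To make the four node types concrete, here is a minimal Python sketch of how an EAFD case might be represented. The class and field names (`EAFDCase`, `produced_by`, `ungrounded_factors`) and the example action names are hypothetical, not taken from the paper. The one rule it encodes from the text is that a factor only counts if the action that produced it was actually executed on evidence that is present.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    DENY = "deny"


@dataclass(frozen=True)
class Evidence:
    name: str                 # e.g. "seller_id_document" (hypothetical)
    value: str


@dataclass(frozen=True)
class Action:
    name: str                 # e.g. "check_id_against_registry" (hypothetical)
    inputs: tuple[str, ...]   # names of the Evidence items the action consumes


@dataclass(frozen=True)
class Factor:
    name: str                 # e.g. "id_valid" (hypothetical)
    produced_by: str          # name of the Action whose result justifies this factor


@dataclass
class EAFDCase:
    evidence: dict[str, Evidence] = field(default_factory=dict)
    executed_actions: dict[str, Action] = field(default_factory=dict)
    factors: list[Factor] = field(default_factory=list)
    decision: Decision | None = None

    def ungrounded_factors(self) -> list[Factor]:
        """Return factors not backed by an executed action whose inputs are all present."""
        ungrounded = []
        for factor in self.factors:
            action = self.executed_actions.get(factor.produced_by)
            if action is None or any(i not in self.evidence for i in action.inputs):
                ungrounded.append(factor)
        return ungrounded


case = EAFDCase(
    evidence={"seller_id_document": Evidence("seller_id_document", "scan.pdf")},
    executed_actions={
        "check_id_against_registry": Action("check_id_against_registry", ("seller_id_document",)),
    },
    factors=[
        Factor("id_valid", "check_id_against_registry"),
        Factor("logs_suspicious", "review_transaction_logs"),  # this action never ran
    ],
)
print([f.name for f in case.ungrounded_factors()])  # ['logs_suspicious']
```

A factor such as `logs_suspicious` that cites an unexecuted action is exactly the kind of unsupported conclusion the schema is meant to surface rather than accept.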

The schema is designed to curb hallucination by tethering every factor and decision to a verifiable action. The system learns not only from final decisions but also from the conflict signals between Maker and Checker decisions, which expose missing actions or misinterpreted factors.
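A conflict signal can be sketched as a diff between the Maker's and the Checker's traces on the same case. This is an illustrative assumption about what such a signal contains (actions only the Checker performed, and factors the two reviewers read differently); the paper's exact formulation may differ, and all names here are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ReviewTrace:
    """One reviewer's path through a case (hypothetical minimal representation)."""
    actions: frozenset[str]   # verification actions the reviewer performed
    factors: dict[str, bool]  # factor name -> conclusion the reviewer reached
    decision: str             # "approve" or "deny"


@dataclass(frozen=True)
class ConflictSignal:
    missing_actions: frozenset[str]  # performed by the Checker, skipped by the Maker
    misread_factors: frozenset[str]  # same factor, opposite conclusion


def conflict_signal(maker: ReviewTrace, checker: ReviewTrace) -> ConflictSignal | None:
    """Return what the Checker did differently, or None when the decisions agree."""
    if maker.decision == checker.decision:
        return None
    missing = checker.actions - maker.actions
    misread = frozenset(
        name for name, value in checker.factors.items()
        if name in maker.factors and maker.factors[name] != value
    )
    return ConflictSignal(missing, misread)


maker = ReviewTrace(frozenset({"check_id_registry"}), {"id_valid": False}, "deny")
checker = ReviewTrace(
    frozenset({"check_id_registry", "review_last_10_logs"}),
    {"id_valid": True, "logs_suspicious": False},
    "approve",
)
print(conflict_signal(maker, checker))
```

Here the signal flags `review_last_10_logs` as the missing action and `id_valid` as a misread factor, which is richer training material than the bare Approve/Deny label.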

The framework builds a conflict-aware knowledge graph from historical cases where disagreements occurred. Each case is represented as an EAFD graph. For a new appeal, the system:

- Retrieves similar precedent cases from the graph knowledge base.

- Projects the validated resolution paths from those precedents onto the new case.

- Performs top-down deductive reasoning to arrive at a decision.
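The three steps can be sketched as follows. This is a deliberately simplified stand-in: retrieval uses Jaccard overlap of evidence types rather than whatever graph matching the paper uses, a precedent is reduced to a flat action path plus the factor conditions behind its decision, and all identifiers and case IDs are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Precedent:
    """A resolved Maker/Checker conflict stored in the knowledge graph (hypothetical shape)."""
    case_id: str
    evidence_types: frozenset[str]
    path: list[str]              # actions the Checker executed to resolve the case
    conditions: dict[str, bool]  # factor values the final decision depended on
    decision: str


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def retrieve(evidence: frozenset[str], graph: list[Precedent], k: int = 3) -> list[Precedent]:
    """Step 1: rank precedents by evidence overlap (a stand-in for graph retrieval)."""
    return sorted(graph, key=lambda p: jaccard(evidence, p.evidence_types), reverse=True)[:k]


def project(p: Precedent, executed: set[str]) -> list[str]:
    """Step 2: lay the precedent's path over the new case; return actions not yet run."""
    return [a for a in p.path if a not in executed]


def deduce(p: Precedent, factors: dict[str, bool]) -> str | None:
    """Step 3: adopt the precedent's decision only if every condition it relied on holds."""
    if all(factors.get(name) == value for name, value in p.conditions.items()):
        return p.decision
    return None


def adjudicate(evidence: frozenset[str], executed: set[str],
               factors: dict[str, bool],
               graph: list[Precedent]) -> tuple[str | None, str | list[str]]:
    """Return (decision, precedent_id), or (None, pending_actions) if no path completes."""
    pending: list[str] = []
    for p in retrieve(evidence, graph):
        todo = project(p, executed)
        if todo:
            for a in todo:
                if a not in pending:
                    pending.append(a)
            continue
        decision = deduce(p, factors)
        if decision is not None:
            return decision, p.case_id
    return None, pending


# Hypothetical precedents
graph = [
    Precedent("A-17", frozenset({"id_doc", "txn_logs"}),
              ["check_id_registry", "review_last_10_logs"],
              {"id_valid": True, "logs_suspicious": False}, "approve"),
    Precedent("A-42", frozenset({"id_doc"}), ["check_id_registry"],
              {"id_valid": False}, "deny"),
]
print(adjudicate(frozenset({"id_doc", "txn_logs"}),
                 {"check_id_registry", "review_last_10_logs"},
                 {"id_valid": True, "logs_suspicious": False}, graph))  # ('approve', 'A-17')
print(adjudicate(frozenset({"id_doc", "txn_logs"}), {"check_id_registry"},
                 {"id_valid": True}, graph))  # (None, ['review_last_10_logs'])
```

In this sketch a precedent whose path is not fully executed is skipped rather than guessed at, and its outstanding actions are returned instead of a decision; that list is the natural input to a Request More Information step.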

A critical feature is the Request More Information (RMI) outcome. Instead of guessing, the system can identify precisely which verification actions from its schema remain unexecuted given the available evidence and generate a targeted request (e.g., "Please provide the shipment tracking number for order XYZ").
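A minimal sketch of how an RMI step might turn unexecuted actions into targeted requests, assuming each schema action lists the evidence it needs and carries a request template. `ActionSpec`, `SCHEMA`, and the action names are hypothetical; the shipment example mirrors the request quoted above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ActionSpec:
    """Schema entry for one verification action (hypothetical fields)."""
    name: str
    needs: tuple[str, ...]  # evidence items the action cannot run without
    request: str            # template asking for the missing item


# Hypothetical schema entries
SCHEMA = {
    "verify_shipment": ActionSpec(
        "verify_shipment", ("tracking_number",),
        "Please provide the shipment tracking number for order {order_id}.",
    ),
    "check_id_registry": ActionSpec(
        "check_id_registry", ("id_document",),
        "Please upload a government-issued ID for the account holder.",
    ),
}


class _KeepPlaceholders(dict):
    """Leave unknown {fields} visible instead of raising KeyError."""
    def __missing__(self, key: str) -> str:
        return "{" + key + "}"


def request_more_information(required: list[str], executed: set[str],
                             evidence: dict[str, str]) -> list[str]:
    """Targeted requests for required actions that are blocked on missing evidence.

    Unexecuted actions whose inputs are already present produce no request:
    the system can run those itself.
    """
    requests = []
    for name in required:
        if name in executed:
            continue
        spec = SCHEMA[name]
        if any(item not in evidence for item in spec.needs):
            requests.append(spec.request.format_map(_KeepPlaceholders(evidence)))
    return requests


print(request_more_information(
    ["check_id_registry", "verify_shipment"],
    executed={"check_id_registry"},
    evidence={"order_id": "XYZ", "id_document": "id.png"},
))  # ['Please provide the shipment tracking number for order XYZ.']
```

Separating "not yet executed" from "cannot be executed" keeps the request narrow: the appellant is asked only for what the system genuinely cannot obtain on its own.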

Retail & Luxury Implications

While the paper evaluates the framework on e-commerce seller appeals, it maps closely onto luxury and retail operations. The core challenge is common throughout these businesses: making consistent, high-stakes judgments from incomplete information within a structured workflow.

Concrete Application Scenarios:

Fraud & Chargeback Adjudication: A luxury brand's payment operations team reviews disputed transactions. A first-line agent may deny a claim based on the customer's purchase history. A senior reviewer might overturn this after performing the specific action of "cross-referencing the shipping address with the card's billing address via a third-party service," finding a match. An EAFD-grounded AI could learn this action-dependent logic and automate the cross-referencing, bringing first-line outcomes closer to expert consistency.

Return & Authenticity Verification: A customer returns a high-value handbag. The store associate (Maker) might approve the return based on visual inspection. The central authentication team (Checker) could deny it after performing the action "scan the internal microchip with the proprietary reader" and finding a discrepancy. This framework can codify such critical verification steps, ensuring automated systems don't bypass them.

Vendor Compliance & Sustainability Audits: Reviewing a supplier's documentation for ethical compliance involves a checklist of verification actions (e.g., "validate certificate XYZ against issuing body's online registry"). An AI using this framework can systematically ensure each action is completed before rendering a decision, improving audit rigor and traceability.
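The checklist gating in the vendor audit scenario can be expressed as a hard precondition on the decision. In this sketch only the certificate check comes from the text; the other two checklist items and the rule that any failed check means Deny are hypothetical.

```python
from __future__ import annotations

# Hypothetical checklist: only the first item is named in the text.
CHECKLIST = (
    "validate_certificate_against_registry",
    "confirm_factory_address",
    "review_latest_audit_report",
)


def audit_decision(results: dict[str, bool]) -> tuple[str, list[str]]:
    """Gate the audit decision on checklist completion.

    `results` maps each executed action to whether it passed. Returns
    ("incomplete", missing_actions) if any checklist action has not run;
    otherwise ("approve" or "deny", failed_actions).
    """
    missing = [a for a in CHECKLIST if a not in results]
    if missing:
        return "incomplete", missing
    failed = [a for a in CHECKLIST if not results[a]]
    return ("deny" if failed else "approve"), failed


print(audit_decision({"validate_certificate_against_registry": True}))
# ('incomplete', ['confirm_factory_address', 'review_latest_audit_report'])
```

Returning the missing or failed actions alongside the outcome gives auditors the traceability the scenario calls for.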

Clienteling & Personal Shopping Requests: Allocating limited-edition items or approving high-value client requests often follows an approval chain. The system could model actions like "check client's lifetime spend" or "confirm product allocation from inventory system," providing junior staff with a clear, action-backed rationale for decisions and flagging cases needing senior review.

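Business Impact: The paper reports an improvement from 70.8% to 96.3% alignment with human expert decisions on its seller-appeal evaluation. If gains of that size carried over to retail and luxury review workflows, they would translate into operational efficiency and risk reduction: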

- Faster resolution of customer and partner disputes.

- Dramatically reduced reliance on scarce tier-2 expert reviewers for routine cases.

- Consistent application of complex business rules and compliance standards.

- A clear, auditable trail for every decision, crucial for regulatory compliance in luxury (e.g., anti-money laundering, product authenticity).
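The reported figures are easier to weigh as disagreement rates, since those are the cases that still reach a senior reviewer. The sketch below does that arithmetic; the 10,000-case volume is a hypothetical illustration, not a figure from the paper.

```python
from __future__ import annotations


def disagreement_summary(baseline: float, improved: float, cases: int) -> dict[str, float]:
    """Convert expert-alignment rates into disagreement rates and case counts."""
    base_err, new_err = 1 - baseline, 1 - improved
    return {
        "baseline_disagreement": base_err,
        "improved_disagreement": new_err,
        "relative_reduction": (base_err - new_err) / base_err,
        "baseline_cases": round(base_err * cases),
        "improved_cases": round(new_err * cases),
    }


# Alignment figures as reported; 10,000 cases is a hypothetical volume.
summary = disagreement_summary(0.708, 0.963, cases=10_000)
for key, value in summary.items():
    print(f"{key}: {value:.3f}" if isinstance(value, float) else f"{key}: {value}")
```

With these inputs the disagreement rate falls from 29.2% to 3.7%, a relative reduction of about 87%, or from roughly 2,920 to 370 cases per 10,000 reviewed. The 3.7% residual is the divergence that the governance section below says must be monitored.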

Implementation Approach & Governance

Adopting this framework is a significant technical undertaking, not a plug-and-play solution. It requires:

- Schema Definition: Collaborating with domain experts (e.g., fraud analysts, legal teams, quality managers) to decompose existing review policies into the foundational EAFD schema—identifying all possible evidence types, verification actions, and decision factors.

- Historical Data Structuring: Processing past review cases, especially those with escalations or overrules, to build the initial conflict graph. This is a major data engineering effort.

- System Integration: The AI does not operate in a vacuum. It needs APIs to execute verification actions (e.g., querying a CRM, checking an inventory database) and to receive structured evidence from case management systems.

- Human-in-the-Loop Design: The RMI function must integrate seamlessly into agent workflows, and there must be clear protocols for cases the system flags as lacking precedent or requiring novel judgment.
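One way to encode such a protocol is a routing function: send a case to a senior reviewer when no close precedent exists, issue an RMI when evidence is missing, and otherwise hand the agent a system-proposed decision to review. The similarity threshold of 0.6 and the route names are assumptions for illustration, not values from the paper.

```python
from __future__ import annotations

from enum import Enum


class Route(Enum):
    PROPOSE_DECISION = "propose_decision"      # agent reviews a system-drafted decision
    REQUEST_INFO = "request_more_information"  # RMI back to the requester
    ESCALATE = "escalate_to_senior_reviewer"   # no adequate precedent: human judgment


def route_case(top_similarity: float, missing_evidence: list[str],
               min_similarity: float = 0.6) -> Route:
    """Choose the next handler for a case (the 0.6 threshold is hypothetical)."""
    if top_similarity < min_similarity:
        return Route.ESCALATE
    if missing_evidence:
        return Route.REQUEST_INFO
    return Route.PROPOSE_DECISION


print(route_case(0.35, []))                  # Route.ESCALATE
print(route_case(0.9, ["tracking_number"]))  # Route.REQUEST_INFO
```

Escalation is checked first on purpose: without a close precedent, even the list of "missing" evidence is unreliable, so asking the customer for more information would be premature.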

Governance & Risk: The primary risk is schema brittleness: if business rules or verification processes change, the EAFD schema and knowledge graph must be updated to match. There is also a risk of automation bias, where human agents over-trust the system's "projected path" from precedents. The reported 96.3% alignment implies that roughly 3-4% of cases (3.7% in the paper's evaluation) may still diverge from expert judgment, and those cases need rigorous monitoring. On the other hand, the framework promotes transparency and reduces the "black box" problem of pure LLMs, because every decision can be traced back to specific actions and precedent graphs.
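Both risks lend themselves to simple automated checks. The sketch below flags precedents whose resolution path uses an action that has been retired from the current schema, and measures divergence from expert decisions on an audited sample. The graph shape, function names, and the 4% tolerance (chosen to match the roughly 3-4% divergence noted above) are assumptions.

```python
from __future__ import annotations


def stale_precedents(graph: dict[str, list[str]], schema_actions: set[str]) -> list[str]:
    """IDs of precedents whose resolution path uses an action no longer in the schema."""
    return sorted(
        case_id for case_id, path in graph.items()
        if any(action not in schema_actions for action in path)
    )


def divergence_alert(system: list[str], expert: list[str],
                     tolerance: float = 0.04) -> tuple[float, bool]:
    """Disagreement rate on an audited sample, and whether it exceeds the tolerance."""
    if not system or len(system) != len(expert):
        raise ValueError("need equal-length, non-empty decision lists")
    rate = sum(s != e for s, e in zip(system, expert)) / len(system)
    return rate, rate > tolerance


# Hypothetical precedent graph: case ID -> action path
graph = {
    "A-17": ["check_id_registry", "review_last_10_logs"],
    "A-42": ["check_id_registry"],
}
# Suppose "review_last_10_logs" was retired when the log-review policy changed.
print(stale_precedents(graph, {"check_id_registry"}))                 # ['A-17']
print(divergence_alert(["approve"] * 9 + ["deny"], ["approve"] * 10))  # (0.1, True)
```

Stale precedents are candidates for re-review rather than automatic deletion, since the decision may still be right even if the path that justified it has changed.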