In a surprising development that challenges conventional wisdom about AI advancement, a relatively unknown startup has reportedly outperformed Anthropic's Claude Code system using architectural principles dating back half a century. If the result holds up, it is more than a competitive upset: it suggests that progress in AI may sometimes come from looking backward to fundamental computer science concepts rather than from scale alone.

The Unexpected Upset

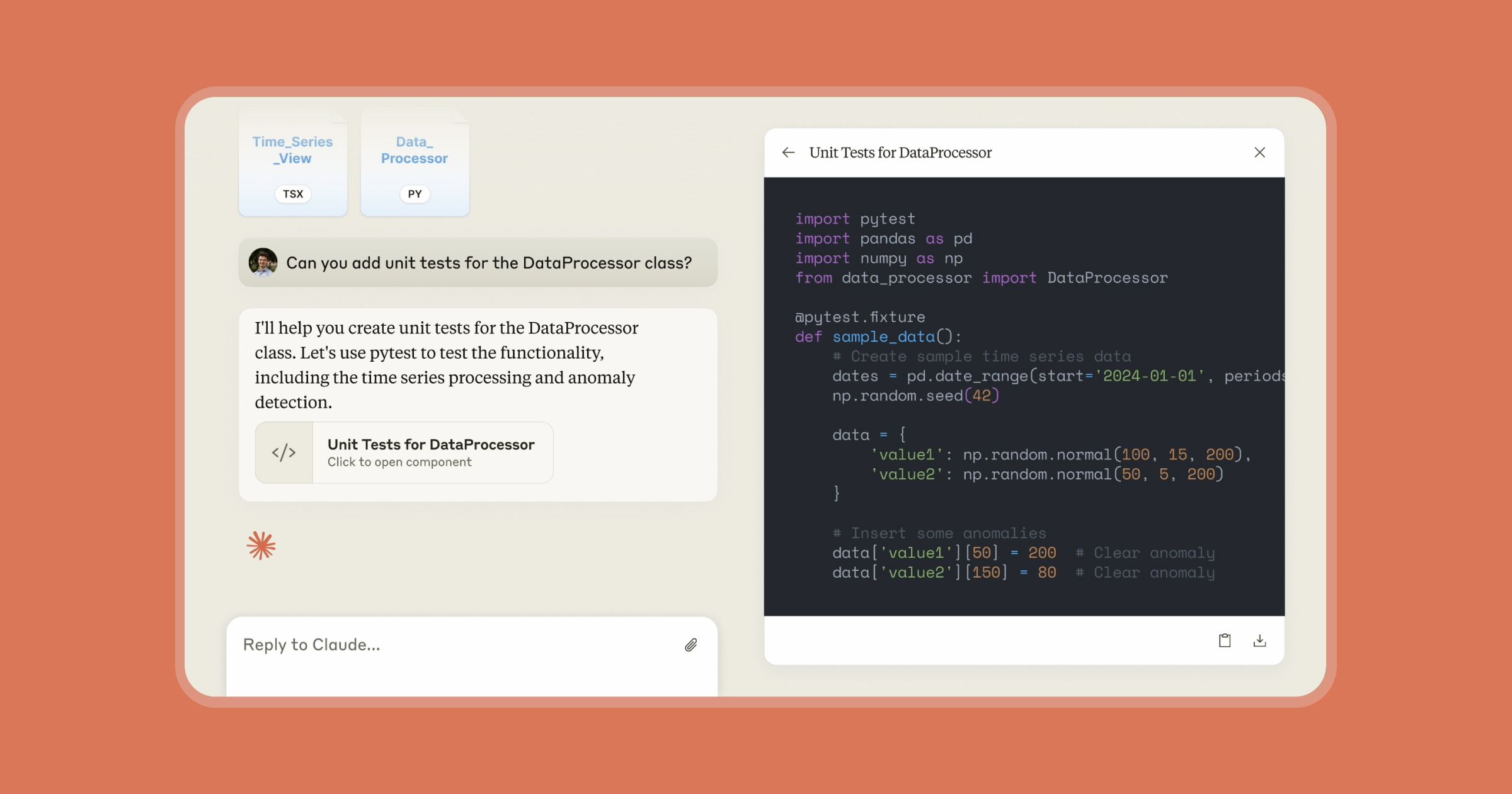

According to recent reports, Anthropic's flagship coding agent, part of the Claude family that includes Claude Opus 4.6 and Claude AI, was outperformed by a small startup employing what's described as "a 50-year-old idea from computer science." While specific performance metrics haven't been disclosed, the implications are significant given Anthropic's position in the AI landscape.

Anthropic, founded by former OpenAI researchers, has been positioning itself as a major competitor in the AI space, with projections suggesting it could surpass OpenAI in annual recurring revenue by mid-2026. The company has recently expanded its offerings with tools like an AI system for automating COBOL modernization and introduced innovative metrics like the AI Fluency Index (AFI) for measuring human-AI interaction quality.

The Secret Architecture: Persistent Memory Systems

The breakthrough reportedly centers on implementing persistent memory systems, a concept with roots in early computer science, within modern AI architectures. Unlike traditional large language models, which process information only within a limited context window, this approach is described as providing an "infinite memory" capability that lets AI systems retain and reference information across extended interactions and complex tasks.

This architectural innovation addresses one of the most significant limitations in current AI systems: the difficulty of keeping memory and context consistent across extended interactions. While models like Claude Code excel at specific coding tasks within constrained contexts, they can lose coherence over longer development sessions or larger, more complex projects.
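To make the idea concrete, here is a minimal sketch of memory that outlives a single session. It is not the startup's architecture, whose details have not been disclosed. The class name `PersistentMemory`, its methods, and the word-overlap ranking are all assumptions chosen for illustration; a real system would likely use a more capable retrieval method.

```python
import sqlite3
from typing import List


class PersistentMemory:
    """Hypothetical note store that survives across sessions (illustrative only)."""

    def __init__(self, path: str) -> None:
        # A file-backed SQLite database keeps notes after the process exits.
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS notes "
            "(id INTEGER PRIMARY KEY, text TEXT NOT NULL)"
        )
        self.conn.commit()

    def remember(self, text: str) -> None:
        """Append one note to the store."""
        self.conn.execute("INSERT INTO notes (text) VALUES (?)", (text,))
        self.conn.commit()

    def recall(self, query: str, limit: int = 3) -> List[str]:
        """Return up to `limit` notes that share at least one word with the query."""
        query_words = set(query.lower().split())
        rows = self.conn.execute("SELECT text FROM notes ORDER BY id").fetchall()
        scored = []
        for (text,) in rows:
            overlap = len(query_words & set(text.lower().split()))
            if overlap > 0:
                scored.append((overlap, text))
        # Stable sort: notes with equal overlap keep their insertion order.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:limit]]

    def close(self) -> None:
        self.conn.close()
```

In this sketch, an agent would call `remember` as it learns facts about a project and call `recall` at the start of a later session, placing only the matching notes into the model's context window instead of the full history.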

Why This Matters Beyond the Benchmark

The significance of this development extends far beyond a single performance benchmark. First, it demonstrates that innovation in AI isn't solely about scaling model parameters or training data. Sometimes, the most impactful advances come from rethinking fundamental architectural decisions.

Second, this approach could have profound implications for enterprise applications. Anthropic has been targeting significant markets, including partnerships with Infosys and engagements with the U.S. Department of Defense. A system with enhanced memory capabilities would be particularly valuable in these contexts, where maintaining context across complex, multi-session tasks is crucial.

Third, this development arrives at a critical moment for Anthropic, which recently uncovered a massive AI model theft operation targeting Claude by Chinese firms. The competitive landscape is intensifying, and architectural innovations like persistent memory could become key differentiators.

The Broader AI Development Context

This breakthrough occurs against a backdrop of rapid evolution in the AI coding assistant space. Anthropic has been actively expanding its Claude Code family, most recently with the COBOL modernization tool announced in February 2026. The company has also been researching fundamental aspects of AI behavior, publishing studies like the "Polished AI Paradox" based on analysis of 10,000 Claude conversations.

The persistent memory approach aligns with growing recognition in the AI community that simply making models larger isn't sustainable or necessarily effective for all applications. There's increasing interest in architectural innovations that make better use of existing capabilities rather than constantly pursuing scale.

Technical Implications and Future Directions

The successful implementation of persistent memory systems suggests several technical directions for future AI development:

Hybrid Architectures: Combining large language models with specialized memory systems could become standard practice for complex applications.

Efficiency Gains: By reducing the need to constantly reprocess information, such systems could offer significant efficiency improvements, potentially lowering computational costs.

Specialized Applications: This approach might be particularly valuable for domains requiring extended context, such as software development, legal analysis, scientific research, and complex decision support systems.

Human-AI Collaboration: Enhanced memory capabilities could fundamentally improve how humans interact with AI systems, creating more coherent, consistent, and useful partnerships over time.
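The efficiency point above can be illustrated with a small sketch: cache the result of an expensive step, keyed by a hash of its input, so unchanged content is not reprocessed. The names `ResultCache` and `expensive_summary` are hypothetical, and the summary function is only a stand-in for a costly model call; nothing here is taken from the reported system.

```python
import hashlib
from typing import Callable, Dict


class ResultCache:
    """Hypothetical cache keyed by content hash (illustrative only)."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}
        self.misses = 0  # number of times the expensive function actually ran

    def get_or_compute(self, content: str, compute: Callable[[str], str]) -> str:
        key = hashlib.sha256(content.encode("utf-8")).hexdigest()
        if key not in self._store:
            self.misses += 1
            self._store[key] = compute(content)
        return self._store[key]


def expensive_summary(content: str) -> str:
    """Stand-in for a costly model call; here it only counts lines."""
    return f"{len(content.splitlines())} lines"


cache = ResultCache()
first = cache.get_or_compute("def f():\n    return 1", expensive_summary)
second = cache.get_or_compute("def f():\n    return 1", expensive_summary)
# The second call is served from the cache, so the function ran only once.
```

Keying on a content hash rather than a file name means an edited file is reprocessed automatically, while untouched files cost nothing on later passes.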

Competitive Landscape Implications

For Anthropic, this development represents both a challenge and an opportunity. While being outperformed in a specific benchmark is never ideal, it highlights areas for potential improvement in their own systems. Given Anthropic's research capabilities and resources, they're well-positioned to either develop their own implementations of similar concepts or acquire companies working in this space.

The broader competitive implications are significant. If persistent memory systems prove broadly effective, they could level the playing field between large AI companies with massive resources and smaller, more nimble startups with innovative architectural approaches.

Looking Forward: The Next Generation of AI Systems

This development suggests we may be entering a new phase of AI advancement where architectural innovation becomes as important as scale. The "secret architecture" that reportedly beat Claude Code points toward AI systems that are more than pattern recognizers: computational systems with structured memory and reasoning capabilities.

As AI continues to evolve, we can expect more cross-pollination between traditional computer science and modern machine learning. Concepts from operating systems, database design, and systems architecture may become increasingly relevant to AI development, creating opportunities for researchers and developers with diverse backgrounds.

The success of this approach also raises questions about what other "old ideas" might find new life in AI. As the field matures, we may discover that many solutions to current limitations already exist in the rich history of computer science, waiting to be rediscovered and adapted to the unique challenges of artificial intelligence.

Source: Towards AI article on persistent memory systems outperforming Claude Code