What Changed — Claude Code Now Works with Local LLMs

On March 21, 2026, an open-source script was released that enables Claude Code to connect to local Large Language Models (LLMs) through llama.cpp. This means you can now run Claude Code entirely offline, using models you host on your own machine instead of relying on Anthropic's cloud API.

The script acts as a bridge between Claude Code and a local LLM inference engine. By default, the official Claude Code client sends its API requests to Anthropic's servers; the script intercepts those requests and redirects them to your local LLM setup.
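To make the redirect concrete, here is a minimal sketch of the kind of translation such a bridge might perform. It assumes the bridge receives requests shaped like Anthropic's Messages API and forwards them to an OpenAI-compatible chat completions endpoint such as the one llama.cpp's server exposes. This is not the released script's code; the function names are hypothetical, and tool-use and image blocks are ignored.

```python
from typing import Any


def _flatten_content(content: Any) -> str:
    """Message content is either a plain string or a list of content blocks."""
    if isinstance(content, str):
        return content
    # Keep only text blocks; other block types are dropped in this sketch.
    return "".join(
        block.get("text", "") for block in content if block.get("type") == "text"
    )


def to_chat_completion_request(anthropic_req: dict) -> dict:
    """Translate a Messages-style request into a chat-completions-style one."""
    messages = []
    system = anthropic_req.get("system")
    if system:
        # The Messages format carries the system prompt as a top-level field.
        messages.append({"role": "system", "content": _flatten_content(system)})
    for msg in anthropic_req.get("messages", []):
        messages.append(
            {"role": msg["role"], "content": _flatten_content(msg["content"])}
        )
    return {
        "messages": messages,
        "max_tokens": anthropic_req.get("max_tokens", 1024),
        "temperature": anthropic_req.get("temperature", 1.0),
    }


def to_anthropic_response(chat_resp: dict, model: str) -> dict:
    """Wrap a chat-completions-style reply in a Messages-style response."""
    choice = chat_resp["choices"][0]
    usage = chat_resp.get("usage", {})
    hit_limit = choice.get("finish_reason") == "length"
    return {
        "type": "message",
        "role": "assistant",
        "model": model,
        "content": [{"type": "text", "text": choice["message"]["content"] or ""}],
        "stop_reason": "max_tokens" if hit_limit else "end_turn",
        "usage": {
            "input_tokens": usage.get("prompt_tokens", 0),
            "output_tokens": usage.get("completion_tokens", 0),
        },
    }
```

A real bridge would also need to handle streaming and tool calls, which is likely where most of the compatibility work lies.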

What It Means For You — Privacy, Cost, and Offline Access

Complete Privacy: Your code, prompts, and generated outputs never leave your machine. This is crucial for developers working with proprietary codebases, sensitive data, or in regulated industries where cloud AI services pose compliance risks.

Zero API Costs: You eliminate Claude API usage fees entirely. Once you've downloaded the model weights, you can run Claude Code indefinitely without paying per-token charges.

Offline Development: No internet connection required. You can use Claude Code on planes, in remote locations, or anywhere without reliable internet access.

Model Choice Flexibility: You're not limited to Claude models. You can experiment with various open-source models optimized for coding tasks, finding the one that best fits your workflow.

How To Set It Up — Step-by-Step Implementation

Prerequisites:

- Python 3.8+ installed

- llama.cpp compiled and ready

- A capable local LLM (like CodeLlama, DeepSeek-Coder, or StarCoder) converted to GGUF format

Installation Steps:

- Clone the repository:

git clone https://github.com/[username]/claude-code-local-llm

cd claude-code-local-llm

- Install dependencies:

pip install -r requirements.txt

- Configure your local LLM endpoint in config.yaml:

llm_backend: "llama.cpp"

model_path: "/path/to/your/model.gguf"

n_gpu_layers: 20 # Adjust based on your GPU

context_size: 16384

- Run the bridge server:

python claude_bridge.py

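- Configure Claude Code to use your local endpoint by setting its base-URL environment variable (Claude Code reads ANTHROPIC_BASE_URL; check the repository's README for the exact path the bridge expects):

export ANTHROPIC_BASE_URL="http://localhost:8000"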

- Launch Claude Code normally:

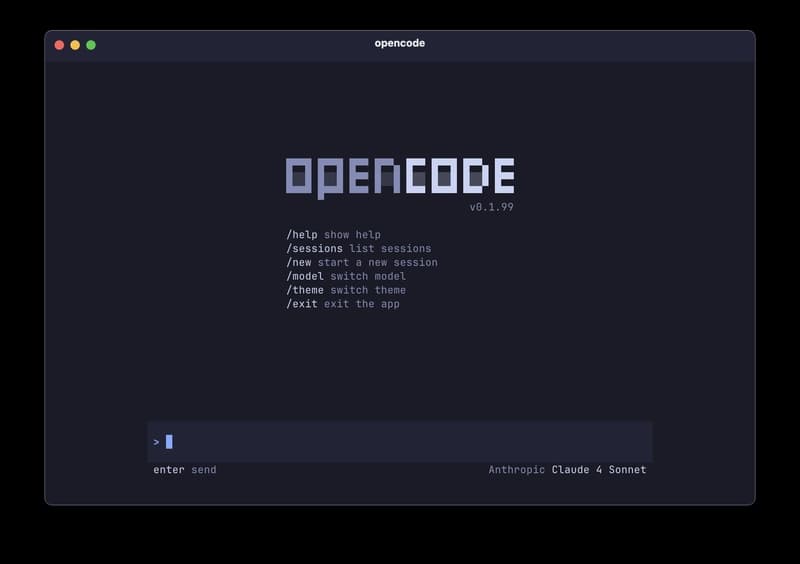

claude
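The config.yaml keys from the steps above correspond closely to llama.cpp server flags. The article does not say how the bridge starts llama.cpp, so the following is only a sketch under the assumption that you run llama-server yourself; the helper name and the port are illustrative.

```python
import shlex
from typing import List


def llama_server_command(config: dict, port: int = 8080) -> List[str]:
    """Build an argv list for llama-server from config.yaml-style keys."""
    return [
        "llama-server",
        "-m", str(config["model_path"]),             # path to the GGUF file
        "-ngl", str(config.get("n_gpu_layers", 0)),  # layers offloaded to the GPU
        "-c", str(config.get("context_size", 4096)), # context window in tokens
        "--port", str(port),
    ]


config = {
    "model_path": "/path/to/your/model.gguf",
    "n_gpu_layers": 20,
    "context_size": 16384,
}
print(shlex.join(llama_server_command(config)))
# llama-server -m /path/to/your/model.gguf -ngl 20 -c 16384 --port 8080
```

Returning a list rather than a single string lets you pass the result straight to subprocess.run without shell quoting issues.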

Performance Considerations:

- Expect slower response times compared to cloud Claude, especially on CPU-only systems

- Memory usage is far higher than with the cloud service, because the model weights and context cache must fit in your RAM/VRAM

- Quality may vary depending on the local model you choose
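To get a rough feel for the memory point, you can estimate the footprint as the size of the model file plus the key-value cache for your chosen context size. The sketch below uses the standard cache-size formula for transformer attention. The layer and head counts are hypothetical, not taken from any particular model, and the result ignores compute buffers and other overhead.

```python
def kv_cache_bytes(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    context_size: int,
    bytes_per_value: int = 2,  # 2 bytes per value for a 16-bit cache
) -> int:
    """Approximate KV cache size; keys and values are both stored (factor 2)."""
    return 2 * n_layers * n_kv_heads * head_dim * context_size * bytes_per_value


def estimated_memory_gib(model_file_bytes: int, kv_bytes: int) -> float:
    """Rough lower bound on memory use: weights plus KV cache, in GiB."""
    return (model_file_bytes + kv_bytes) / 2**30


# Hypothetical model: 32 layers, 32 KV heads of dimension 128, 16384-token context.
kv = kv_cache_bytes(n_layers=32, n_kv_heads=32, head_dim=128, context_size=16384)
print(kv / 2**30)                         # 8.0
print(estimated_memory_gib(4 * 2**30, kv))  # 12.0
```

Models that use grouped-query attention have fewer KV heads, so their cache is proportionally smaller at the same context size.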

When To Use Local vs. Cloud Claude Code

Use Local When:

- Working with sensitive/proprietary code

- You have limited or no internet access

- You want to avoid API costs for simple tasks

- You need reproducible outputs from a pinned model file, a fixed seed, and temperature 0
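Running locally does not make outputs reproducible by itself; the sampling settings have to be pinned as well. As a sketch, assuming the bridge forwards requests to an OpenAI-compatible endpoint that accepts temperature and seed fields (llama.cpp's server does), a hypothetical helper could force them on every request:

```python
def reproducible_sampling(request: dict, seed: int = 42) -> dict:
    """Return a copy of the request with sampling pinned for repeatability."""
    pinned = dict(request)  # shallow copy so the caller's dict is untouched
    pinned.update({"temperature": 0.0, "seed": seed})
    return pinned


request = {"messages": [{"role": "user", "content": "Refactor this function"}],
           "temperature": 0.8}
print(reproducible_sampling(request)["temperature"])  # 0.0
```

Even with these settings, results can differ between hardware backends or llama.cpp builds, so pin those too if exact repeatability matters.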

Stick with Cloud When:

- You need Claude Opus 4.6's superior reasoning for complex problems

- Speed is critical for your workflow

- You're using memory-intensive features that benefit from Anthropic's infrastructure

- You want access to the latest model updates immediately

Current Limitations and Future Potential

The script currently has some limitations:

- Not all Claude Code features may work perfectly with local models

- Memory management and context handling might differ from cloud behavior

- You'll need to manually update the bridge when Claude Code's API changes

However, this represents a significant step toward making Claude Code more accessible and flexible. As local LLMs continue to improve in coding capability, this approach could become increasingly viable for day-to-day development work.