Key Takeaways

- A developer used an AI agent powered by Claude Code and the Model Context Protocol (MCP) to diagnose a severe GPU performance bottleneck.

- The agent analyzed system kernel traces and pinpointed excessive CPU context switches as the culprit, a practical application of agentic AI to complex technical debugging.

What Happened

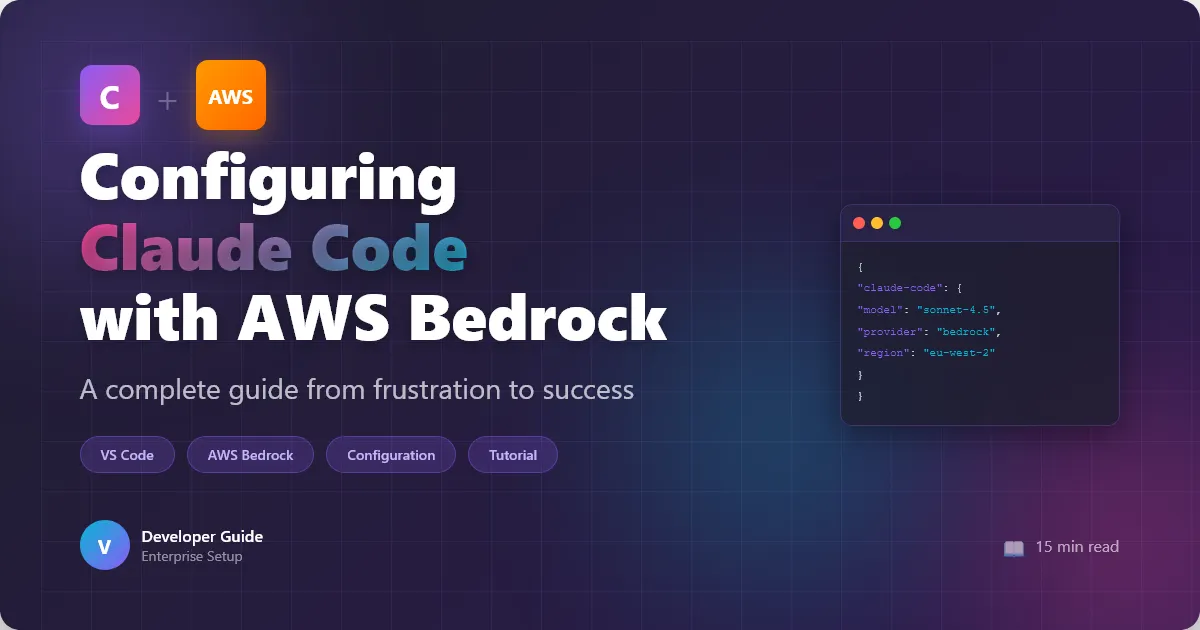

A developer documented a case in which an AI agent successfully debugged a critical performance issue in a PyTorch machine learning pipeline. The agent was tasked with finding out why a DataLoader was running 114 times slower than expected. It ran in Claude Code, Anthropic's agentic coding tool, and the Model Context Protocol (MCP) gave it access to system-level diagnostic tools.

The agent's primary action was to read and interpret kernel traces, the low-level system performance records that log CPU and GPU activity. From this analysis, it determined that the GPU was being starved by an excessive number of CPU context switches (3,676 of them) and identified this as the root cause of the bottleneck. The entire diagnosis, from problem statement to root cause, took under 30 minutes.
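
To make the context-switch measurement concrete: on Linux, scheduler switches can be recorded with `perf record -e sched:sched_switch` and dumped as text with `perf script`. The sketch below counts switch-outs per process from that text. It assumes each event line carries the `sched_switch` tracepoint fields `prev_comm=` and `prev_pid=`; exact line layout varies across kernel and perf versions, and the sample lines are illustrative, not taken from the original trace.

```python
import re
from collections import Counter
from typing import Iterable

# Pulls prev_comm and prev_pid out of a sched_switch event line.
# Assumes the task name contains no whitespace, which holds for typical names.
SWITCH_RE = re.compile(r"sched_switch:.*?prev_comm=(\S+) prev_pid=(\d+)")


def count_switches(lines: Iterable[str]) -> Counter:
    """Count how many times each (comm, pid) was switched off a CPU."""
    counts: Counter = Counter()
    for line in lines:
        m = SWITCH_RE.search(line)
        if m:
            counts[(m.group(1), int(m.group(2)))] += 1
    return counts


# Illustrative lines in the assumed `perf script` layout (not real trace data).
SAMPLE = """\
python 4242 [003] 100.000100: sched:sched_switch: prev_comm=python prev_pid=4242 prev_prio=120 prev_state=S ==> next_comm=swapper/3 next_pid=0 next_prio=120
python 4243 [001] 100.000200: sched:sched_switch: prev_comm=python prev_pid=4243 prev_prio=120 prev_state=S ==> next_comm=python next_pid=4242 next_prio=120
python 4242 [001] 100.000300: sched:sched_switch: prev_comm=python prev_pid=4242 prev_prio=120 prev_state=R ==> next_comm=kworker/1:0 next_pid=17 next_prio=120
"""

if __name__ == "__main__":
    for (comm, pid), n in count_switches(SAMPLE.splitlines()).most_common():
        print(f"{comm} pid={pid}: {n} switches")
```

In the `prev_state` field, `S` (sleeping) generally marks a voluntary switch, such as a worker blocking on I/O or a lock, while `R` means the task was preempted while still runnable; splitting the counts by that field would be a natural refinement.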

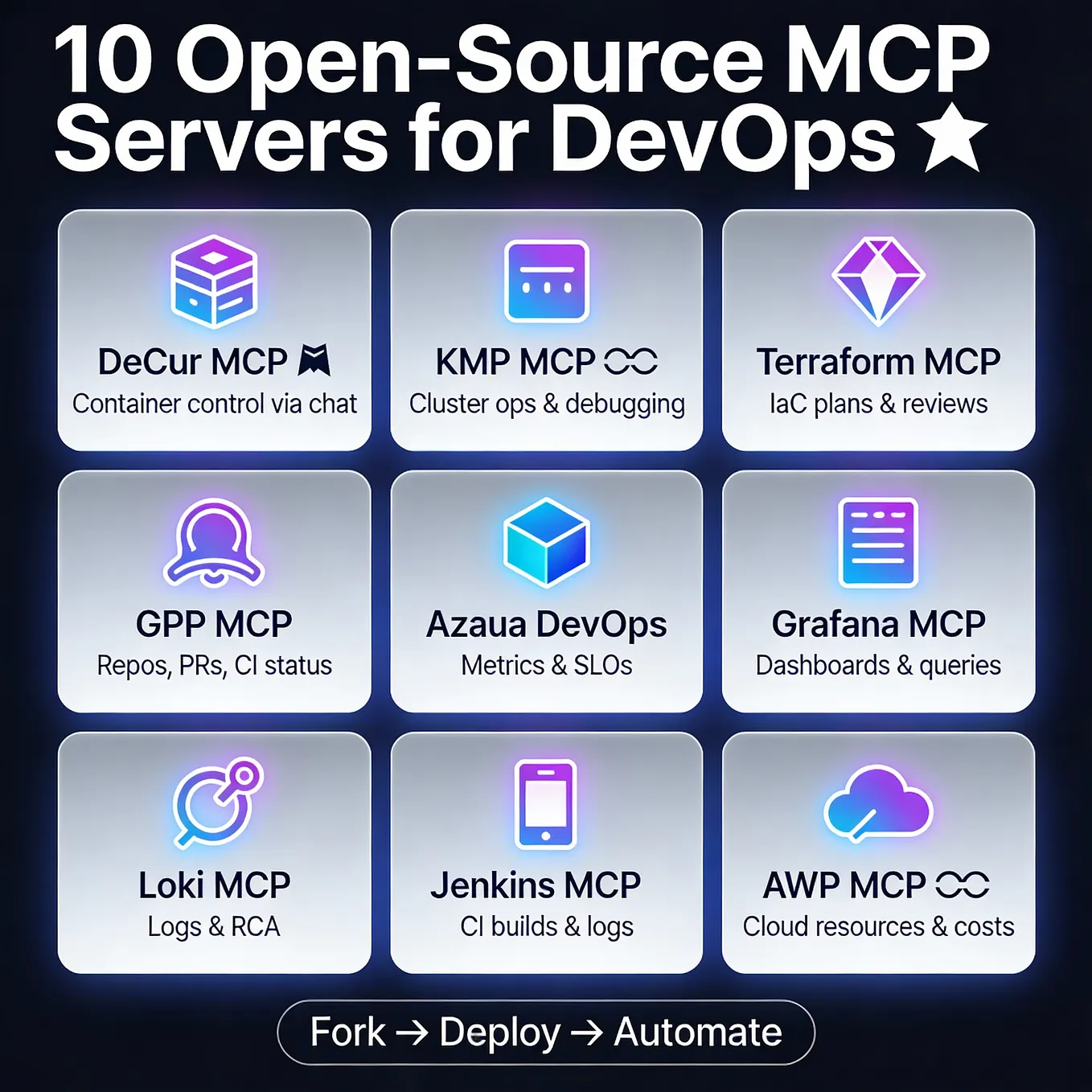

Technical Details: MCP and Agentic Tool Use

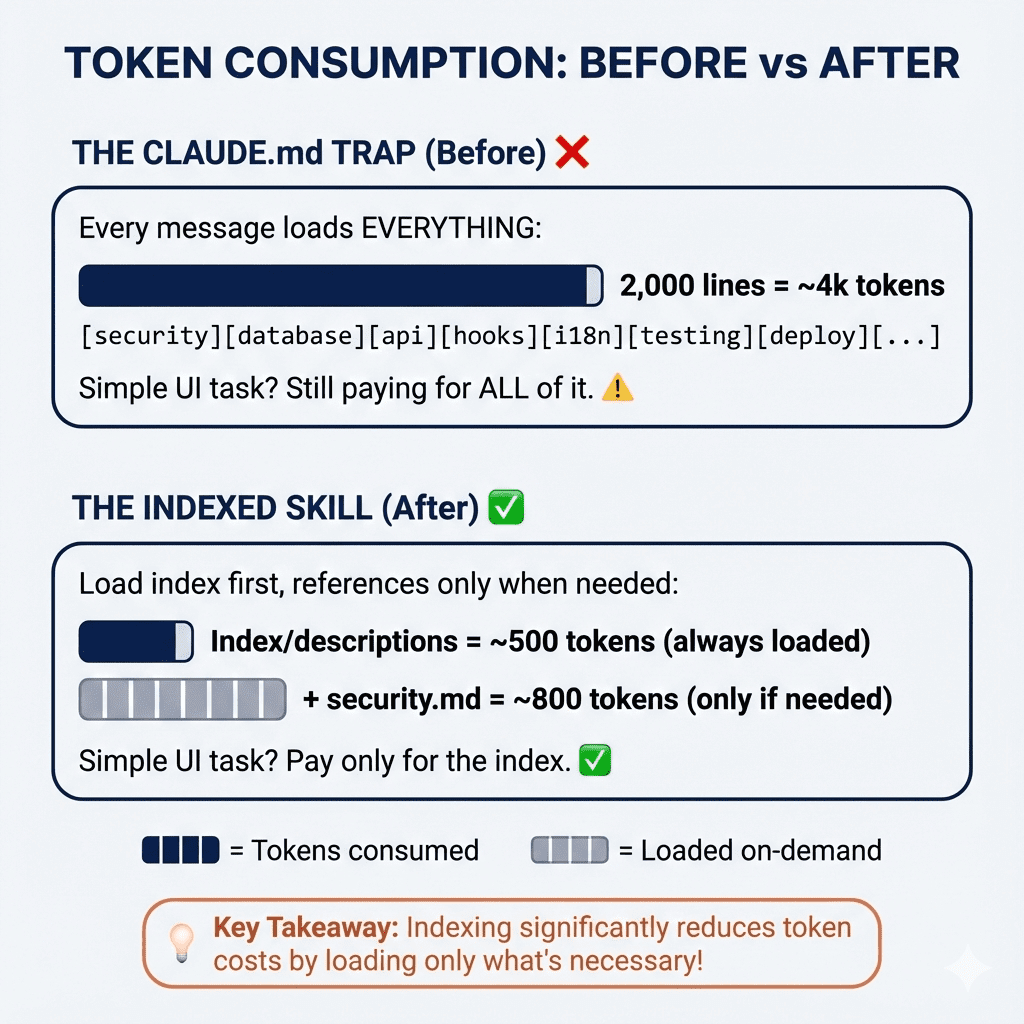

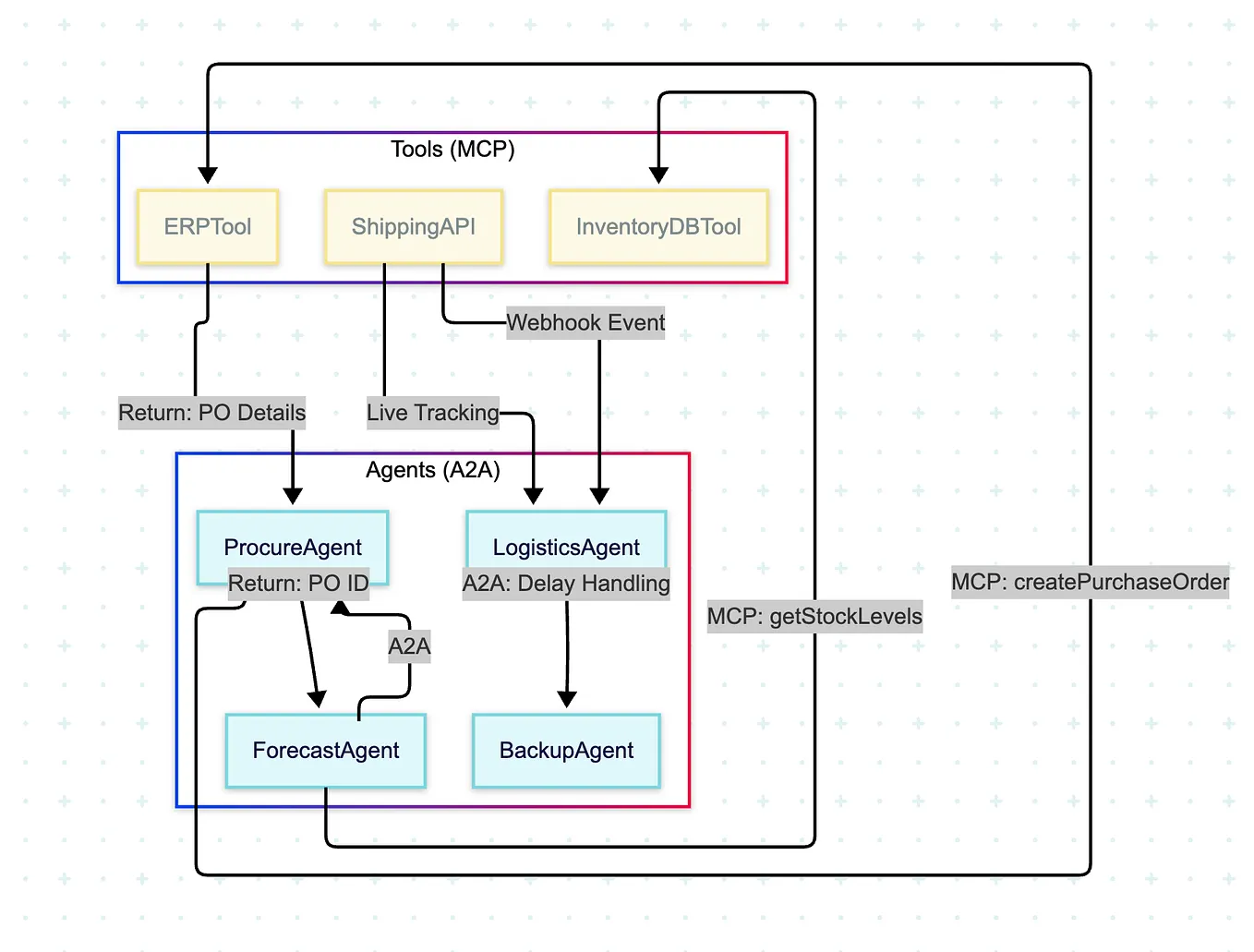

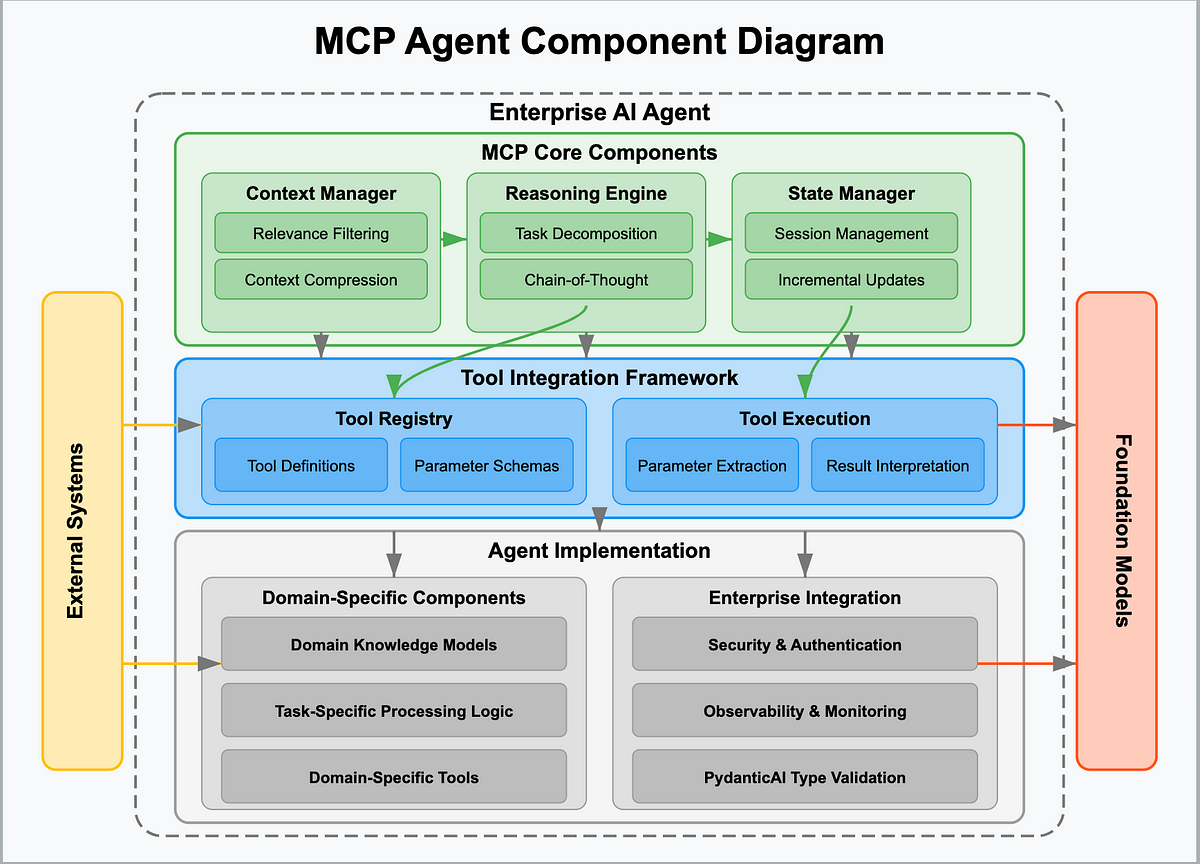

The breakthrough here is less about a novel algorithm and more about the orchestration of existing tools by an AI agent. The key enabling technology is Anthropic's Model Context Protocol (MCP), an open standard that allows AI models to securely connect to and interact with external data sources and tools. In this instance, MCP was used to give the Claude-powered agent access to the perf subsystem and kernel trace files on the developer's machine.
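
MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The toy, in-process handler below shows the shape of that exchange. The tool name `summarize_sched_trace` and its `path` argument are hypothetical, and a real server would normally be built with an MCP SDK and communicate over stdio or HTTP rather than through a direct function call.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical tool registry: tool name -> Python callable returning text.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str) -> Callable[[Callable[..., str]], Callable[..., str]]:
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("summarize_sched_trace")
def summarize_sched_trace(path: str) -> str:
    # Placeholder: a real tool might run `perf script -i <path>` and summarize it.
    return f"would summarize context switches in {path}"


def _error(req_id: Any, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request; only `tools/call` is supported here."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        return _error(req.get("id"), -32601, "Method not found")
    params = req.get("params", {})
    fn = TOOLS.get(params.get("name"))
    if fn is None:
        return _error(req.get("id"), -32602, f"Unknown tool: {params.get('name')}")
    try:
        text = fn(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    except Exception as exc:  # tool failures are reported inside the result
        result = {"content": [{"type": "text", "text": str(exc)}], "isError": True}
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "result": result})


REQUEST = json.dumps({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "summarize_sched_trace", "arguments": {"path": "perf.data"}},
})

if __name__ == "__main__":
    print(handle(REQUEST))
```

The split between protocol errors (an unknown tool yields a JSON-RPC `error`) and tool failures (reported as a normal `result` with `isError: true`) lets the model see and react to a failed tool run instead of the request simply breaking.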

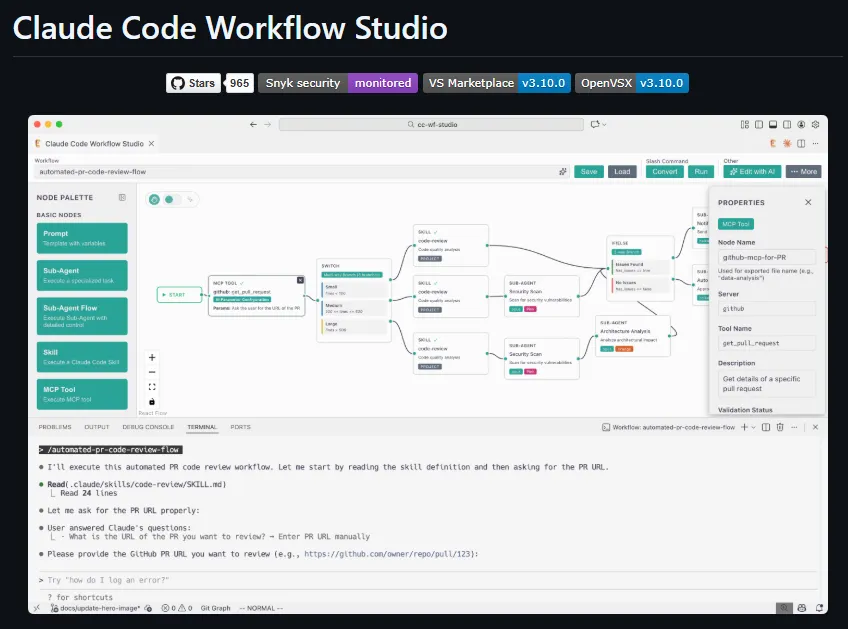

This represents a shift from AI as a conversational assistant to AI as an autonomous investigative agent. The agent didn't just suggest commands; it executed a diagnostic workflow:

- Perception: It accessed and read the complex, structured data from kernel traces.

- Reasoning: It analyzed the data to identify abnormal patterns (the spike in context switches).

- Conclusion: It synthesized the finding into a coherent, actionable root cause.
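
The reasoning step, spotting a spike in context switches, can be approximated with a simple statistical rule. The sketch below takes per-window switch counts and flags windows far above the median, using a median-absolute-deviation (MAD) threshold. This is a heuristic chosen for illustration; the source does not describe how Claude judged the count to be abnormal, and the example counts are made up.

```python
from statistics import median
from typing import List, Sequence


def flag_spikes(counts: Sequence[float], k: float = 3.5) -> List[int]:
    """Return indices of windows whose count is a robust outlier above the median."""
    med = median(counts)
    mad = median(abs(c - med) for c in counts)
    if mad == 0:
        # Typical windows are identical; flag anything strictly above them.
        return [i for i, c in enumerate(counts) if c > med]
    scale = 1.4826 * mad  # makes MAD comparable to a standard deviation for normal data
    return [i for i, c in enumerate(counts) if (c - med) / scale > k]


if __name__ == "__main__":
    # Hypothetical context-switch counts per one-second window.
    per_window = [40, 38, 45, 41, 39, 1200, 44, 42]
    print(flag_spikes(per_window))
```

The median and MAD are used instead of the mean and standard deviation because a single large spike inflates the standard deviation enough to partly hide itself.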

The agent used Claude Opus 4.6, Anthropic's most capable model for complex reasoning, which has been central to the company's recent push into agentic systems. This fits an industry-wide trend, tracked in our Knowledge Graph, in which 2026 has been predicted to be a breakthrough year for AI agents crossing critical reliability thresholds.

Retail & Luxury Implications

While the source example is from general ML ops, the implications for retail and luxury AI teams are significant and direct. The core value proposition is dramatically accelerated time-to-insight for complex system performance issues, which are endemic in data-heavy retail applications.

Potential Applications:

AI Model Training & Fine-Tuning: Luxury houses training large vision models (for product recognition, virtual try-on, or trend forecasting) or LLMs (for customer service and copywriting) often face opaque training bottlenecks. An agent like this could diagnose why a training job on a costly GPU cluster is underperforming, saving days of engineer time and thousands in compute costs.
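
For the training-job scenario, a first check that needs no kernel tracing is to split each iteration's time into waiting on the data loader versus running the step. A loop that spends most of its time waiting is input-bound, the "starved GPU" symptom from the case above. The sketch uses only the standard library; `slow_batches` and `fake_step` are hypothetical stand-ins for a real DataLoader and training step.

```python
import time
from typing import Callable, Iterable, Tuple


def profile_loop(batches: Iterable, step: Callable[[object], None]) -> Tuple[float, float]:
    """Return (seconds spent waiting for batches, seconds spent in step)."""
    wait_s = 0.0
    compute_s = 0.0
    it = iter(batches)
    while True:
        t0 = time.perf_counter()
        try:
            batch = next(it)  # time blocked on the loader
        except StopIteration:
            break
        t1 = time.perf_counter()
        step(batch)  # time spent on the training step
        t2 = time.perf_counter()
        wait_s += t1 - t0
        compute_s += t2 - t1
    return wait_s, compute_s


def slow_batches(n: int, delay_s: float):
    # Hypothetical loader that produces batches slower than they are consumed.
    for i in range(n):
        time.sleep(delay_s)
        yield i


def fake_step(batch: object) -> None:
    # Hypothetical training step standing in for GPU compute.
    time.sleep(0.001)


if __name__ == "__main__":
    wait, compute = profile_loop(slow_batches(20, 0.01), fake_step)
    print(f"data wait: {wait / (wait + compute):.0%} of loop time")
```

On a real GPU, CUDA kernels launch asynchronously, so timing the step this way would need a device synchronization (for example `torch.cuda.synchronize()`) before reading the clock to be meaningful.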

Real-Time Recommendation Systems: High-traffic e-commerce platforms rely on low-latency inference from models powering recommendations and search. If performance degrades, an AI debugging agent could analyze traces from production servers (in a sandboxed environment) to identify issues like memory leaks, inefficient batching, or data pipeline stalls faster than a human SRE team.
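
For latency regressions like these, the usual first signal is a tail percentile rather than the mean. The sketch below compares p99 latency between a baseline sample and a current sample using the standard library's `statistics.quantiles`; the 20% tolerance and the sample values are assumptions for illustration.

```python
from statistics import quantiles
from typing import Sequence


def p99(samples_ms: Sequence[float]) -> float:
    # n=100 yields 99 cut points; the last is the 99th percentile.
    # "inclusive" keeps the estimate within the observed min/max.
    return quantiles(samples_ms, n=100, method="inclusive")[-1]


def tail_regressed(baseline_ms: Sequence[float], current_ms: Sequence[float],
                   tolerance: float = 0.20) -> bool:
    """True if current p99 exceeds baseline p99 by more than `tolerance` (a fraction)."""
    return p99(current_ms) > p99(baseline_ms) * (1.0 + tolerance)


if __name__ == "__main__":
    # Hypothetical request latencies in milliseconds.
    baseline = [10.0] * 99 + [20.0]
    current = [10.0] * 95 + [80.0] * 5
    print(p99(baseline), p99(current), tail_regressed(baseline, current))
```

Here the mean latency barely moves between the two samples, while the p99 jumps, which is why tail metrics tend to catch batching or pipeline stalls earlier.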

Supply Chain & Demand Forecasting Pipelines: Batch processing jobs for inventory optimization or sales forecasting can become slow without clear reason. An agent equipped with MCP tools could audit the entire data pipeline—from database queries to feature computation to model inference—to find the critical path blockage.

The Gap to Production: The demonstrated use case is a controlled, single-user debugging scenario. For enterprise retail adoption, significant work is needed on security, governance, and scalability. Granting an AI agent access to kernel-level traces or production logs requires robust sandboxing and strict permission protocols. The technology is proven in concept but requires mature MLOps integration to be deployed safely in a corporate environment.
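
One simple form of strict permission protocol is an exact-match allowlist checked before any agent-requested command runs. The sketch below is an assumption for illustration, not how Claude Code's own permission settings or MCP work; the allowed commands and the `check_command`/`run_checked` helpers are hypothetical, and a production setup would also need OS-level isolation such as containers.

```python
import shlex
import subprocess
from typing import FrozenSet, List, Tuple

# Hypothetical policy: the only exact argv vectors the agent may execute.
ALLOWED_COMMANDS: FrozenSet[Tuple[str, ...]] = frozenset({
    ("perf", "script"),             # dump ./perf.data as text
    ("perf", "report", "--stdio"),  # non-interactive summary of ./perf.data
    ("nvidia-smi",),                # GPU utilization snapshot
})


def check_command(command: str) -> List[str]:
    """Tokenize without a shell and allow only exact matches; raise otherwise."""
    try:
        argv = shlex.split(command)
    except ValueError as exc:  # e.g. unbalanced quotes
        raise PermissionError(f"unparseable command: {exc}") from exc
    if tuple(argv) not in ALLOWED_COMMANDS:
        raise PermissionError(f"command not on allowlist: {argv}")
    return argv


def run_checked(command: str, timeout_s: float = 60.0) -> str:
    argv = check_command(command)
    # Passing a list without shell=True means metacharacters are never interpreted.
    done = subprocess.run(argv, capture_output=True, text=True,
                          timeout=timeout_s, check=False)
    return done.stdout
```

Exact matching is deliberately stricter than allowing a subcommand with arbitrary flags: `perf script -s hook.py`, for instance, would execute a user-supplied script, so `check_command("perf script -s hook.py")` raises `PermissionError` here.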

gentic.news Analysis

This case study is a concrete validation of the agentic AI trend that has dominated our coverage. It connects several key entities in our Knowledge Graph: Claude Code (the acting product), the Model Context Protocol (the enabling framework), and Claude Opus 4.6 (the reasoning engine). Claude Code has appeared in 69 articles this week alone, and its share has nearly tripled recently, underscoring its rapid rise as a core tool in developers' AI stacks. That growth is likely fueled by practical demonstrations like this one, which move beyond hype to tangible productivity gains.

The development also exists in a competitive landscape. Cursor is noted as a direct competitor to Claude Code, and our related coverage shows OpenAI Codex is rapidly adding agentic capabilities like screen control and long-running agents. This debugging use case represents a battleground: whichever platform most reliably automates complex, time-consuming developer workflows will capture market share. The outage experienced by Claude Code in mid-April 2026 highlights that reliability remains a critical factor for adoption.

For retail AI leaders, the takeaway is that agentic debugging is moving from research to viable prototype. The prerequisite is having ML pipelines worth optimizing, which many top luxury brands do. The logical next step is to pilot such an agent in a non-critical environment, for example analyzing performance logs from a staging server for a computer vision model. Success there could pave the way for embedding this capability into the MLOps lifecycle, creating a more resilient and efficient AI infrastructure, a key advantage when competing on personalization and customer experience.