Andrej Karpathy, known for his work at Tesla and OpenAI, has open-sourced "autoresearch," a minimalist Python tool that lets AI agents run machine learning experiments autonomously. The 630-line framework targets iterative optimization on a single GPU, which makes it especially relevant to researchers working with limited computational resources.

The Autoresearch Framework: Simplicity Meets Power

Autoresearch is built around a stripped-down version of nanochat, Karpathy's minimal large language model training framework, condensed into a single-file repository optimized for execution on a single NVIDIA GPU. The core architecture is simple: humans refine a high-level prompt in a Markdown file (program.md), while an AI agent, powered by an external LLM such as Claude or Codex, autonomously edits the training script (train.py) to experiment with improvements.

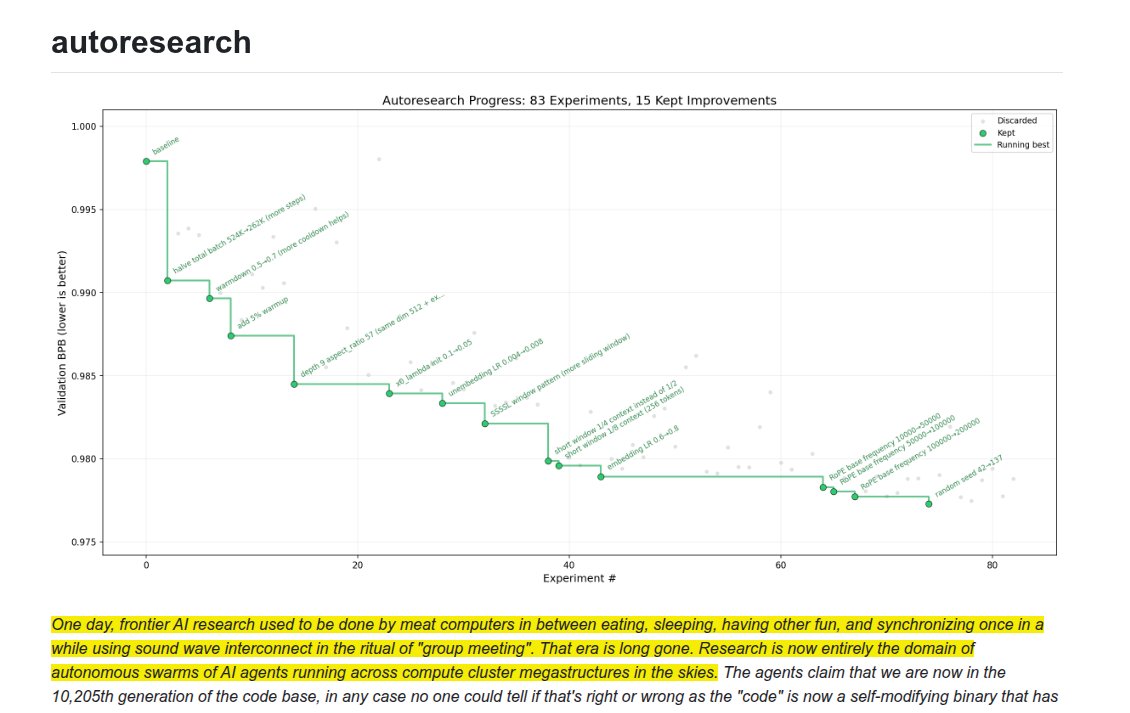

The system operates on a clear objective: achieve the lowest possible validation bits per byte (val_bpb) in fixed 5-minute training runs. This constraint simulates rapid, iterative research cycles that mirror real-world experimentation while maintaining computational feasibility for individual researchers and small teams.
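To make the metric concrete, here is a minimal sketch of how bits per byte is conventionally computed: the summed cross-entropy over the validation text, converted from nats to bits, divided by the number of raw bytes in that text. The function name and arguments are illustrative assumptions, not taken from the autoresearch code.

```python
import math


def bits_per_byte(total_nll_nats: float, total_bytes: int) -> float:
    """Convert a summed negative log-likelihood (in nats) to bits per byte.

    total_nll_nats: cross-entropy summed over every predicted token.
    total_bytes:    number of raw bytes in the evaluated text.
    Both names are hypothetical, chosen for this illustration.
    """
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    # Dividing by ln(2) converts nats to bits.
    return total_nll_nats / (math.log(2) * total_bytes)


# Sanity check: a model that spreads probability uniformly over all 256
# byte values pays ln(256) nats per byte, which is 8 bits per byte.
n_bytes = 1000
uniform_nll = n_bytes * math.log(256)
print(round(bits_per_byte(uniform_nll, n_bytes), 6))
```

Because the denominator counts bytes rather than tokens, the score stays comparable across runs even if the agent changes the tokenizer; lower is better.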

How Autonomous Iteration Works

The autonomous iteration process transforms traditional ML experimentation into an agentic loop. The AI agent proposes code changes based on the human-provided prompt, runs experiments, evaluates results, and iteratively refines its approach. This creates a feedback loop where the system learns from each iteration, gradually optimizing hyperparameters and architectural elements without continuous human intervention.
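The loop described above can be sketched as a simple propose, run, evaluate, keep-if-better cycle. This is a toy illustration under stated assumptions, not the autoresearch implementation: `research_loop`, `toy_evaluate`, and `make_toy_propose` are hypothetical names, the "agent" is a random perturbation of a learning rate rather than an LLM editing train.py, and the score is a made-up function standing in for a real training run.

```python
import math
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]
History = List[Tuple[Config, float]]


def research_loop(
    initial: Config,
    propose: Callable[[Config, History], Config],
    evaluate: Callable[[Config], float],
    n_iterations: int,
) -> Tuple[Config, float, History]:
    """Greedy loop: keep a candidate only if it lowers the score."""
    best = dict(initial)
    best_score = evaluate(best)
    history: History = [(best, best_score)]
    for _ in range(n_iterations):
        candidate = propose(best, history)   # agent suggests a change
        score = evaluate(candidate)          # run the experiment
        history.append((candidate, score))   # record the outcome
        if score < best_score:               # lower is better
            best, best_score = candidate, score
    return best, best_score, history


def toy_evaluate(config: Config) -> float:
    # Hypothetical stand-in for a fixed-budget training run that reports
    # a val_bpb-like score; the minimum sits at lr = 1e-3.
    return 1.0 + (math.log10(config["lr"]) + 3.0) ** 2


def make_toy_propose(seed: int) -> Callable[[Config, History], Config]:
    # Hypothetical stand-in for the LLM agent: it rescales the learning
    # rate by a random factor and ignores the history.
    rng = random.Random(seed)

    def propose(best: Config, history: History) -> Config:
        candidate = dict(best)
        candidate["lr"] = best["lr"] * rng.uniform(0.5, 2.0)
        return candidate

    return propose


best, best_score, history = research_loop(
    {"lr": 0.1}, make_toy_propose(0), toy_evaluate, n_iterations=20
)
print(f"runs: {len(history)}, best lr: {best['lr']:.5f}, score: {best_score:.4f}")
```

In a real setup, the proposal step would be an LLM reading the prompt and prior results, and the evaluation step would be an actual training run; the keep-if-better rule is only one plausible acceptance policy.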

What makes autoresearch particularly noteworthy is its accessibility. By optimizing for single-GPU execution, Karpathy has effectively democratized autonomous ML experimentation. Researchers without access to massive computational clusters can now leverage agentic AI systems to accelerate their work, potentially leveling the playing field in AI research.

Context in the Evolving AI Landscape

This release comes at a pivotal moment in AI development. Recent events indicate that autonomous AI agents crossed a critical reliability threshold in late 2026, one that fundamentally changed their programming capabilities. At the same time, NVIDIA, whose hardware underpins autoresearch's single-GPU optimization, has reportedly been developing new hybrid AI chips that combine NVIDIA GPU and Groq hardware technology, and has introduced Nemotron-Terminal, a data engineering pipeline for scaling terminal-based LLM agents.

The convergence of these developments suggests a broader trend toward more accessible, agent-driven research tools. AI agents are already being positioned to automate complex processes in areas such as corporate finance, and tools like autoresearch extend that automation to the research process itself.

Implications for Research Methodology

Autoresearch represents more than just another open-source tool; it embodies a philosophical shift in how we approach machine learning research. By externalizing the iterative experimentation process to AI agents, researchers can focus more on high-level problem formulation and creative direction rather than getting bogged down in repetitive optimization tasks.

This approach could accelerate research cycles dramatically. Instead of manually testing dozens of hyperparameter combinations, researchers can define their objectives and constraints, then let the agent explore the solution space autonomously. The 5-minute training run constraint ensures this exploration remains computationally tractable while still yielding meaningful insights.
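As a rough back-of-the-envelope illustration of why the fixed run length keeps exploration tractable, the sketch below counts how many runs fit in a given time budget. The helper name and the per-run overhead parameter are assumptions made for illustration; the source specifies only the 5-minute training window.

```python
def max_runs(
    budget_hours: float,
    run_minutes: float = 5.0,
    overhead_minutes: float = 0.0,
) -> int:
    """Number of complete runs that fit in the budget.

    overhead_minutes is a hypothetical allowance for anything outside
    the training window itself (agent thinking time, evaluation, setup).
    """
    per_run = run_minutes + overhead_minutes
    if per_run <= 0:
        raise ValueError("per-run time must be positive")
    return int(budget_hours * 60 // per_run)


print(max_runs(1))                        # 12 runs in one hour
print(max_runs(8))                        # 96 runs in eight hours
print(max_runs(8, overhead_minutes=1.0))  # 80 runs with 1 minute overhead
```

Even with some overhead per run, a single GPU left running for several hours can work through dozens of candidate changes, which is the scale of manual hyperparameter testing the paragraph above describes.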

Future Directions and Community Impact

As an open-source project, autoresearch invites community contributions and adaptations. Researchers might extend the framework to different problem domains beyond language model training, apply it to other types of neural architectures, or integrate it with more sophisticated agentic systems.

The timing of this release is particularly significant given the broader industry context. With AI agents demonstrating increasing reliability and NVIDIA continuing to innovate at the hardware level, tools like autoresearch could catalyze a new wave of decentralized AI research conducted by smaller teams and individual researchers rather than exclusively by well-funded corporate labs.

Conclusion: A Step Toward Democratized AI Research

Andrej Karpathy's autoresearch is a thoughtful contribution to the AI research ecosystem, one that prioritizes accessibility, simplicity, and practical utility. By condensing autonomous ML experimentation into 630 lines of Python code optimized for single GPUs, Karpathy has given researchers a template for agentic research that balances sophistication with approachability.

As the AI field continues to evolve at breakneck speed, tools that democratize access to advanced research methodologies will become increasingly valuable. Autoresearch offers a glimpse into a future where AI doesn't just solve problems but actively participates in the research process itself, potentially accelerating innovation across the entire field.

Source: Based on Andrej Karpathy's open-source release of autoresearch as reported by MarkTechPost and additional technical analysis.