What Happened

The source article presents a technical comparison between two frameworks for orchestrating AI agents: LangGraph and Temporal. The core thesis is that building reliable, long-running AI agent workflows requires moving beyond simple for loops to a durable execution architecture. This architecture ensures that complex, multi-step LLM-powered processes can handle failures, persist state, and resume execution without losing progress—a critical requirement for production systems.

The article positions LangGraph as a framework focused on planning and orchestrating the logical flow of an AI agent, using a graph-based structure to define nodes (tasks) and edges (conditional pathways). It comes from the LangChain team and integrates closely with that ecosystem, and it is designed for defining the agent's reasoning and decision-making process.

In contrast, Temporal is presented as a general-purpose workflow orchestration platform that excels at execution durability. It provides features like checkpointing, state persistence, retries, and webhook integrations. Its strength is in guaranteeing that a defined workflow runs to completion, even across server restarts or infrastructure failures.

The key insight is that these tools can be complementary. LangGraph can define the agent's "brain"—the plan—while Temporal can be the "central nervous system" that reliably executes that plan at scale, especially for pipelines involving thousands of items (e.g., "10,000-item LLM pipelines").

Technical Details

The Problem: Beyond For Loops

Simple scripting or looping over a task list is insufficient for production AI agents. Agents performing long-horizon tasks—like processing a massive product catalog, conducting multi-turn customer service dialogues, or running a multi-day competitive analysis—need:

- State Persistence: The ability to save progress and resume from a checkpoint.

- Fault Tolerance: Automatic retries and recovery from LLM API failures, network timeouts, or code bugs.

- Observability: Detailed logs and tracing to understand the agent's decision path.

- Scalability: Distributing work across workers and handling concurrent executions.
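
The first two requirements can be illustrated without any framework. What follows is a minimal sketch, assuming a hypothetical `process_item` step and a local JSON file as the checkpoint store. It is a hand-rolled stand-in for what a durable execution engine provides, not the API of LangGraph or Temporal.

```python
import json
import os
import time


def run_with_checkpoint(items, process_item, checkpoint_path,
                        max_attempts=3, backoff_s=0.0):
    """Process items in order, saving progress after each one so a rerun resumes."""
    # State persistence: load results saved by a previous run, if any.
    results = {}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            results = json.load(f)

    for item in items:
        key = str(item)
        if key in results:
            continue  # completed in an earlier run, so skip it

        # Fault tolerance: retry the step a bounded number of times.
        for attempt in range(1, max_attempts + 1):
            try:
                results[key] = process_item(item)
                break
            except Exception:
                if attempt == max_attempts:
                    raise  # give up; earlier progress is already on disk
                time.sleep(backoff_s * 2 ** (attempt - 1))  # exponential backoff

        # Write to a temp file and rename, so a crash mid-write
        # cannot leave a half-written checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(results, f)
        os.replace(tmp_path, checkpoint_path)

    return results
```

Calling `run_with_checkpoint` a second time with the same `checkpoint_path` skips every item that already has a saved result. A plain for loop would start again from the first item.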

LangGraph: The Planning Layer

LangGraph models an agent's workflow as a stateful graph. Developers define:

- Nodes: Functions that perform a step (e.g., "call search API," "generate draft response," "validate output").

- Edges: Rules that determine which node to execute next based on the current state.

- State: A shared data structure passed between nodes, updated at each step.

It is excellent for encoding complex reasoning, loops, and conditional branches within a single agent's execution. LangGraph does offer checkpointers that persist graph state between steps, but operating many such graphs durably across a distributed worker fleet over long periods is not its primary design goal.
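
To make the node, edge, and state vocabulary concrete, here is a library-free sketch of a stateful graph with one conditional loop. It deliberately does not use LangGraph's API: `run_graph`, the `END` sentinel, the node names, and the routing rule are all hypothetical, and the "LLM" is a stub that appends characters.

```python
from typing import Callable, Dict, List, Tuple, TypedDict

END = "__end__"  # hypothetical sentinel meaning "stop the graph"


class AgentState(TypedDict):
    question: str
    draft: str
    attempts: int


def generate_draft(state: AgentState) -> dict:
    # Stand-in for an LLM call: each attempt appends one more "!".
    attempts = state["attempts"] + 1
    draft = f"answer to {state['question']!r}" + "!" * attempts
    return {"draft": draft, "attempts": attempts}


def validate_output(state: AgentState) -> dict:
    return {}  # a real validator might attach scores or error messages


def route_after_validate(state: AgentState) -> str:
    # Conditional edge: loop back until the draft passes or attempts run out.
    if state["draft"].endswith("!!") or state["attempts"] >= 3:
        return END
    return "generate_draft"


# Nodes return partial state updates; edges pick the next node from the state.
NODES: Dict[str, Callable[[AgentState], dict]] = {
    "generate_draft": generate_draft,
    "validate_output": validate_output,
}
EDGES: Dict[str, Callable[[AgentState], str]] = {
    "generate_draft": lambda state: "validate_output",
    "validate_output": route_after_validate,
}


def run_graph(state: AgentState, entry: str = "generate_draft",
              max_steps: int = 20) -> Tuple[AgentState, List[str]]:
    """Walk the graph, merging each node's update into the shared state."""
    node, trace = entry, []
    for _ in range(max_steps):  # guard against accidental infinite loops
        if node == END:
            break
        trace.append(node)
        state = {**state, **NODES[node](state)}
        node = EDGES[node](state)
    return state, trace
```

Running `run_graph({"question": "q", "draft": "", "attempts": 0})` visits `generate_draft` and `validate_output` twice each, because the first draft fails the routing rule and the second passes.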

Temporal: The Durable Execution Engine

Temporal guarantees workflow durability. Its core abstraction is the Workflow, a function whose execution is automatically persisted by the Temporal system. Key features include:

- Automatic State Persistence: Temporal records each completed workflow step in an event history, so progress is saved without explicit checkpoint code from the developer.

- Deterministic Execution: Workflow code must be deterministic so that, after a crash or restart, Temporal can replay the recorded history and rebuild exactly the same state before continuing.

- Activity Orchestration: Long-running or non-deterministic tasks (like calling an LLM API) are modeled as "Activities," which Temporal schedules and retries.

- Scalable & Multi-Language: Temporal provides SDKs for several languages (including Go, Java, Python, and TypeScript), and workflows scale across a worker fleet.

For an AI agent pipeline, Temporal would manage the overarching job—like "process all new customer inquiries"—ensuring each sub-task (agent invocation) is completed reliably.
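
The replay idea behind durable execution can be sketched in plain Python. This illustrates the general event-history technique under simplifying assumptions and is not Temporal's SDK: `History`, `WorkflowContext`, `run_activity`, and `execute` are hypothetical names, and Temporal's real event history records far more than activity results.

```python
class WorkerCrash(Exception):
    """Simulates the worker process dying in the middle of a workflow."""


class History:
    """Append-only log of completed activity results (the durable part)."""

    def __init__(self):
        self.events = []


class WorkflowContext:
    def __init__(self, history):
        self.history = history
        self._cursor = 0  # how many recorded results this execution has consumed

    def run_activity(self, fn, *args):
        if self._cursor < len(self.history.events):
            # Replay: reuse the recorded result; do not repeat the side effect.
            result = self.history.events[self._cursor]
        else:
            # First time at this step: run the activity and record its result.
            result = fn(*args)
            self.history.events.append(result)
        self._cursor += 1
        return result


def execute(workflow, history, *args):
    """Run (or re-run) a workflow function from the top against a history."""
    return workflow(WorkflowContext(history), *args)


# --- Example workflow and activity (both hypothetical) ---
llm_calls = []                      # tracks real invocations of the "LLM"
crash_budget = {"remaining": 1}     # makes the worker die exactly once


def call_llm(prompt):
    llm_calls.append(prompt)
    if prompt == "b" and crash_budget["remaining"] > 0:
        crash_budget["remaining"] -= 1
        raise WorkerCrash("worker died during the second call")
    return prompt.upper()


def summarize_workflow(ctx, inquiries):
    # Deterministic: the same inputs always request the same activities in order.
    return [ctx.run_activity(call_llm, text) for text in inquiries]
```

If `execute(summarize_workflow, history, ["a", "b", "c"])` is called, crashes, and is then called again with the same `history`, the second run takes the result for `"a"` from the log and only invokes `call_llm` for `"b"` and `"c"`. This is why workflow code has to be deterministic: replay only works if the rerun asks for the same steps in the same order.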

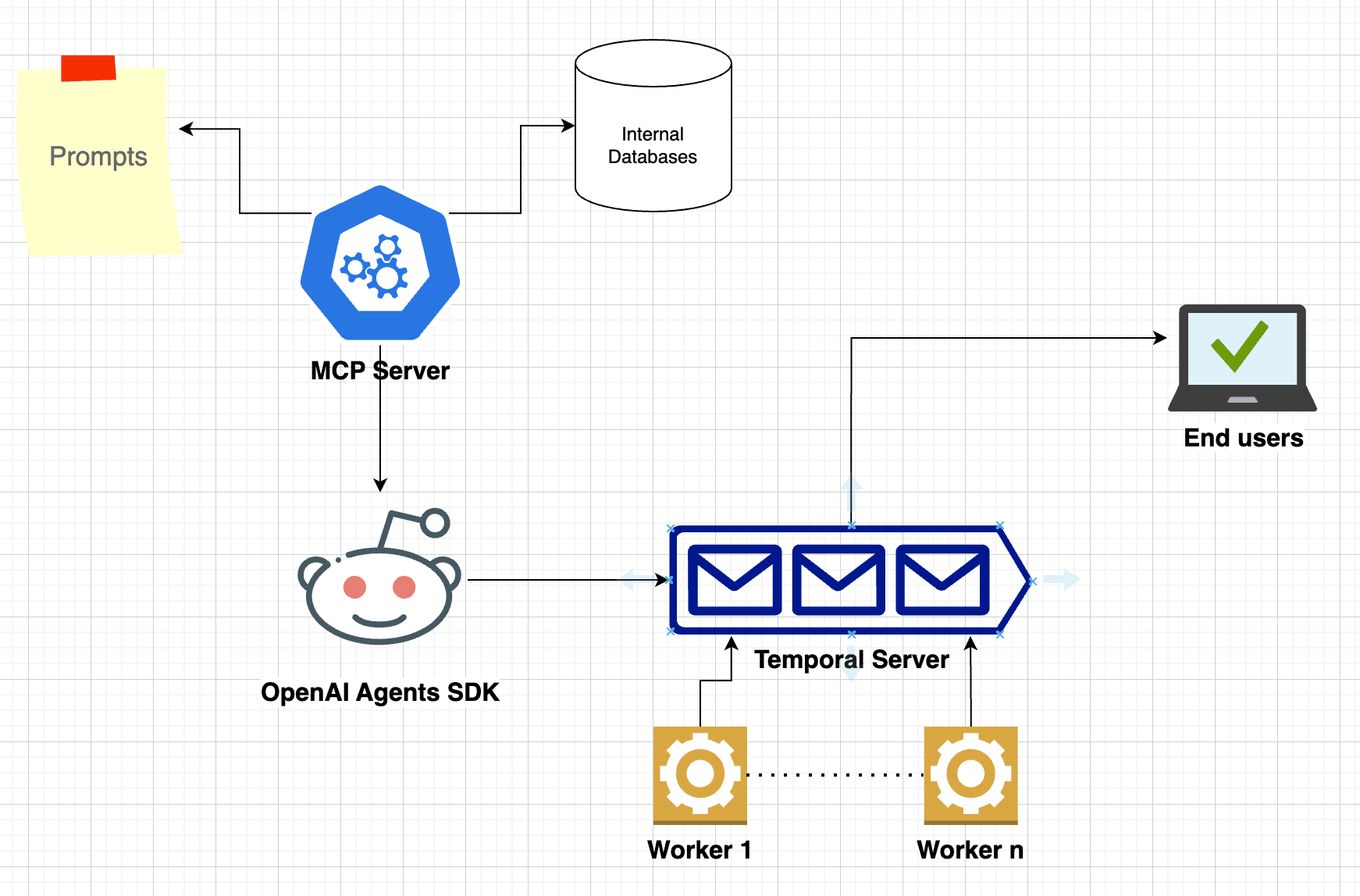

Synergy: Plan vs. Execute

A potent architecture suggested by the source is to run LangGraph inside a Temporal Activity. Temporal manages the durable, scalable execution of the high-level job. For each item in the job (e.g., a single customer query), the workflow schedules an Activity. That Activity, in turn, runs a LangGraph graph that carries out the specific agentic workflow for that item. This combines LangGraph's flexible planning with Temporal's execution guarantees.
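
The shape of that composition can be sketched without either library. In this sketch `run_agent` stands in for invoking an agent graph on one item, `agent_activity` plays the role of a retryable activity, and `run_job` plays the role of the high-level job. All three names are hypothetical, and a thread pool is only a rough stand-in for a distributed worker fleet.

```python
from concurrent.futures import ThreadPoolExecutor


def run_agent(query: str) -> str:
    """Stand-in for running an agent graph on a single item (hypothetical)."""
    if not query.strip():
        raise ValueError("empty query")
    return f"reply:{query}"


def agent_activity(query: str, max_attempts: int = 3) -> dict:
    """One retryable unit of work: the whole agent run for a single item."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return {"query": query, "status": "ok", "output": run_agent(query)}
        except Exception as exc:
            last_error = exc  # each retry reruns the agent from its first node
    # Report the failure instead of raising, so one bad item
    # does not abort the rest of the job.
    return {"query": query, "status": "failed", "output": repr(last_error)}


def run_job(queries, max_workers: int = 4):
    """Fan the items out across workers; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(agent_activity, queries))
```

One design consequence is visible even in this toy version: because the whole agent run is a single unit of retry, a failure late in the graph repeats the earlier steps for that item. Splitting the agent into several smaller activities would trade simplicity for finer-grained recovery.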

Retail & Luxury Implications

While the article is a general technical comparison, the implications for retail and luxury are significant, as these sectors are increasingly deploying AI agents for complex, high-value tasks.

Potential High-Impact Use Cases:

- Personalized Outreach & CRM Orchestration: An agent workflow that segments customers, generates hyper-personalized copy for emails or messages, selects the optimal channel and timing, executes the send, and processes responses. A durable execution framework is essential to manage this multi-day, multi-step campaign across thousands of clients without dropping a single interaction.

- Automated Competitive & Market Intelligence: A persistent agent tasked with daily monitoring of competitor pricing, new product launches, social sentiment, and trend reports. It would need to run indefinitely, synthesize information from dozens of sources, and generate actionable briefs. Temporal could keep the monitoring schedule running across restarts and failures, while LangGraph could manage the reasoning chain for analysis.

- Large-Scale Catalog Enrichment & Tagging: Processing 10,000+ new SKUs involves multiple AI steps: vision models for attribute extraction, LLMs for generating compelling descriptions and SEO tags, and validation steps for quality control. A durable pipeline is critical to handle failures on individual items and ensure complete, accurate processing.

- Multi-Turn Virtual Personal Shopper Agents: Advanced shopping assistants that engage in prolonged dialogues, remember context across sessions, and execute actions (like checking inventory or creating a lookbook). This requires a persistent agent state (LangGraph) that is reliably maintained across user sessions and infrastructure updates (Temporal).

The move towards agentic AI—where systems autonomously perform sequences of tasks—makes the choice of underlying orchestration architecture a foundational technical decision. Building on flimsy orchestration will lead to fragile, unreliable customer-facing experiences and internal tools. Investing in durable execution from the outset is a prerequisite for moving AI agents from proof-of-concept to production-grade reliability.