A research team associated with Meta's Chief AI Scientist Yann LeCun has published a paper introducing LeWorldModel, a compact world model that solves a fundamental training instability problem that has plagued the field. The model, which has just 15 million parameters, is described as the first world model that "can't collapse" during training due to a novel mathematical constraint.

World models are a core component of LeCun's vision for autonomous machine intelligence. They learn to predict future states of an environment from raw sensory inputs (like video frames) and actions. This capability is foundational for robots that need to plan movements, autonomous vehicles that must simulate outcomes before steering, or any AI that acts in the physical world rather than just processing language.

The Collapse Problem and the SIGReg Solution

The central technical hurdle for world models has been training collapse. During training, the model's encoder, which compresses a video frame into a compact latent vector, can find a catastrophic shortcut: it learns to map every possible input to the same output vector. The predictor network, which takes this vector and an action to forecast the next state, then simply learns to output a constant. Because the prediction target is itself an encoded latent, a constant prediction matches a constant target, so the model achieves a low prediction loss while its latents carry no information about the scene, rendering it useless for any specific planning task. It's akin to a weather app that only ever predicts "sunny."
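To make the failure mode concrete, here is a minimal toy sketch in plain Python. The functions `collapsed_encoder` and `constant_predictor` and the random "frames" are hypothetical stand-ins invented for illustration, not code from the paper. It shows that a fully collapsed model scores a perfect latent prediction loss while producing only one distinct latent.

```python
import random

def collapsed_encoder(frame):
    # Ignores the frame entirely and always returns the same latent vector.
    return [0.0, 0.0]

def constant_predictor(z, action):
    # Ignores both inputs and always predicts the same next latent.
    return [0.0, 0.0]

def mse(a, b):
    # Mean squared error between two equal-length vectors.
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

random.seed(0)
# Toy "video": 100 (frame, action, next_frame) transitions with 4 pixels each.
transitions = [
    ([random.random() for _ in range(4)],
     random.choice([-1, 1]),
     [random.random() for _ in range(4)])
    for _ in range(100)
]

# Average latent prediction loss over all transitions.
loss = sum(
    mse(constant_predictor(collapsed_encoder(f), a), collapsed_encoder(f_next))
    for f, a, f_next in transitions
) / len(transitions)

# Number of distinct latents the encoder produced for 100 different frames.
distinct_latents = {tuple(collapsed_encoder(f)) for f, _, _ in transitions}

print(loss, len(distinct_latents))
```

The loss is zero even though every frame is different, which is why the prediction loss alone cannot detect collapse.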

Previous attempts to prevent collapse created a "fragile stack" of engineering fixes: using frozen encoders, applying stop-gradient operations, and meticulously tuning six or more loss hyperparameters. These systems were brittle and difficult to scale or reproduce.

The LeWorldModel paper, highlighted by AI commentator LiorOnAI, takes a different approach. The researchers asked: what if you make collapse mathematically impossible?

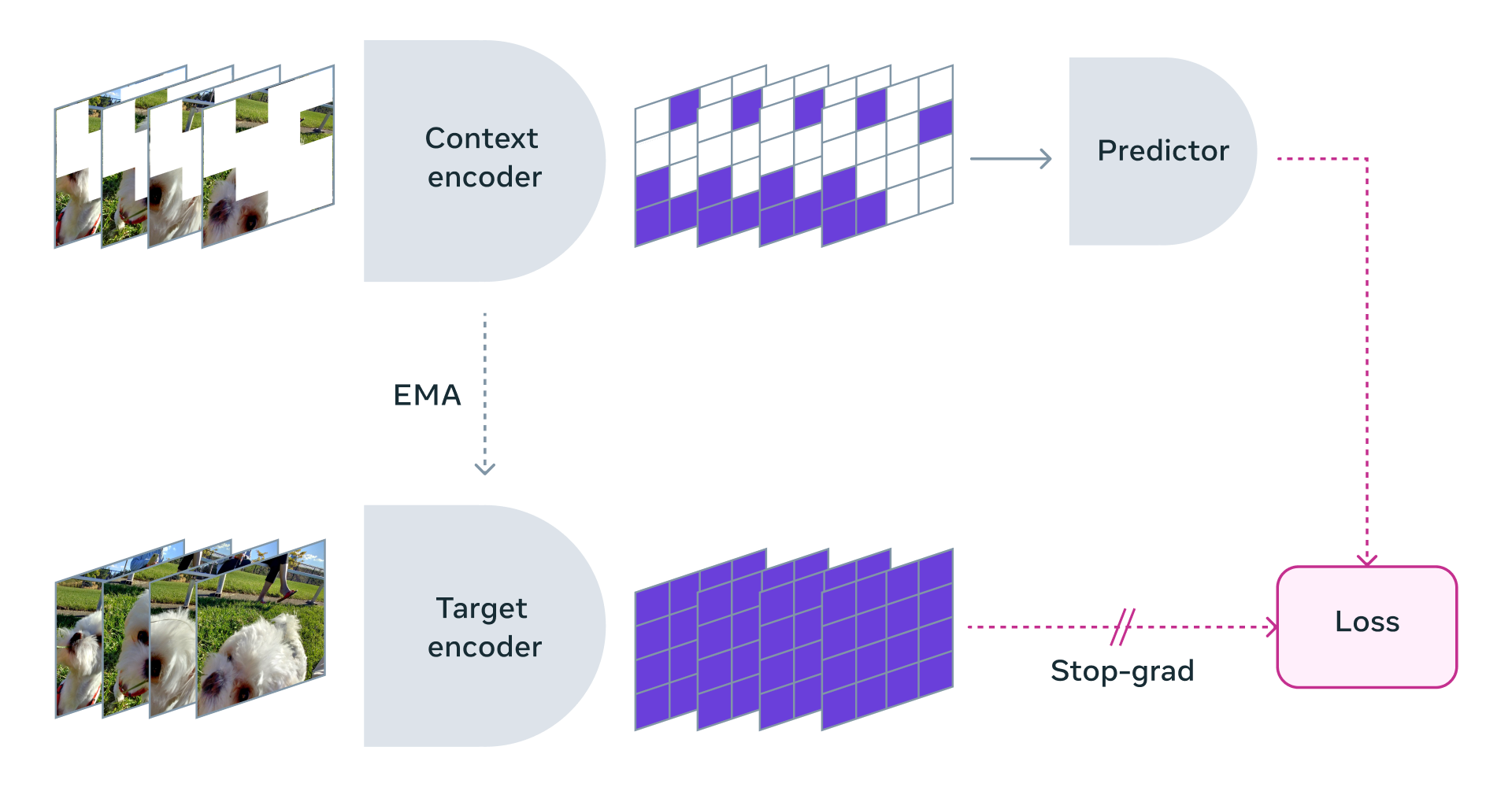

The architecture is straightforward:

- An encoder transforms each video frame into a small latent vector (`z`).

- A predictor takes the current latent vector and an action, and outputs a prediction for the next latent vector (`z_hat`).
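As a rough illustration of how the two components fit together, the sketch below wires a tiny linear encoder and predictor in plain Python. The dimensions, the random linear weights, and the class names `Encoder` and `Predictor` are all assumptions made for this example; the actual model presumably uses learned neural networks rather than untrained linear maps.

```python
import random

# Hypothetical sizes, chosen only for illustration.
FRAME_DIM = 8    # flattened "pixels" per frame
ACTION_DIM = 2   # size of the action vector
LATENT_DIM = 3   # size of the latent vector z

def make_matrix(rows, cols, rng):
    # Small random weights; a real model would learn these.
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(matrix, vector):
    # Plain matrix-vector product.
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

class Encoder:
    """Maps a frame to a latent vector z."""
    def __init__(self, rng):
        self.w = make_matrix(LATENT_DIM, FRAME_DIM, rng)

    def __call__(self, frame):
        return matvec(self.w, frame)

class Predictor:
    """Maps (z, action) to a predicted next latent z_hat."""
    def __init__(self, rng):
        self.w = make_matrix(LATENT_DIM, LATENT_DIM + ACTION_DIM, rng)

    def __call__(self, z, action):
        # Concatenate latent and action before the linear map.
        return matvec(self.w, z + action)

rng = random.Random(0)
encoder, predictor = Encoder(rng), Predictor(rng)

frame = [rng.random() for _ in range(FRAME_DIM)]
action = [1.0, 0.0]

z = encoder(frame)            # current latent
z_hat = predictor(z, action)  # predicted next latent
print(len(z), len(z_hat))
```

Note that the predictor never sees pixels: it operates entirely in latent space, so its output has the same size as `z`.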

The training uses two losses:

- First loss (Prediction): The mean squared error between the predicted next latent `z_hat` and the actual encoded next latent `z`.

- Second loss (Regularization): This is the key innovation, SIGReg (Sketched Isotropic Gaussian Regularization). This loss continuously monitors the statistical distribution of the latent vectors `z` across a batch. It penalizes the model if these vectors begin to cluster or look too similar, effectively forcing them to maintain a spread-out, informative distribution (approximating an isotropic Gaussian, the familiar bell curve in every direction). If the encoder tries to collapse all inputs to a single point, the SIGReg loss spikes, preventing the optimization from proceeding down that path.
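The two-loss idea can be sketched as follows. This is a deliberately simplified stand-in, not the statistic used in the paper: the hypothetical `spread_penalty` projects the batch onto random unit directions and penalizes each one-dimensional projection for deviating from zero mean and unit variance. The weight `lam` and all function names are assumptions for illustration.

```python
import math
import random

def mse(a, b):
    # Mean squared error between two equal-length vectors.
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def random_unit_vector(dim, rng):
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def spread_penalty(latents, num_directions=16, seed=0):
    """Simplified moment-matching stand-in for SIGReg (NOT the paper's test).

    Each random 1-D projection of the batch should have mean 0 and
    variance 1 if the latents look like an isotropic Gaussian.
    """
    rng = random.Random(seed)
    dim = len(latents[0])
    total = 0.0
    for _ in range(num_directions):
        d = random_unit_vector(dim, rng)
        proj = [sum(x * w for x, w in zip(z, d)) for z in latents]
        mean = sum(proj) / len(proj)
        var = sum((p - mean) ** 2 for p in proj) / len(proj)
        total += mean ** 2 + (var - 1.0) ** 2
    return total / num_directions

def total_loss(z_hat_batch, z_next_batch, lam=0.1):
    # Prediction loss plus the weighted regularizer: one main hyperparameter.
    pred = sum(mse(a, b) for a, b in zip(z_hat_batch, z_next_batch))
    pred /= len(z_hat_batch)
    return pred + lam * spread_penalty(z_next_batch)

rng = random.Random(1)
# A healthy batch: latents spread out like a standard Gaussian.
spread = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(2000)]
# A collapsed batch: every latent is the same point.
collapsed = [[0.5, 0.5, 0.5, 0.5] for _ in range(2000)]

penalty_spread = spread_penalty(spread)
penalty_collapsed = spread_penalty(collapsed)
print(penalty_spread, penalty_collapsed)
```

In this toy setup a collapsed batch has zero variance along every direction, so its penalty is at least 1, while the spread-out batch scores close to 0. Even with a perfect prediction loss, the collapsed solution therefore ends up with the higher total loss.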

Key Results and Practical Implications

The simplicity of this approach yields significant practical advantages, as outlined in the source analysis:

- Reduced Complexity: The "fragile stack" of 6+ hyperparameters is reduced to essentially one (the weight on the SIGReg loss).

- Small Scale: The model contains only 15 million parameters.

- Accessible Training: It can be trained on a single GPU in a matter of hours.

- Efficiency: The model encodes visual inputs using ~200x fewer tokens than a comparable VQ-VAE approach and can plan 48x faster.

- Open Source: The model is publicly available.

This combination of stability, small size, and open-source availability has a democratizing effect. As LiorOnAI notes, it changes "who gets to build physical AI. Not just big labs anymore. Any team, any startup, any grad student."

The development is a direct answer to a major criticism of LeCun's long-advocated Joint Embedding Predictive Architecture (JEPA) framework, the latent-prediction approach that this style of world model follows. Critics have pointed to training instability as a fundamental weakness. This paper provides a concrete, working solution to that instability.

gentic.news Analysis

This paper represents a critical, pragmatic step forward for LeCun's vision of energy-based models and hierarchical planning, which he has consistently positioned as an alternative to pure autoregressive large language models. For years, LeCun has argued that true understanding and safe action in the physical world require models that learn internal representations of how the world works—a "world model." The training collapse problem was the most cited technical roadblock preventing widespread adoption of this approach. By solving it with a mathematically elegant regularizer, the team has removed a primary objection and provided a viable, minimal baseline for the community to build upon.

The timing is significant. The AI research landscape is currently defined by a stark dichotomy: the scaling race towards ever-larger LLMs requiring massive compute, versus the pursuit of smaller, more efficient models that learn grounded, physical reasoning. LeCun is the most prominent advocate for the latter path. This release is a tangible artifact of that philosophy—a small, efficient, open model that performs a core cognitive function. It enables research in robotics, embodied AI, and video prediction to proceed without the overhead of billion-parameter models or unstable training regimes.

Practitioners working on simulation, robotics, or autonomous systems should pay close attention. LeWorldModel isn't a monolithic AI that does everything; it's a specialized, stable component for state prediction. Its value will be in how it's integrated into larger systems for planning and control. The fact that it can be trained and run on modest hardware makes it an ideal candidate for rapid prototyping and academic research, potentially accelerating progress in these fields far beyond the walls of well-funded corporate labs.

Frequently Asked Questions

What is a "world model" in AI?

A world model is an AI component that learns to predict the future state of an environment from its current state and a potential action. It builds an internal, compressed representation of how the world dynamics work, allowing an agent to "imagine" or simulate the consequences of its actions before taking them. This is considered essential for intelligent, plan-based behavior in robots or autonomous systems.
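A minimal sketch of what "imagining" consequences can look like in code: the planner below tries every short action sequence inside the model and keeps the one whose predicted end state lands closest to a goal. The one-dimensional latent, the hand-written `predictor`, and the exhaustive search are all assumptions for illustration; a trained world model and a smarter optimizer would take their place in practice.

```python
import itertools

def predictor(z, action):
    # Hand-written stand-in for a trained predictor: the action shifts the latent.
    return z + action

def rollout(z0, actions):
    # Simulate a sequence of actions purely inside the model.
    z = z0
    for a in actions:
        z = predictor(z, a)
    return z

def plan(z0, z_goal, horizon=5, choices=(-1.0, 0.0, 1.0)):
    # Exhaustively evaluate every action sequence of the given length.
    best_actions, best_cost = None, float("inf")
    for candidate in itertools.product(choices, repeat=horizon):
        cost = abs(rollout(z0, candidate) - z_goal)
        if cost < best_cost:
            best_actions, best_cost = list(candidate), cost
    return best_actions, best_cost

actions, cost = plan(z0=0.0, z_goal=3.0)
print(actions, cost)
```

No action is executed in the real environment during planning; every candidate is scored using predictions alone, which is why fast, compact latents matter for planning speed.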

What does "training collapse" mean?

Training collapse is a failure mode where a world model's encoder learns to ignore the input and output the same latent vector for every frame. The predictor then learns to output a constant, averaging all future states. The model achieves a deceptively low training error by being consistently vaguely right, but becomes completely incapable of making specific, useful predictions for planning. It's the model equivalent of giving up and guessing the average.

How does SIGReg prevent collapse?

SIGReg (Sketched Isotropic Gaussian Regularization) is a loss function that acts as a regularizer. It continuously measures the statistical distribution of the latent vectors produced by the encoder across a batch of data. If the vectors start to become too similar, which is the first sign of collapse, the SIGReg loss increases sharply. This penalizes the model during training, forcing the optimization process toward solutions where the encoder produces diverse, informative representations, so a collapsed solution can never be a minimum of the combined loss.

Why does the small size (15M parameters) of LeWorldModel matter?

The small size has multiple implications. First, it makes the model efficient to train (single GPU, hours) and run, enabling fast planning (48x faster than a baseline). Second, a smaller model can reduce the risk of overfitting, which may help generalization. Most importantly, it democratizes access. Researchers and startups without massive compute budgets can now experiment with and build upon a stable world model, potentially lowering the barrier to innovation in robotics and embodied AI.