A research team including Meta's Chief AI Scientist Yann LeCun has published a paper introducing LeWorldModel, a method for training world models from raw visual inputs without the fragile collection of training tricks typically required to prevent model collapse. The work, detailed in the paper "LeWorldModel: Stable End-to-End Joint-Embedding Predictive Architecture from Pixels," presents a simplified, stable training recipe that could lower the barrier to developing agents that understand and predict environmental dynamics.

What the Researchers Built

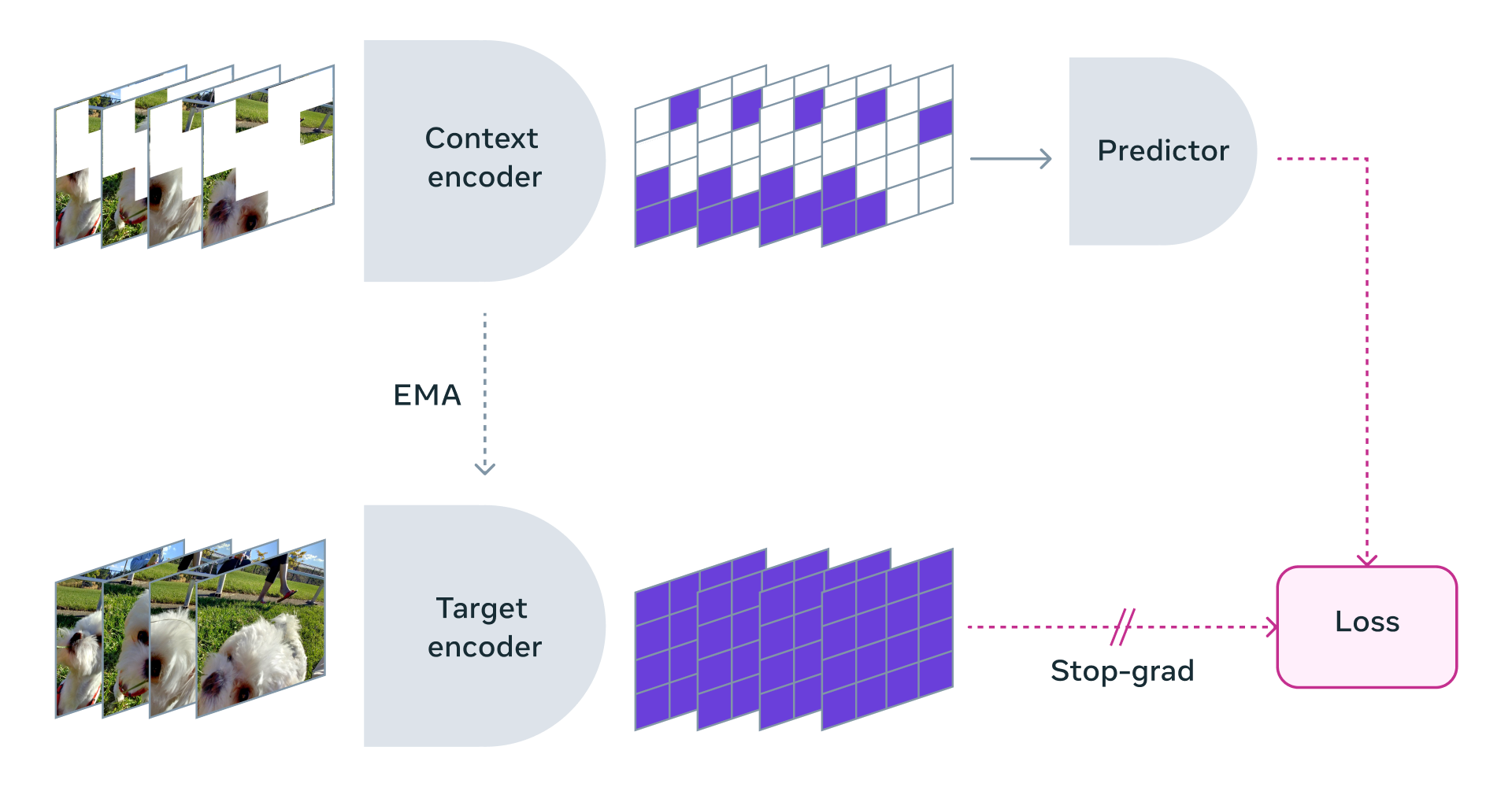

LeWorldModel is a Joint-Embedding Predictive Architecture (JEPA) designed to learn a world model—a system that predicts how a visual scene will change given an action—directly from pixel observations. Unlike large language models that learn patterns in text, a world model aims to learn the underlying dynamics of an environment, a critical component for robotic control and planning where an agent must anticipate the consequences of its actions.

The core challenge in training such models is representation collapse: the model learns to map many different visual frames to nearly identical latent representations, making prediction trivial but useless. Historically, preventing collapse required a patchwork of techniques like frozen encoders, moving-average targets, stop-gradient operations, and carefully tuned auxiliary loss weights. These made systems complex, fragile, and difficult to scale.
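
To make the failure mode concrete, here is a minimal sketch in plain Python. The encoder, predictor, and toy "frames" are hypothetical stand-ins, not anything from the paper; the point is only that an encoder which ignores its input scores a perfect predictive loss while carrying no information.

```python
import random

def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def collapsed_encoder(frame):
    """Hypothetical degenerate encoder: ignores the frame entirely."""
    return [0.0, 0.0, 0.0, 0.0]

def identity_predictor(z, action):
    """Hypothetical predictor: guesses that the latent does not change."""
    return list(z)

rng = random.Random(0)
# Toy "frames": 8 random 16-value vectors standing in for images.
frames = [[rng.random() for _ in range(16)] for _ in range(8)]
actions = [rng.choice([-1, 1]) for _ in range(7)]

# Predictive loss averaged over consecutive frame pairs.
losses = []
for t in range(len(frames) - 1):
    z_t = collapsed_encoder(frames[t])
    z_next = collapsed_encoder(frames[t + 1])
    losses.append(mse(identity_predictor(z_t, actions[t]), z_next))
pred_loss = sum(losses) / len(losses)

# Spread of the latents across the batch (variance of the first coordinate).
codes = [collapsed_encoder(f) for f in frames]
mean0 = sum(c[0] for c in codes) / len(codes)
var0 = sum((c[0] - mean0) ** 2 for c in codes) / len(codes)

print(pred_loss, var0)  # 0.0 0.0
```

A loss of zero with zero variance is exactly the "trivial but useless" solution: the objective is satisfied, yet every frame maps to the same code.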

LeWorldModel's architecture seeks to achieve the same goal—learning predictive representations from pixels—using only two core components, eliminating the need for the aforementioned bag of tricks.

How It Works: A Two-Loss Recipe

LeWorldModel's training stability stems from a deliberately simple objective composed of two losses:

Predictive Loss: This is the primary task objective. The model (an encoder and predictor) must predict the next latent state representation given the current latent state and an action. Formally, if (z_t) is the encoded representation of frame (x_t), and (a_t) is the action taken, the predictor outputs (\hat{z}_{t+1}). The loss minimizes the difference between this prediction and the true future representation (z_{t+1}).

SIGReg Loss (Sketched Isotropic Gaussian Regularization): This is the key ingredient for preventing collapse. Instead of adding complexity to the encoder or predictor, SIGReg acts directly on the latent space. It applies a regularization term that encourages the batch of latent representations (z) to maintain diversity and spread. It does this by penalizing the latent codes if their distribution deviates from an isotropic unit Gaussian, which prevents them from collapsing into a single point or small cluster. The "sketched" part refers to how the match is checked: the regularizer compares random one-dimensional projections of the latents against a standard Gaussian rather than testing the full high-dimensional distribution at once.
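
One way to penalize a batch of latents for deviating from a unit Gaussian can be sketched as follows. This is an illustrative simplification, not the paper's exact statistic: it projects the batch onto random unit directions and compares the empirical characteristic function of each projection with that of N(0, 1). The function names, the grid of evaluation points, and the number of projections are all assumptions.

```python
import cmath
import math
import random

def random_unit_vector(dim, rng):
    """Draw a direction uniformly from the unit sphere."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def gaussianity_penalty_1d(values, t_grid):
    """Squared gap between the empirical characteristic function of `values`
    and exp(-t^2 / 2), the characteristic function of N(0, 1),
    averaged over the points in t_grid."""
    total = 0.0
    for t in t_grid:
        ecf = sum(cmath.exp(1j * t * v) for v in values) / len(values)
        total += abs(ecf - math.exp(-0.5 * t * t)) ** 2
    return total / len(t_grid)

def projected_gaussian_reg(latents, num_projections=16, seed=0):
    """Average 1-D Gaussianity penalty over random projections of the batch."""
    rng = random.Random(seed)
    dim = len(latents[0])
    t_grid = [0.5 * k for k in range(1, 7)]  # t = 0.5, 1.0, ..., 3.0
    total = 0.0
    for _ in range(num_projections):
        d = random_unit_vector(dim, rng)
        proj = [sum(di * zi for di, zi in zip(d, z)) for z in latents]
        total += gaussianity_penalty_1d(proj, t_grid)
    return total / num_projections

rng = random.Random(1)
spread = [[rng.gauss(0, 1) for _ in range(8)] for _ in range(256)]  # healthy batch
collapsed = [[0.3] * 8 for _ in range(256)]                         # every code identical

reg_spread = projected_gaussian_reg(spread)
reg_collapsed = projected_gaussian_reg(collapsed)
print(reg_spread < reg_collapsed)  # True
```

A collapsed batch projects to a single value in every direction, so its characteristic function has magnitude 1 everywhere and sits far from the Gaussian target; a well-spread batch stays close to it.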

This combination allows the entire system (encoder and predictor) to be trained end-to-end from pixels without freezing components or using an exponential moving average (EMA) of parameters.
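
Written out, the two-term objective could take a form like the one below. The symbols (f_\theta) for the encoder, (g_\phi) for the predictor, (Z) for the batch of latents, the squared-error distance, and the weight (\lambda) are notational assumptions made here for illustration; the paper may define the terms differently.

```latex
\mathcal{L}(\theta, \phi)
  = \underbrace{\bigl\lVert g_\phi(z_t, a_t) - z_{t+1} \bigr\rVert_2^2}_{\text{predictive loss}}
  + \lambda \, \underbrace{\mathcal{L}_{\mathrm{SIGReg}}(Z)}_{\text{anti-collapse regularizer}},
  \qquad z_t = f_\theta(x_t)
```

Because no stop-gradient or frozen target is involved, gradients in this form flow through both (z_t) and (z_{t+1}), and the regularizer is what keeps the constant solution from being optimal.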

Key Results & Performance

According to the paper, the simplicity of the approach translates to practical efficiency:

- Model Scale: The evaluated model contains 15 million parameters, orders of magnitude smaller than typical foundation models used for video prediction.

- Training Efficiency: It trains in "a few hours" on a single GPU using standard datasets like CARLA (a driving simulator) and RT-1 (robotic manipulation).

- Planning Speed: In planning experiments, the small LeWorldModel is reported to be up to 48 times faster than using a large foundation model as a world model.

- Stability: The paper demonstrates stable training curves where the latent representations maintain healthy variance throughout training, directly contrasting with collapsed baselines.
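
The "healthy variance" mentioned in the last bullet is easy to monitor during training. The sketch below is one hypothetical diagnostic, not the paper's evaluation code: it computes the per-dimension standard deviation of a batch of latents and flags the batch when the average falls below a threshold. The function names and the 0.05 threshold are arbitrary choices for illustration.

```python
import random
import statistics

def per_dim_std(latents):
    """Standard deviation of each latent coordinate across the batch."""
    return [statistics.pstdev(col) for col in zip(*latents)]

def looks_collapsed(latents, min_std=0.05):
    """Flag a batch whose average per-dimension std falls below min_std.
    The threshold is an illustrative value, not taken from the paper."""
    stds = per_dim_std(latents)
    return sum(stds) / len(stds) < min_std

rng = random.Random(0)
healthy = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(128)]
collapsed = [[1.0 + rng.gauss(0, 1e-3) for _ in range(4)] for _ in range(128)]

print(looks_collapsed(healthy), looks_collapsed(collapsed))  # False True
```

Logging this value every few hundred steps gives a training curve comparable in spirit to the variance plots the article describes.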

Why It Matters: Lowering the Barrier to World Models

The significance of LeWorldModel is less about achieving superhuman prediction accuracy and more about providing a robust, simple baseline for world model research. By removing the need for a delicate cocktail of stabilization techniques, it makes the process of training a world model more reproducible, accessible, and easier to build upon.

As the authors note, stable training means world models can become "cheaper, smaller, and easier to test." This is crucial for robotics and embodied AI, where rapid iteration and simulation are key. A 15M-parameter model that trains in hours enables researchers to experiment with architectural variants, loss functions, and training regimes much more freely than when dealing with a brittle, complex setup.

gentic.news Analysis

This paper is a direct and pragmatic iteration within Yann LeCun's long-standing advocacy for energy-based models and joint-embedding architectures as a path toward machine common sense and reasoning. For years, LeCun has argued that pure autoregressive LLMs are a dead end for true intelligence, proposing instead a world-model-centric approach, most recently formalized in his "Joint Embedding Predictive Architecture (JEPA)" and "Hierarchical JEPA" frameworks. LeWorldModel is a concrete, stripped-down implementation of the core JEPA idea, specifically tackling its most notorious training difficulty: collapse.

The timing is notable. This push for simple, stable, small-scale world models runs counter to the industry's dominant trend of scaling up video models (like Meta's own V-JEPA or OpenAI's Sora) to massive sizes using immense compute and data. LeCun's team is making a different bet: that the fundamental principles of learning and stability must be solved at small scale first. This mirrors the philosophical divide in AI: one camp seeks emergent capabilities from scale, while another, represented here, seeks engineered understanding from first principles. If the LeWorldModel approach scales successfully, it could offer a dramatically more compute-efficient path to capable embodied agents.

Furthermore, the focus on real-time planning speed (48x faster) directly addresses a critical bottleneck for robotics. Foundation models are often too slow for the tight control loops required in the physical world. A small, dedicated world model like this could be a key component in a hierarchical system, handling fast, low-level trajectory prediction while a larger "reasoning" model sets goals.

Frequently Asked Questions

What is a "world model" in AI?

A world model is an AI system that learns a compressed representation of an environment and can predict how that representation will change over time, especially in response to actions. Unlike a language model that predicts the next word, a world model tries to learn the underlying dynamics or "physics" of a scene from observations, which is essential for planning and control in robotics, autonomous driving, and game-playing agents.

What was the "collapse" problem in previous world models?

Collapse, or representation collapse, occurred when a model's encoder learned to map many different visual inputs (e.g., varied video frames) to nearly identical latent codes. While this made the prediction task trivial—always predicting the same next latent state—it rendered the model useless because it lost all information about the input. Preventing collapse previously required a complex set of training hacks that made systems fragile.

How does LeWorldModel's SIGReg loss prevent collapse?

The SIGReg (Sketched Isotropic Gaussian Regularization) loss prevents collapse by acting as a regularizer on the latent space itself. It encourages the distribution of latent codes for a batch of inputs to match an isotropic unit Gaussian distribution. This penalizes the codes if they cluster too tightly or collapse to a single point, forcing the encoder to maintain diversity and spread in its representations throughout training.

Why does a 15M-parameter model matter?

A 15-million-parameter model is extremely small by modern AI standards (LLMs often have billions or even trillions of parameters). This small size means it can train quickly on a single GPU and run inference with very low latency. For applications like real-time robot control or embedded systems, this efficiency is critical. It also democratizes research, allowing more teams to experiment with world models without massive computational resources.
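
As a rough back-of-the-envelope check on what "small" means here, the snippet below estimates the memory needed just to store 15 million weights at two common precisions. It counts weights only; activations, optimizer state, and framework overhead are not included, and the helper name is hypothetical.

```python
def weight_megabytes(num_params, bytes_per_param):
    """Storage for the weights alone, in megabytes (1 MB = 10**6 bytes)."""
    return num_params * bytes_per_param / 1e6

params = 15_000_000
for name, bytes_per_param in [("float32", 4), ("float16", 2)]:
    print(f"{name}: {weight_megabytes(params, bytes_per_param):.0f} MB")
# float32: 60 MB
# float16: 30 MB
```

At tens of megabytes, the weights fit comfortably on a single consumer GPU and on many embedded accelerators.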