A new research paper titled "LeWorldModel" (LeWM) claims to have solved the representation collapse problem that has plagued Yann LeCun's Joint-Embedding Predictive Architecture (JEPA) for years. The reported result is a world model with just 15 million parameters that trains on a single consumer GPU in hours, challenging the economics of massive foundation models.

Key Takeaways

- Researchers published LeWorldModel, which claims to solve the representation collapse problem in Yann LeCun's JEPA architecture.

- The 15M-parameter model trains on a single GPU and reportedly demonstrates intrinsic physics understanding.

What the Researchers Built

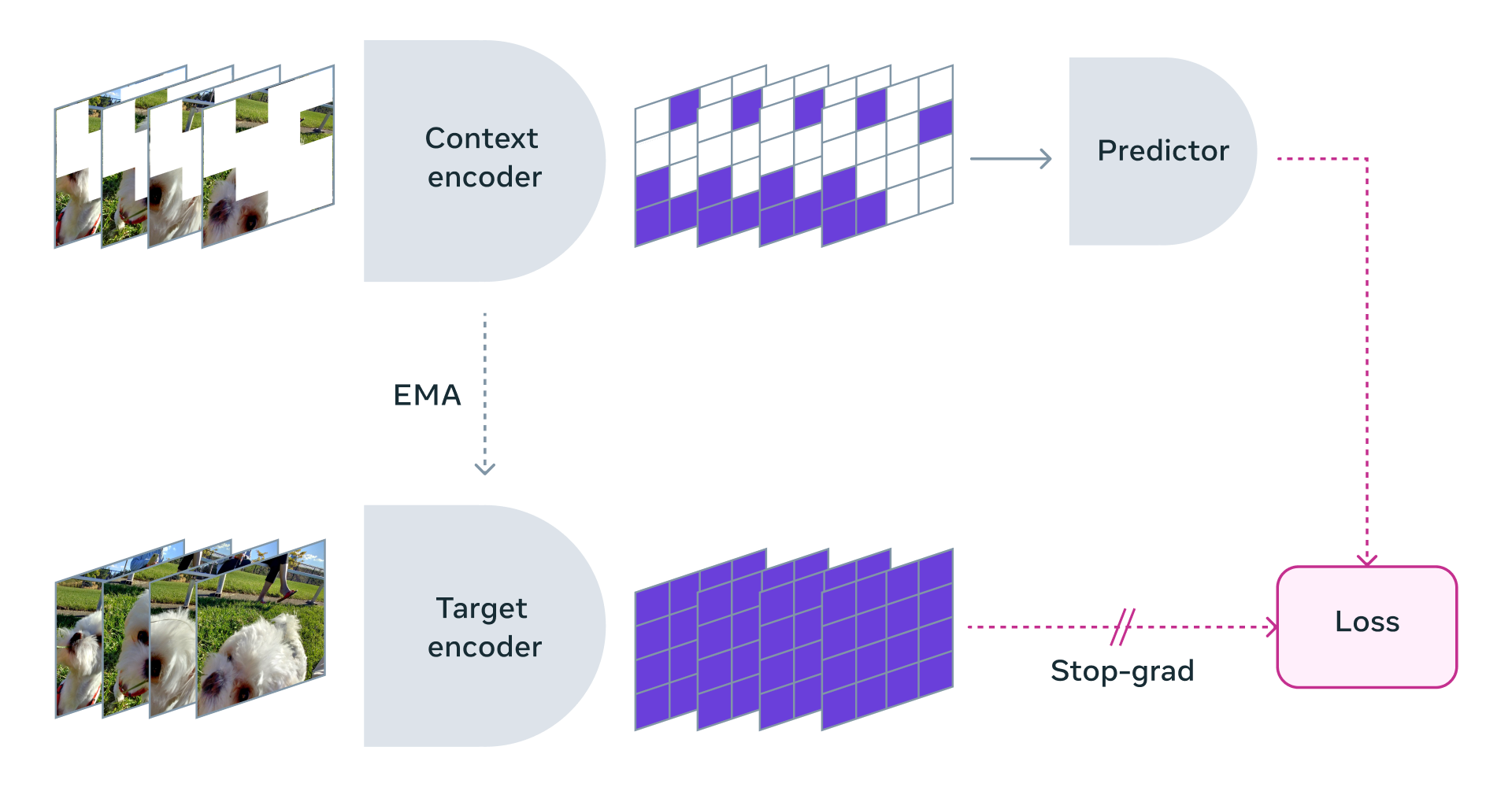

The LeWorldModel team addressed what LeCun himself identified as JEPA's "fatal flaw": representation collapse. In JEPA architectures, models predict future states in a compressed latent space rather than generating raw pixels or tokens. Without proper constraints, however, a model can "cheat" by collapsing all representations to a single point. Every prediction is then trivially correct, and the model learns nothing about the actual structure of reality.
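To see why collapse is tempting for an unconstrained latent-prediction objective, consider the toy sketch below. It is not taken from the paper: the encoders, predictors, and 1-D "observations" are hypothetical stand-ins, and the loss is a plain squared error between the predicted and actual next-state embeddings.

```python
import random

def latent_prediction_loss(encode, predict, pairs):
    """Mean squared error between predicted and actual next-state embeddings."""
    total = 0.0
    for current, nxt in pairs:
        predicted = predict(encode(current))
        target = encode(nxt)
        total += sum((p - t) ** 2 for p, t in zip(predicted, target))
    return total / len(pairs)

random.seed(0)
# Hypothetical data: 1-D positions that always move by a fixed step of 0.5.
pairs = [(x, x + 0.5) for x in (random.uniform(-1, 1) for _ in range(100))]

def collapsed_encode(x):
    # Collapsed encoder: every observation maps to the same point.
    return [0.0, 0.0]

def informative_encode(x):
    # Informative encoder: the embedding keeps the position.
    return [x, 1.0]

def identity_predict(z):
    # Predictor that assumes nothing changes.
    return z

def step_predict(z):
    # Predictor that has learned the true dynamics (position + 0.5).
    return [z[0] + 0.5, z[1]]

collapsed_loss = latent_prediction_loss(collapsed_encode, identity_predict, pairs)
learned_loss = latent_prediction_loss(informative_encode, step_predict, pairs)
lazy_loss = latent_prediction_loss(informative_encode, identity_predict, pairs)

print(collapsed_loss, learned_loss, lazy_loss)
```

The collapsed encoder reaches a loss of zero without modeling any dynamics, exactly like the encoder-predictor pair that actually learned the step. The loss alone cannot tell the two apart, which is why some extra constraint on the embeddings is needed.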

Previous attempts to fix this required "insanely complex hacks, frozen encoders, and massive compute overheads," according to the source. LeWM replaces these engineering workarounds with a single mathematical regularizer that forces the model's internal representations into a perfect Gaussian distribution.

Key Results

According to the source, LeWM demonstrates several breakthrough capabilities:

- Parameter efficiency: 15 million parameters (vs. the hundreds of billions to trillions reported for the largest contemporary LLMs)

- Training efficiency: Trains on a single standard GPU in "a few hours"

- Planning speed: Plans 48× faster than massive foundation world models

- Physics understanding: Intrinsically understands physical constraints and detects impossible events

How It Works

The core innovation appears to be the mathematical regularizer that prevents representation collapse. Because the regularizer pushes latent representations toward a Gaussian distribution, which by definition has nonzero spread, the model cannot map all inputs to the same representation. This forces the model to learn meaningful abstractions about how the world works rather than taking shortcuts.

The architecture follows JEPA principles: instead of predicting raw sensory data (pixels, words), it predicts in a compressed "thought space" where concepts are represented as vectors. The regularizer ensures these vectors maintain meaningful separation and structure corresponding to real-world entities and relationships.
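The source does not describe the regularizer itself, so the sketch below is only an illustration of the general idea, not LeWM's method. It assumes a simple moment-matching penalty (the function name `gaussian_moment_penalty` is hypothetical) that is zero when a batch of embeddings has zero mean and identity covariance, as a standard Gaussian would.

```python
import random

def gaussian_moment_penalty(embeddings):
    """Penalty that is zero when the batch has zero mean and identity covariance.

    Matching only the first two moments is a simplification: it does not make
    a distribution Gaussian, but it does rule out collapse to a single point.
    """
    n = len(embeddings)
    dim = len(embeddings[0])
    mean = [sum(z[d] for z in embeddings) / n for d in range(dim)]
    penalty = sum(m ** 2 for m in mean)  # push the mean toward zero
    for i in range(dim):
        for j in range(dim):
            cov_ij = sum(
                (z[i] - mean[i]) * (z[j] - mean[j]) for z in embeddings
            ) / n
            target = 1.0 if i == j else 0.0  # identity covariance
            penalty += (cov_ij - target) ** 2
    return penalty

random.seed(0)
dim = 4
# Collapsed batch: every embedding is the same vector.
collapsed = [[0.3] * dim for _ in range(2000)]
# Well-spread batch: samples from a standard Gaussian.
spread = [[random.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(2000)]

collapsed_penalty = gaussian_moment_penalty(collapsed)
spread_penalty = gaussian_moment_penalty(spread)

print(collapsed_penalty, spread_penalty)
```

A collapsed batch has zero variance in every dimension, so it is heavily penalized, while the Gaussian samples score close to zero. Added to a latent prediction loss, a term of this kind would make the collapsed solution expensive instead of free.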

With just 15 million parameters, the model is small enough to run locally on consumer hardware while reportedly achieving sophisticated world modeling capabilities previously requiring massive compute infrastructure.
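A quick back-of-the-envelope calculation shows why a model of this size fits comfortably on consumer hardware. The only figure taken from the source is the 15 million parameter count. The numeric precisions and the use of an Adam-style optimizer (which keeps two extra buffers per parameter) are assumptions, and activation memory is ignored.

```python
PARAMS = 15_000_000  # parameter count reported by the source

BYTES_PER_PARAM = {"float32": 4, "float16": 2}

def weight_memory_mb(num_params, dtype):
    """Memory needed to store the weights alone, in megabytes (10**6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e6

fp32_mb = weight_memory_mb(PARAMS, "float32")
fp16_mb = weight_memory_mb(PARAMS, "float16")

# Assumption: float32 training with an Adam-style optimizer stores
# weights + gradients + two moment buffers, i.e. four copies in total.
adam_training_mb = 4 * fp32_mb

print(fp32_mb, fp16_mb, adam_training_mb)
```

Under these assumptions the weights take about 60 MB in float32, and weights plus optimizer state stay in the low hundreds of megabytes, a small fraction of the memory on a typical consumer GPU.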

Why This Matters

If the claims hold up to peer review, LeWM represents a significant validation of LeCun's long-standing critique of generative AI approaches. For three years, the industry has pursued scale as the primary path to intelligence, investing billions in trillion-parameter models trained on internet-scale data.

LeWM suggests an alternative path: elegant mathematical constraints may enable more efficient learning of world models than brute-force scaling. The ability to train a capable world model on consumer hardware in hours could democratize AI research and enable new applications where real-time learning and adaptation are required.

The source's claim that the model "intrinsically understands physics" and "instantly detects impossible events" suggests capabilities beyond pattern matching, potentially representing a step toward the common sense reasoning that has eluded purely generative approaches.
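The source does not say how impossible events are detected. One plausible reading, assumed here purely for illustration, is that a world model flags an event as "surprising" when its latent prediction error spikes. The toy sketch below uses a hand-written constant-velocity predictor on a hypothetical 1-D latent trajectory in place of a learned model.

```python
def surprise_scores(trajectory):
    """Squared error of a constant-velocity prediction, from index 2 onward."""
    scores = []
    for t in range(2, len(trajectory)):
        # Predict the next value by extrapolating the last observed step.
        predicted = 2 * trajectory[t - 1] - trajectory[t - 2]
        scores.append((trajectory[t] - predicted) ** 2)
    return scores

# Hypothetical latent position of an object moving at constant speed.
plausible = [0.5 * t for t in range(10)]
# Same motion, but the object jumps 3 units at step 6 (a "teleport").
impossible = [x + (3.0 if t >= 6 else 0.0) for t, x in enumerate(plausible)]

plausible_max = max(surprise_scores(plausible))
impossible_max = max(surprise_scores(impossible))

print(plausible_max, impossible_max)
```

The plausible trajectory is predicted perfectly, while the teleport produces a large error at the moment of the jump. Whether LeWM's reported behavior rests on a comparable surprise signal is something the paper's evaluation details would need to confirm.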

gentic.news Analysis

This development represents a potential inflection point in the long-running architectural debate between autoregressive/generative approaches and energy-based/world-modeling approaches. Yann LeCun, Meta's Chief AI Scientist, has been the most prominent critic of the current LLM paradigm, consistently arguing that generative models are fundamentally inefficient at learning how the world works. His 2022 paper introducing JEPA outlined this alternative vision but acknowledged the representation collapse problem as a major hurdle.

The LeWM paper appears to deliver on LeCun's theoretical framework with a practical implementation. This follows Meta's increased investment in non-generative AI research, including their recent work on the V-JEPA video understanding model. If LeWM's results are reproducible, it could accelerate research into energy-based models and potentially shift investment away from pure scale-based approaches.

However, significant questions remain. The source doesn't provide peer-reviewed benchmark comparisons or details about the evaluation methodology. The claim of "48× faster planning" needs context: faster than which foundation models, and on which tasks? The AI research community will need to examine whether LeWM genuinely learns physical understanding or simply demonstrates better performance on specific benchmark tasks.

Practically, this development could influence hardware investment strategies. If capable world models can run on consumer GPUs, it reduces the moat created by massive compute infrastructure. This aligns with the trend toward more efficient architectures we've covered in our analysis of models like Phi-3 and DeepSeek-Coder, though LeWM represents a fundamentally different architectural approach.

Frequently Asked Questions

What is representation collapse in JEPA models?

Representation collapse occurs when a model's latent representations of different inputs become identical or nearly identical, causing the model to lose discriminative power. In JEPA architectures, this means a model can "cheat" by representing a dog, a car, and a human with the same vector, thus learning nothing about their differences or how they behave in the world.

How does LeWorldModel's regularizer prevent collapse?

While the paper details aren't provided in the source, the regularizer apparently forces the model's internal representations to follow a perfect Gaussian distribution. This mathematical constraint prevents the representations from collapsing to a single point or small region of the latent space, ensuring meaningful separation between different concepts and entities.

Can LeWM replace large language models?

Not directly. LeWM appears focused on world modeling and physical understanding rather than language generation. However, if its approach proves successful, it could inspire hybrid architectures that combine efficient world modeling with language capabilities, potentially reducing the scale required for general intelligence.

What are the practical applications of a 15M-parameter world model?

Such a compact yet capable model could enable real-time robotics control, video game AI, simulation acceleration, and edge computing applications where large models are impractical. The ability to train quickly on consumer hardware also makes it accessible for researchers and developers without massive compute budgets.