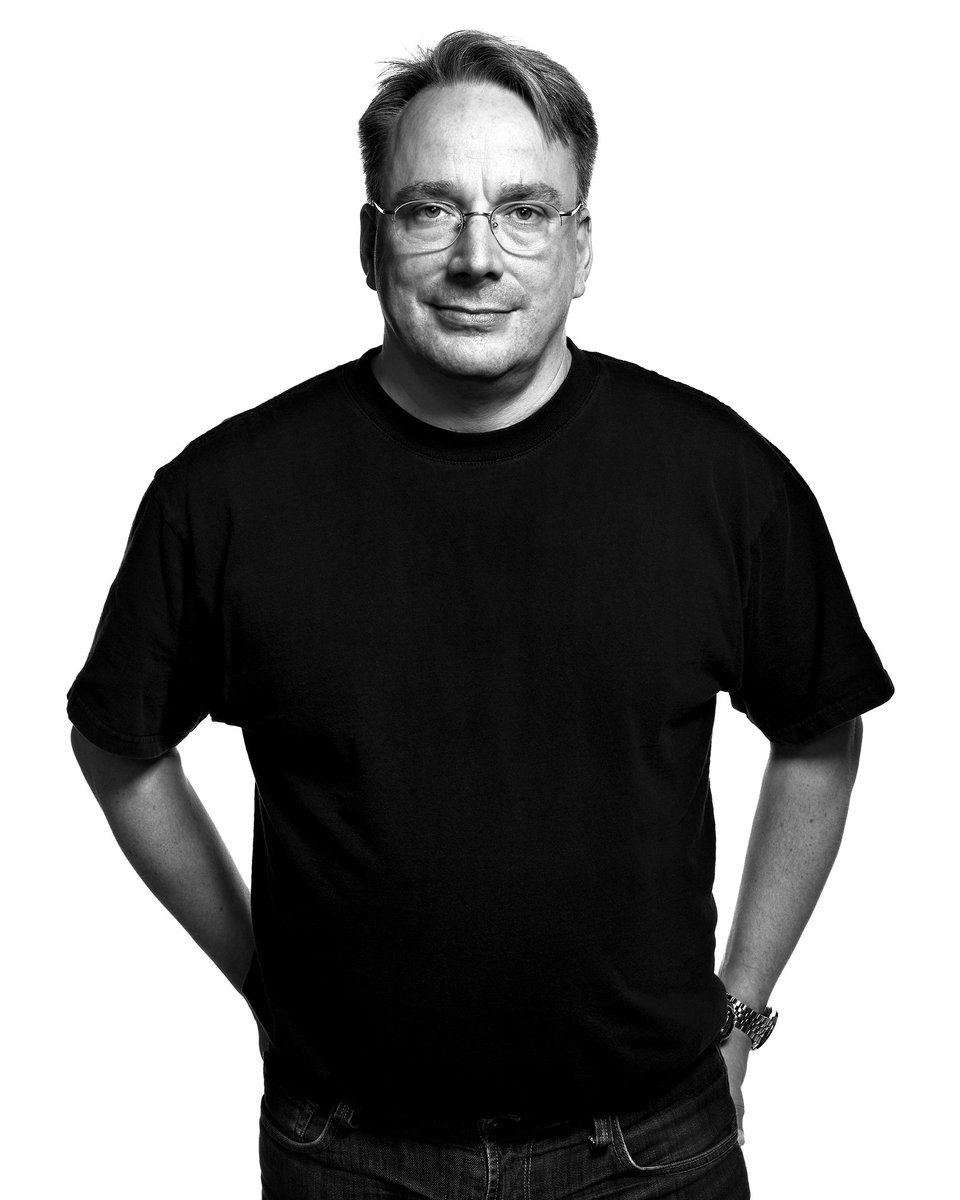

A notable shift in the utility of AI for software engineering is emerging from one of the world's most demanding codebases. According to a report from The Register, Linus Torvalds, the principal maintainer of the Linux kernel, has observed a qualitative improvement in AI-assisted contributions. Where he once dismissed such submissions as "slop," he now notes that AI tools are increasingly identifying genuine bugs and, crucially, proposing functional patches to fix them.

What Happened

The report, relayed via social media, indicates that Torvalds commented on the evolving state of AI-generated code during a discussion at the Open Source Summit. His key observation was that the signal-to-noise ratio has improved dramatically. Instead of producing irrelevant or broken suggestions, AI tools are now capable of analyzing the kernel's massive codebase, pinpointing actual defects, and generating correct patches that can be accepted into the mainline kernel. This represents a tangible, practical application of AI moving beyond experimental demos into real-world maintenance workflows.

Context: The Linux Kernel as the Ultimate Testbed

The Linux kernel is arguably the most rigorous proving ground for any software development tool. It comprises over 30 million lines of code, has a decades-long development history with intricate interdependencies, and is maintained by a distributed, expert-led community with zero tolerance for low-quality contributions. For years, AI coding assistants have been benchmarked on simpler tasks like solving LeetCode problems or generating standalone functions. Their ability to navigate the scale, complexity, and subtle conventions of a project like the Linux kernel was largely unproven and often met with skepticism from veteran developers.

Torvalds's previous characterization of early AI submissions as "slop" reflected this gap between academic promise and production-ready utility. His updated assessment signals that the technology has crossed a critical threshold in understanding context, code semantics, and project-specific norms.

The Implication: From Assistant to Collaborator

This development points to AI's evolution from a basic autocomplete tool to a potential collaborator in systems programming. Identifying a bug in the kernel is a non-trivial task requiring deep comprehension of system behavior. Proposing a correct patch is even more complex, as it must adhere to strict coding standards, avoid introducing regressions, and fit within the existing architectural patterns. That AI tools are now succeeding in this environment suggests significant advances in code representation learning, program analysis, and perhaps retrieval-augmented generation that draws on the kernel's own history.

For maintainers of large open-source projects, this could begin to alleviate the immense burden of triaging issues and reviewing patches. It could also serve as a force multiplier for security, enabling more systematic scanning for certain classes of vulnerabilities across the entire code history.

gentic.news Analysis

This report from Torvalds is a critical data point in the ongoing narrative of AI's integration into professional software development. It aligns with a trend we've been tracking: the movement of AI coding tools from novelty to necessity, particularly for complex, legacy codebases. As we covered in our analysis of GitHub Copilot Workspace, the industry is pushing beyond simple code generation toward full-task automation, including understanding bug reports and formulating solutions.

The Linux kernel community, supported by organizations like the Linux Foundation, has historically been a cautious adopter of new tooling, prioritizing stability and correctness above all. Torvalds's shifted stance is therefore a powerful endorsement. It suggests that the underlying models have improved not just in raw code generation, but in their grasp of system-level context and their ability to reason about patches. That capability is closer to what models such as DeepSeek Coder or Claude 3.5 Sonnet demonstrate on coding benchmarks than to what earlier, more superficial assistants could do.

However, this is not an endpoint. The next questions are about scale and scope. How many of these AI-generated patches are being accepted? What types of bugs are they finding (e.g., memory safety, logic errors, concurrency issues)? Does this tooling risk homogenizing code style or overlooking subtle, non-local implications of a change? The trend is clearly positive, but the most challenging aspects of kernel maintenance (design decisions, performance optimization, and architectural evolution) likely remain firmly in the human domain for the foreseeable future.

Frequently Asked Questions

What did Linus Torvalds say about AI bug reports?

According to the report, Linus Torvalds, the lead maintainer of the Linux kernel, said that AI-generated bug reports and patches are no longer "slop." He observed that they increasingly identify actual bugs in the kernel code and suggest working patches that can be integrated, marking a significant improvement in their practical utility.

Why is the Linux kernel a good test for AI coding tools?

The Linux kernel is one of the largest and most complex open-source software projects in existence, with over 30 million lines of code and strict maintenance standards. Successfully navigating its codebase to find subtle bugs and generate correct patches requires deep understanding of context, conventions, and system architecture, making it a far more rigorous test than typical coding benchmarks.

What does this mean for software developers?

This development indicates that AI coding assistants are evolving from basic autocomplete tools into capable collaborators for complex software maintenance tasks. For developers working on large, legacy codebases, it suggests AI could become a valuable tool for bug detection, patch generation, and potentially security auditing, acting as a force multiplier for maintainer teams.

Are AI tools now writing the Linux kernel?

No. The report indicates AI is assisting with identifying bugs and generating specific patches for those bugs. The overarching design, architectural decisions, code review, and final integration authority remain firmly with human maintainers. The AI is acting as an advanced assistant, not an autonomous developer.