This week, the Linux kernel project—the foundational open-source operating system kernel—established its first formal, project-wide policy explicitly governing the use of artificial intelligence in code contributions. The policy, shaped by the pragmatic philosophy of project founder Linus Torvalds, permits AI-assisted contributions but enforces strict new disclosure requirements and unequivocally places legal and technical liability on the human developer, not the AI model.

Key Takeaways

- The Linux kernel project has established a formal policy permitting AI-assisted code contributions, requiring strict developer disclosure.

- Crucially, the human developer retains full legal and technical liability for any submitted code, treating AI as just another tool.

What Happened: The New Policy

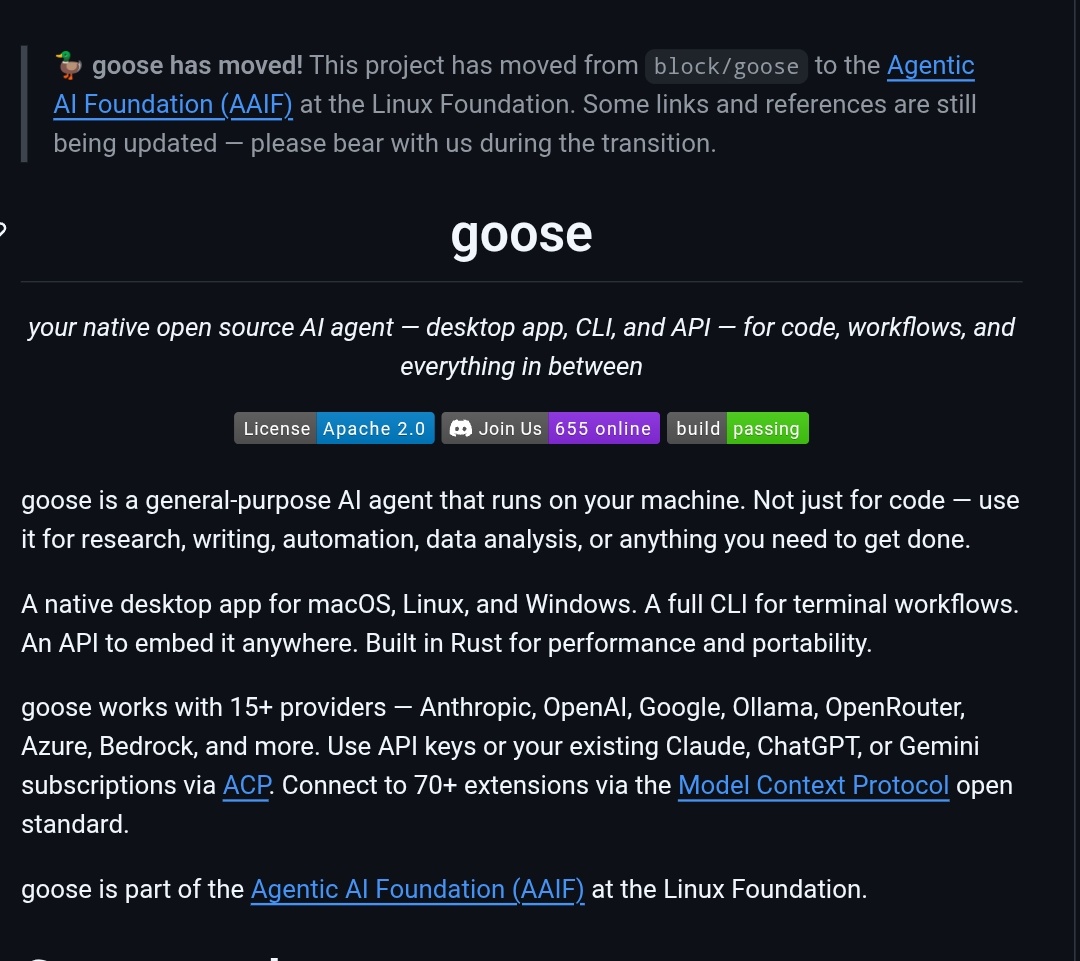

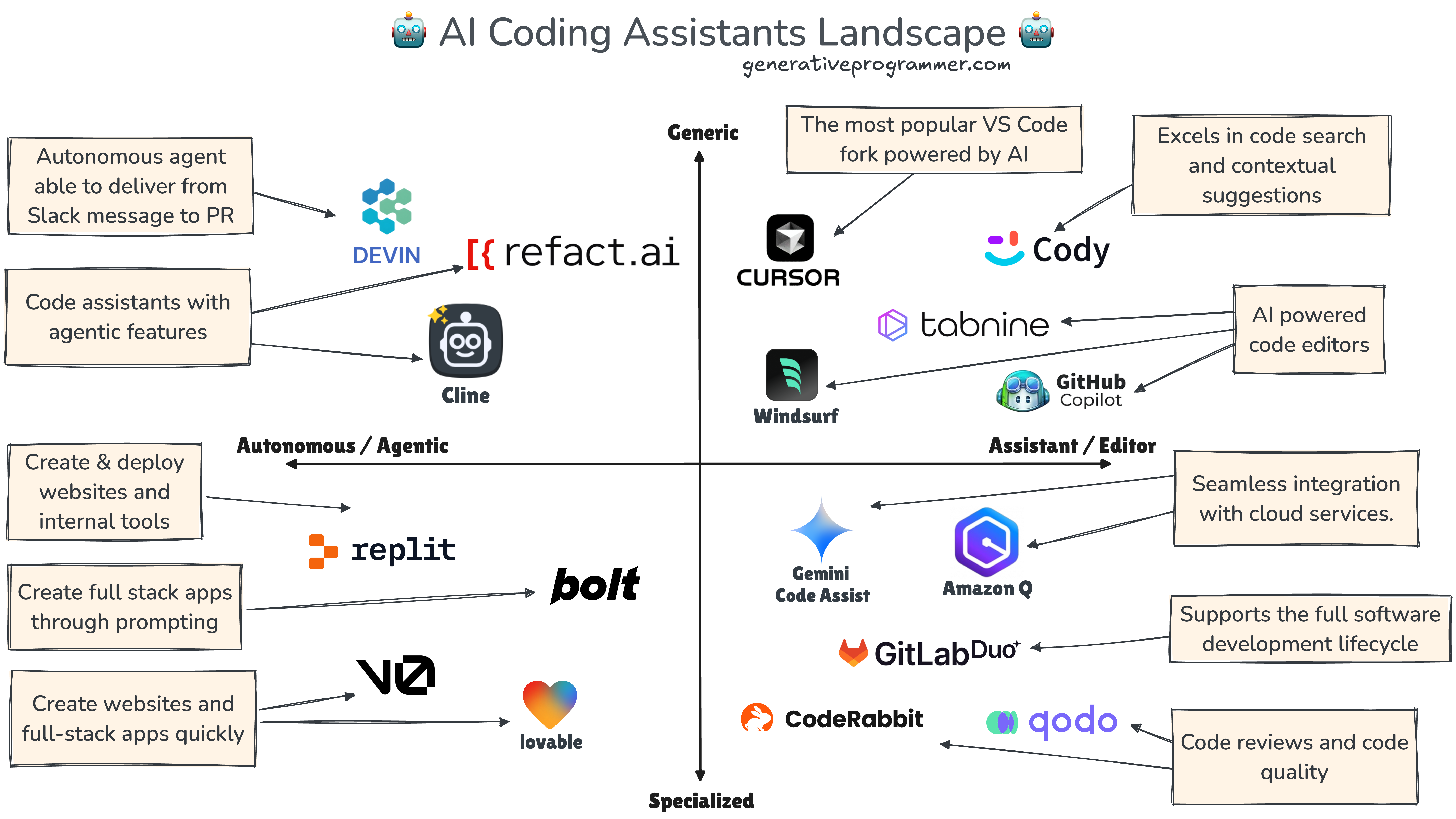

The Linux kernel's leadership has approved a policy that allows developers to use AI tools (like GitHub Copilot, Claude, or GPT) to write or modify code for submission. However, this permission comes with critical guardrails:

- Mandatory Disclosure: Developers must explicitly disclose the use of AI in their patch submissions.

- Human-Only Certification: The Signed-off-by line, which certifies the Developer Certificate of Origin (DCO), must be added by a human. The policy explicitly states AI agents "MUST NOT" add this certification, as it is a legal attestation.

- Unchanged Liability: The human developer whose tag is on the patch bears full responsibility for its correctness, security, and licensing. As the policy clarifies: if an AI introduces a bug, such as a race condition, and the developer approves it, the patch carries the developer's tag, not the model's.
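The disclosure and sign-off rules above can be sketched as a pre-submission check on a commit message. This is a minimal illustration, not the kernel's tooling: the trailer name `Assisted-by` is an assumption (the article does not quote the exact disclosure tag), and `parse_trailers` and `check_patch` are hypothetical helper names. A script like this can only confirm that the lines are present; it cannot prove that a human typed the Signed-off-by line, which is why the policy rests on accountability rather than automation.

```python
import re

# Assumed disclosure trailer name; the article does not specify the exact tag.
DISCLOSURE_TRAILER = "Assisted-by"

# Matches "Key: value" lines such as "Signed-off-by: Name <email>".
TRAILER_RE = re.compile(r"^([A-Za-z][A-Za-z-]*):\s*(.+)$")


def parse_trailers(message: str) -> list[tuple[str, str]]:
    """Collect 'Key: value' lines from the last paragraph of a commit message."""
    paragraphs = [p for p in re.split(r"\n\s*\n", message.strip()) if p.strip()]
    if not paragraphs:
        return []
    trailers = []
    for line in paragraphs[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if match:
            trailers.append((match.group(1), match.group(2).strip()))
    return trailers


def check_patch(message: str, ai_assisted: bool) -> list[str]:
    """Return a list of problems; an empty list means this sketch found none."""
    keys = [key.lower() for key, _ in parse_trailers(message)]
    problems = []
    if "signed-off-by" not in keys:
        problems.append("missing Signed-off-by line from a human developer")
    if ai_assisted and DISCLOSURE_TRAILER.lower() not in keys:
        problems.append(f"AI use not disclosed (expected a {DISCLOSURE_TRAILER} trailer)")
    return problems


if __name__ == "__main__":
    # Hypothetical commit message used only for illustration.
    example = (
        "sched: fix wakeup race\n"
        "\n"
        "Take the runqueue lock before checking the flag.\n"
        "\n"
        "Assisted-by: ExampleAssistant\n"
        "Signed-off-by: Jane Developer <jane@example.org>\n"
    )
    print(check_patch(example, ai_assisted=True))
```

The `ai_assisted` flag is supplied by the developer, which mirrors the policy's design: the tool does not detect AI use, it only checks that a developer who declares it has also flagged it in the patch.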

The Philosophical Stance: AI as a Tool, Not a Shield

The policy's rationale, heavily influenced by Torvalds, is direct and practical. It rejects the notion that more documentation or tool restrictions can prevent bad code. Instead, it focuses on accountability. The core principle is that AI is just another tool, akin to a compiler or a debugger. The kernel project will not attempt to police what software developers run on their local machines but will hold them accountable for what they submit.

This stance is a deliberate contrast to what the source describes as "panic in other parts of the open-source scene," where projects have been overwhelmed by low-quality, AI-generated contributions—often referred to as "AI slop."

The Context: An Open-Source Ecosystem Flooded by "AI Slop"

The Linux kernel's move is a direct response to a growing crisis in open-source maintenance. The source cites several high-profile examples:

- cURL: Creator Daniel Stenberg closed the project's bug bounty program after a flood of hallucinated, nonsensical bug reports generated by AI.

- tldraw: The drawing app began automatically closing external pull requests as a defensive measure against AI-generated spam.

- Node.js & OCaml: These major projects have received massive, often useless, AI-generated patches exceeding 10,000 lines of code.

These incidents illustrate the signal-to-noise ratio collapse that maintainers face, making the Linux kernel's structured, accountability-first approach a notable experiment in governance.

What This Means in Practice

For kernel developers, the rules are now clear: use AI if it helps, but you must flag it, and you are 100% on the hook for the result. For the broader open-source community, the Linux kernel—often a bellwether for systems software engineering practices—has provided a potential blueprint for managing AI's impact: embrace the tool, enforce transparency, and double down on human responsibility.

gentic.news Analysis

This policy is a landmark moment in the convergence of large-scale open-source development and generative AI. It represents a mature, engineering-focused response to a problem that has caused reactive chaos in smaller projects. The Linux kernel's sheer scale (over 30 million lines of code, with thousands of contributors) forces a systemic solution rather than ad-hoc defenses.

This development directly connects to the trend of AI-generated code spam we've tracked across the ecosystem. Our previous coverage of cURL shutting its bug bounty and tldraw's automated PR defenses highlighted the symptom; the Linux kernel's policy is a leading attempt at a cure. It aligns with a growing consensus among veteran maintainers that gatekeeping must happen at the point of human review and legal attestation, not at the toolchain level.

Furthermore, this policy subtly reinforces the irreducible role of human expertise in systems programming. While AI can suggest code, the deep, context-specific knowledge required to validate a kernel patch for correctness, security, and performance cannot be outsourced. The policy institutionalizes this reality. It also creates an interesting precedent for liability chains in software development. By explicitly forbidding AI from adding the Signed-off-by line—a legal certification—the project draws a bright line that could influence how other organizations, including corporations, define accountability for AI-assisted work.

Looking ahead, the key metric will be compliance and enforcement. Will the disclosure rule be consistently followed? How will maintainers handle patches where AI use is suspected but not disclosed? The kernel's success or failure with this policy will likely influence similar decisions at other major open-source organizations, such as the Apache Software Foundation or the Eclipse Foundation, in the coming year.

Frequently Asked Questions

What does the new Linux kernel AI policy actually require?

The policy requires any developer submitting code to the Linux kernel to explicitly disclose if they used an AI-assisted tool (like Copilot or Claude) in creating the patch. Most importantly, the human developer must personally add the Signed-off-by line, taking full legal and technical responsibility for the code's contents. The AI itself cannot be listed as a contributor or certifier.

Why is the Linux kernel allowing AI-generated code?

The project's leadership, headed by Linus Torvalds, views AI as simply another tool in a developer's toolkit, similar to a sophisticated compiler or IDE. The policy's philosophy is that banning tools is ineffective; instead, the project should focus on holding the human developer accountable for the quality and correctness of whatever they submit, regardless of how it was authored.

What is "AI slop" in open source?

"AI slop" is a colloquial term for the flood of low-quality, often hallucinated or nonsensical contributions generated by AI and submitted to open-source projects. This includes bogus bug reports, massive irrelevant patches, and poorly reasoned feature requests. It overwhelms volunteer maintainers, wasting time and resources, and has led several projects to implement defensive measures like closing bug bounties or automating PR rejections.

How does this policy affect developer liability?

It makes the liability framework explicit. Before, the Signed-off-by line already meant the developer certified the code's origin and license. The new policy clarifies that this human certification covers AI-assisted code. If an AI introduces a subtle kernel bug that causes a system crash or security vulnerability, the human developer who signed off on the patch is responsible, not the AI model's creator (e.g., OpenAI or Anthropic).