Key Takeaways

- A new arXiv paper introduces LLM-HYPER, a framework that treats large language models as hypernetworks to generate parameters for click-through rate estimators in a training-free manner.

- It uses multimodal ad content and few-shot prompting to infer feature weights, drastically reducing the cold-start period for new promotional ads, and has been deployed on a major U.S. e-commerce platform.

What Happened

A new research paper, LLM-HYPER, was posted to arXiv on April 13, 2026, proposing a novel solution to a persistent problem in online advertising: the cold-start problem for new promotional ads. When a new ad campaign launches, it lacks historical user interaction data (clicks, conversions), making traditional Click-Through Rate (CTR) prediction models ineffective. This leads to poor initial performance and wasted ad spend during the critical launch period.

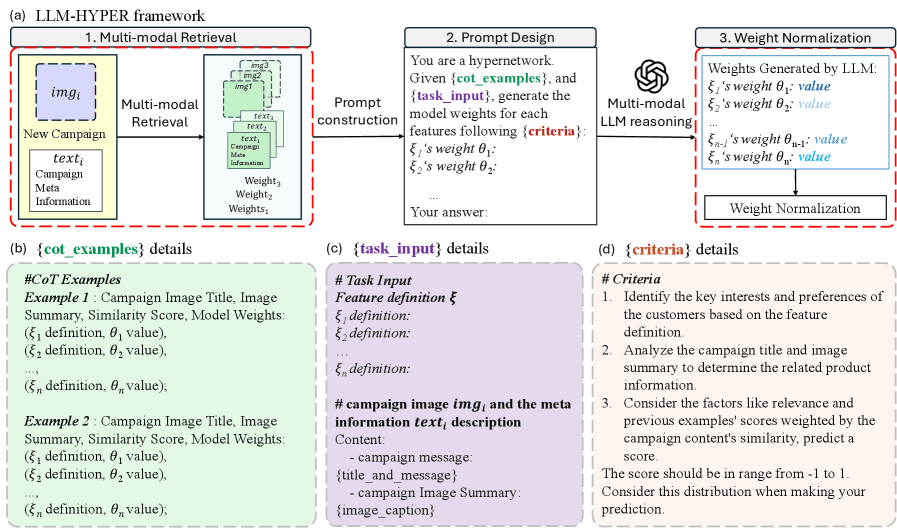

The core innovation of LLM-HYPER is its treatment of a Large Language Model (LLM) as a hypernetwork. Instead of training a CTR model from scratch with sparse data, the framework uses the LLM to directly generate the parameters (the weights) of a linear CTR predictor. This is done in a training-free, few-shot manner.
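To make the hypernetwork idea concrete, here is a minimal sketch of how LLM-generated weights might be plugged into a linear CTR predictor. The JSON schema, the feature names, and the logistic (sigmoid) link are assumptions made for illustration; the article does not describe the paper's actual output format.

```python
import json
import math

# Hypothetical LLM output: the schema and feature names are illustrative only.
llm_response = (
    '{"bias": -3.0, "weights": '
    '{"discount_keyword": 0.8, "lifestyle_image": 0.3, "urgent_cta": 0.5}}'
)

def parse_linear_model(response_text):
    """Parse the LLM's JSON output into (bias, weights) for a linear CTR model."""
    payload = json.loads(response_text)
    weights = {name: float(value) for name, value in payload["weights"].items()}
    return float(payload["bias"]), weights

def predict_ctr(bias, weights, features):
    """Assumed logistic-linear estimate: sigmoid(bias + sum of weight * feature)."""
    logit = bias + sum(weights.get(name, 0.0) * value
                       for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-logit))

bias, weights = parse_linear_model(llm_response)
# Score an ad that has a discount keyword and an urgent call-to-action.
ctr = predict_ctr(bias, weights, {"discount_keyword": 1.0, "urgent_cta": 1.0})
```

No gradient step appears anywhere in this sketch: the LLM's output is the model, which is what "training-free" means here.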

Technical Details

The LLM-HYPER pipeline works as follows:

- Multimodal Content Understanding: For a new "cold" ad, the system extracts both text and image content. It uses embeddings from CLIP (a vision-language model developed by OpenAI) to create a semantic representation of the ad.

- Semantic Retrieval: Using the CLIP embedding, the system retrieves a few examples of past, semantically similar ad campaigns that do have historical performance data.

- Chain-of-Thought Prompting: These retrieved examples are formatted into a structured prompt with demonstrations. The prompt guides the LLM through a reasoning process about:

  - Customer Intent: What is the likely goal of a user engaging with this ad?

  - Feature Influence: Which aspects of the ad (e.g., headline keywords, visual style, call-to-action) are most influential for clicks?

  - Content Relevance: How well does the ad content match potential user queries or interests?

- Weight Generation & Calibration: Based on this reasoning, the LLM outputs numerical weights for each feature in the linear CTR model. The researchers introduced specific normalization and calibration techniques to ensure these LLM-generated weights produce stable, production-ready CTR score distributions that align with the platform's existing models.

- Deployment: The generated linear model is immediately used for CTR prediction, bypassing the traditional data collection and training period.
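The retrieval and prompting steps above can be sketched roughly as follows. This is an illustration under stated assumptions, not the paper's implementation: the toy 3-dimensional vectors stand in for real CLIP embeddings, and the function names, record fields, and prompt wording are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve_similar_ads(query_embedding, historical_ads, k=3):
    """Return the k historical ads whose embeddings are closest to the query."""
    ranked = sorted(
        historical_ads,
        key=lambda ad: cosine_similarity(query_embedding, ad["embedding"]),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(new_ad_text, demonstrations, feature_names):
    """Format retrieved ads as few-shot demonstrations (hypothetical wording)."""
    lines = ["You estimate feature weights for a linear CTR model.", ""]
    for i, demo in enumerate(demonstrations, start=1):
        lines.append(f"Example {i}: {demo['text']}")
        lines.append(f"Weights: {demo['weights']}")
        lines.append("")
    lines.append(f"New ad: {new_ad_text}")
    lines.append("Reason step by step about customer intent, "
                 "feature influence, and content relevance.")
    lines.append("Then output one numeric weight for each of: "
                 + ", ".join(feature_names))
    return "\n".join(lines)

# Toy data: embeddings and weights are made up for the example.
historical_ads = [
    {"text": "50% off running shoes", "embedding": [0.9, 0.1, 0.0],
     "weights": {"discount_keyword": 0.9}},
    {"text": "New espresso machine", "embedding": [0.0, 0.2, 0.9],
     "weights": {"discount_keyword": 0.1}},
    {"text": "Trail sneakers sale", "embedding": [0.8, 0.3, 0.1],
     "weights": {"discount_keyword": 0.7}},
]
demos = retrieve_similar_ads([1.0, 0.2, 0.0], historical_ads, k=2)
prompt = build_prompt("Weekend deal on hiking boots", demos,
                      ["discount_keyword", "urgent_cta"])
```

In a production setting the brute-force sort would presumably be replaced by an approximate nearest-neighbor index, and the prompt string would be sent to the LLM before the weights are parsed and calibrated.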

Retail & Luxury Implications

The implications for retail and luxury advertising are direct and significant. This is not a tangential research concept; it's a deployed system solving a core business problem.

1. Launching New Products & Campaigns: Luxury houses frequently launch limited-edition collections, seasonal campaigns, or new product lines. Each launch is a cold-start scenario. LLM-HYPER could enable these brands to achieve effective, personalized ad targeting from day one, maximizing the impact and ROI of their high-stakes marketing campaigns.

2. Dynamic Creative Optimization (DCO) at Scale: Retailers running DCO platforms test thousands of ad creative variations (images, copy). LLM-HYPER's ability to infer CTR potential from content alone could be used to intelligently seed the initial weights for new creatives, accelerating the optimization cycle and reducing wasted spend on poorly performing variants.

3. Bridging Brand and Performance Marketing: The framework's reliance on reasoning about "customer intent" and "content relevance" based on ad assets aligns with luxury marketing's focus on brand narrative and aesthetic. It offers a path to make high-concept, brand-driven creative immediately actionable in performance advertising channels.

4. Operational Efficiency: The training-free nature of the approach reduces computational costs and engineering overhead associated with continually retraining cold-start models. For a retail media network or a brand's in-house ad team, this simplifies the tech stack.

The paper reports compelling results: a 55.9% improvement in NDCG@10 over cold-start baselines in offline tests, and successful online A/B tests on a top U.S. e-commerce platform, leading to production deployment. This validates the approach in a real-world, large-scale retail environment.
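For readers unfamiliar with the metric, NDCG@10 measures how well a ranking places the most relevant items in its top ten positions. Below is a minimal sketch using linear gain and a log2 position discount; the paper may use a different gain function or relevance definition, so treat this as one common variant rather than the authors' exact evaluation code.

```python
import math

def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the first k positions (linear gain)."""
    return sum(rel / math.log2(rank + 2)
               for rank, rel in enumerate(relevances[:k]))

def ndcg_at_k(relevances_in_predicted_order, k=10):
    """DCG of the predicted ordering divided by the DCG of the ideal ordering."""
    ideal = sorted(relevances_in_predicted_order, reverse=True)
    ideal_dcg = dcg_at_k(ideal, k)
    if ideal_dcg == 0.0:
        return 0.0
    return dcg_at_k(relevances_in_predicted_order, k) / ideal_dcg

# Relevance labels listed in the order the model ranked the ads.
perfect = ndcg_at_k([3, 2, 1, 0])   # best ad ranked first
swapped = ndcg_at_k([0, 2, 1, 3])   # best ad ranked last
```

A ranking that already sorts ads by true relevance scores 1.0, and any misordering lowers the score, with mistakes near the top penalized most.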

Implementation Approach

For a retail AI team considering this approach, the technical requirements are substantial but clear:

- Multimodal Foundation Models: Access to a capable LLM (e.g., GPT-4, Claude 3) and a vision-language model like CLIP or a competitor (the Knowledge Graph notes Penguin-VL as a competitor to CLIP).

- Vector Database: A system to store and retrieve CLIP embeddings of historical ad creatives and campaigns.

- Prompt Engineering & Orchestration: Significant effort is needed to design the few-shot Chain-of-Thought prompts and the pipeline that retrieves examples, formats the prompt, calls the LLM, and post-processes the output.

- Calibration Infrastructure: Implementing the proposed normalization and calibration techniques is critical for production stability. This requires integration with the existing ad serving and model scoring infrastructure.

The complexity is high, but the payoff—eliminating the cold-start period—is a major competitive advantage in performance marketing.
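The article does not spell out the paper's normalization and calibration techniques, so the following is only a plausible stand-in: rescale the generated weights to a reference L2 norm, then choose the bias by bisection so that the mean predicted CTR on a sample of traffic matches a target rate. All function names, sample data, and targets are assumptions.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def rescale_weights(weights, target_norm):
    """Scale weights so their L2 norm equals target_norm."""
    norm = math.sqrt(sum(w * w for w in weights))
    if norm == 0.0:
        return list(weights)
    return [w * target_norm / norm for w in weights]

def mean_ctr(bias, weights, samples):
    """Average predicted CTR over a list of feature rows."""
    total = sum(sigmoid(bias + sum(w * x for w, x in zip(weights, row)))
                for row in samples)
    return total / len(samples)

def fit_bias(weights, samples, target_ctr, lo=-20.0, hi=20.0, iterations=60):
    """Bisection on the bias; mean CTR increases monotonically with the bias."""
    for _ in range(iterations):
        mid = (lo + hi) / 2.0
        if mean_ctr(mid, weights, samples) < target_ctr:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0

# Made-up raw weights and feature rows, purely for illustration.
raw_weights = [4.0, -2.0, 1.0]
samples = [[1.0, 0.0, 1.0], [0.0, 1.0, 0.0], [1.0, 1.0, 0.0]]
weights = rescale_weights(raw_weights, target_norm=1.0)
bias = fit_bias(weights, samples, target_ctr=0.02)
calibrated_mean = mean_ctr(bias, weights, samples)
```

The point of a step like this is that scores from the generated model land in the same range as scores from existing models, so downstream ranking and bidding logic does not need special handling for cold ads.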

Governance & Risk Assessment

- Transparency & Explainability: While the LLM provides a "reasoning" chain, the direct generation of model weights is a black box. Retailers must establish governance to audit these weights for potential bias (e.g., unintentionally deprioritizing certain customer segments based on ad imagery).

- Brand Safety: The system's performance hinges on the quality and relevance of the retrieved past campaigns. Curating a "demonstration set" that reflects brand values and safe content is essential.

- Model Drift: The approach is static per ad; it doesn't continuously learn from new data. A governance plan is needed to decide when to switch from the LLM-generated cold-start model to a traditionally trained, data-rich model.

- Cost: LLM API costs for generating weights for millions of ad variations could be significant and must be factored into the business case.
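The hand-off decision raised under Model Drift could start as a simple traffic threshold. The sketch below is hypothetical: the threshold values are placeholders rather than figures from the paper, and a real policy would likely also compare the two models' accuracy on held-out data.

```python
from dataclasses import dataclass

@dataclass
class AdStats:
    impressions: int
    clicks: int

def choose_model(stats, min_impressions=10_000, min_clicks=50):
    """Pick which model scores the ad, based on traffic observed so far.

    Both thresholds are illustrative placeholders, not recommended values.
    """
    if stats.impressions >= min_impressions and stats.clicks >= min_clicks:
        return "trained"
    return "llm_generated"

fresh_ad = choose_model(AdStats(impressions=500, clicks=3))
mature_ad = choose_model(AdStats(impressions=20_000, clicks=120))
```

Writing the rule down explicitly also gives auditors a single place to check when and why an ad moved from the LLM-generated weights to a trained model.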

gentic.news Analysis

This paper arrives amidst a clear trend on arXiv focusing on the intersection of generative AI and recommendation systems. The Knowledge Graph shows generative recommendation has been mentioned in 11 prior articles, and about two weeks before this paper, on March 31, a preprint titled 'Cold-Starts in Generative Recommendation: A Reproducibility Study' was posted to arXiv. LLM-HYPER provides a concrete, deployed answer to the very challenge that study aimed to evaluate.

The framework's use of CLIP for semantic retrieval connects to a broader industry movement toward multimodal understanding in retail, a trend we've covered in prior analyses of vision-language models. The paper also aligns thematically with other recent work on arXiv, such as the HARPO framework for conversational recommendation we covered on April 14, indicating a sustained research push to make recommendation and advertising systems more adaptive and context-aware.

For luxury and retail AI leaders, the key takeaway is the operationalization of LLM reasoning. LLM-HYPER doesn't use the LLM to write ad copy or analyze sentiment; it uses it as a core, inferential component of a mission-critical prediction system. This represents a maturation of LLM application beyond content generation into the heart of decision engines. The reported production deployment on a major U.S. platform signals that this is beyond academic speculation: it is a viable architecture that directly addresses the multibillion-dollar problem of cold-start advertising inefficiency.