For years, the dominant question in applied AI has been "which model should we use?" A new paper from Stanford and MIT researchers argues that's only half the story. The real performance lever might be the system code that wraps the model—the often-overlooked "harness" that manages context, tools, retrieval, and workflow execution.

Their research demonstrates that with the same underlying LLM, changing only the harness code can create up to a 6× performance gap on the same benchmark. This finding fundamentally shifts optimization priorities from model selection to system architecture.

What the Researchers Built: Meta-Harness

The team introduced Meta-Harness, an outer-loop optimization system that automatically improves harness code through iterative experimentation. Unlike traditional approaches that give an optimizing agent only a final score or brief summary, Meta-Harness provides rich diagnostic access through a filesystem-like interface.

This interface gives the agent access to:

- Prior harness code versions

- Execution logs and traces

- Intermediate results and failure patterns

- Tool usage histories

The core insight: better visibility into why a harness succeeds or fails enables more intelligent optimization. The system can learn from past attempts, identify patterns in failures, and make targeted improvements rather than random exploration.
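To make the idea of a filesystem-like interface concrete, here is a minimal sketch of how past attempts might be laid out on disk so an agent can browse them. The directory layout, the file names (`harness.py`, `trace.jsonl`, `score.json`), and the helpers `record_run` and `list_runs` are all assumptions made for illustration; the article does not describe the actual Meta-Harness layout.

```python
import json
import tempfile
from pathlib import Path


def record_run(root: Path, run_id: str, harness_source: str,
               trace_events: list[dict], score: float) -> Path:
    """Write one harness attempt to disk (hypothetical layout)."""
    run_dir = root / run_id
    run_dir.mkdir(parents=True, exist_ok=False)
    (run_dir / "harness.py").write_text(harness_source)
    # One JSON object per line keeps traces easy to grep and to stream.
    with (run_dir / "trace.jsonl").open("w") as fh:
        for event in trace_events:
            fh.write(json.dumps(event) + "\n")
    (run_dir / "score.json").write_text(json.dumps({"score": score}))
    return run_dir


def list_runs(root: Path) -> list[tuple[str, float]]:
    """Return (run_id, score) pairs, best score first."""
    runs = []
    for run_dir in root.iterdir():
        if run_dir.is_dir():
            score = json.loads((run_dir / "score.json").read_text())["score"]
            runs.append((run_dir.name, score))
    return sorted(runs, key=lambda item: item[1], reverse=True)


# Toy data: two attempts with made-up sources, traces, and scores.
archive = Path(tempfile.mkdtemp())
record_run(archive, "run_001", "def harness(q): return q\n",
           [{"step": 1, "tool": "search", "ok": False}], 0.42)
record_run(archive, "run_002", "def harness(q): return q.strip()\n",
           [{"step": 1, "tool": "search", "ok": True}], 0.61)
best_runs = list_runs(archive)
```

Keeping code, traces, and scores side by side per run means an agent with ordinary file tools can compare any two attempts without a custom API.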

Key Results: Harness Optimization Delivers Substantial Gains

The paper presents compelling evidence across multiple challenging domains:

- Online Text Classification - +7.7 points over SOTA context management, using 4× fewer context tokens

- Retrieval-Augmented Math Reasoning - +4.7 points on average across 5 held-out models, on 200 IMO-level problems

- Agentic Coding (TerminalBench-2) - Beats strong hand-engineered baselines; discovered harnesses outperform manual designs

The most striking finding: the performance variation attributable to harness design alone (up to 6× on the same benchmark with the same model) suggests that many reported model comparisons might be confounded by harness implementation differences.

How Meta-Harness Works: Filesystem Abstraction Enables Learning

Meta-Harness frames harness optimization as an outer-loop search problem in which an agent modifies harness code based on execution feedback. The key innovation is the filesystem abstraction that exposes:

- Code evolution history - Previous versions and their performance

- Execution traces - Detailed logs of what happened during runs

- Failure patterns - Systematic errors that recur across attempts

- Resource usage - Token consumption, latency, memory patterns

This approach contrasts with typical black-box optimization where the agent sees only final scores. By understanding why certain harness designs work better, Meta-Harness can make informed, compositional improvements rather than random mutations.
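As a rough illustration of what "failure patterns" could mean in practice, the sketch below counts which error kinds recur across several runs. The trace format (a list of events with an optional `error` field), the error names, and the function `recurring_failures` are assumptions for this example, not something taken from the paper.

```python
from collections import Counter


def recurring_failures(traces: list[list[dict]],
                       min_runs: int = 2) -> list[tuple[str, int]]:
    """Return error kinds that appear in at least `min_runs` distinct runs."""
    runs_per_error = Counter()
    for trace in traces:
        # Count each error kind once per run so one noisy run does not dominate.
        kinds = {event["error"] for event in trace if event.get("error")}
        runs_per_error.update(kinds)
    frequent = [(kind, n) for kind, n in runs_per_error.items() if n >= min_runs]
    # Most widespread first; ties broken alphabetically for stable output.
    return sorted(frequent, key=lambda item: (-item[1], item[0]))


# Toy traces from four hypothetical runs.
traces = [
    [{"step": 1, "error": "context_overflow"}, {"step": 2, "error": None}],
    [{"step": 1, "error": "context_overflow"}, {"step": 2, "error": "tool_timeout"}],
    [{"step": 1, "error": "context_overflow"}, {"step": 3, "error": "tool_timeout"}],
    [{"step": 1, "error": "bad_json"}],
]
patterns = recurring_failures(traces)
```

A black-box optimizer would only see four scores here; with the traces, the same data points to context overflow as the most widespread problem.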

The system operates in a continuous improvement loop:

- Execute current harness with full instrumentation

- Store execution traces in the filesystem interface

- Agent analyzes patterns and proposes harness modifications

- Test modified harness and measure improvement

- Repeat with accumulated knowledge
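The loop above can be sketched in a few lines. This is a toy version under stated assumptions: the harness is reduced to a single hypothetical setting (`context_items`), and `toy_evaluate` and `toy_propose` are stand-ins for a real benchmark run and a real LLM-based proposer. It is not the paper's implementation.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Attempt:
    harness: dict
    score: float
    trace: list[str]


def optimize(initial: dict,
             evaluate: Callable[[dict], tuple[float, list[str]]],
             propose: Callable[[list[Attempt]], dict],
             rounds: int) -> tuple[Attempt, list[Attempt]]:
    """Run, store, analyze, modify, repeat; return the best attempt and history."""
    history: list[Attempt] = []
    score, trace = evaluate(initial)                      # execute with instrumentation
    history.append(Attempt(initial, score, trace))        # store the trace
    for _ in range(rounds):
        candidate = propose(history)                      # proposer sees all past attempts
        score, trace = evaluate(candidate)                # test the modified harness
        history.append(Attempt(candidate, score, trace))  # accumulate knowledge
    return max(history, key=lambda a: a.score), history


def toy_evaluate(harness: dict) -> tuple[float, list[str]]:
    """Stand-in benchmark: score peaks when context_items == 4."""
    n = harness["context_items"]
    score = 1.0 - abs(n - 4) / 10
    if n < 4:
        return score, ["too_little_context"]
    if n > 4:
        return score, ["context_overflow"]
    return score, []


def toy_propose(history: list[Attempt]) -> dict:
    """Stand-in proposer: read the best attempt's trace and adjust one setting."""
    best = max(history, key=lambda a: a.score)
    n = best.harness["context_items"]
    if "too_little_context" in best.trace:
        n += 1
    elif "context_overflow" in best.trace:
        n -= 1
    return {"context_items": n}


best, history = optimize({"context_items": 1}, toy_evaluate, toy_propose, rounds=5)
```

The point of the sketch is the data flow: the proposer receives the full history, traces included, rather than only the latest score.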

Why This Matters: Shifting from Model-Centric to System-Centric AI

This research shifts how we think about AI system performance. For years, the industry has focused overwhelmingly on model capabilities: parameters, training data, architecture innovations. The harness has been treated as an implementation detail.

Meta-Harness demonstrates the harness is equally important. In production systems, the harness determines:

- Reliability - How failures are detected and recovered

- Tool usage - When and how to call external APIs

- Context management - What information to retain and present

- Cost efficiency - Token usage and computational overhead

- Latency - Parallelization and caching strategies
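Two of the responsibilities listed above, context management and reliability, fit in a very small sketch. Everything here is hypothetical: `run_harness`, its parameters, the character-based budget, and the `flaky_model` stub are illustrations only, and `model` stands for any callable that maps a prompt string to an answer string.

```python
from typing import Callable


def run_harness(question: str,
                documents: list[str],
                model: Callable[[str], str],
                max_context_chars: int = 200,
                max_retries: int = 2) -> str:
    """Build a bounded prompt, then call the model with simple retries."""
    # Context management: keep documents in order until the budget is used up.
    context: list[str] = []
    used = 0
    for doc in documents:
        if used + len(doc) > max_context_chars:
            break
        context.append(doc)
        used += len(doc)
    prompt = "\n".join(context + [question])

    # Reliability: retry failures a fixed number of times before giving up.
    last_error = None
    for _ in range(max_retries + 1):
        try:
            return model(prompt)
        except RuntimeError as exc:
            last_error = exc
    raise RuntimeError(f"model failed after {max_retries + 1} attempts") from last_error


# Stub model that fails once, then succeeds (stands in for a real LLM call).
calls = {"n": 0}


def flaky_model(prompt: str) -> str:
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("transient failure")
    return f"answer based on {len(prompt)} chars"


answer = run_harness("What is 2 + 2?", ["a" * 120, "b" * 120], flaky_model)
```

Both the budget and the retry count are harness decisions; changing either alters cost and success rate without touching the model.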

The paper's conclusion is unambiguous: "The harness around a model matters as much as the model itself." This has immediate practical implications:

- Benchmarking practices need revision - Results should report both model and harness specifications

- Deployment optimization should prioritize harness design - Not just model fine-tuning

- Research should study harness patterns systematically - Not treat them as incidental implementation
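One lightweight way to act on the first point is to attach a harness fingerprint to every reported score. The sketch below is an assumption about what such a record could look like; the field names, the `result_record` helper, and the placeholder model name are invented for illustration.

```python
import hashlib


def result_record(model_name: str, harness_source: str,
                  harness_settings: dict, score: float) -> dict:
    """Bundle a score with enough detail to identify the harness that produced it."""
    return {
        "model": model_name,
        # Hashing the source makes silent harness changes visible in reports.
        "harness_sha256": hashlib.sha256(harness_source.encode("utf-8")).hexdigest(),
        "harness_settings": harness_settings,
        "score": score,
    }


# Same placeholder model, two different harness sources, made-up scores.
record_a = result_record("example-model", "def harness(q): return q\n",
                         {"max_context_chars": 200}, 0.42)
record_b = result_record("example-model", "def harness(q): return q.strip()\n",
                         {"max_context_chars": 200}, 0.61)
```

Two results with the same `model` but different `harness_sha256` values are then clearly not a like-for-like model comparison.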

gentic.news Analysis

This research arrives at a critical inflection point in AI deployment. As model capabilities converge across major providers (OpenAI's GPT-5, Anthropic's Claude 3.7, Google's Gemini 2.0), competitive differentiation increasingly shifts to system design rather than raw model performance. Meta-Harness provides the methodological foundation for treating harness optimization as a first-class engineering discipline.

The timing aligns with several industry trends we've tracked. First, the rising importance of agentic workflows, where LLMs orchestrate multi-step processes with tools, makes harness design considerably more complex. Second, the proliferation of evaluation frameworks (SWE-bench, AgentBench, LiveCodeBench) creates precisely the structured feedback loops that Meta-Harness leverages. Third, increasing attention to inference cost optimization makes token-efficient context management a business imperative, not just a technical concern.

This work also connects to broader movements toward observability-driven development in AI systems. Just as software engineering evolved from print-debugging to comprehensive logging and tracing, AI system development needs similar instrumentation. Meta-Harness's filesystem abstraction provides exactly this: structured observability data that fuels automated improvement.

Looking forward, we expect to see three developments: (1) harness optimization becoming a standard MLOps practice, integrated into continuous deployment pipelines; (2) the emergence of harness design patterns and best practices, similar to software architecture patterns; and (3) specialized tools and platforms for harness development, testing, and optimization. The 6× performance gap suggests the ROI on harness investment could dwarf many model-focused optimizations.

Frequently Asked Questions

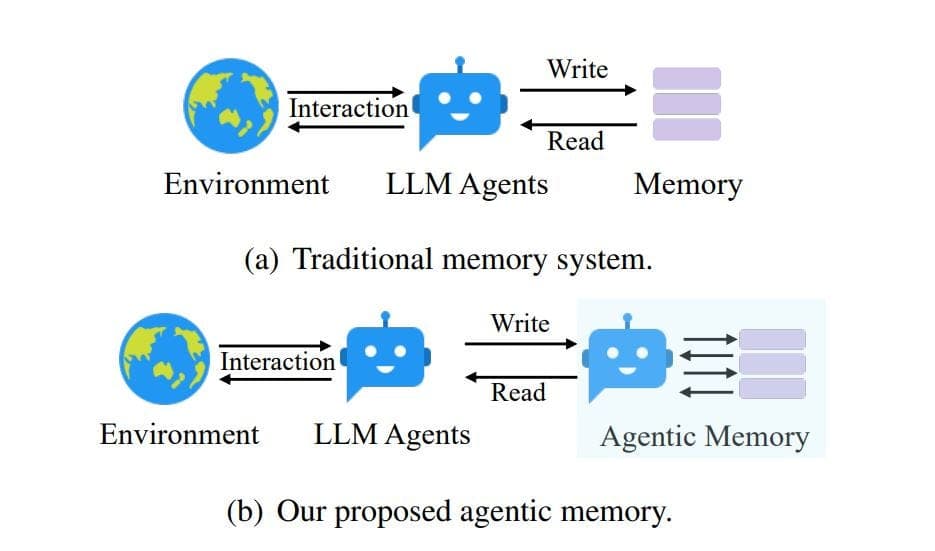

What exactly is a "model harness" in AI systems?

A model harness is the system code that wraps around a core AI model (like an LLM) to manage its operation in practical applications. It handles context window management (what information to feed the model), tool calling (when to use calculators, APIs, or databases), workflow orchestration (multi-step reasoning processes), error recovery, and output formatting. Think of it as the operating system that makes the raw model useful for specific tasks.

How does Meta-Harness differ from traditional prompt engineering or fine-tuning?

Meta-Harness operates at a higher abstraction level than prompt engineering (which modifies inputs) or fine-tuning (which modifies model weights). It optimizes the system architecture that surrounds the model—the actual code that decides how to use the model, when to call tools, how to manage memory, and how to recover from failures. This is structural optimization rather than parametric optimization.

Can organizations use Meta-Harness today, or is it just research?

The paper presents a research framework, but the core concepts are immediately applicable. Organizations can implement the key insight—systematically testing and optimizing harness code with rich instrumentation—using existing tools. The specific Meta-Harness implementation will likely be open-sourced, following Stanford's typical practice with significant AI research contributions.

Does this mean model choice doesn't matter anymore?

No, model choice still matters significantly, but it's not the only thing that matters. The research shows that harness design can create performance variations as large as model differences. An optimal system requires both a capable model and a well-designed harness. The practical implication is that organizations should allocate engineering resources to harness optimization, not just model selection.

Paper: "Meta-Harness: End-to-End Optimization of Model Harnesses"

Authors: Stanford University & MIT researchers

arXiv: 2603.28052