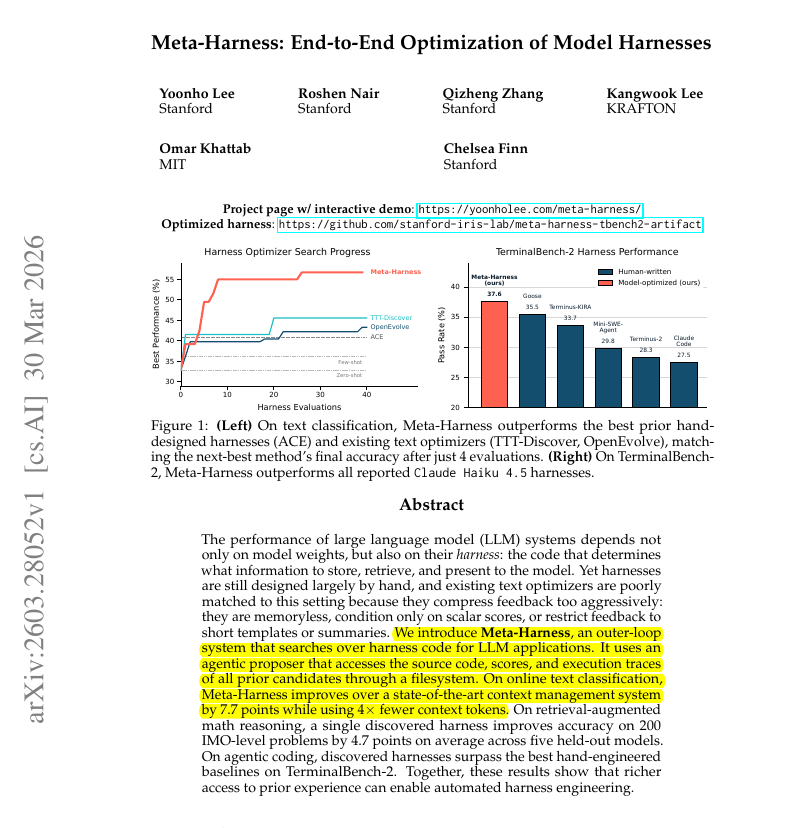

A new research paper from Stanford University and MIT argues that the performance of an AI system in practice is determined not only by the underlying foundation model but also by the system that surrounds it, which the authors call the "Model Harness."

What Happened

The paper, titled "Model Harnesses: The Overlooked Determinant of AI System Performance," was highlighted by AI researcher Rohan Pandey. The core thesis is that the ecosystem of prompts, tools, evaluation frameworks, and infrastructure that wraps a base model (like GPT-4 or Llama 3) is a critical, often under-appreciated, component of what users experience as "AI performance."

Context

This concept formalizes an observation familiar to practitioners: two teams using the same base model can achieve wildly different results based on their engineering approach. The "harness" includes elements such as:

- Prompt Engineering & Chaining: The specific instructions, few-shot examples, and sequences of prompts used to guide the model.

- Tool Integration: The APIs, functions, and external data sources the model is given access to (e.g., code execution, web search, calculators).

- Evaluation & Optimization Loops: The benchmarks and testing methodologies used to iteratively improve the system.

- Infrastructure & Deployment: The serving infrastructure, caching, and latency optimizations that affect real-world usability.
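To make the four elements above concrete, here is a minimal sketch of how they might fit together in code. It is an illustration under stated assumptions, not the paper's implementation: `Harness`, `calculator`, `stub_model`, and `TEMPLATE` are hypothetical names, and the "model" is a stub that simply repeats the tool result so the example runs without any external API.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

Model = Callable[[str], str]  # any function mapping prompt text to completion text


def calculator(expression: str) -> str:
    """Toy tool (hypothetical): evaluates 'a op b' for integers, op in + - *."""
    match = re.fullmatch(r"\s*(-?\d+)\s*([+\-*])\s*(-?\d+)\s*", expression)
    if match is None:
        return "unsupported expression"
    left, op, right = int(match.group(1)), match.group(2), int(match.group(3))
    results = {"+": left + right, "-": left - right, "*": left * right}
    return str(results[op])


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call: repeats the tool result line."""
    marker = "Tool result:"
    for line in prompt.splitlines():
        if line.startswith(marker):
            return line[len(marker):].strip()
    return "unknown"


@dataclass
class Harness:
    model: Model
    template: str  # prompt engineering: how the question is framed
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)  # tool integration
    cache: Dict[str, str] = field(default_factory=dict)  # infrastructure: response cache
    model_calls: int = 0  # simple counter an evaluation loop could inspect

    def run(self, question: str, tool: Optional[str] = None, tool_input: str = "") -> str:
        # Call the requested tool (if any) and place its result in the prompt.
        tool_result = self.tools[tool](tool_input) if tool else "none"
        prompt = self.template.format(question=question, tool_result=tool_result)
        # Only call the model when this exact prompt has not been seen before.
        if prompt not in self.cache:
            self.model_calls += 1
            self.cache[prompt] = self.model(prompt)
        return self.cache[prompt]


TEMPLATE = "Answer concisely.\nTool result: {tool_result}\nQuestion: {question}"
harness = Harness(model=stub_model, template=TEMPLATE, tools={"calculator": calculator})
print(harness.run("What is 6 * 7?", tool="calculator", tool_input="6 * 7"))  # prints 42
```

Swapping `stub_model` for a real model client would leave the rest of the harness unchanged, which is the sense in which the harness is a separate engineering artifact from the model.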

The research suggests that focusing exclusively on benchmark scores of raw models provides an incomplete picture. On specific tasks, a moderately capable model with a highly optimized harness can outperform a more powerful model with a poorly designed one.

Implications for Practitioners

For engineers and researchers, the paper's framework underscores the importance of systematic harness development. It shifts some focus from the pursuit of ever-larger models to the engineering discipline of building reliable, efficient, and effective wrappers. This includes treating prompt chains as reproducible code, rigorously evaluating entire systems (not just models), and investing in tooling infrastructure.
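One way to read "treating prompt chains as reproducible code" is to give each chain an explicit version and a content fingerprint that can be logged next to every result. The sketch below assumes that interpretation; `PromptStep`, `PromptChain`, and `fingerprint` are hypothetical names, and the model is an uppercase-echo stub rather than a real API.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class PromptStep:
    name: str
    template: str  # expected to contain the placeholder "{input}"


@dataclass(frozen=True)
class PromptChain:
    version: str
    steps: Tuple[PromptStep, ...]

    def fingerprint(self) -> str:
        """Short, stable hash of the chain's version and templates."""
        payload = json.dumps(
            {"version": self.version, "steps": [[s.name, s.template] for s in self.steps]},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]

    def run(self, model: Callable[[str], str], text: str) -> str:
        """Feed each step's output into the next step's template."""
        for step in self.steps:
            text = model(step.template.format(input=text))
        return text


def echo_model(prompt: str) -> str:
    """Hypothetical stub model: returns the prompt in upper case."""
    return prompt.upper()


chain_v1 = PromptChain(
    version="1.0.0",
    steps=(
        PromptStep("summarize", "Summarize: {input}"),
        PromptStep("translate", "Translate: {input}"),
    ),
)
# Any edit to a template yields a different fingerprint, so results stay traceable.
chain_v2 = PromptChain(
    version="1.0.0",
    steps=(
        PromptStep("summarize", "Summarize briefly: {input}"),
        PromptStep("translate", "Translate: {input}"),
    ),
)
print(chain_v1.fingerprint() != chain_v2.fingerprint())  # prints True
```

Because the dataclasses are frozen, a chain cannot be modified in place after it has been used in an experiment; a change requires constructing a new chain, which then carries its own fingerprint.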

gentic.news Analysis

This research crystallizes a trend that has been building in the AI engineering community throughout 2025. As model capabilities from top providers like OpenAI, Anthropic, and Google have begun to converge on broad benchmarks, competitive differentiation has increasingly shifted to the application layer. We covered this shift in our analysis of Anthropic's Tool Use API launch, which was essentially a standardized "harness" component for enabling Claude to interact with external functions.

The paper's emphasis on the harness aligns with the rising investment in and valuation of AI infrastructure and tooling companies. Startups like Weights & Biases and emerging players focused on prompt management, evaluation, and orchestration are building the very components the researchers identify as critical. This also connects to the ongoing discussion about "LLM OS" or agentic frameworks, which we explored in our piece on the evolution of AI agents. These frameworks are, in essence, sophisticated, general-purpose Model Harnesses.

Looking forward, this work provides a formal academic foundation for what will likely become standard practice: benchmarking and comparing AI systems (model + harness), not just foundation models in isolation. This could lead to new, more holistic evaluation suites that reflect real-world deployment scenarios.

Frequently Asked Questions

What is a "Model Harness"?

A Model Harness is the complete set of software, prompts, tools, and infrastructure that wraps around a base AI model to form a usable application. It includes the prompt templates, chains of reasoning steps, integrated tools (like calculators or search), evaluation code, and the deployment system that serves the model to users.

Why does the Model Harness matter more than the model?

The paper argues it doesn't always matter more, but that it is a primary determinant of performance that is often overlooked. A brilliant model with a bad harness (unclear prompts, no tools, slow infrastructure) will perform poorly. A good harness can maximize a model's potential and compensate for some of its weaknesses by providing structure and external capabilities.

Does this mean we should stop developing larger foundation models?

No. The research does not suggest that foundation model progress is unimportant. Instead, it argues for a more balanced view of AI system development. Significant gains can be found by investing in the harness, especially as base models reach a high level of capability. Both avenues—better models and better harnesses—are essential for advancing the field.

How can I apply this concept to my own AI projects?

Treat your prompt chains, tool integrations, and evaluation scripts with the same rigor as your core application code. Version them, test them systematically, and optimize them. Consider using emerging platforms for prompt management and evaluation. When comparing solutions, benchmark the entire system, not just the raw model's output on a static set of examples.
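As a rough sketch of benchmarking the entire system, the snippet below scores complete question-to-answer pipelines on a shared set of cases instead of scoring a model alone. Everything here is illustrative: `make_system`, `accuracy`, `compare`, and `toy_model` are hypothetical names, and the toy model and two-item case list exist only to show the procedure, not to support any empirical claim.

```python
from typing import Callable, Dict, List, Tuple

System = Callable[[str], str]  # a full system: question in, answer out
Case = Tuple[str, str]         # (question, expected answer)

FACTS = {"capital of france?": "Paris", "2 + 2?": "4"}


def toy_model(prompt: str) -> str:
    """Hypothetical stub: answers tersely only when the prompt asks for one word."""
    question = prompt.splitlines()[-1].lower()
    fact = FACTS.get(question, "unknown")
    return fact if "one word" in prompt.lower() else f"The answer is {fact}."


def make_system(model: Callable[[str], str], build_prompt: Callable[[str], str]) -> System:
    """Combine a model with a prompt-building harness into one callable system."""
    return lambda question: model(build_prompt(question))


def accuracy(system: System, cases: List[Case]) -> float:
    """Fraction of cases answered exactly right, ignoring case and outer whitespace."""
    if not cases:
        raise ValueError("cases must not be empty")
    hits = sum(
        system(question).strip().lower() == expected.strip().lower()
        for question, expected in cases
    )
    return hits / len(cases)


def compare(systems: Dict[str, System], cases: List[Case]) -> List[Tuple[str, float]]:
    """Score every named system on the same cases, best first."""
    scores = [(name, accuracy(system, cases)) for name, system in systems.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


cases = [("Capital of France?", "paris"), ("2 + 2?", "4")]
systems = {
    "bare": make_system(toy_model, lambda q: q),
    "instructed": make_system(toy_model, lambda q: "Reply with one word.\n" + q),
}
for name, score in compare(systems, cases):
    print(f"{name}: {score:.2f}")
```

Both entries use the same stub model; only the prompt-building step differs. In a real project the `systems` dictionary would hold your actual model-plus-harness combinations, and exact-match scoring would likely be replaced by a metric suited to the task.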