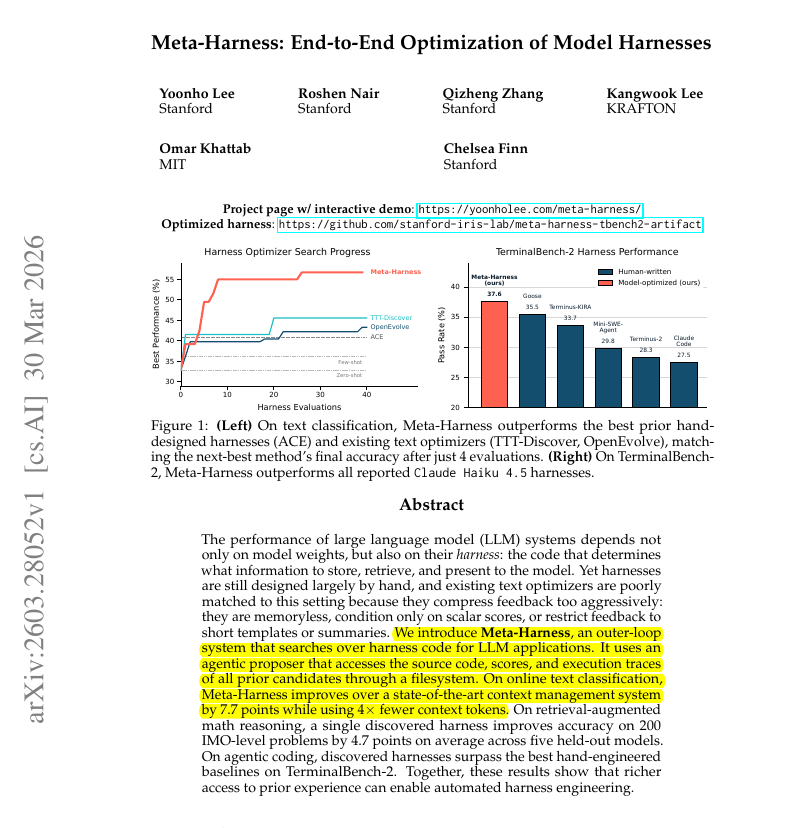

A research collaboration from Stanford University, Google, and MIT has reportedly published a paper suggesting that large language models (LLMs) can iteratively improve their own prompts, a process that could automate a significant portion of manual prompt engineering work. The finding, highlighted in a social media post, points toward a future where the craft of designing effective text instructions for AI models is increasingly handled by the models themselves.

What Happened

The source is a social media post reacting to a research paper whose title the post does not give. The core claim is that researchers from Stanford, Google, and MIT have developed a method in which LLMs engage in "self-prompting": using their own outputs to refine and optimize the input prompts they receive. The implied result is improved task performance without human intervention in the prompt design loop.

Context

Prompt engineering has emerged as a critical skill for effectively leveraging large language models like GPT-4, Claude, and Gemini. It involves carefully crafting instructions, examples, and context to steer a model toward a desired output. This process is often iterative and experimental. Automating this optimization could significantly lower the barrier to achieving peak model performance and reduce development time for AI applications.

The Technical Premise

While the source does not detail the methodology, the concept of LLM self-improvement typically involves a loop where:

- An LLM is given a task and an initial prompt.

- The LLM generates an output and a critique of its own performance.

- Based on this critique, the LLM generates a revised, improved prompt for the same task.

- The process repeats, with the prompt theoretically converging on a more effective formulation.
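The loop above can be sketched in a few lines of Python. This is a minimal illustration of the generic critique-and-rewrite pattern, not the paper's method, which the source does not describe. The names `refine_prompt` and `fake_llm` and the wording of the meta-prompts are assumptions made for this example; `fake_llm` is a canned stand-in so the sketch runs without any model API.

```python
from typing import Callable

# Hypothetical interface: any function that maps prompt text to model text.
LLM = Callable[[str], str]


def refine_prompt(llm: LLM, task: str, prompt: str, rounds: int = 3) -> list[str]:
    """Run the answer -> critique -> rewrite loop and return every prompt tried."""
    history = [prompt]
    for _ in range(rounds):
        # Step 1: attempt the task with the current prompt.
        output = llm(f"{prompt}\n\nTask: {task}")
        # Step 2: ask the model to critique its own answer.
        critique = llm(
            "Critique the following answer to the task.\n"
            f"Task: {task}\nPrompt used: {prompt}\nAnswer: {output}"
        )
        # Step 3: ask the model for a revised prompt based on that critique.
        prompt = llm(
            "Rewrite the prompt so the next answer addresses the critique. "
            "Return only the new prompt.\n"
            f"Current prompt: {prompt}\nCritique: {critique}"
        ).strip()
        history.append(prompt)
    return history


def fake_llm(text: str) -> str:
    """Canned stand-in for a real model (assumption: illustration only)."""
    if text.startswith("Critique"):
        return "The answer is too vague."
    if text.startswith("Rewrite"):
        current = text.split("Current prompt: ", 1)[1].split("\nCritique:", 1)[0]
        return current + " Be specific."
    return "Some answer."


if __name__ == "__main__":
    for step, p in enumerate(refine_prompt(fake_llm, "Summarize X", "Summarize.", rounds=2)):
        print(step, p)
```

Note that nothing in this loop checks whether a revised prompt is actually better; it simply trusts the model's critique. Any practical system would need an external measure of quality to decide which revision to keep.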

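This resembles broader research on automated feedback in model training. Reinforcement Learning from Human Feedback (RLHF) tunes a model against human preference judgments, and its automated variant, RLAIF (Reinforcement Learning from AI Feedback), replaces those human judgments with feedback generated by a model. As described, self-prompting would apply a similar feedback idea to the prompt rather than to the model's weights.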

Potential Implications

If the method is robust and generalizable, it could shift the role of prompt engineers from crafters of individual prompts to designers and auditors of the self-improvement systems. The focus would move to setting initial conditions, defining reward signals or success metrics for the AI to evaluate itself against, and validating the final outputs. This represents a move up the stack from tactical prompt writing to strategic oversight of autonomous optimization processes.
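To make "defining success metrics" concrete, here is one possible shape for such a metric: exact-match accuracy over a small labeled set, used to choose between candidate prompts. The metric, the function names (`accuracy`, `select_best`), and the arithmetic toy model are all assumptions for illustration; the source does not say how the paper scores prompts.

```python
from typing import Callable

# Hypothetical interface: any function that maps prompt text to model text.
LLM = Callable[[str], str]
Example = tuple[str, str]  # (question, expected answer)


def accuracy(llm: LLM, prompt: str, examples: list[Example]) -> float:
    """Fraction of examples answered exactly right (case-insensitive)."""
    if not examples:
        return 0.0
    hits = sum(
        llm(f"{prompt}\n\n{question}").strip().lower() == answer.strip().lower()
        for question, answer in examples
    )
    return hits / len(examples)


def select_best(llm: LLM, candidates: list[str], examples: list[Example]) -> tuple[float, str]:
    """Score every candidate prompt and return (score, prompt) for the best one."""
    scored = [(accuracy(llm, p, examples), p) for p in candidates]
    return max(scored, key=lambda pair: pair[0])  # first candidate wins ties


def toy_llm(text: str) -> str:
    """Stand-in model: adds two numbers, terse only when told 'digits only'."""
    question = text.rsplit("\n\n", 1)[1]
    left, right = question.replace(" = ?", "").split(" + ")
    total = int(left) + int(right)
    return str(total) if "digits only" in text else f"The answer is {total}."


if __name__ == "__main__":
    dev_set = [("2 + 3 = ?", "5"), ("10 + 7 = ?", "17")]
    prompts = ["Add the numbers.", "Add the numbers. Reply with digits only."]
    print(select_best(toy_llm, prompts, dev_set))
```

In this framing, the human's job is to choose the examples and the scoring rule, which is where judgment about intent enters the system.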

Limitations & Open Questions

The source offers no benchmarks, comparative results, or details on the paper's scope. Key unanswered questions include:

- How much performance gain does the self-prompting method achieve over expert human-written prompts?

- On which tasks and model sizes does it work effectively?

- What are the computational costs of running such iterative loops?

- Does the process risk prompt drift or optimization toward unintended, potentially harmful outputs?

Without the paper itself, these questions cannot be answered. The real test will be independent replication and benchmarking against established prompt engineering techniques.
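Two of these questions, cost and drift, can at least be framed in code. The sketch below is one common safeguard, not something the source attributes to the paper: a revised prompt is kept only if it scores strictly higher on a held-out metric, and the number of evaluations is counted so the cost is visible. The `propose` and `score` callables and the toy functions are hypothetical placeholders.

```python
from typing import Callable


def guarded_search(
    propose: Callable[[str], str],  # hypothetical: returns a revised prompt
    score: Callable[[str], float],  # hypothetical: held-out metric, higher is better
    initial: str,
    max_rounds: int,
) -> tuple[str, float, int]:
    """Accept a revision only if it improves the score; count score() calls."""
    best, best_score, evals = initial, score(initial), 1
    for _ in range(max_rounds):
        candidate = propose(best)
        candidate_score = score(candidate)
        evals += 1
        # Guard against drift: a revision that does not help is discarded.
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
    return best, best_score, evals


def toy_propose(prompt: str) -> str:
    """Stand-in reviser that just makes the prompt longer."""
    return prompt + "!"


def toy_score(prompt: str) -> float:
    """Stand-in metric that peaks when the prompt is exactly 5 characters."""
    return float(-abs(len(prompt) - 5))


if __name__ == "__main__":
    print(guarded_search(toy_propose, toy_score, "abc", max_rounds=4))
```

If each evaluation runs the model over N held-out examples, a search of R rounds costs roughly (R + 1) × N model calls for scoring alone, plus whatever the proposal step consumes. The guard limits drift only as far as the metric captures what the user actually wants.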

gentic.news Analysis

This development sits at the convergence of several active research vectors we've been tracking. First, it directly follows the industry's intense focus on prompt engineering as a discipline, which became a sought-after skill following the release of powerful but instruction-sensitive models like GPT-3.5 and GPT-4. Second, it connects to the trend of AI feedback loops, exemplified by Anthropic's work on Constitutional AI and Google's Self-Discover research, in which models compose their own reasoning structures. The involvement of Google here is particularly notable, as it aligns with their published efforts to improve the "promptability" of models like Gemini.

If validated, this research could automate part of the work that prompt optimization tools and platforms are built around. However, it is more likely to augment than replace human experts in the near term. The critical challenge will be ensuring these self-improving prompts remain aligned with complex, nuanced human intent, a problem that re-frames the alignment challenge rather than solving it. This work should be viewed as a step toward more autonomous and efficient AI tooling, not as an elimination of the need for human oversight in the development lifecycle.

Frequently Asked Questions

What is "self-prompting" for LLMs?

Self-prompting is a proposed method where a large language model is tasked with analyzing its own performance on a given instruction and then revising and improving that initial instruction (the prompt) autonomously. The goal is to create an iterative loop where the model generates progressively better prompts for itself, aiming to maximize performance on a target task without human intervention.

Will this research make prompt engineers obsolete?

Not in the foreseeable future. While it could automate the iterative refinement of prompts for well-defined tasks, human prompt engineers will likely remain essential for defining problem scopes, setting guardrails and ethical constraints for the self-improvement process, handling edge cases, and integrating prompts into complex, real-world applications. The role may evolve from hands-on writing to system design and oversight.

Has any company implemented this kind of self-improving AI?

Elements of this idea are already in use. For instance, some AI coding assistants can suggest improvements to a user's natural language request to generate better code. Research labs have also explored methods like "Self-Refine" where models critique and rewrite their own outputs. This paper appears to be a formalization and extension of that concept specifically applied to the prompt optimization problem itself.

Where can I find the actual research paper?

The source material is a social media post that does not link to or name the specific paper. To evaluate the claims seriously, one would need to search for recent pre-prints from Stanford, Google, and MIT collaborators on arXiv or other repositories using keywords like "self-prompting," "LLM self-improvement," or "automatic prompt optimization." The credibility of the claim hinges entirely on the details of that paper, which the post does not provide.