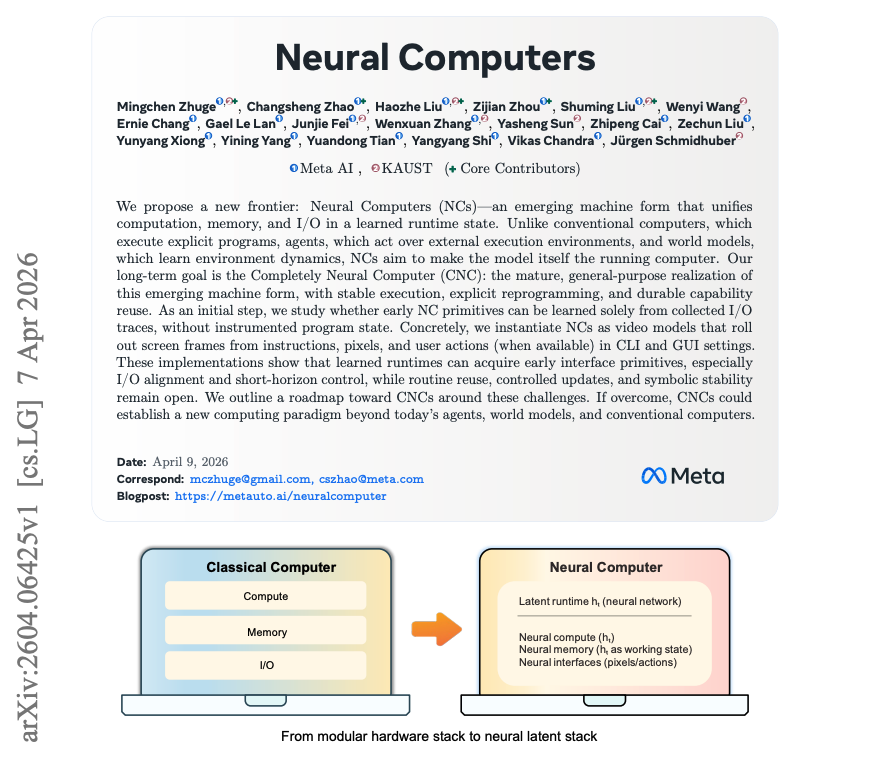

A new research paper from Meta AI, highlighted by researcher Omar Sanseviero, poses a foundational question: "What if the model wasn't just using the computer, but became the computer?" The idea is not that AI writes code. It is a deeper integration in which the large language model (LLM) assumes the role of an operating system kernel, directly managing computational resources, processes, and memory.

The concept moves beyond the current paradigm of AI agents that call tools and APIs. Instead, it envisions the model as the central orchestrator of the machine's state. The paper, which has garnered significant attention for its conceptual ambition, explores the architectural implications and potential capabilities of such a system.

Key Takeaways

- A new research paper from Meta explores a paradigm where the language model acts as the computer's kernel, directly managing processes and memory.

- This could fundamentally change how AI agents are architected and interact with systems.

What the Paper Proposes

The core idea is a shift from tool-use to direct resource management. In today's agent frameworks, an LLM reasons about a task and then invokes a predefined function (a tool) like a calculator, web browser, or file editor. The execution and state management happen outside the model's core reasoning loop.
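To make the conventional pattern concrete, here is a minimal sketch of a tool-calling loop. It is illustrative only: `fake_model`, the `TOOLS` registry, and the JSON message shape are assumptions made for this example, not code from the paper or from any specific agent framework.

```python
import json
from typing import Callable, Dict, List

# Hypothetical tool registry; the name and behavior are illustrative only.
TOOLS: Dict[str, Callable[[str], str]] = {
    "calculator": lambda expr: str(sum(int(x) for x in expr.split("+"))),
}


def fake_model(history: List[str]) -> str:
    """Stand-in for an LLM call: asks for a tool once, then answers."""
    if not any(line.startswith("TOOL_RESULT") for line in history):
        return json.dumps({"tool": "calculator", "input": "2+3"})
    result = history[-1].split(":", 1)[1].strip()
    return json.dumps({"answer": result})


def run_agent(task: str, max_steps: int = 5) -> str:
    history = [f"TASK: {task}"]
    for _ in range(max_steps):
        reply = json.loads(fake_model(history))    # 1. the model reasons
        if "answer" in reply:
            return reply["answer"]
        tool = TOOLS[reply["tool"]]                # 2. the harness picks the tool
        output = tool(reply["input"])              # 3. execution happens outside the model
        history.append(f"TOOL_RESULT: {output}")   # 4. the result is fed back as text
    raise RuntimeError("step budget exhausted")


print(run_agent("What is 2+3?"))
```

Note that the loop, the dispatch, and the `history` list all live in the harness. The model only ever sees text going in and produces text coming out.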

Meta's research inverts this. Here, the LLM would have privileged, low-level access to system resources. Think of it as the model acting as the kernel: it could directly allocate "memory" (context), spawn and manage "processes" (sub-tasks or chains of thought), handle "interrupts" (new user queries or external events), and schedule tasks—all within its own reasoning framework and using natural language or a learned intermediate representation as the control plane.
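One way to picture the kernel analogy is a toy scheduler in which context is the memory being allocated and sub-tasks are the processes. This is a sketch under stated assumptions, not the paper's design: `ModelKernel`, `Process`, the token counts, and the `choose_next` policy are all hypothetical, and the policy is a plain Python stub where the article's framing would put the model itself.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List


@dataclass
class Process:
    pid: int
    goal: str
    context_tokens: int  # "memory": a slice of the context window
    steps_left: int


@dataclass
class ModelKernel:
    context_budget: int
    processes: Dict[int, Process] = field(default_factory=dict)
    interrupts: Deque[str] = field(default_factory=deque)
    log: List[str] = field(default_factory=list)
    next_pid: int = 1

    def used(self) -> int:
        return sum(p.context_tokens for p in self.processes.values())

    def spawn(self, goal: str, context_tokens: int, steps: int) -> int:
        # Allocation fails when the context window would overflow.
        if self.used() + context_tokens > self.context_budget:
            raise MemoryError("context budget exceeded")
        pid = self.next_pid
        self.next_pid += 1
        self.processes[pid] = Process(pid, goal, context_tokens, steps)
        return pid

    def interrupt(self, event: str) -> None:
        self.interrupts.append(event)

    def choose_next(self) -> int:
        # Placeholder policy (fewest steps left). Hypothetically, this is
        # the decision the model would make in a "model as kernel" design.
        return min(self.processes, key=lambda pid: self.processes[pid].steps_left)

    def tick(self) -> None:
        if self.interrupts:  # pending events are handled before scheduling
            event = self.interrupts.popleft()
            pid = self.spawn(goal=event, context_tokens=100, steps=1)
            self.log.append(f"interrupt: spawned pid {pid}")
            return
        if not self.processes:
            return
        proc = self.processes[self.choose_next()]
        proc.steps_left -= 1
        self.log.append(f"ran pid {proc.pid}")
        if proc.steps_left == 0:
            del self.processes[proc.pid]  # frees that process's context
            self.log.append(f"pid {proc.pid} done")


kernel = ModelKernel(context_budget=1000)
kernel.spawn("summarize report", context_tokens=400, steps=2)
kernel.spawn("draft email", context_tokens=300, steps=1)
kernel.interrupt("new user query")
while kernel.processes or kernel.interrupts:
    kernel.tick()
print(kernel.log)
```

Everything here is ordinary, deterministic Python. The open research question is whether a model could play the role of `choose_next`, `spawn`, and `tick` reliably on its own.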

Potential Architectural Implications

This "Model as Computer" approach suggests several technical departures:

- Unified State Management: The model's context window isn't just a conversation history; it becomes the working memory of the entire system, holding task states, intermediate results, and environment data.

- Native Concurrency: The model could theoretically manage multiple reasoning threads or sub-agents simultaneously, coordinating them more seamlessly than current multi-agent systems that rely on external schedulers.

- Dynamic Tool Synthesis: Instead of selecting from a fixed library, the model could, in theory, compose primitive operations into novel "tools" on the fly to solve unique problems.

- Reduced Latency Overhead: By internalizing control flow, the system could minimize the back-and-forth between reasoning and execution modules that plagues current agentic designs.
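The dynamic tool synthesis idea can be illustrated with a small sketch in which a "tool" is just an ordered composition of primitives. The `PRIMITIVES` table and the `synthesize` helper are hypothetical names chosen for this example. In the article's framing the plan would come from the model, whereas here it is a hard-coded list.

```python
from functools import reduce
from typing import Callable, Dict, List

Primitive = Callable[[str], str]

# Hypothetical primitive operations; a real system would expose far more.
PRIMITIVES: Dict[str, Primitive] = {
    "strip": str.strip,
    "lower": str.lower,
    "dedupe_words": lambda s: " ".join(dict.fromkeys(s.split())),
}


def synthesize(plan: List[str]) -> Primitive:
    """Compose the named primitives, in order, into one new callable."""
    steps = [PRIMITIVES[name] for name in plan]  # KeyError on an unknown name

    def tool(text: str) -> str:
        return reduce(lambda acc, step: step(acc), steps, text)

    return tool


# The plan is hard-coded here; the model would propose it in the envisioned system.
normalize = synthesize(["strip", "lower", "dedupe_words"])
print(normalize("  The the QUICK quick fox  "))  # prints "the quick fox"
```

Restricting composition to a vetted set of primitives is one possible way to keep synthesized tools auditable, though the article does not say whether the paper takes that approach.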

Why This Conceptual Shift Matters

Although the paper is purely research-focused, it targets a critical bottleneck in AI agent design: agent-loop overhead. Every time an LLM-based agent decides to use a tool, it must format a request, parse the output, and re-contextualize the result. This process is slow, error-prone, and breaks the model's "train of thought."

By proposing the LLM as the kernel, Meta's researchers are exploring a path to more fluid, efficient, and capable autonomous systems. If feasible, it could lead to agents that learn complex workflows end-to-end, exhibit more consistent long-horizon planning, and adapt to new software environments without needing pre-defined toolkits.

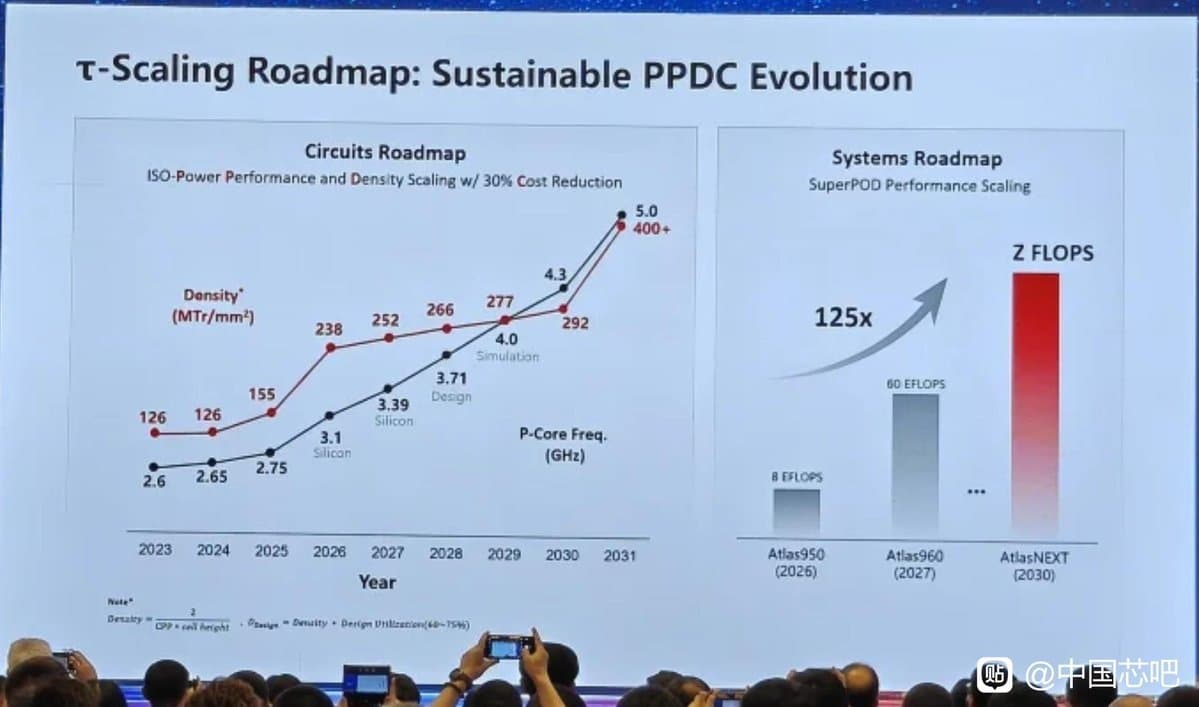

The research aligns with a broader industry trend towards deeper AI-system integration, moving from applications that sit on top of an OS to models that potentially manage the OS. However, the paper likely outlines significant challenges, including catastrophic forgetting of core reasoning skills, security vulnerabilities from direct resource access, and the immense complexity of training a model to perform reliable, deterministic system operations.

gentic.news Analysis

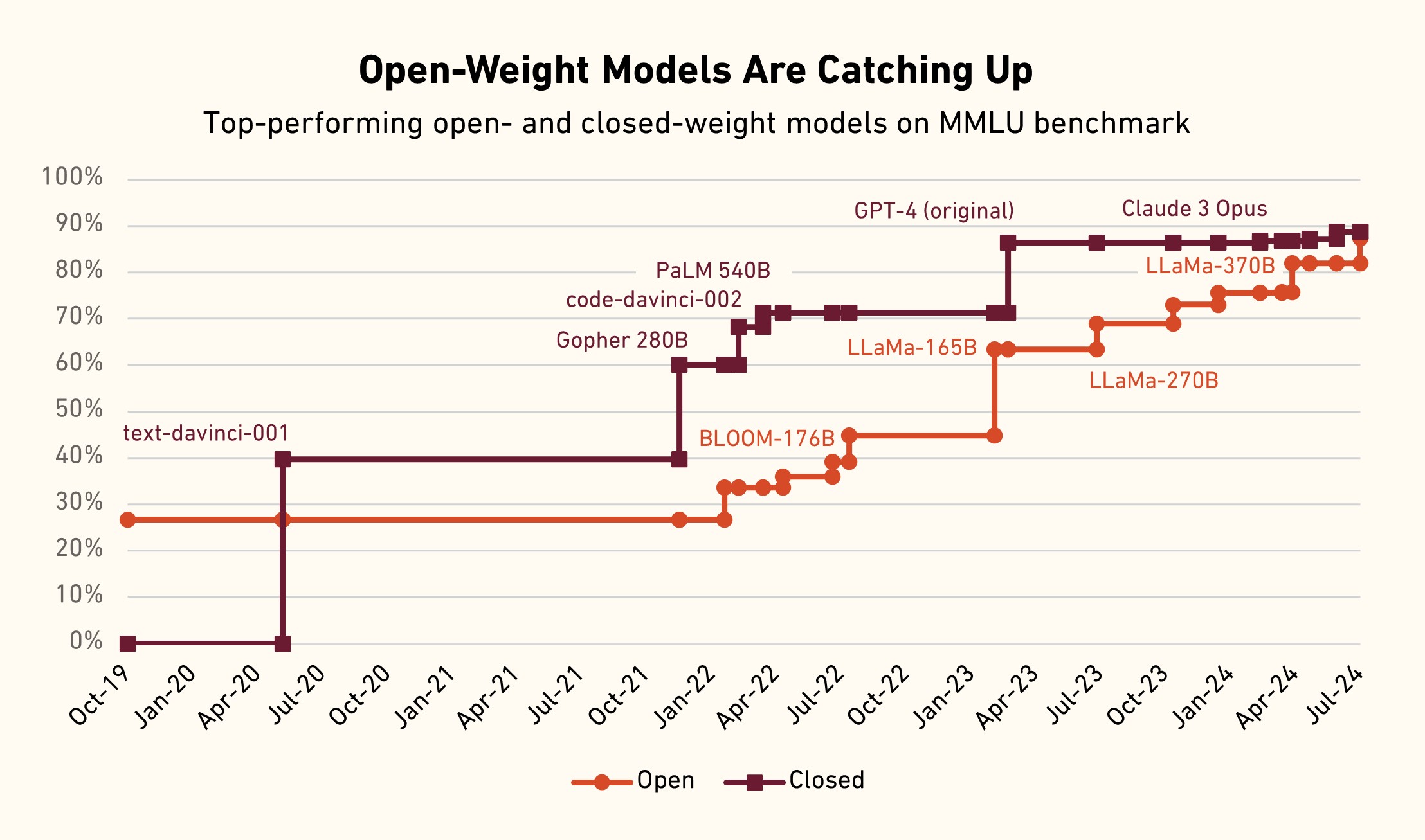

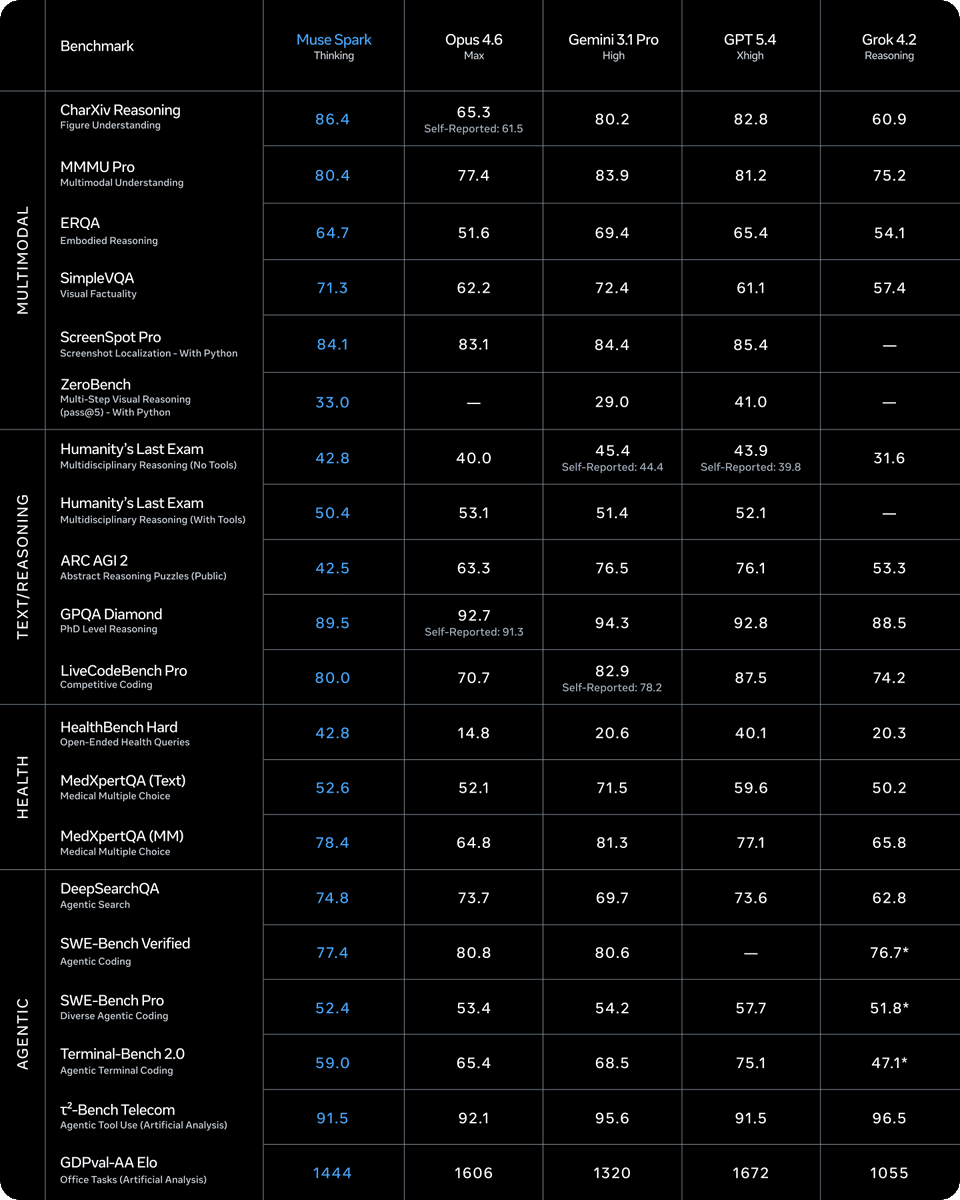

This conceptual paper from Meta follows a clear pattern of the company investing in foundational, long-horizon AI research that challenges architectural orthodoxy. It is a direct intellectual successor to projects like Meta's "Toolformer" and the push for LLM self-improvement and recursion. While competitors like Google DeepMind focus on scaling laws and new model families (Gemini), and OpenAI pushes the productization frontier (GPT-5, o1), Meta's FAIR team continues to release provocative research that re-frames how we think about AI capabilities.

The "Model as Computer" idea also intersects with another major trend we've covered: the rise of AI-native operating systems. Startups like Sierra and efforts to rebuild computing interfaces around an AI core are tackling this from the product side. Meta's paper tackles it from the theoretical model-architecture side. If these two vectors converge—theoretical models capable of kernel-like control and new OSes designed to be controlled by them—it could represent the next major platform shift post-mobile.

For practitioners, the immediate takeaway isn't a deployable system but a new mental model for agent design. Even if full kernel-level control is years away, thinking about how to give LLMs more continuous, fine-grained control over execution state could inspire more efficient agent frameworks in the near term. The paper's value is in expanding the solution space beyond incremental improvements to the existing tool-calling paradigm.

Frequently Asked Questions

What is the "Model as Computer" concept?

It's a research concept where a large language model (LLM) is architected to function like a computer's operating system kernel. Instead of just calling external tools, the model would directly manage core computational resources like memory allocation, process scheduling, and task execution within its own reasoning framework, potentially leading to more efficient and capable AI agents.

Is this a product Meta is releasing?

No. This is a research paper exploring a conceptual shift in AI architecture. It outlines a direction for future investigation rather than announcing a finished system or product, and it is part of the exploratory work of Meta's Fundamental AI Research (FAIR) team.

How is this different from current AI agents?

Current AI agents (such as those built with frameworks like LangChain or AutoGPT) operate in a loop: reason, call a pre-defined tool/API, wait for the result, then reason again. The "Model as Computer" idea aims to internalize much of this control flow, allowing the model to manage sub-tasks and system state more directly and continuously, reducing latency and coordination overhead.

What are the biggest challenges to making this work?

Key challenges would include ensuring the model's operations are reliable and deterministic (unlike typical LLM generation), preventing catastrophic interference or forgetting of core skills, and managing severe security risks that would arise from giving a neural network direct, low-level access to system resources.