Microsoft has reportedly reworked the underlying architecture of its Copilot Researcher agent, shifting from a single-model system to a specialized two-model pipeline. According to a report from AI researcher Rohan Paul, the new design delegates drafting to a model from OpenAI (likely a GPT variant) and auditing and verification to a model from Anthropic (likely Claude).

What Happened

The change represents a technical pivot in how Microsoft orchestrates its AI agents for research tasks. Previously, a single language model would handle the entire workflow of a research query: searching for information, synthesizing a draft, and fact-checking its own output. The new system explicitly separates these responsibilities:

- Drafting Agent (OpenAI): Responsible for the initial creative generation and synthesis of information into a coherent draft response.

- Auditing Agent (Anthropic): Acts as a verifier or critic, reviewing the draft for accuracy, potential hallucinations, and overall quality before final output.

This architectural pattern is known as a "generator-critic" or "proposer-verifier" setup, a recognized method for improving the reliability of AI systems by introducing a separate validation step.
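As a rough illustration of the generator-critic pattern described above, here is a minimal Python sketch. It is not Microsoft's implementation: the names `run_pipeline`, `AuditResult`, `stub_drafter`, and `stub_auditor` are hypothetical, the two models are replaced by plain stub functions, and the revise-and-retry loop is an assumption, since how conflicts between draft and audit are resolved has not been disclosed.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class AuditResult:
    approved: bool      # True if the critic accepts the draft
    issues: List[str]   # Problems the critic wants fixed


# Hypothetical interfaces: in a real system these would wrap model API calls.
Drafter = Callable[[str, List[str]], str]    # (query, feedback) -> draft
Auditor = Callable[[str, str], AuditResult]  # (query, draft) -> verdict


def run_pipeline(query: str, draft_fn: Drafter, audit_fn: Auditor,
                 max_rounds: int = 2) -> Tuple[str, bool]:
    """Draft, audit, and (by assumption) redraft until approved or out of rounds."""
    feedback: List[str] = []
    draft = ""
    for _ in range(max_rounds):
        draft = draft_fn(query, feedback)   # generator step
        result = audit_fn(query, draft)     # critic step
        if result.approved:
            return draft, True
        feedback = result.issues            # pass the critique to the next draft
    return draft, False                     # last draft, flagged as unverified


def stub_drafter(query: str, feedback: List[str]) -> str:
    # Stand-in for the drafting model: adds a source only when asked to.
    if any("citation" in item for item in feedback):
        return f"Answer to '{query}' [source: example.org]"
    return f"Answer to '{query}'"


def stub_auditor(query: str, draft: str) -> AuditResult:
    # Stand-in for the auditing model: rejects drafts without a source.
    if "[source:" in draft:
        return AuditResult(approved=True, issues=[])
    return AuditResult(approved=False, issues=["missing citation"])


final_draft, verified = run_pipeline("What is RAG?", stub_drafter, stub_auditor)
```

With these stubs, the first draft is rejected for lacking a source and the second is approved. The key design point is that the critic is a separate callable from the generator, so it can be backed by a different model or provider without changing the loop.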

Context & Implications

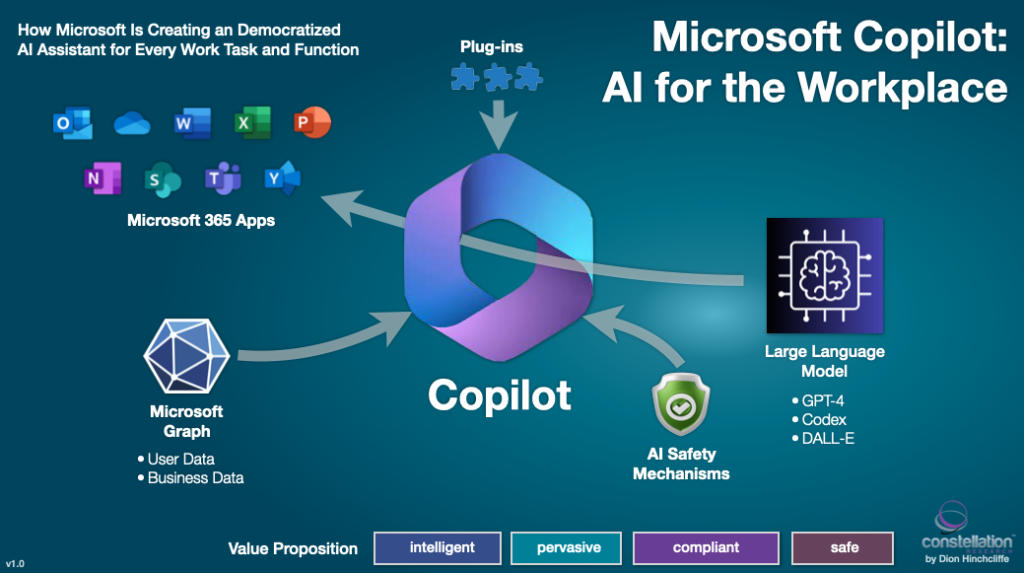

This move is a practical implementation of a growing consensus in AI safety and robustness: using multiple models with complementary strengths can yield better results than relying on a single, monolithic system. OpenAI's models (like GPT-4) are widely recognized for strong creative and generative capabilities, while Anthropic's Claude models have been heavily marketed and engineered with a focus on constitutional AI principles, harmlessness, and detailed instruction-following—traits well-suited for an auditing role.

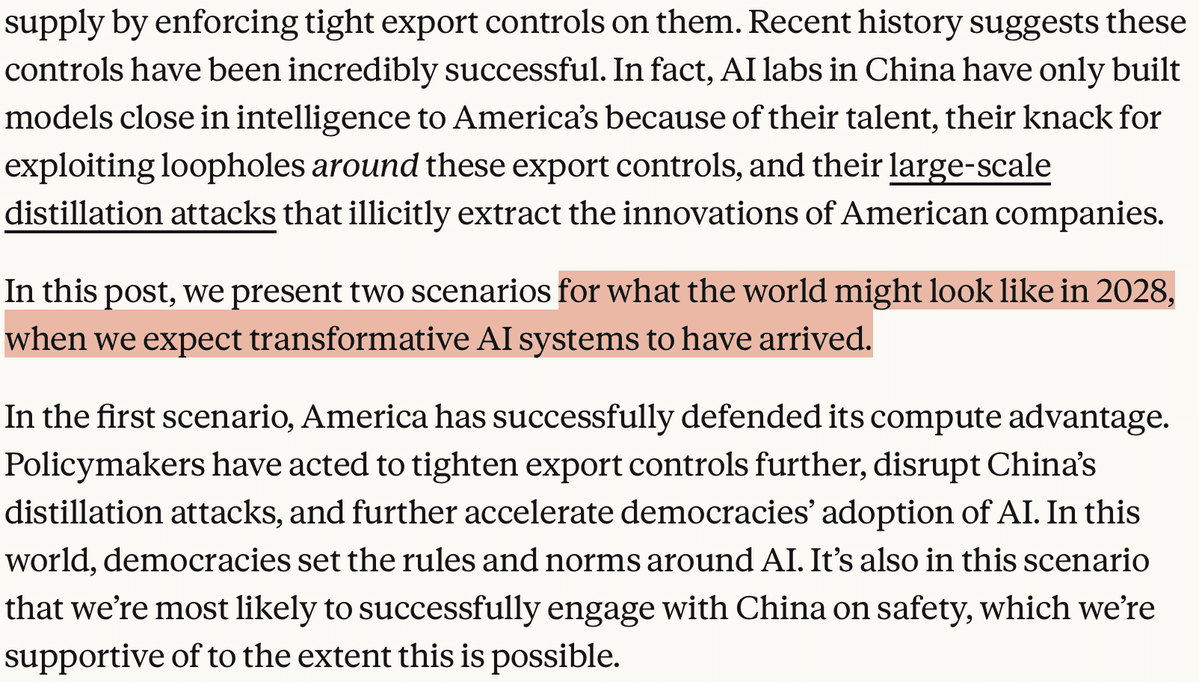

For Microsoft, this is a logical evolution of its multi-vendor AI strategy. The company is a major investor in OpenAI and also offers Anthropic's Claude models on its Azure cloud platform. Utilizing both in a single product showcases Azure's model portfolio and allows Microsoft to leverage what it perceives as the distinct advantages of each provider.

From an engineering perspective, this design introduces trade-offs:

- Potential Benefits: Increased accuracy, reduced hallucinations, and more trustworthy outputs for research tasks.

- Potential Costs: Higher latency (two sequential model calls), increased operational complexity, and higher inference cost per query (up to roughly double if the audit call costs as much as the draft call).
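The cost side of this trade-off can be made concrete with a little arithmetic. The sketch below assumes the two calls run strictly one after the other, so latencies and costs simply add up. Every number is an invented placeholder, not a measured or published figure, and `CallProfile` and `sequential_totals` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class CallProfile:
    latency_s: float  # Wall-clock time for one model call, in seconds
    cost_usd: float   # Inference cost for one model call, in US dollars


def sequential_totals(*calls: CallProfile) -> CallProfile:
    """Sum latency and cost for calls that run one after another."""
    return CallProfile(
        latency_s=sum(c.latency_s for c in calls),
        cost_usd=sum(c.cost_usd for c in calls),
    )


# Placeholder figures chosen only for illustration.
draft_call = CallProfile(latency_s=8.0, cost_usd=0.04)
audit_call = CallProfile(latency_s=4.0, cost_usd=0.01)

total = sequential_totals(draft_call, audit_call)

# Overhead relative to a draft-only system.
latency_ratio = total.latency_s / draft_call.latency_s
cost_ratio = total.cost_usd / draft_call.cost_usd
```

Under these made-up numbers, the pipeline takes about 1.5 times as long and costs about 1.25 times as much as the draft alone. Cost only doubles when the audit call is as expensive as the draft call, and it can exceed double if the auditor triggers a redraft.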

The specific implementation details—such as which exact model versions are used, how the audit prompt is structured, and how conflicts between the draft and audit are resolved—are not yet public.

gentic.news Analysis

This architectural shift for Copilot Researcher is a microcosm of Microsoft's broader, pragmatic approach to frontier AI. Rather than betting everything on a single architecture or partner, the company is actively engineering best-of-breed pipelines. This follows Microsoft's established pattern of leveraging its strategic partnership with OpenAI (including exclusive licensing deals and massive Azure compute commitments) while simultaneously hedging and integrating competitive models, as seen with its Azure AI Studio offering models from Meta, Mistral, and Cohere.

The choice of Anthropic as the auditor is particularly telling. It aligns with a trend we noted in our October 2025 coverage, "Anthropic's Constitutional AI Gains Traction as Enterprise Safety Layer," where enterprises began using Claude not as a primary chatbot, but as a safety and compliance filter for outputs from other, more generative models. Microsoft is effectively productizing this pattern internally.

This development also reflects the maturation of AI agent design. The initial wave of agents often used a single LLM with a ReAct (Reasoning + Acting) loop. The industry is now moving toward specialized multi-agent systems, where different models or instances handle specialized sub-tasks (coding, searching, critiquing). This move by Microsoft's Copilot team is a direct application of that research trend to a high-profile product.

Looking ahead, the success of this two-model system will be measured by a tangible improvement in the verified accuracy of Copilot Researcher's outputs, likely tracked against internal benchmarks. If successful, we expect this pattern to propagate to other Microsoft Copilot systems where accuracy is paramount, such as Copilot for Finance or Copilot for Legal.

Frequently Asked Questions

What is Microsoft Copilot Researcher?

Copilot Researcher is an AI agent within the Microsoft Copilot ecosystem designed to assist with research tasks. It can search for information, synthesize data from multiple sources, and provide summaries or answers to complex queries, aiming to function as an AI research assistant.

Why use two different AI models from different companies?

Using two models from different providers (OpenAI and Anthropic) leverages their complementary strengths. OpenAI's models are generally considered top-tier for creative generation and drafting, while Anthropic has heavily invested in making Claude models reliable, steerable, and less prone to harmful or inaccurate outputs—making it a strong candidate for an auditing role. This design aims to combine the best of both worlds.

Will this make Copilot Researcher slower or more expensive to use?

Almost certainly. Calling two large language models sequentially adds the auditor's response time to the drafter's, so the final answer takes longer than it would in a single-model system. Inference cost per query also rises for Microsoft, approaching double if the audit call costs about as much as the draft call. The company is betting that users will accept this trade-off for a significant gain in output accuracy and trustworthiness.

Does this mean Microsoft is moving away from OpenAI?

No. This is better interpreted as Microsoft diversifying its AI dependencies within a single product. OpenAI remains a cornerstone partner, as evidenced by its model handling the primary drafting task. Incorporating Anthropic is a strategic hedge and a technical choice to improve system robustness, not a replacement of OpenAI.

Source: Report based on social media analysis from Rohan Paul (@rohanpaul_ai). Microsoft has not yet made an official announcement or published technical details on this architectural change.