What Happened

Yang Zhilin, the founder and CEO of Chinese AI company Moonshot AI, recently discussed the architectural value of attention residuals in large language models. The commentary was shared via social media and offers a technical perspective from the creator of the widely used Kimi Chat long-context assistant.

The source does not include a detailed technical paper or new benchmark results, but it signals ongoing internal research and an architectural philosophy at one of China's leading AI labs. Yang's argument centers on the residual components within the attention mechanism, a core building block of transformer models, and suggests they hold significant, perhaps underappreciated, value for model performance and efficiency.

Context: Moonshot AI and Kimi Chat

Moonshot AI, founded by Yang Zhilin, has gained prominence primarily through Kimi Chat, an AI assistant notable for its exceptionally long context window. In March 2024, Kimi Chat reportedly supported a context length of 2 million tokens, a significant competitive differentiator in the global LLM landscape. The company is considered a major player in China's generative AI sector, having secured over $1 billion in funding in a single round earlier in 2024.

Yang himself has a strong technical pedigree, holding a PhD from Carnegie Mellon University and having previously worked at Google Brain. His public technical commentary, therefore, carries weight within the research community.

The Technical Nub: Attention Residuals

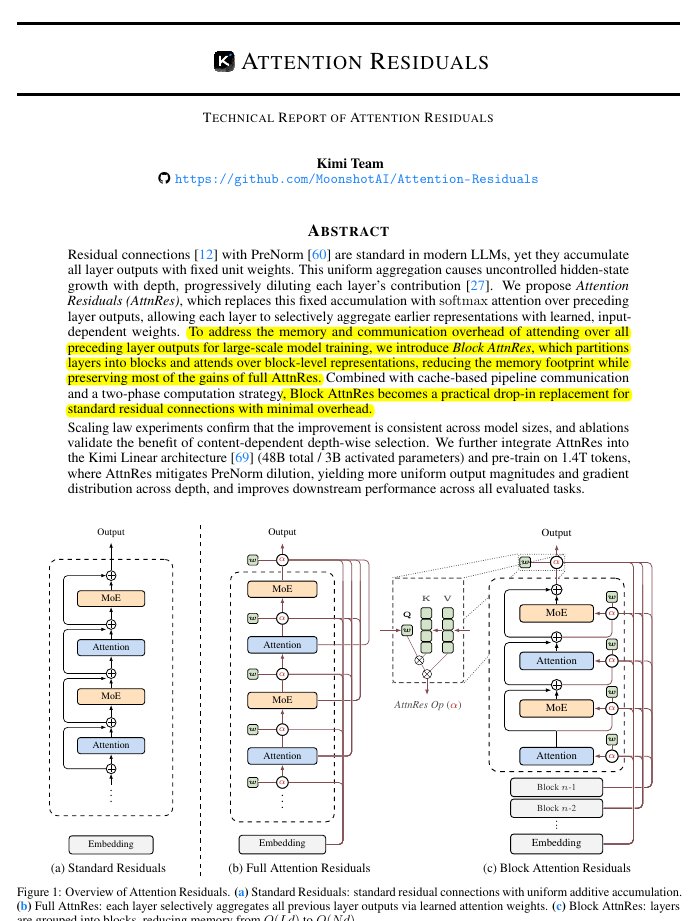

The term "attention residuals" likely refers to the residual connections within or around the multi-head attention sub-layer in a transformer block. In the standard transformer architecture (Vaswani et al., 2017), each sub-layer (attention or feed-forward) is wrapped in a residual connection followed by layer normalization: LayerOutput(x) = LayerNorm(x + Sublayer(x)). Many later models instead normalize the sub-layer input (the pre-LN variant), computing x + Sublayer(LayerNorm(x)). In both forms, the skip path mitigates vanishing gradients and enables the training of very deep networks.
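As a minimal sketch of the residual formula above, the NumPy code below wraps a toy self-attention sub-layer in a residual connection. It is illustrative only and says nothing about Moonshot AI's models: the single attention head, the layer norm without learned scale and shift, the random weights, and all function names are assumptions made for this example. The pre-LN variant, x + Sublayer(LayerNorm(x)), used by many later models, is included for comparison.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Normalize each token vector to zero mean and unit variance
    # (assumption: no learned scale/shift parameters).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def self_attention(x, w_q, w_k, w_v, w_o):
    # Single-head scaled dot-product self-attention on a (seq_len, d_model) input.
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return (weights @ v) @ w_o

def post_ln_attention_block(x, params):
    # Original (post-LN) form: LayerNorm(x + Sublayer(x)).
    return layer_norm(x + self_attention(x, *params))

def pre_ln_attention_block(x, params):
    # Pre-LN variant: x + Sublayer(LayerNorm(x)).
    return x + self_attention(layer_norm(x), *params)

rng = np.random.default_rng(0)
seq_len, d_model = 4, 8
x = rng.normal(size=(seq_len, d_model))
params = [rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(4)]

post_out = post_ln_attention_block(x, params)
pre_out = pre_ln_attention_block(x, params)

# Zeroing the output projection silences the attention branch,
# so the pre-LN block passes its input through the skip path unchanged.
zero_params = params[:3] + [np.zeros((d_model, d_model))]
identity_out = pre_ln_attention_block(x, zero_params)
```

In the pre-LN form the input reaches the output untouched whenever the attention branch contributes nothing, which is one way to see why the skip path keeps deep stacks trainable.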

Yang's argument suggests a specific focus on the properties or optimization of these residuals within the attention mechanism. Potential areas of interest could include:

- The flow of information through these pathways.

- Their role in stabilizing training for very long context windows.

- Modifications to the attention computation itself, such as "attention with linear biases" (ALiBi), which change what the attention sub-layer writes into the residual stream.

Without a published paper or talk transcript, any account of his exact argument remains speculative. However, the commentary points to active refinement of core transformer components at Moonshot AI, which may be foundational to the capabilities of models like Kimi.

gentic.news Analysis

This brief commentary is a data point in two significant, ongoing trends we track. First, it reflects the architectural maturation phase of LLMs. After years of scaling model and data size, leading labs like Moonshot AI, DeepSeek, and OpenAI are now deeply focused on architectural efficiency and refinement, aiming to squeeze more capability from each parameter and token. This aligns with our previous coverage of DeepSeek's Mixture-of-Experts innovations and the industry-wide push for more cost-effective inference.

Second, it underscores the growing technical voice of Chinese AI labs in global discourse. Moonshot AI, backed by significant capital, is not just shipping a product (Kimi Chat) but is actively contributing to architectural philosophy. Yang Zhilin's argument about attention residuals can be seen as part of a broader, confident engagement with fundamental AI research from China, challenging the notion that technical leadership is concentrated solely in the West. This follows a pattern of increased technical communication from Chinese entities, which we noted following the release of Qwen2.5.

For practitioners, the key takeaway is to monitor Moonshot AI's future publications. If attention residual optimization is a key component of Kimi's long-context prowess, its formal publication could influence the next wave of efficient transformer variants.

Frequently Asked Questions

What are attention residuals in a transformer model?

Attention residuals refer to the residual (or skip) connections used in the multi-head attention sub-layer of a transformer block. In the standard architecture, the output of the attention computation is added to the original input before layer normalization. This creates a pathway for gradients to flow directly through the network, which is critical for training stability in deep models. Yang Zhilin's comments suggest these specific connections may have under-explored properties important for model performance.
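The gradient claim can be made concrete with a short derivation. As a simplification, it ignores layer normalization and writes one residual sub-layer as y = x + F(x), where F stands for the attention computation; the symbols y, F, and the loss L are notation introduced here for illustration, not taken from the source.

```latex
y = x + F(x)
\quad\Longrightarrow\quad
\frac{\partial y}{\partial x} = I + \frac{\partial F}{\partial x},
\qquad
\frac{\partial \mathcal{L}}{\partial x}
  = \frac{\partial \mathcal{L}}{\partial y}
    \left( I + \frac{\partial F}{\partial x} \right)
```

The identity term I carries the upstream gradient back to x unchanged, so even when the attention Jacobian is very small the signal does not vanish as it passes through the sub-layer.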

Who is Yang Zhilin?

Yang Zhilin is the founder and CEO of Moonshot AI, a prominent Chinese AI company. He holds a PhD from Carnegie Mellon University and previously worked as a research scientist at Google Brain. He is the technical lead behind the Kimi Chat AI assistant, which is known for its industry-leading long-context capabilities.

What is Moonshot AI known for?

Moonshot AI is best known for developing Kimi Chat, an intelligent assistant that supports an exceptionally long context window (reportedly up to 2 million tokens). The company is a major player in China's generative AI sector and raised over $1 billion in funding in early 2024. Its research focuses on pushing the boundaries of context length and efficient model architecture.

Has Moonshot AI published a paper on attention residuals?

As of this reporting, Moonshot AI has not published a formal research paper detailing new work on attention residuals. Yang Zhilin's comments appear to be high-level advocacy for the importance of this architectural component. Technical details or results would have to come from a future publication or model release.