A brief social media post from an account tracking AI developments has pointed to a significant demonstration from Neuralink and ElevenLabs. The companies have reportedly collaborated to "bring back the language that people have lost due to illness" for a Neuralink patient, using ElevenLabs' voice synthesis technology.

What Happened

According to the source, Neuralink and ElevenLabs have created a functional demonstration where a person with a Neuralink brain-computer interface (BCI), who has lost the ability to speak due to illness, can now communicate using an AI-generated voice. The system appears to decode neural signals related to intended speech from the BCI and translate them into synthetic speech using ElevenLabs' technology. The linked video shows a real-time or near-real-time conversion from thought to audible speech.

Context & Technical Implications

This demonstration represents a concrete application of two cutting-edge technologies converging: high-bandwidth neural interfaces and ultra-realistic voice AI.

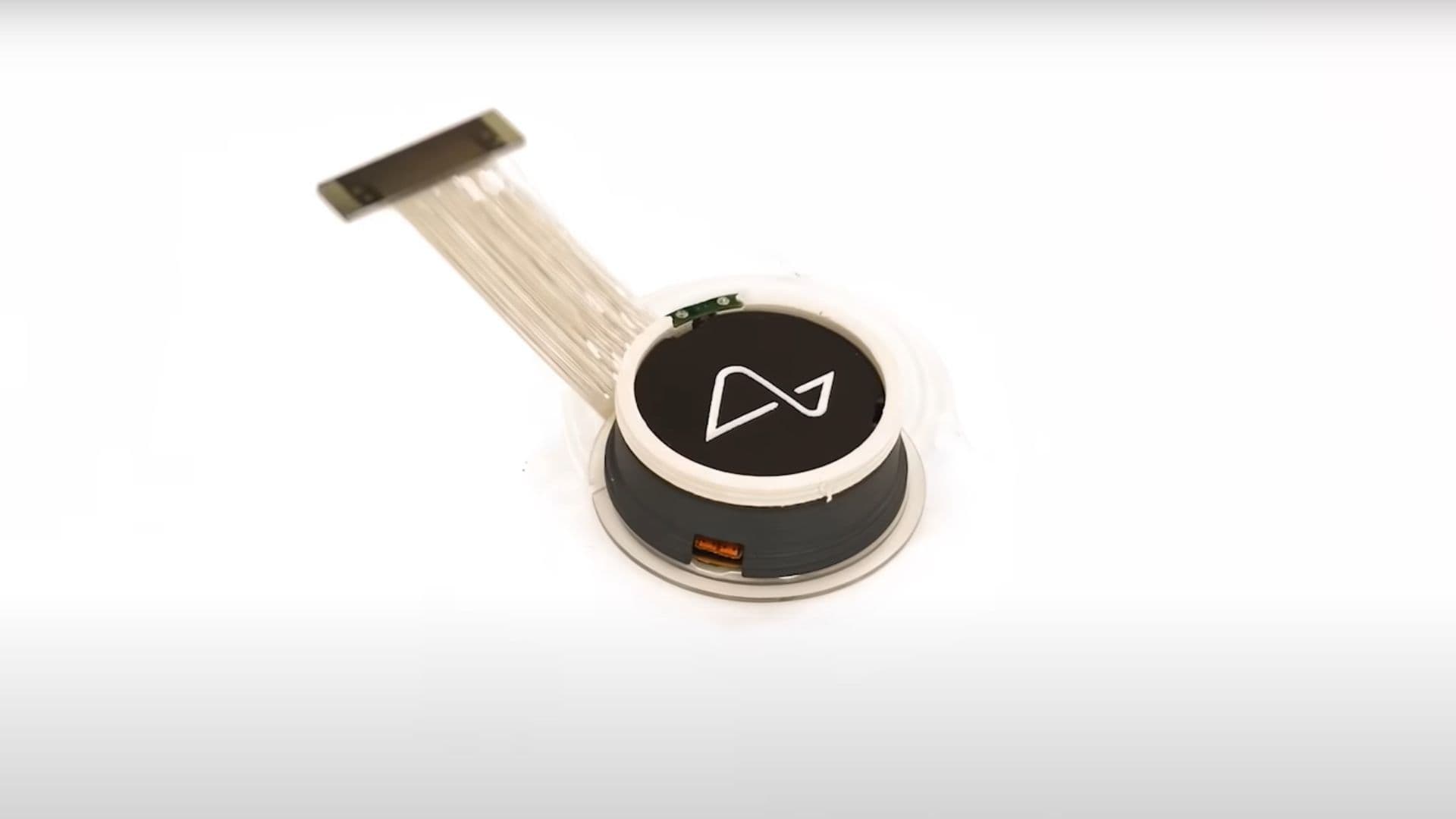

- Neuralink's Role: The company's N1 implant, placed in the motor cortex, is likely capturing neural activity patterns associated with the patient's attempt to form words or control vocal muscles. Previous public updates from Neuralink have focused on cursor control and basic device functionality. This demo shifts the focus squarely to a high-impact clinical application: restoring communication.

- ElevenLabs' Role: The voice AI company provides the synthesis layer. The critical technical challenge here is not just generating a voice, but doing so from a noisy, incomplete neural signal. The system must infer phonemes, words, and prosody from brain data, not text. This suggests significant custom integration and model fine-tuning between the BCI's output and ElevenLabs' voice engine.
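To make "inferring phonemes from a noisy signal" concrete, here is a minimal sketch of one common approach in speech decoding: take per-frame phoneme probabilities, keep the most likely symbol in each frame, merge repeats, and drop a "blank" symbol. Nothing here reflects the actual Neuralink or ElevenLabs implementation, which has not been disclosed. The symbol set, the probabilities, and the function name `greedy_collapse` are all illustrative assumptions.

```python
from typing import List, Sequence

BLANK = "_"  # hypothetical "no phoneme in this frame" symbol


def greedy_collapse(
    frame_probs: Sequence[Sequence[float]], symbols: Sequence[str]
) -> List[str]:
    """Pick the most likely symbol per frame, merge repeats, drop blanks."""
    decoded: List[str] = []
    previous = None
    for probs in frame_probs:
        # Index of the highest-probability symbol in this frame.
        best = symbols[max(range(len(probs)), key=probs.__getitem__)]
        # Emit only when the symbol changes and is not the blank.
        if best != previous and best != BLANK:
            decoded.append(best)
        previous = best
    return decoded


# Made-up probabilities for six consecutive frames (assumption, not real data).
symbols = [BLANK, "HH", "AY"]
frames = [
    [0.7, 0.2, 0.1],  # blank
    [0.2, 0.7, 0.1],  # HH
    [0.3, 0.6, 0.1],  # HH again, merged with the previous frame
    [0.6, 0.2, 0.2],  # blank
    [0.1, 0.1, 0.8],  # AY
    [0.2, 0.1, 0.7],  # AY again, merged
]
print(greedy_collapse(frames, symbols))  # ['HH', 'AY']
```

A production decoder would more plausibly combine such frame-level outputs with a language model rather than rely on a per-frame argmax, but the collapse step shows why noisy, repeated frames can still yield a clean phoneme sequence.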

The demo, while promotional, indicates a move beyond basic motor control benchmarks (like cursor speed) toward integrated, real-world assistive applications. It directly addresses a core promise of BCIs: restoring lost functions.

gentic.news Analysis

This collaboration makes strategic and technical sense. Neuralink's path to clinical validation and public acceptance requires demonstrating unambiguous therapeutic benefit, and restoring speech for patients with conditions like ALS or brainstem stroke is among the most compelling use cases available. Partnering with ElevenLabs, a leading provider of realistic voice cloning and generation, lets Neuralink avoid years of in-house audio AI development and build on existing technology.

This aligns with a broader trend we've covered of BCIs moving from lab demonstrations to integrated product stacks. In December 2025, we reported on Synchron's Stentrode achieving a milestone in continuous home use for text generation. The Neuralink+ElevenLabs demo represents the next step in both form and function: moving from text on a screen to a fluid, personified auditory interface. It also creates immediate competitive pressure on other BCI players like Precision Neuroscience and Paradromics to showcase similar high-level applications.

Technically, the hard problem remains the neural decoding layer. ElevenLabs' model is likely receiving a stream of inferred linguistic features (phonetic, syntactic) from Neuralink's proprietary decoder. The synthesis stage can only voice what the decoder hands it, so the intelligibility and responsiveness of the output serve as an indirect benchmark of the neural decoding pipeline. How natural the voice sounds, by contrast, mostly reflects the synthesis model. Practitioners should watch for whether any technical details on this decoding architecture or latency metrics are released; without them, the demo remains a powerful proof-of-concept rather than a published scientific result.
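If decoding results are ever published, one standard way to score them is word error rate (WER): the word-level edit distance between what the patient intended to say and what the system produced, divided by the number of intended words. The sketch below is a plain implementation of that metric. The example sentences are invented for illustration, and no such figures have been released for this demo.

```python
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref: List[str] = reference.lower().split()
    hyp: List[str] = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference prefix
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# Invented example: one of five intended words is dropped.
wer = word_error_rate("I would like some water", "I would like water")
print(f"{wer:.2f}")  # 0.20
```

WER measures only whether the right words came out. Latency and voice naturalness would need separate measurements.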

Frequently Asked Questions

How does the Neuralink and ElevenLabs system work?

The system likely works in two stages. First, the Neuralink implant records neural activity, likely from motor cortex regions involved in speech, as the patient attempts to speak. A decoder algorithm translates this activity into intended speech components (like phonemes or words). Second, this structured linguistic data is sent to a customized ElevenLabs voice synthesis model, which generates a realistic, audible voice speaking the intended words in real time.
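The two-stage structure described above can be sketched as a toy pipeline. This is an illustration under heavy assumptions, not the companies' architecture: stage one is a nearest-template classifier over made-up two-dimensional feature vectors, and stage two is a placeholder function standing in for a real voice engine. The names `nearest_word`, `fake_synthesize`, and `run_pipeline` are hypothetical, and no real Neuralink or ElevenLabs API is called.

```python
from typing import Callable, Dict, List, Sequence

Vector = Sequence[float]


def nearest_word(features: Vector, templates: Dict[str, Vector]) -> str:
    """Stage 1 (toy decoder): return the word whose template is closest."""
    def squared_distance(a: Vector, b: Vector) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(templates, key=lambda w: squared_distance(features, templates[w]))


def fake_synthesize(text: str) -> bytes:
    """Stage 2 stand-in: a real system would call a voice engine here."""
    return f"<audio:{text}>".encode("utf-8")


def run_pipeline(
    windows: List[Vector],
    templates: Dict[str, Vector],
    synthesize: Callable[[str], bytes] = fake_synthesize,
) -> bytes:
    """Decode each window of neural features to a word, then voice the result."""
    words = [nearest_word(window, templates) for window in windows]
    return synthesize(" ".join(words))


# Made-up templates and feature windows (assumptions, not recorded data).
templates = {"hello": [1.0, 0.0], "water": [0.0, 1.0]}
windows = [[0.9, 0.2], [0.1, 0.8]]
audio = run_pipeline(windows, templates)
print(audio)  # b'<audio:hello water>'
```

Passing the synthesizer in as a parameter mirrors the division of labor in the article: the decoder and the voice engine can be developed and swapped independently as long as they agree on the intermediate representation.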

What illnesses could this technology help?

This technology is primarily aimed at conditions that result in anarthria or severe dysarthria—the loss of the ability to articulate speech—while cognitive language function remains intact. This includes advanced amyotrophic lateral sclerosis (ALS), brainstem strokes, certain traumatic brain injuries, and some cases of cerebral palsy.

Is this technology available to patients now?

No, this is a demonstration. Neuralink's device is still in early clinical trials. While this demo shows a potential application, regulatory approval (from the FDA) for a commercial speech-restoration system is likely years away. The demo is a critical step in proving the concept and guiding future clinical study designs.

How is this different from Stephen Hawking's speech system?

Stephen Hawking used a system that detected minute cheek muscle movements to select letters, forming words slowly via text-to-speech. The Neuralink+ElevenLabs system aims to be fundamentally different: it bypasses the need for any residual muscle control by reading the intention to speak directly from the brain. The goal is a faster, more natural, and more fluid communication channel that mirrors the speed and expressiveness of natural speech.