A significant advancement in AI coding assistant evaluation has emerged with a new benchmark that addresses long-standing credibility issues in the field. Unlike traditional benchmarks that rely on synthetic test cases, this approach uses real GitHub pull requests to assess AI tools' performance in authentic development scenarios.

The Credibility Framework

The benchmark's credibility stems from five key design principles that distinguish it from previous evaluation methods:

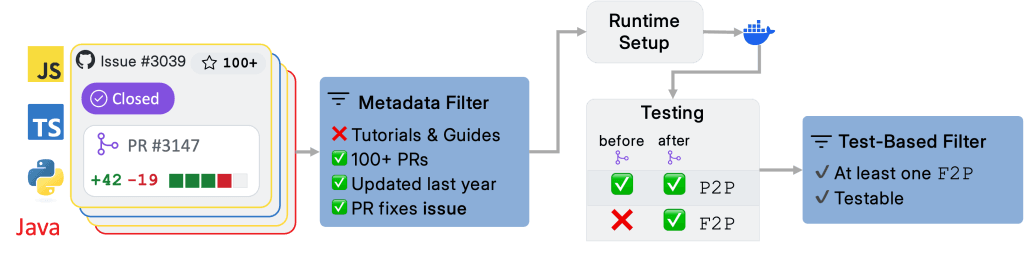

Real Pull Requests: Instead of artificial coding challenges, the benchmark uses actual pull requests from open-source projects, capturing the complexity and context of real-world development work.

F1 Scoring: The evaluation measures both precision (correctness of suggestions) and recall (completeness of solutions), providing a balanced assessment rather than focusing solely on one metric.

Comprehensive Tool Comparison: Eight different AI coding tools were evaluated, including the benchmark creators' own tool, ensuring a fair competitive landscape.

Transparent Methodology: The full evaluation methodology has been published with no hidden details, allowing for peer review and replication.

Inclusive Results: All results were published, including those where the benchmark creators' own tool didn't perform well, demonstrating scientific integrity.

Why This Matters for AI Development

Traditional AI coding benchmarks have faced criticism for their artificial nature. Synthetic test cases often fail to capture the nuanced requirements, edge cases, and contextual understanding needed in real software development. This has led to tools that perform well on benchmarks but struggle in production environments.

By using real pull requests, this benchmark evaluates how AI tools handle:

- Complex, multi-file changes

- Integration with existing codebases

- Understanding of project-specific conventions

- Real bug fixes and feature implementations

The Technical Approach

The F1 scoring system combines precision and recall metrics, addressing a common weakness in AI evaluation where tools might generate many suggestions (high recall) but with low accuracy, or generate few but highly accurate suggestions (high precision). The F1 score provides a harmonic mean that balances both concerns.

This balanced approach is particularly important for coding assistants, where:

- High precision ensures developers don't waste time reviewing incorrect suggestions

- High recall ensures the AI doesn't miss important fixes or improvements

Industry Implications

The transparent methodology represents a shift toward more rigorous AI evaluation standards. By publishing unfavorable results alongside successes, the benchmark creators have established a precedent for scientific honesty in AI research.

This approach could influence:

- Tool Development: AI companies may focus more on real-world performance rather than benchmark optimization

- Enterprise Adoption: Organizations can make more informed decisions about which coding assistants to implement

- Research Direction: Academic and industry research may adopt similar real-world evaluation methods

Challenges and Limitations

While this benchmark represents significant progress, challenges remain:

- Dataset Bias: Real pull requests may still reflect biases in open-source development practices

- Context Limitations: The benchmark may not capture proprietary enterprise development contexts

- Evolutionary Pace: As AI tools rapidly improve, benchmarks must continuously update their evaluation methods

The Future of AI Coding Evaluation

This benchmark sets a new standard for credibility in AI coding assistant evaluation. Future developments might include:

- Specialized Benchmarks: Domain-specific evaluations for different programming languages, frameworks, or application types

- Longitudinal Studies: Tracking tool performance over time as codebases evolve

- Human-in-the-Loop Evaluation: Measuring how tools enhance developer productivity rather than just code generation accuracy

Conclusion

The move toward real-world pull request evaluation represents a maturation of AI coding assessment methodologies. By prioritizing authenticity, transparency, and balanced metrics, this benchmark provides developers and organizations with more reliable guidance for selecting and improving AI coding tools.

As AI continues to transform software development, credible evaluation frameworks like this will be essential for separating genuine advancements from benchmark-optimized illusions of progress.

Source: Twitter thread by @hasantoxr discussing new AI coding benchmark methodology