Key Takeaways

- Researchers propose DITaR, a dual-view method to detect and rectify harmful fake orders embedded in user sequences.

- It aims to protect recommendation integrity while preserving useful data; the paper reports that it outperforms state-of-the-art baselines in experiments on three datasets.

- The work addresses a critical vulnerability in e-commerce and retail AI systems.

What Happened

A new research paper, "Unbiased Rectification for Sequential Recommender Systems Under Fake Orders," was posted to the arXiv preprint server on January 24, 2026. The work tackles a growing threat to e-commerce platforms: fake orders deliberately embedded within genuine user interaction sequences to manipulate recommendation outcomes.

The authors identify three primary attack vectors: click farming (artificially inflating interaction counts), context-irrelevant substitutions (inserting off-topic items), and sequential perturbations (disrupting the natural order of actions). Unlike attacks that inject entirely fake user profiles, these "fake orders" are more insidious because they corrupt the historical data of real users, directly distorting the learned models of user preference and intent.
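To make the three attack types concrete, here is a minimal Python sketch that applies each one to a toy interaction sequence. The function names, item names, and parameters are hypothetical, chosen for illustration only; the article does not describe how the paper actually constructs its fake orders.

```python
import random

def click_farm(seq, target, times, rng):
    """Click farming: insert `target` at random positions to inflate its count."""
    out = list(seq)
    for _ in range(times):
        out.insert(rng.randrange(len(out) + 1), target)
    return out

def substitute(seq, position, off_topic_item):
    """Context-irrelevant substitution: swap one genuine item for an unrelated one."""
    out = list(seq)
    out[position] = off_topic_item
    return out

def perturb(seq, i, j):
    """Sequential perturbation: exchange two positions to disturb the natural order."""
    out = list(seq)
    out[i], out[j] = out[j], out[i]
    return out

# Toy sequence of a real user (hypothetical item names).
genuine = ["boots", "socks", "laces", "polish"]
rng = random.Random(0)  # fixed seed so the example is repeatable

farmed = click_farm(genuine, "target_item", 3, rng)
substituted = substitute(genuine, 2, "blender")
perturbed = perturb(genuine, 0, 3)

print(farmed)
print(substituted)
print(perturbed)
```

In all three cases the user is real and most of the history is genuine, which is what separates these attacks from injected fake profiles.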

The core challenge is rectifying a compromised system without the prohibitive cost of full model retraining. The paper's key insight is that not all fake orders are equally harmful; some can even have a data augmentation effect. Therefore, a blanket removal approach is suboptimal.

Technical Details

To address this, the researchers propose Dual-view Identification and Targeted Rectification (DITaR). The method operates in two stages:

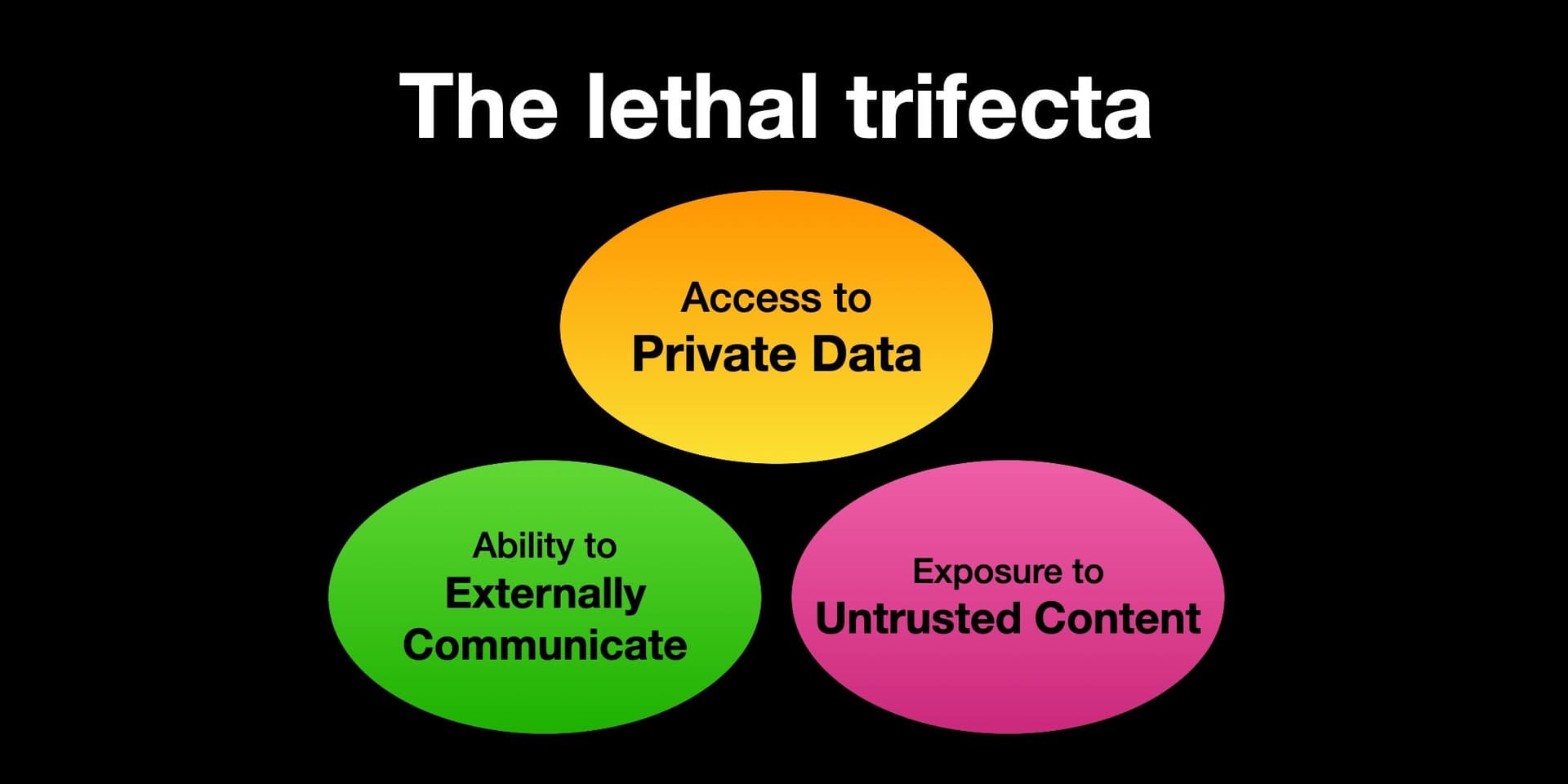

Dual-view Identification: The system analyzes each suspicious interaction from two distinct perspectives:

- Collaborative View: Examines the interaction patterns—does this user's action align with the behavior of similar users?

- Semantic View: Assesses the content relevance—does this item fit the thematic context of the user's sequence?

By obtaining differentiated representations from these views, DITaR aims for more precise detection than single-view methods.
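The two-view idea can be illustrated with a small Python sketch. It assumes each item has one embedding per view and scores an interaction by its cosine similarity to the rest of the sequence. The embeddings, the 0.5 threshold, and the rule of flagging only items that score low in both views are assumptions made for this example, not the paper's actual identification procedure.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def mean_vector(vectors):
    """Element-wise mean of a list of vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def view_score(seq, index, embeddings):
    """Similarity of seq[index] to the mean of the other items, within one view."""
    others = [embeddings[item] for k, item in enumerate(seq) if k != index]
    return cosine(embeddings[seq[index]], mean_vector(others))

def flag_suspicious(seq, collab_emb, semantic_emb, threshold=0.5):
    """Flag items that look out of place in BOTH views (an assumed rule)."""
    flagged = []
    for i, item in enumerate(seq):
        c = view_score(seq, i, collab_emb)
        s = view_score(seq, i, semantic_emb)
        if c < threshold and s < threshold:
            flagged.append((i, item))
    return flagged

# Hypothetical 2-D embeddings: one table per view.
collab_emb = {"boots": [1.0, 0.1], "socks": [0.9, 0.2],
              "laces": [1.0, 0.0], "blender": [0.0, 1.0]}
semantic_emb = {"boots": [0.8, 0.1], "socks": [0.9, 0.1],
                "laces": [0.7, 0.2], "blender": [0.1, 1.0]}

seq = ["boots", "socks", "blender", "laces"]
flagged = flag_suspicious(seq, collab_emb, semantic_emb)
print(flagged)
```

Keeping the two scores separate until the final decision is the point of the design: an item can be behaviorally unusual yet thematically consistent, or the reverse, and a single blended score would hide that difference.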

Targeted Rectification: Not all detected fake orders are removed. A filtering mechanism selects only the ones deemed "truly harmful." For these, the system performs a targeted rectification using gradient ascent on the model's parameters. This technique essentially "un-learns" the influence of the harmful sample. Crucially, it maintains the original data volume and sequence structure, preserving potentially useful information from benign or augmentative fake orders and preventing residual bias.
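The gradient-ascent step can be shown on a deliberately tiny model. The sketch below trains a logistic-regression classifier by gradient descent on data that includes one harmful sample, then takes a few gradient-ascent steps on that sample's loss so the model fits it less well. The model, data, learning rates, and step counts are all assumptions for illustration; the paper applies the idea to sequential recommenders, and its exact update rule is not given in this article.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def loss_and_grad(w, x, y):
    """Binary cross-entropy for one sample and its gradient w.r.t. the weights."""
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)))
    eps = 1e-12  # guards against log(0)
    loss = -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))
    grad = [(p - y) * xi for xi in x]
    return loss, grad

def train(samples, lr=0.1, epochs=200):
    """Ordinary gradient DESCENT over all samples (clean and harmful alike)."""
    w = [0.0] * len(samples[0][0])
    for _ in range(epochs):
        for x, y in samples:
            _, g = loss_and_grad(w, x, y)
            w = [wi - lr * gi for wi, gi in zip(w, g)]
    return w

def unlearn(w, harmful, lr=0.05, steps=5):
    """Gradient ASCENT on the harmful samples only: push their loss back up."""
    for _ in range(steps):
        for x, y in harmful:
            _, g = loss_and_grad(w, x, y)
            w = [wi + lr * gi for wi, gi in zip(w, g)]
    return w

# Hypothetical features: [feature_a, feature_b, bias]; label 1 = positive interaction.
genuine = [([1.0, 0.0, 1.0], 1), ([0.0, 1.0, 1.0], 0),
           ([1.0, 0.2, 1.0], 1), ([0.1, 1.0, 1.0], 0)]
harmful = [([0.0, 1.0, 1.0], 1)]  # fake positive that contradicts genuine behavior

w_trained = train(genuine + harmful)
loss_before, _ = loss_and_grad(w_trained, *harmful[0])

w_rectified = unlearn(w_trained, harmful)
loss_after, _ = loss_and_grad(w_rectified, *harmful[0])

print(loss_before, loss_after)
```

Only the model weights change here; the training data is left untouched, which mirrors the article's point that the method keeps the original data volume and sequence structure. In practice the step size and number of ascent steps need care, since too much ascent can also damage what the model learned from genuine interactions.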

The paper reports "extensive experiments on three datasets" demonstrating that DITaR outperforms state-of-the-art baselines in recommendation quality (e.g., Hit Rate, NDCG), computational efficiency, and system robustness against attacks.
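For readers unfamiliar with the two quality metrics, the sketch below computes Hit Rate@K and NDCG@K in their common single-target form, where each test case has one held-out item. This evaluation setup is an assumption; the article does not describe the paper's exact protocol or cutoff values.

```python
import math

def hit_rate_at_k(ranked, target, k):
    """1.0 if the held-out item appears in the top-k recommendations, else 0.0."""
    return 1.0 if target in ranked[:k] else 0.0

def ndcg_at_k(ranked, target, k):
    """With one relevant item, NDCG@k reduces to 1 / log2(rank + 1) for a hit."""
    top = ranked[:k]
    if target not in top:
        return 0.0
    rank = top.index(target) + 1  # 1-indexed position
    return 1.0 / math.log2(rank + 1)

def evaluate(cases, k):
    """Average both metrics over (ranked_list, held_out_item) pairs."""
    n = len(cases)
    hr = sum(hit_rate_at_k(r, t, k) for r, t in cases) / n
    ndcg = sum(ndcg_at_k(r, t, k) for r, t in cases) / n
    return hr, ndcg

# Three toy test cases: hit at rank 1, hit at rank 3, and a miss.
cases = [(["a", "b", "c"], "a"),
         (["a", "b", "c"], "c"),
         (["a", "b", "c"], "z")]

hr, ndcg = evaluate(cases, k=3)
print(hr, ndcg)
```

Hit Rate only asks whether the right item made the list, while NDCG also rewards placing it near the top, so the two together show both coverage and ranking quality.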

Retail & Luxury Implications

This research has direct and significant implications for high-value retail and luxury e-commerce, where recommendation integrity is paramount.

The Threat is Real: In competitive markets, bad actors have a strong incentive to manipulate exposure. A rival brand or a third-party seller could use fake orders to:

- Suppress a competitor's item by associating it with irrelevant user sequences, causing the algorithm to deprioritize it.

- Artificially boost visibility for a target product by embedding it in the sequences of users with high-value profiles.

- Corrupt user taste profiles, leading to poor personalization and eroded customer trust—a critical asset in luxury.

The DITaR Approach: For retail AI teams, the value proposition of DITaR is its efficiency. Completely retraining massive sequential models (like Transformers or GRUs) on cleansed data is computationally expensive and slow. A method that can surgically correct a live system is highly attractive. The dual-view detection is particularly relevant for luxury, where the "semantic view" must understand nuanced attributes like brand ethos, craftsmanship, and seasonal trends, not just collaborative patterns.

Implementation Considerations: Adopting such a method requires mature MLOps pipelines. Teams need the capability to monitor recommendation drift, flag potential manipulation campaigns, and apply corrective updates. The research is still at the academic preprint stage. It is hosted on arXiv, which has been mentioned in 292 prior articles on our platform, a sign of its central role in disseminating early AI research. The method therefore needs rigorous validation, and possibly adaptation, for specific production-scale retail systems before deployment.

gentic.news Analysis

This paper enters a rapidly evolving conversation about securing AI-driven retail systems. It follows a clear trend of arXiv serving as the primary conduit for cutting-edge recommender systems research, having just hosted papers on topics from cold-start scenarios (March 31) to cross-domain sequential recommendation (April 10, covered in our article "CoDiS: A Causal Framework for Cross-Domain Sequential Recommendation"). The focus on sequential recommenders is key, as these models power the "next-best-product" suggestions and curated discovery journeys that define modern luxury digital storefronts.

The work on DITaR aligns with a broader industry shift from purely performance-focused AI to robust and trustworthy AI. It addresses a vulnerability that sits at the intersection of cybersecurity and machine learning. For luxury brands, where brand equity and curated experience are the product, an algorithm manipulated to suggest off-brand or counterfeit-adjacent items is a direct threat to brand value.

However, it also highlights a gap. The research is methodological and evaluated on standard datasets. The real test for retail AI leaders will be in translating this academic defense into an operational monitoring and response system. It necessitates collaboration between data scientists, platform security teams, and marketplace integrity units, a cross-functional challenge as complex as the algorithm itself. This paper provides a promising theoretical shield; building the practical armor around a live, billion-interaction recommendation engine is the next critical step.