Nvidia Blackwell GPUs ship Cluster Launch Control (CLC), a hardware tile scheduler that, in Colfax International benchmarks, delivers up to 15% higher GEMM throughput than static persistent scheduling. CLC assigns work tiles to thread block clusters dynamically, reducing load imbalance while avoiding the per-tile startup overhead of single-tile scheduling.

Key facts

- CLC is a hardware-supported feature on Nvidia Blackwell GPUs.

- Up to 15% higher GEMM throughput vs static persistent scheduling.

- Eliminates load imbalance in grouped GEMM workloads.

- Integrates with CuTe DSL kernels for easy adoption.

- Blackwell is the Nvidia GPU microarchitecture behind the B100, B200, and GB200.

The Tile Scheduling Problem

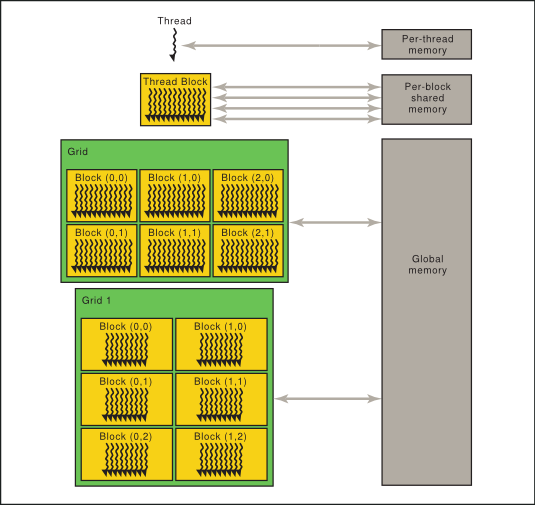

Matrix multiplication (GEMM) is the computational backbone of AI training and inference. Parallelizing it requires partitioning the output into tiles and assigning each tile to a processor — a CTA or cluster of CTAs in CUDA's execution model. The choice of scheduling strategy directly determines GPU utilization and throughput.

According to Colfax International, naive single-tile scheduling launches a grid matching the tile count, so every tile pays a fixed startup cost (pipeline initialization, descriptor setup) and the epilogue of one tile cannot overlap with the mainloop of the next. Static persistent scheduling launches only as many clusters as can run concurrently and has each one loop over several tiles, which lets tile phases overlap. Because the tile-to-cluster assignment is fixed up front, however, it suffers load imbalance, especially in grouped GEMMs where problem shapes vary.
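The load-imbalance argument can be made concrete with a toy model. The sketch below is not a GPU benchmark and does not model CLC itself: it treats each cluster as a generic worker, uses made-up tile costs, and compares a fixed round-robin assignment (standing in for static persistence) against handing each tile to whichever worker frees up first (standing in for dynamic scheduling). The function names and the workload are assumptions for illustration.

```python
import heapq


def static_persistent_makespan(tile_costs, num_workers):
    """Tile i is pre-assigned to worker i % num_workers (fixed linear order)."""
    finish = [0.0] * num_workers
    for i, cost in enumerate(tile_costs):
        finish[i % num_workers] += cost
    return max(finish)


def dynamic_makespan(tile_costs, num_workers):
    """Each tile, taken in order, goes to the worker that becomes free first."""
    free_at = [0.0] * num_workers
    heapq.heapify(free_at)
    for cost in tile_costs:
        earliest = heapq.heappop(free_at)
        heapq.heappush(free_at, earliest + cost)
    return max(free_at)


# Hypothetical grouped-GEMM-like workload: a few expensive tiles among cheap ones.
tile_costs = [8, 1, 1, 1, 8, 1, 1, 1, 8, 1, 1, 1]
num_workers = 4

static_time = static_persistent_makespan(tile_costs, num_workers)
dynamic_time = dynamic_makespan(tile_costs, num_workers)
print(static_time, dynamic_time)
```

With this particular workload the round-robin assignment sends all three expensive tiles to the same worker and finishes at time 24, while the dynamic assignment finishes at time 10. The gap is exaggerated by construction; it only illustrates why uneven tile costs penalize a fixed assignment.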

How CLC Works

Cluster Launch Control (CLC) is a hardware feature on Blackwell that allows dynamic persistent tile scheduling. Instead of pre-assigning tiles in a linear order, CLC lets clusters request new work tiles on the fly from a hardware-managed queue. This combines the overlapping benefits of persistence with the load-balancing of single-tile scheduling, without the per-tile startup cost.

Colfax's implementation uses CuTe (CUDA Templates) DSL kernels, demonstrating that CLC can be integrated into existing codebases without rewriting the core GEMM loop. The hardware scheduler tracks cluster occupancy and distributes tiles only to idle clusters, automatically adapting to workload imbalance.
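The control flow this describes, a persistent worker that repeatedly asks for its next tile until none remain, can be sketched on the CPU. This is only an analogy under stated assumptions: `TileSource`, `try_get_tile`, and `persistent_worker` are hypothetical names, a lock-protected counter stands in for the hardware scheduler, and none of this reflects the actual CLC instructions or the CuTe DSL API.

```python
import threading


class TileSource:
    """Hypothetical stand-in for the hardware scheduler: hands out each tile once."""

    def __init__(self, num_tiles):
        self._next = 0
        self._num_tiles = num_tiles
        self._lock = threading.Lock()

    def try_get_tile(self):
        """Return the next unclaimed tile index, or None when all are claimed."""
        with self._lock:
            if self._next >= self._num_tiles:
                return None
            tile = self._next
            self._next += 1
            return tile


def persistent_worker(worker_id, source, processed):
    # One-time setup (pipelines, descriptors) would happen here, once per worker.
    while True:
        tile = source.try_get_tile()
        if tile is None:
            break  # no work left: the worker exits
        processed[tile] = worker_id  # stand-in for mainloop + epilogue on this tile


def run(num_tiles, num_workers):
    source = TileSource(num_tiles)
    processed = [None] * num_tiles
    workers = [
        threading.Thread(target=persistent_worker, args=(w, source, processed))
        for w in range(num_workers)
    ]
    for t in workers:
        t.start()
    for t in workers:
        t.join()
    return processed


assignment = run(num_tiles=32, num_workers=4)
```

After the run, `assignment[i]` records which worker handled tile `i`. Which worker gets which tile varies between runs, which is the point: the mapping is decided at run time by availability rather than fixed before launch.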

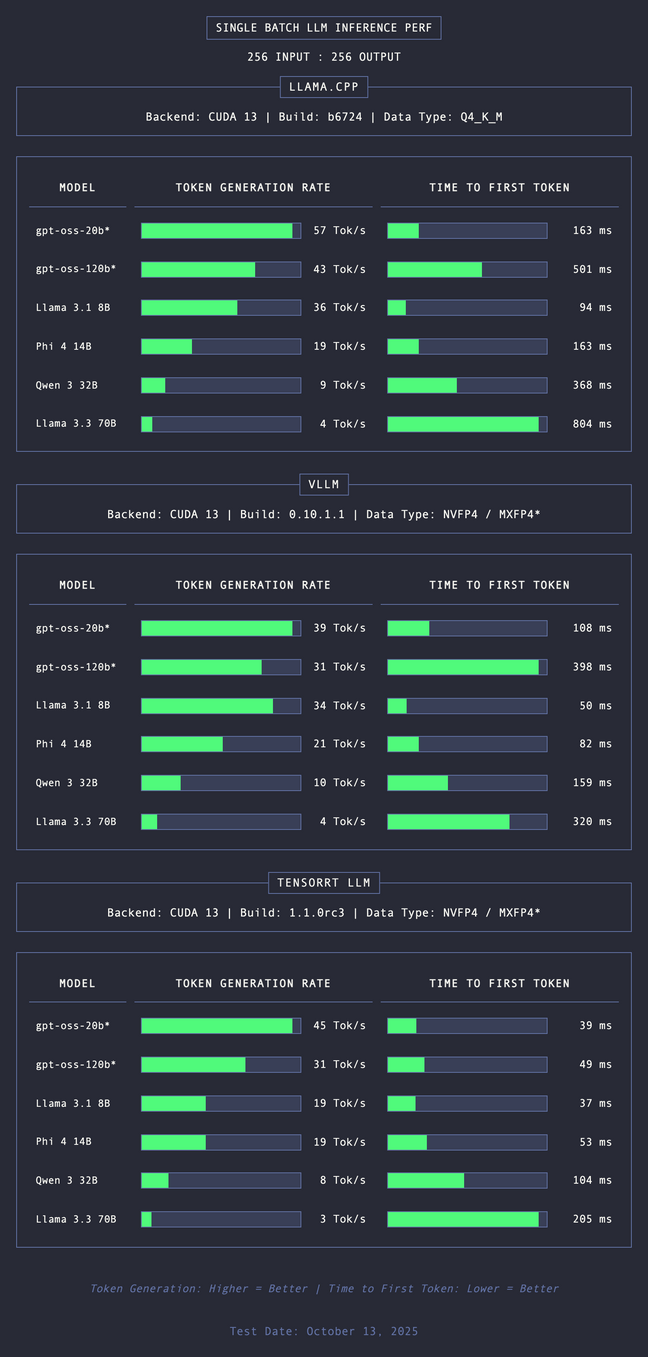

Benchmark Results

Colfax International benchmarks show CLC delivers up to 15% higher GEMM throughput than static persistent scheduling on Blackwell GPUs. The gain is most pronounced in grouped GEMM scenarios where tile compute times vary significantly — exactly the load-imbalance regime where static persistence falls short.
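For readers who think in runtime rather than throughput, the two are related by a reciprocal, so a throughput gain translates into a somewhat smaller percentage of time saved. The helper below is a small illustrative calculation, not part of the Colfax benchmark; the function name is made up.

```python
def time_saved_fraction(throughput_gain):
    """Fraction of runtime saved when throughput rises by `throughput_gain`.

    A gain of 0.15 means 15% higher throughput for the same amount of work.
    """
    return 1.0 - 1.0 / (1.0 + throughput_gain)


saved = time_saved_fraction(0.15)
print(f"{saved:.1%}")  # prints 13.0%
```

In other words, the best case of 15% higher throughput corresponds to finishing the same GEMM in roughly 13% less time.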

This is a structural improvement: it does not require larger tiles, higher clock speeds, or new number formats. It is purely a scheduling optimization that extracts more work from the same silicon.

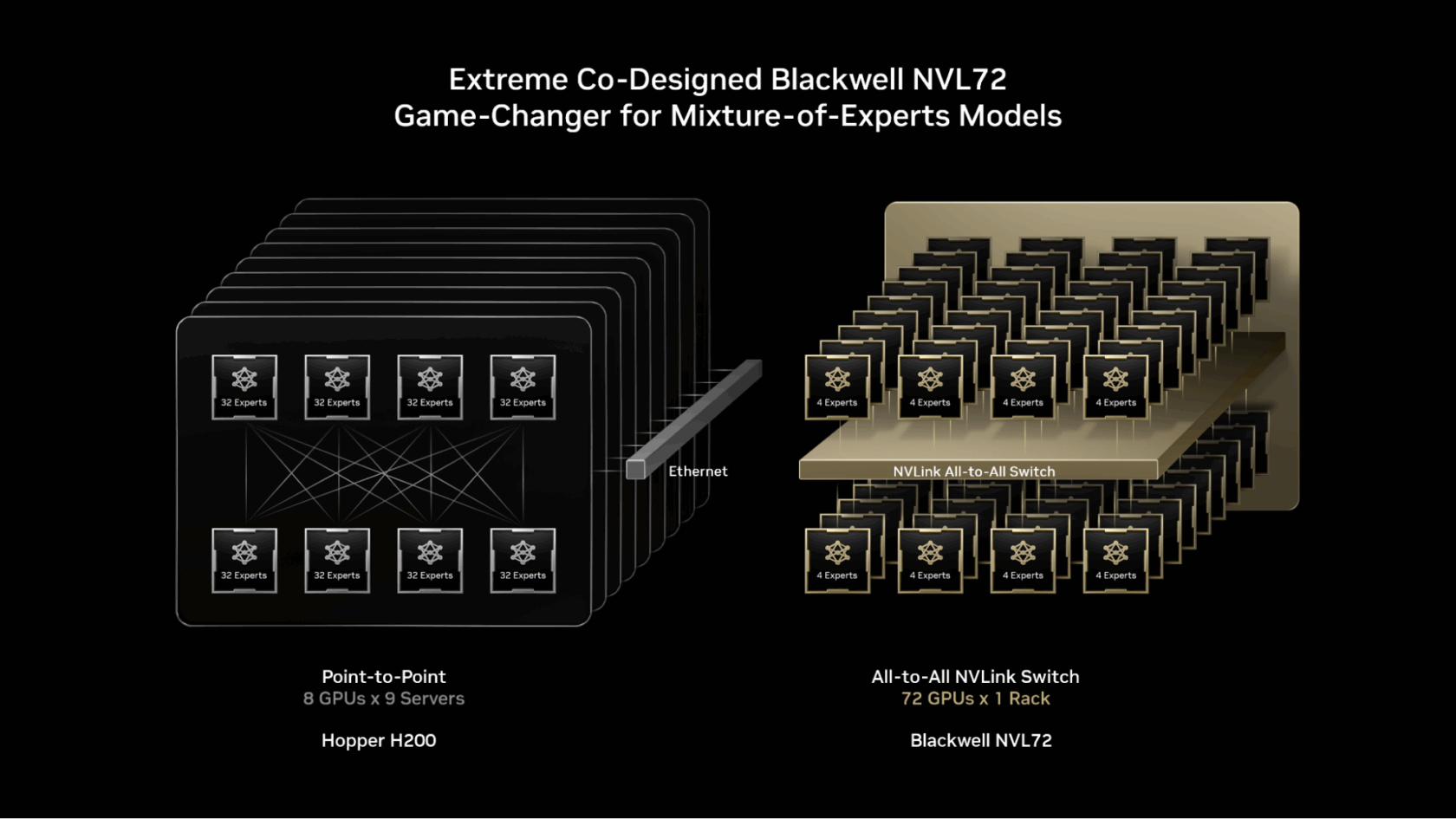

The Unique Take

CLC is not a generational leap in raw FLOPS; it is a scheduling architecture that closes the gap between theoretical peak and realized throughput. According to the source, Nvidia is betting that as AI workloads diversify beyond dense transformers, dynamic scheduling will matter more than raw compute density. Competitors like AMD, whose MI350P targets existing server installs with PCIe form factors, must now match not just memory bandwidth but scheduling sophistication.

What to watch

Watch for Nvidia's CUDA 13 release and whether CLC becomes the default scheduling mode in cuBLAS. Also monitor AMD's response in the MI400 scheduling architecture: if CLC yields consistent 10-15% gains, competitors will have to match it at the hardware level, not just in software.