In a strategic blog post, Nvidia has made a direct argument to enterprise buyers: the only metric that matters when evaluating AI infrastructure is cost per token. The company contends that as data centers evolve into "AI token factories" primarily running inference for generative and agentic AI, traditional metrics like FLOPS per dollar or chip specifications are fundamentally misaligned with business outcomes.

Key Takeaways

- Nvidia asserts that total cost of ownership for AI infrastructure must be measured in cost per delivered token, not raw compute metrics.

- Nvidia argues this shift is critical for profitably scaling agentic AI applications, which generate large token volumes per task.

The Core Argument: From Inputs to Outputs

Nvidia's thesis is that enterprises are optimizing for the wrong thing. The post distinguishes between three key metrics:

- Compute Cost: What you pay for infrastructure (cloud or on-prem).

- FLOPS per Dollar: Raw computing power purchased per dollar.

- Cost Per Token: The all-in cost to produce each delivered token (typically cost per million tokens).

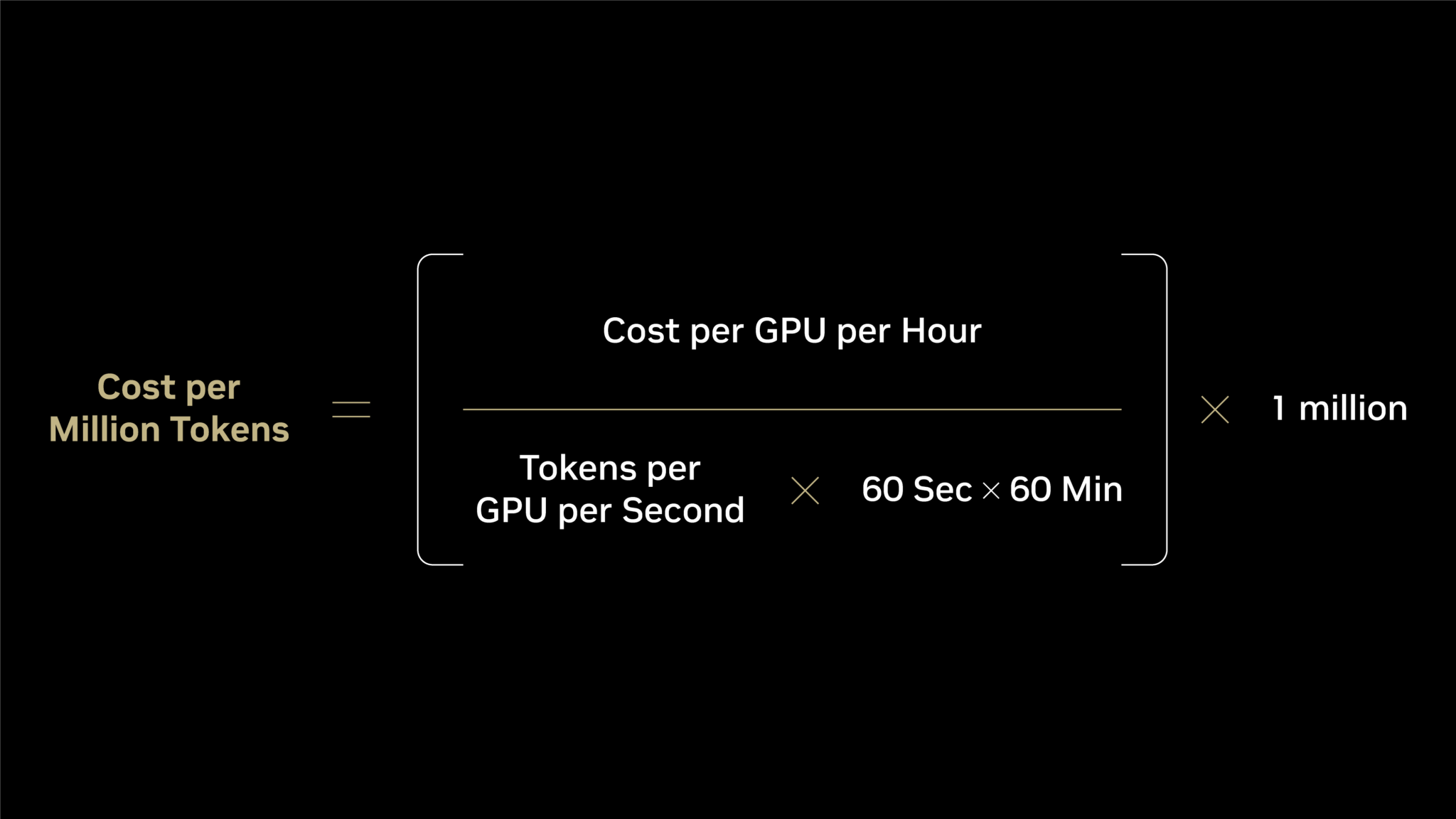

The first two are input metrics. The third is an output metric. Nvidia argues that focusing on inputs while the business runs on output creates a "fundamental mismatch." The company states that "cost per token determines whether enterprises can profitably scale AI" and claims it delivers the industry's lowest cost per token.

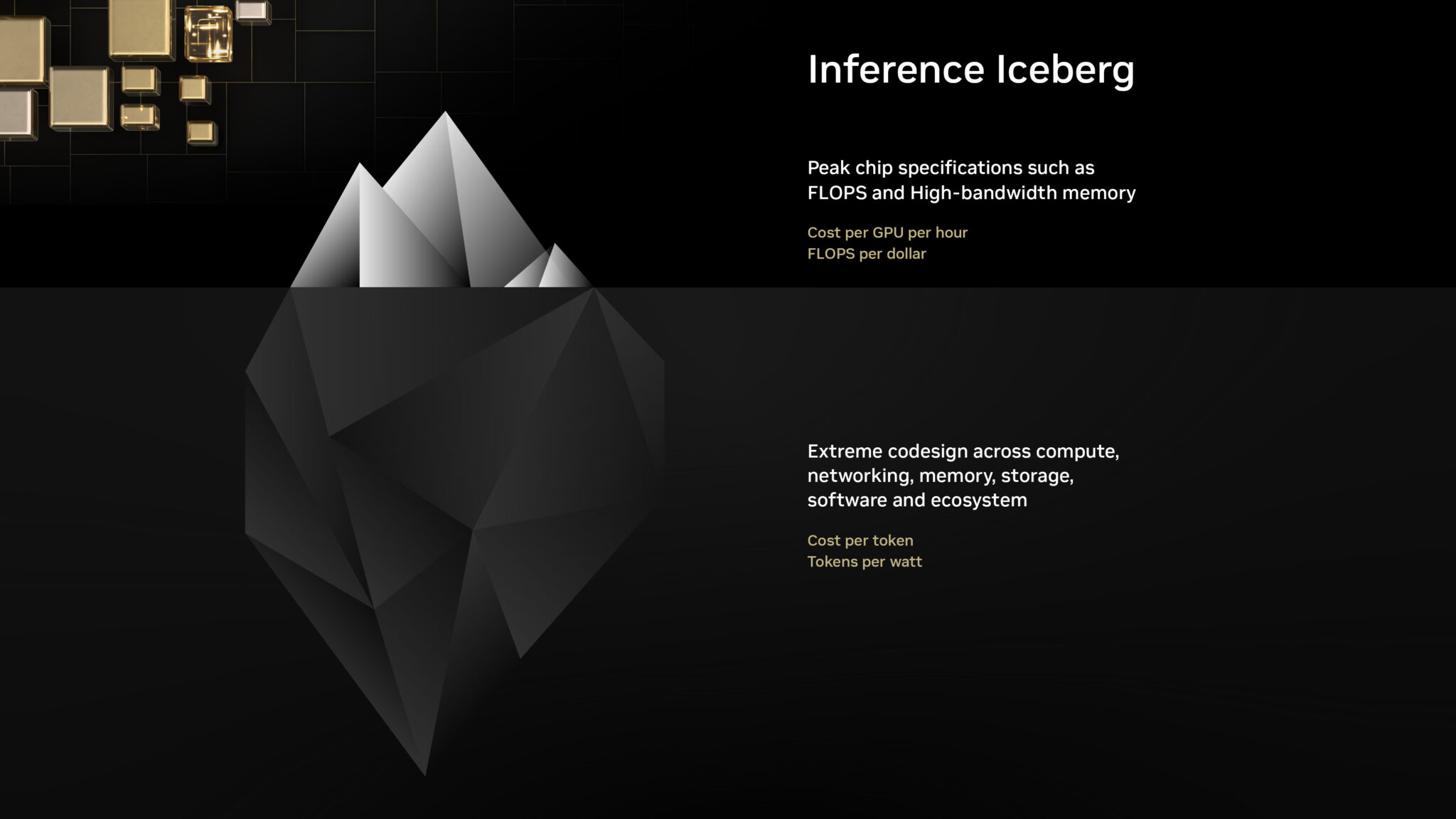

The "Inference Iceberg" and the Denominator Problem

The central technical argument revolves around the equation for calculating cost per million tokens. While many enterprises focus on the numerator (cost per GPU per hour), Nvidia argues the real leverage lies in the denominator: maximizing delivered token output.
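The article does not reproduce Nvidia's equation. One straightforward way to write it is shown below, using our own notation rather than Nvidia's: $C_{\text{hr}}$ is the all-in cost of one GPU-hour and $T$ is delivered tokens per second on that GPU. The example figures ($3 per GPU-hour, 1,000 tokens per second) are hypothetical.

```latex
\text{Cost per 1M tokens}
  = \frac{C_{\text{hr}}}{(3600 \cdot T) / 10^{6}}
  = \frac{10^{6} \, C_{\text{hr}}}{3600 \, T}
\qquad
\text{e.g. } \frac{10^{6} \times 3}{3600 \times 1000} \approx \$0.83
```

Because $T$ sits in the denominator, doubling delivered throughput at a fixed hourly price halves the cost per million tokens. This is the lever Nvidia says buyers overlook when they compare only hourly prices.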

Nvidia frames this as an "inference iceberg." Above the waterline sit the metrics buyers usually ask about:

- Cost per GPU hour

- Peak petaflops and high-bandwidth memory specs

Beneath the surface are the factors that actually determine real-world token output, and therefore cost per token:

- Hardware performance under real workloads

- Software optimization (e.g., inference engines, kernels)

- Ecosystem support and integration

- System-level utilization and efficiency

Driving up the denominator (tokens delivered per second) has two direct business impacts:

- Minimizes Token Cost: Lowers the cost per token, increasing profit margin per AI interaction.

- Maximizes Revenue: More tokens delivered per second and per megawatt means more intelligence can be packaged into products and services, generating more revenue from the same capital investment.
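The two impacts above can be illustrated with a small calculation. This is a minimal sketch, not Nvidia's model. The $3.00 GPU-hour cost and $2.00 selling price per million tokens are hypothetical. The sketch also assumes every delivered token is sold, and it models revenue per GPU-hour rather than per megawatt.

```python
# Illustrative only: all prices and throughputs below are hypothetical.
SECONDS_PER_HOUR = 3600


def cost_per_million_tokens(cost_per_gpu_hour: float, tokens_per_second: float) -> float:
    """All-in hourly cost divided by the millions of tokens delivered in that hour."""
    tokens_per_hour = tokens_per_second * SECONDS_PER_HOUR
    return cost_per_gpu_hour / (tokens_per_hour / 1_000_000)


def hourly_revenue(tokens_per_second: float, price_per_million: float) -> float:
    """Revenue from one GPU-hour, assuming every delivered token is sold at a fixed price."""
    return tokens_per_second * SECONDS_PER_HOUR / 1_000_000 * price_per_million


gpu_hour = 3.00  # hypothetical $/GPU-hour (the numerator, held fixed)
price = 2.00     # hypothetical $ charged per million tokens

for tps in (1_000, 2_000):  # baseline vs. doubled throughput (the denominator)
    cost = cost_per_million_tokens(gpu_hour, tps)
    margin = price - cost
    revenue = hourly_revenue(tps, price)
    print(f"{tps} tok/s: cost ${cost:.3f}/M tokens, "
          f"margin ${margin:.3f}/M, revenue ${revenue:.2f}/GPU-hour")
```

With these assumed inputs, doubling throughput cuts the cost per million tokens from about $0.833 to about $0.417, which raises the margin per token. It also doubles revenue per GPU-hour from $7.20 to $14.40 on the same hardware spend.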

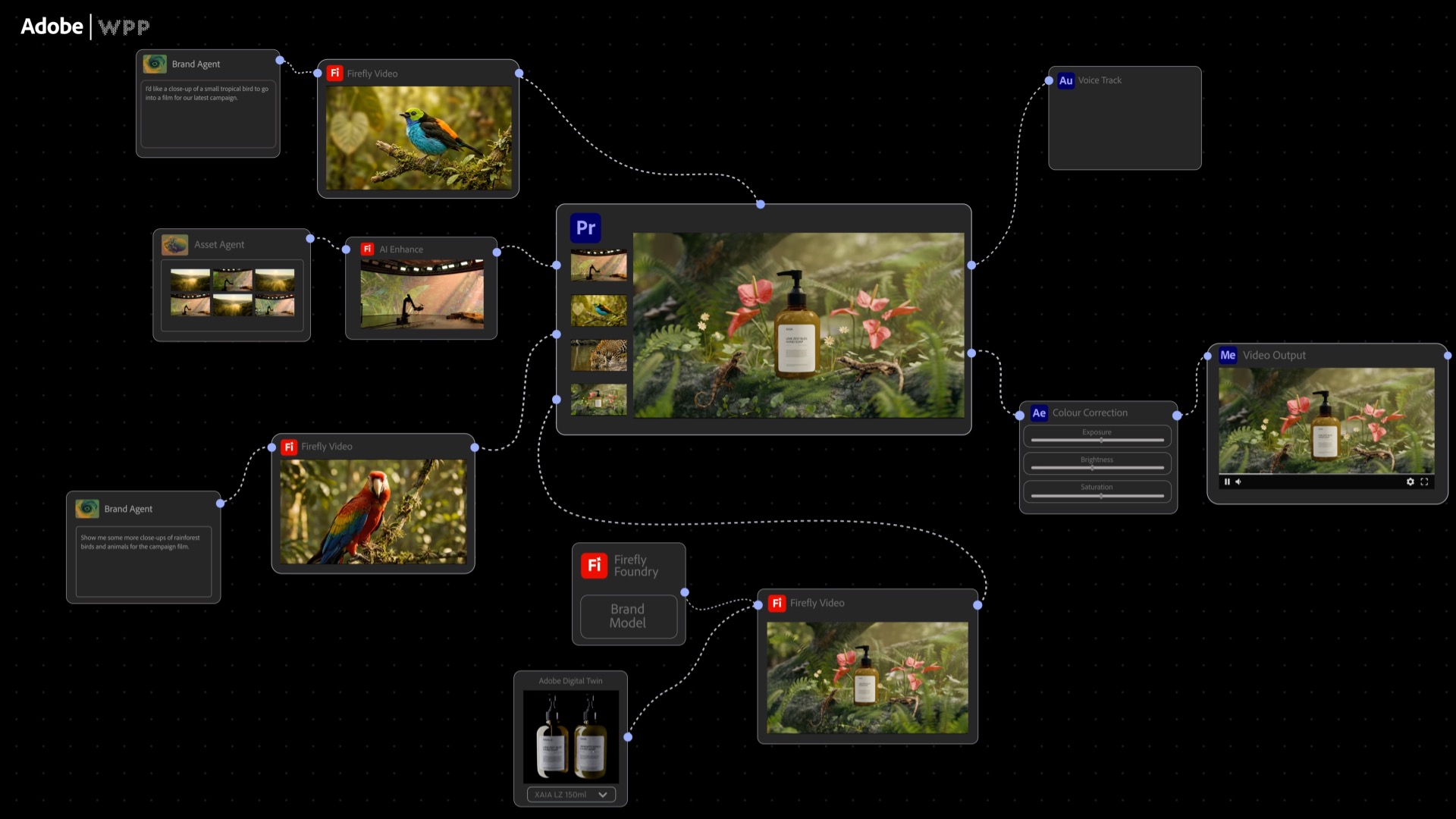

Why This Shift Matters Now: The Rise of Agentic AI

The push for this new economic model coincides with the industry's pivot toward agentic AI systems. As covered in our recent reporting, Gartner projects that 40% of enterprise applications will feature task-specific AI agents by 2026, and agents are forecast to handle 50% of online transactions by 2027. These agentic workloads, such as the agentic AI checkout systems emerging in retail, are characterized by long, multi-step reasoning chains that generate vast numbers of tokens per task. A single agent completing a complex software task, as in the METR benchmarks where Gemini 3.1 Pro handled 90-minute tasks, can produce millions of tokens.

In this context, an infrastructure stack optimized for raw theoretical FLOPS but inefficient at real token generation becomes a severe business liability. The cost to serve a customer via an AI agent directly determines the viability of the service.
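A rough sketch of how per-token cost becomes a per-task cost for a multi-step agent is below. The step token counts, the two stacks' cost per million tokens, and the $3.00 price per task are all hypothetical figures chosen only for illustration. The sketch also treats tokens as one undifferentiated pool rather than pricing input and output tokens separately.

```python
# Illustrative only: token counts, costs, and the task price are hypothetical.

# Tokens generated at each reasoning/tool-use step of one hypothetical agent run.
step_tokens = [150_000, 400_000, 650_000, 800_000]  # 2,000,000 tokens in total


def task_cost(steps: list[int], cost_per_million: float) -> float:
    """Cost to serve one agent task: total tokens across all steps times the per-token rate."""
    return sum(steps) / 1_000_000 * cost_per_million


price_per_task = 3.00  # hypothetical amount the business charges per completed task

# Two hypothetical infrastructure stacks with different delivered cost per million tokens.
stacks = {"stack_a": 0.80, "stack_b": 2.40}

for name, cpm in stacks.items():
    cost = task_cost(step_tokens, cpm)
    print(f"{name}: cost ${cost:.2f}/task, margin ${price_per_task - cost:.2f}/task")
```

Under these assumptions the same agent is profitable on one stack ($1.60 cost per task) and loses money on the other ($4.80 per task). This illustrates the article's point that the cost to serve determines whether the service is viable.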

The Competitive Landscape and Infrastructure Spend

Nvidia's framing is also a competitive move in a market where alternatives are emerging. Just last week, Intel and SambaNova Systems jointly proposed a hybrid inference architecture blueprint specifically for agentic AI workloads. Meanwhile, global datacenter capital expenditure has reached an astronomical $250-300 billion annually, equivalent to 5-7 Manhattan Projects per year, which makes efficiency paramount.

By defining the battlefield as "cost per token," Nvidia leverages its full-stack advantage (chips, networking, software such as TensorRT-LLM, and the CUDA ecosystem) against rivals that may compete on narrower hardware specs. The message to enterprises is clear: when choosing infrastructure, simulate your actual agentic workloads and measure the real output cost, not the input specs.

gentic.news Analysis

Nvidia's blog is less a technical disclosure and more a strategic market-framing document. It attempts to establish "cost per token" as the definitive procurement metric just as enterprise AI spending shifts decisively from training to inference and agentic deployment. This aligns with the trend we've been tracking: Agentic AI has appeared in 47 prior articles and was featured in 3 this week alone, including our report on its emergence in retail checkout systems.

The argument has merit. As we covered in "AI Datacenter Spend Hits 5-7 Manhattan Projects Yearly," the scale of investment demands rigorous, output-oriented ROI calculations. Benchmarks like METR's evaluation of long-horizon agentic tasks reveal that real-world performance diverges sharply from peak theoretical compute. An infrastructure decision based solely on FLOPS per dollar could be catastrophic if the software stack or memory bandwidth throttles token throughput for complex agentic chains.

However, this is also a defensive play. By focusing the conversation on a holistic "cost per token" metric—where Nvidia's integrated hardware-software stack shines—the company makes it harder for competitors winning on isolated price/performance specs to gain traction. It responds directly to initiatives like Intel's hybrid inference blueprint by implying that such point solutions ignore the total system efficiency required for profitable agentic AI at scale. The ultimate test will be whether enterprises adopt this framework in their RFPs and whether cloud providers begin publishing cost-per-token metrics across different hardware stacks.

Frequently Asked Questions

What is "cost per token" in AI infrastructure?

Cost per token is the total all-in expense to generate each output token from an AI model, usually expressed as cost per million tokens. It includes hardware (GPU/CPU), software, networking, power, and cooling costs amortized over the actual tokens delivered, not just theoretical compute capacity.

Why is Nvidia pushing this metric now?

The shift is driven by the rise of agentic AI: systems that perform multi-step tasks autonomously. These workloads generate massive token volumes per interaction, making token generation efficiency the primary determinant of business profitability. With Gartner projecting that 40% of enterprise applications will feature task-specific AI agents by 2026, infrastructure economics are becoming critical.

How does "cost per token" differ from "FLOPS per dollar"?

FLOPS per dollar measures raw theoretical computing power purchased. Cost per token measures the actual business output delivered. A system with high FLOPS per dollar but inefficient software or memory bottlenecks may have a terrible cost per token because it generates fewer real tokens per second per dollar.
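The divergence can be shown with two hypothetical systems. This is a sketch, not a benchmark. Peak PFLOPS per dollar of hourly spend stands in for "FLOPS per dollar," and every spec, price, and measured throughput below is invented for illustration.

```python
from dataclasses import dataclass

SECONDS_PER_HOUR = 3600


@dataclass
class System:
    name: str
    peak_pflops: float    # spec-sheet peak compute (hypothetical)
    cost_per_hour: float  # fully loaded $/hour (hypothetical)
    measured_tps: float   # tokens/second measured on the real workload (hypothetical)

    def pflops_per_dollar_hour(self) -> float:
        # Input metric: peak compute purchased per dollar of hourly spend.
        return self.peak_pflops / self.cost_per_hour

    def cost_per_million_tokens(self) -> float:
        # Output metric: what each million delivered tokens actually costs.
        return self.cost_per_hour / (self.measured_tps * SECONDS_PER_HOUR / 1_000_000)


systems = [
    System("system_x", peak_pflops=2.0, cost_per_hour=3.00, measured_tps=900),
    System("system_y", peak_pflops=2.0, cost_per_hour=4.00, measured_tps=1_800),
]

best_flops = max(systems, key=lambda s: s.pflops_per_dollar_hour())
best_tokens = min(systems, key=lambda s: s.cost_per_million_tokens())

for s in systems:
    print(f"{s.name}: {s.pflops_per_dollar_hour():.3f} PFLOPS per $/h, "
          f"${s.cost_per_million_tokens():.3f} per M tokens")
print("best by FLOPS per dollar:", best_flops.name)
print("best by cost per token:", best_tokens.name)
```

Here `system_x` wins on the input metric because it is cheaper per hour for the same peak compute. However, `system_y` delivers twice the tokens per second on the real workload, so its cost per million tokens is lower (about $0.617 versus $0.926). The two metrics therefore pick different winners.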

What should enterprises do to evaluate cost per token?

Enterprises should benchmark their actual target workloads (e.g., their specific agentic AI pipelines) on candidate infrastructure, measuring total tokens delivered per second under production conditions. They should then calculate the fully loaded hourly cost of that infrastructure (cloud instance or amortized on-prem hardware) to derive a true cost per million tokens for their specific use case.