A developer operating under the handle hasantoxr has released oh-my-claudecode, an open-source multi-agent orchestration framework built on top of Anthropic's Claude Code. The project, which has garnered over 3.6K GitHub stars, aims to transform the standalone coding assistant into a coordinated system of specialized agents, claiming performance improvements of 3-5x in speed and 30-50% in token efficiency.

The tool requires no new subscriptions or API keys beyond a user's existing Claude subscription, positioning itself as a pure productivity layer that sits between the user and the official Claude Code interface.

What's New: Five Execution Modes and 32 Specialized Agents

The core of oh-my-claudecode is its orchestration system, which introduces five distinct execution modes for different task types:

- Autopilot Mode: For fully autonomous execution. A user describes a task and the system operates without further intervention.

- Ultrapilot Mode: Designed for speed on multi-component projects. It spins up parallel agents, with the developer claiming it runs 3-5x faster for complex builds.

- Swarm Mode: Coordinates multiple independent agents working toward a single, shared goal, facilitating division of labor on large-scale tasks.

- Pipeline Mode: Executes tasks as sequential chains, ideal for multi-stage processing like data transformation pipelines.

- Ecomode: A token-optimized mode that reportedly saves 30-50% on costs by employing more efficient prompting and task structuring without sacrificing output quality.
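The difference between the parallel modes and Pipeline Mode can be illustrated with a minimal sketch. This is not the project's code: `run_agent`, `run_parallel`, and `run_pipeline` are hypothetical names, and the agent call is a stub that only echoes its input.

```python
import asyncio


async def run_agent(name: str, task: str) -> str:
    # Hypothetical stand-in for a real agent call; it only tags the task
    # with the agent's name so the data flow is visible.
    await asyncio.sleep(0)
    return f"{name}:{task}"


async def run_parallel(tasks: dict[str, str]) -> list[str]:
    # Ultrapilot/Swarm-style fan-out: every agent starts at once and the
    # results come back in the same order the tasks were given.
    return await asyncio.gather(
        *(run_agent(name, task) for name, task in tasks.items())
    )


async def run_pipeline(stages: list[str], payload: str) -> str:
    # Pipeline-style chain: each stage consumes the previous stage's output,
    # so the stages cannot overlap in time.
    for stage in stages:
        payload = await run_agent(stage, payload)
    return payload


if __name__ == "__main__":
    print(asyncio.run(run_parallel({"ui": "build form", "tests": "write tests"})))
    print(asyncio.run(run_pipeline(["extract", "transform"], "data")))
```

In the fan-out case the tasks are independent, which is where a wall-clock speedup can come from. In the chain each stage waits on the one before it, so the benefit is structure rather than speed.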

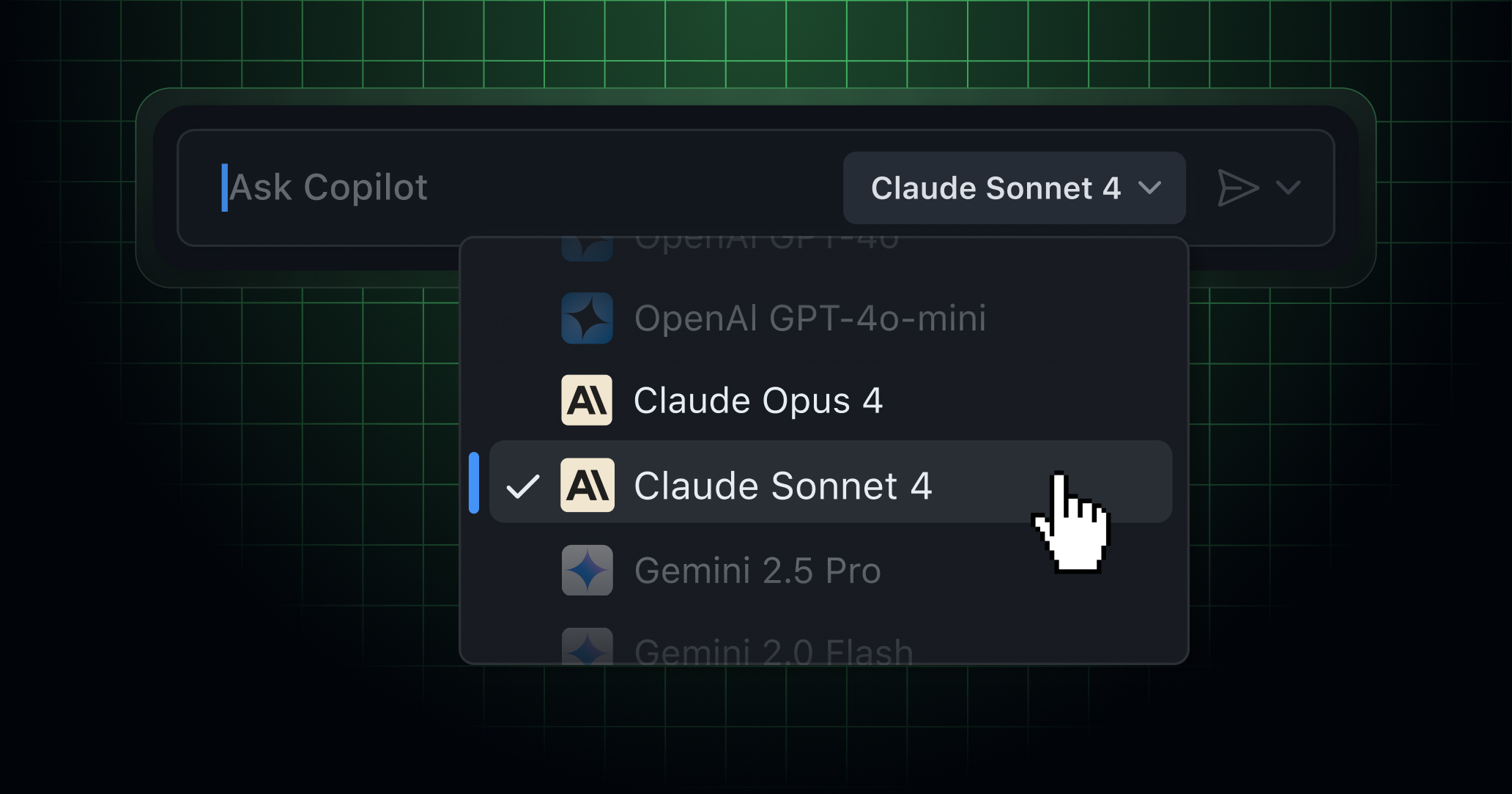

Underpinning these modes is a roster of 32 specialized agents pre-configured for domains including software architecture, research, UI/design, testing, and data science. The system includes "smart model routing" that automatically decides whether to use Claude's faster, cheaper Haiku model for simple tasks or the more capable Opus model for complex reasoning, abstracting the model selection process from the user.
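How such routing might work can be sketched with a simple heuristic. The article does not describe the actual routing rules, so everything here is an assumption: the `Task` fields, the `file_threshold` default, and the `"haiku"`/`"opus"` strings, which are tier labels rather than real model identifiers.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    files_touched: int   # hypothetical complexity signal
    needs_design: bool   # hypothetical flag for architecture-level reasoning


def route_model(task: Task, file_threshold: int = 3) -> str:
    # Assumed rule: send design work or wide-reaching changes to the more
    # capable tier, and everything else to the cheaper, faster tier.
    if task.needs_design or task.files_touched > file_threshold:
        return "opus"
    return "haiku"


if __name__ == "__main__":
    print(route_model(Task("rename a variable", 1, False)))
    print(route_model(Task("redesign the auth module", 2, True)))
```

A real router would need better signals than a file count, but the shape is the same: a cheap classification step runs before the expensive call.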

Key Features: Magic Keywords and Auto-Resume

The framework is activated through simple command keywords within the Claude Code interface:

- Typing "autopilot" triggers fully autonomous build mode.

- "ralph" engages a persistence mode where the system continues working until a job is verified as complete.

- "eco" switches the session to budget-optimized execution.

- "plan" initiates a planning interview phase before any code is written, forcing the system to outline its approach.
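Keyword activation of this kind can be pictured as a lookup over the words in a prompt. The sketch below is an assumption about the general technique, not the project's parser: `KEYWORD_MODES` and `detect_mode` are hypothetical names, and only the four keywords come from the description above.

```python
import re
from typing import Optional

# The four keywords named above; the descriptions paraphrase the article.
KEYWORD_MODES = {
    "autopilot": "fully autonomous build",
    "ralph": "persist until the job is verified complete",
    "eco": "budget-optimized execution",
    "plan": "planning interview before any code is written",
}


def detect_mode(prompt: str) -> Optional[str]:
    # Match whole words only, so "explanation" does not trigger "plan".
    for word in re.findall(r"[a-z]+", prompt.lower()):
        if word in KEYWORD_MODES:
            return word
    return None


if __name__ == "__main__":
    print(detect_mode("autopilot: build a REST API"))
    print(detect_mode("fix the typo in the README"))
```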

A notable operational feature is automatic session resumption. The tool monitors for API rate limits and, upon hitting them, can automatically pause and resume the Claude Code session once limits reset, eliminating the need for manual restart and babysitting.
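The pause-and-resume behavior resembles a standard retry loop around a rate-limited call. The following is a minimal sketch of that pattern, not the tool's implementation: `RateLimited`, `run_with_resume`, and the `retry_after` field are hypothetical, and how the real tool detects a limit reset is not stated in the source.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical error raised when a step hits a rate limit."""

    def __init__(self, retry_after: float):
        super().__init__(f"rate limited, retry after {retry_after}s")
        self.retry_after = retry_after


def run_with_resume(
    step: Callable[[], T],
    max_resumes: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    # Re-run the step after each rate-limit pause, up to max_resumes times.
    for attempt in range(max_resumes + 1):
        try:
            return step()
        except RateLimited as exc:
            if attempt == max_resumes:
                raise  # give up instead of looping forever
            sleep(exc.retry_after)
    raise RuntimeError("unreachable")
```

Passing `sleep` as a parameter keeps the loop testable without real waiting. A production version would also need to persist session state so that the resumed step picks up where it stopped.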

Technical Implementation and Open-Source Status

As an open-source project hosted on GitHub, oh-my-claudecode is built as an orchestration layer that likely intercepts and re-routes prompts to Claude's API, managing context, agent specialization, and workflow state externally. The claims of 3-5x speed increases presumably come from parallelization in Ultrapilot mode and more efficient task decomposition, while cost savings in Ecomode would stem from optimized prompt engineering and strategic use of the lower-cost Haiku model.

The project's rapid accumulation of stars suggests strong developer interest in augmenting the capabilities of existing AI coding assistants without switching tools or paying for additional proprietary services.

gentic.news Analysis

This release is a direct, community-driven response to the growing demand for more autonomous and efficient AI coding workflows. It follows a trend we have covered before, for example in our analysis of Smol Agents: developers are building lightweight orchestration layers on top of foundational models to specialize them for specific domains, in this case software development.

The approach of oh-my-claudecode mirrors a broader industry movement towards multi-agent systems for complex task completion, a concept championed by research labs and by company efforts such as Google's "Gemini Teams" project. However, this implementation is notably pragmatic and immediate, built on a readily available consumer product (Claude Code) rather than a research framework.

Its automatic model routing between Haiku and Opus is a smart cost-performance optimization that directly addresses a key pain point for developers using tiered model APIs. This aligns with competitive pressure on AI service providers to offer more granular and automated cost-control tools. The project's success highlights a potential gap in Anthropic's own product offering: while Anthropic provides powerful models, the developer community is actively building the sophisticated workflow tooling on top of them.

If the performance claims hold under real-world use, it could pressure other AI coding assistants (like GitHub Copilot, Cursor, or Codeium) to either develop similar native orchestration features or foster their own third-party ecosystems. The open-source nature means its concepts and agent specializations could be rapidly adapted for other AI coding models, potentially making multi-agent orchestration a standard expectation for professional dev tools.

Frequently Asked Questions

Is oh-my-claudecode an official Anthropic product?

No, oh-my-claudecode is an independent, open-source project created by a developer (hasantoxr). It is not affiliated with, endorsed by, or an official product of Anthropic. It functions as an orchestration layer that uses a user's existing Claude subscription via its API.

How does oh-my-claudecode achieve 3-5x faster output?

According to the developer, the primary speed boost comes from the Ultrapilot mode, which parallelizes work by spinning up multiple specialized agents to work on different components of a task simultaneously. This is in contrast to a standard, sequential interaction with Claude Code. The efficiency gains are likely most apparent on large, multi-file projects that can be effectively broken down and distributed.
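Why the gains depend on how well a task decomposes can be shown with Amdahl's law, which bounds the speedup from running the parallelizable fraction of a job across several workers. This is a standard formula applied for illustration only: the fractions and agent counts are assumptions, not measurements of oh-my-claudecode.

```python
def amdahl_speedup(parallel_fraction: float, agents: int) -> float:
    # Amdahl's law: speedup = 1 / ((1 - p) + p / n), where p is the share of
    # the work that can run in parallel and n is the number of workers.
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if agents < 1:
        raise ValueError("agents must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / agents)


if __name__ == "__main__":
    for p in (0.5, 0.9):
        for n in (4, 8):
            print(f"p={p}, agents={n}: {amdahl_speedup(p, n):.2f}x")
```

Under these assumptions, a job that is 90% parallelizable gains roughly 3.1x with four agents and 4.7x with eight, which falls inside the claimed 3-5x range. A job that is only 50% parallelizable can never reach 2x, however many agents are added.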

What are the risks of using an unofficial tool like this?

The main risks involve stability and data privacy. As a third-party tool, it could break if Anthropic changes its Claude Code interface or API. Users must also trust the orchestration layer with their code and prompts, which are routed through its system. It is crucial to review the open-source code before use and understand how it handles your data and account credentials.

Can I use oh-my-claudecode with other AI models besides Claude?

The current version, as described, is specifically built as an orchestration layer for Claude Code and utilizes Claude's family of models (Haiku, Opus). Its architecture and agent specializations are tuned for this ecosystem. However, the core concept could be forked and adapted for other AI coding assistants, given sufficient developer interest.